Turbocharge your Shiny Apps with remote submission to HPC

Turbocharge your Shiny Apps with remote submission to HPC - Michael Mayer

Resources mentioned in the workshop:

* Workshop site: https://pub.current.posit.team/public/shiny-remote-hpc/
* Workshop GitHub repository: https://github.com/sol-eng/shiny-hpc-offload

image: thumbnail.jpg

Transcript

This transcript was generated automatically and may contain errors.

set up. Thanks, everybody, for joining. This is an R/Pharma workshop. We're excited to bring this workshop after the conference that we held last month. We're going to kick things off today looking at Shiny and high-performance computing with Michael Mayer, who's an engineer at Posit. With that, Michael, I'm going to go ahead and turn off my screen share, and we'll see if you can kick things off.

Okay, great. I think the title is probably Turbocharge your Shiny App by offloading computations to HPC environments. This topic is fairly close to me because I've been talking to so many people, customers, users, friends, that are developing Shiny applications, but at some point their computations are just getting too big, and then either the interactivity of the application is suffering or sometimes the whole environment is crashing because they're using too many resources and stuff like that. I thought it would be good to highlight possibilities for how we can offload computations to an HPC cluster.

Also important for today, a little caveat: I'm not a Shiny developer. I'm not a data scientist either, so you probably wonder, I mean, what's this guy actually doing here? The reason why I thought I would provide this lecture today is that quite often you have data scientists that would like to use such a thing, but they are not really aware of how the whole infrastructure setup works. Today I will show you two basic workflows using two of my favorite R packages, and how you can deal with that in a way that abstracts all of the infrastructure setup away.

Let me also just introduce myself again. My name is Michael. I'm a solutions engineer here at Posit. I joined about four years ago. As a solutions engineer, I'm working with many large pharma customers, but also customers with specific HPC needs. As mentioned before, today I will present you with two possibilities, two ways how you can offload computations to an HPC cluster in a very elegant way.

Workshop setup and approach

The idea that I have here is I will first talk a little bit more about how this all is going to work. There is a document that you can follow along with. I will post the link in the chat box, and I can probably also share the screen. I think you should be able to see my screen. The idea is I will start with introducing the problem and the approach that we will take, and then every one of you, I hope, has an existing RStudio or Positron IDE locally available. Then we can play with a Shiny application that is using some computation locally, and then we will go step by step, connecting that to a remote HPC cluster to see what happens.

HPC background and complexity

All right. I mean, now in terms of background, combining a Shiny application with an HPC cluster is definitely not something for the faint-hearted, because there is lots of complexity involved. Number one is you are on a Shiny server. How do you reach your HPC cluster? How can you traverse the network? What we will use today is an SSH connection, which stands for secure shell. Even if you have a Shiny server from where you can directly submit jobs to an HPC cluster, such as a Slurm cluster, you still need a way to actually use the CLI or use some package that helps you to abstract out that whole gory CLI usage.

In addition to that, we all know that R, and this workshop is mainly focusing on R today, needs lots of packages. From a Shiny application perspective, you probably want to have the same R packages on your Shiny server as you have on your remote HPC, while at the same time you don't want to pollute your library paths on your remote HPC with some packages that you then can override. So, any package installation on the remote HPC should be done in a way that it doesn't interfere with you running other programs on the HPC cluster.

I mean, I hope that everyone knows what an HPC cluster is. At a very high level, an HPC cluster for the purpose of today consists of three types of servers or nodes. The head node, where the main scheduler process runs, which I always call the brain of the operations. Login nodes, this is where the users can log in to interact with the HPC cluster, like submitting jobs, post-processing, and so on and so forth. That's where interaction is happening. And then you have compute nodes. Compute nodes are where the actual computations are happening, and access to the compute nodes typically is only possible by submitting a job to the scheduler.

And further down, I have a little graph here that shows the two ways I can integrate things. So, here you have a Shiny server on a so-called service or login node. And login nodes, as we just discussed, allow you to submit jobs directly to the compute nodes via the HPC CLI, like the Slurm CLI and stuff like that. However, depending on which organization you are with, your HPC administrators may not like the idea of adding a service node or opening the login nodes to host something like a Shiny server or even Posit Connect. So, that's why we will actually start with the tough bit here by having a Shiny server outside of the HPC cluster and then connecting to the login node via SSH. And then, via some magic, we are automatically submitting the actual computation to the HPC nodes, and the results will be transferred back to the Shiny application, all very transparently.


Key packages: clustermq and mirai

And the main two packages, for those of you that haven't seen them already, are clustermq and a fairly new package called mirai. By way of a little bit of background, clustermq is an R front-end for a messaging framework called ZeroMQ. And ZeroMQ is a highly efficient communication framework that allows you to have low-latency, high-efficiency message passing of sorts. In addition to that, you will also see a little bit of mirai tonight. For me, it's tonight because, as you probably can hear, I'm not based in the US, I'm based in Europe, specifically Switzerland. mirai is kind of similar to clustermq in that it also uses a very efficient messaging framework. However, it's using a tool called nanomsg next generation, or NNG for short. And that is supposed to be like the successor of ZeroMQ eventually.

Cloning the repository and environment setup

So I probably also should have mentioned to you that there is this Git repository that I created. So what you can do is, I will post the first exercise. You can go ahead and clone that GitHub repository locally. At this point in time, you don't need to worry about any HPC access, user account details, we will all get there eventually. So I'll probably stop here and give you like two or three moments, two or three minutes to clone the repository.

And once you've done that, I will be using Positron, as you can see here. I already had a little bit of a head start, so I just can click on open folder and do. Yeah, I mean, that's a good idea. Good question. I mean, do we have, does everyone have the ability to run R code locally, either with RStudio or VS Code, or do we need access? Michael, I've got a few environments I can share in the chat box, and I'll put all the instructions there so people can just join. And I think if it's mostly just going to be local, they can use that for a hands-on for today's session.

Okay, fair enough. Cool. Thanks a lot.

Okay. The end goal of this first exercise is that you have app-0 open and the working directory set. Yeah. Actually, maybe I can also do that here so people can kind of follow along. So, this is the workshop environment. So, I think I can click here. I already have an account.

You can register real quick, and then you come here to the new session, and then you have access to whatever IDE you want. Let's just use Positron here, and click on launch, and within Positron, it should come up in a moment. Ah, there we are. So eventually we should see Positron in the web browser here, and there we are.

Now let's do open folder, there is the new folder here, open, new, new folder from template, yeah, so take a new folder from template, and we choose our project, we click on next, and maybe do, maybe Shiny HPC, and I, let's, ah, actually we can do it, let's do it differently, I mean we also can directly clone the repository over here, that's much easier, so just need to go back to the, to my little web browser to see the URL again, this URL, and I go back to the clone repository, copy and paste that stuff, and I clone it in that folder, yeah, why not, and now it's cloning, and it's asking me to open that whole thing in a separate folder.

Okay, so just a little pulse check, I mean, do we have, do we have someone that already has managed to do that, or are you still doing things? Someone can type either no or yes in the chat box, or send a... Yeah, I'm adding, Michael, the PositCloud, if people are familiar with Posit Workbench and Positron, I added the steps to get into that, so they should be in the process of setting that up, if they're newer to this environment, or haven't used Positron, I'm going to add PositCloud, and this is going to make it work pretty easy for you, because I went ahead and cloned all the files, just give me two seconds, I'm just now pasting that into the chat box, so it should be, should be ready here, okay, cool, yeah, what, my hash means set that, that one should be fine for now, okay, so I just put the PositCloud, and so for people on today, you have basically two different ways to do the hands-on portion, you can use the Workbench instructions, if you want to try out Positron, you'll need to clone the GitHub repo directly in Positron, if you're not familiar how to clone, or you're not familiar with Positron, or you're not familiar with Posit Workbench, then I put PositCloud in, there's basically a link, you click that, you sign into PositCloud, it will take you directly to the workspace for today, and then you'll just click start, and it should launch the environment for you, and all of the files will be available inside of Positron, or excuse me, PositCloud.

Okay, great. Now that you have that, the first thing I wanted to do is to change the working directory to this app-0 folder here, and I've already done that. So getwd() should report that you're in this app-0 folder. As a starting point, so that you get a reproducible result, let's type renv::restore() on the R console (not renv::init(), sorry, I misspoke). In your case, renv::restore() will ask you whether to activate the environment, and it will eventually install a number of R packages that you need for the Shiny app that we will load.

I mean, I can make it a little bit more exciting for me here: if I just delete this renv folder and delete the renv line from .Rprofile, then go back to the console, I'm starting from the same state as you. So if I now do renv::restore(), it asks me to activate the project, and it will immediately ask me whether I want to update those packages. I say yes, and it will install those things.

Very good, so this will take a moment, no, let's, it asks me to restart my session, and now I see it has some stuff to complain about, if I do renv status, then you see it's lots of, in my case, it has lots of packages that it wants to install, but hasn't installed yet, so maybe let's just do another renv restore.

Well, maybe we have a problem, some permission denied. Good, okay, so Ivo, which environment are you working on? Are you on the workshop environment, or Posit Cloud, or locally? I think in the Workbench environment, they mentioned. Oh, yeah, okay, it might be the working directory. In the cloud environment, I had to go to the first app and set the working directory to the application with the lock file, so I don't know if that could be it, yes.

Ah, because it's trying to install things into the site library, which is not writable. Ivo, could you maybe try first to run renv::activate(), and then renv::restore()? Maybe it works that way. And once you did the activate, restart the session, so it sets up the R environment, and once that's set up, it should have a different, local library path to install the packages into, and then the renv::restore() should work. For Clonium, maybe, yes, once you did the renv::activate(), restarting the session is always needed, because it needs to set up the new library paths.

Okay, so, I mean, once you master that thing, I mean, you should be, ah, in a position that if you run renv status, that should, that, like, my, on my screen should, shouldn't report any issue. I think then we finally can take a look at the app as such, and if you want to open, open the app, we can go through it, so it is a shiny application, of course.
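The renv steps discussed above can be sketched roughly as follows. This is a minimal sketch, not the workshop's own script: the folder name `app-0` is taken from how it is spoken in the session, and `run_now` is a hypothetical safety switch added here so that nothing is installed by accident.

```r
# Assumption: the first app lives in a folder called "app-0" inside the cloned repo.
app_dir <- "app-0"

# Hypothetical switch: flip to TRUE to actually execute the renv calls.
run_now <- FALSE

if (run_now && requireNamespace("renv", quietly = TRUE)) {
  setwd(app_dir)                 # getwd() should now report the app folder
  renv::restore(prompt = FALSE)  # install the package versions recorded in renv.lock
  print(renv::status())          # ideally reports no outstanding issues
}
```

If the restore fails with a permission error, the sequence suggested in the session is to call `renv::activate()` first, restart the R session, and only then run `renv::restore()`.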

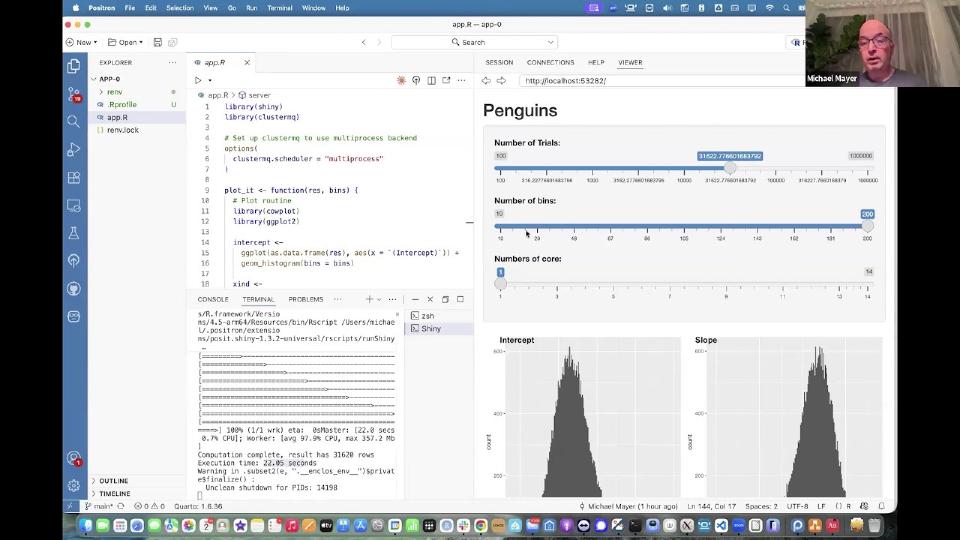

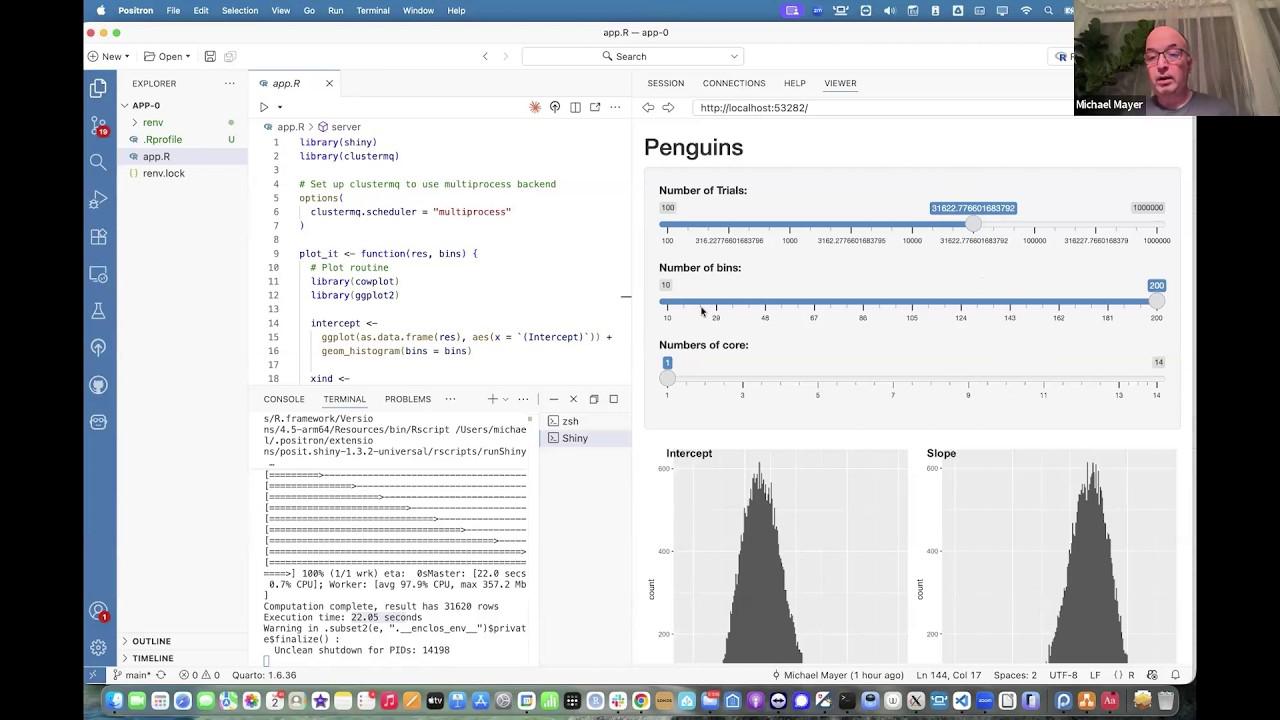

Exploring the Shiny app

And we are already using the clustermq package here to do at least some kind of parallel computing locally, and that is what we set here, because clustermq supports a couple of compute backends. For the first iteration we will use the multiprocess backend, which means it just starts R processes locally, and there's no remote HPC, nothing involved, so that's all well and good. Then I use the Palmer Penguins dataset to run a GLM in the form of a bootstrap, and finally I'm plotting a histogram of the intercept and the slope of the model.

So here is the actual compute function, where you see I'm doing bootstrapping: I'm sampling from the actual dataset, running the GLM, and returning the output coefficients for the intercept and the x term, which is like the slope. We will not have too much time to really go very deep into the clustermq package, but there is good documentation that I also reference in the Quarto document that I shared earlier. The magic in clustermq happens behind a so-called Q function, so you have like a capital Q here, and this Q function is kind of the secret sauce. It will run the function, like the bootstrap trial function that you saw earlier, against these initial parameters, like a range of all numbers from 1 to the number of trials. It will also export some constant data, which is the Palmer Penguins dataset, and it will run as many processes as I set for the number of cores; you will see how that works in a moment. Instead of sending one function call after the other, I'm using some chunking here: a chunk size of one would mean that one call is sent after the other, a chunk size equal to the number of trials would mean that everything is sent at once, and here I'm just using something that, depending on the number of trials, would produce 10 chunks in total.
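As a rough illustration of the pattern just described, here is a minimal sketch. It is not the app's actual code: the synthetic data frame stands in for the Palmer Penguins data, the names `bootstrap_trial`, `n_trials`, `n_cores`, and `use_clustermq` are made up for this example, and passing the data through `const` is an assumption about how the constant data is handed over. With `use_clustermq` left at `FALSE` the same function simply runs through `lapply()`.

```r
# Hypothetical stand-in for the penguin data: y depends linearly on x.
set.seed(42)
dat <- data.frame(x = rnorm(200))
dat$y <- 1 + 2 * dat$x + rnorm(200)

# One bootstrap trial: resample rows with replacement, fit a GLM,
# and return the two coefficients (intercept, slope).
bootstrap_trial <- function(i, data) {
  idx <- sample(nrow(data), replace = TRUE)
  fit <- glm(y ~ x, data = data[idx, ], family = gaussian())
  unname(coef(fit))
}

n_trials <- 100
n_cores <- 2
# Pick a chunk size that yields about 10 chunks in total.
chunk_size <- max(1, ceiling(n_trials / 10))

use_clustermq <- FALSE  # flip to TRUE to send the calls through clustermq

if (use_clustermq && requireNamespace("clustermq", quietly = TRUE)) {
  options(clustermq.scheduler = "multiprocess")  # local worker processes
  res <- clustermq::Q(
    bootstrap_trial,
    i = seq_len(n_trials),     # iterated argument: one call per value
    const = list(data = dat),  # constant data shipped to every worker
    n_jobs = n_cores,
    chunk_size = chunk_size
  )
} else {
  res <- lapply(seq_len(n_trials), bootstrap_trial, data = dat)
}

# One row per trial, ready for the two histograms.
coefs <- do.call(rbind, res)
colnames(coefs) <- c("intercept", "slope")
```

Keeping the compute function free of any scheduler details is what lets the same function be reused unchanged when the backend is switched later.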

Okay, and this result is then being returned, and then I define a couple of UI elements, like some sliders, where I can set the number of bins for the histogram, the number of trials, and stuff like that, and I have a server function here that pulls all of that together. Doing is probably better than talking: if I just click on Run Shiny App, you see that a Shiny application eventually comes up here.

And you can already see that there's some stuff going on, so it has already run things for 100 trials, allowing for 100 bins, on one core. If I rerun the same thing with 316 trials, you see the same thing is rerun, still all locally, and the histograms are being updated. With more data, you see the histograms are getting a little bit more rounded, or more smooth, and as I increase the number of trials, you see not only does the histogram get smoother, the compute time is also expanding. Depending on which environment you have, you may already be maxing out at a thousand trials, or 3,000 trials, so just be a little bit cognizant of the local resources.

And of course, if I now change the slider to maybe only have like 10 bins, then of course the plot is updated without recalculating. For me, the last run finished in about eight seconds, which was kind of long, but now I can just select a different number of cores, and now the whole thing runs on three cores. Instead of eight seconds, it has now run in five seconds. If I now go to maybe 31,000 trials, you can see that it takes a lot longer; it has now run in nine seconds on three CPUs. Now let's do the test again on one core, and you see immediately it's taking much longer. You see the ETA has been much longer as well, you're probably looking at 20-something seconds. There we are, execution time is now 22 seconds. So that means by using the three cores, we managed to get the execution time down from 22 seconds to nine seconds, which I think is not too bad for now, but we will do a bit more later.
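To put those two timings in perspective, the speedup and parallel efficiency can be worked out directly from the numbers quoted in the demo (22 seconds on one core, nine seconds on three cores). The variable names below are just for illustration.

```r
t_one_core <- 22     # seconds, run on one core
t_three_cores <- 9   # seconds, same run on three cores
n_cores <- 3

speedup <- t_one_core / t_three_cores  # how many times faster
efficiency <- speedup / n_cores        # fraction of the ideal 3x speedup

print(round(c(speedup = speedup, efficiency = efficiency), 2))
# speedup is about 2.44, efficiency about 0.81
```

So three cores deliver roughly 2.4 times the throughput of one, which is about 81% of a perfect linear speedup.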

When we go to the next step, as you can probably also see, by using this clustermq package the actual compute function is fairly simple. We just need to worry about this Q function, you need to prepare the actual compute function, like this bootstrap trial, to make it use the respective parameters, and then the only other thing you need to do is to define, via the options, which backend you want to have. For the multiprocess backend, it's fairly easy, because you just need to set that option.

Later on, when we go to remote HPC, or full remote HPC, you will see that we not only need to set the scheduler or the backend, we also need to specify something called templates, but more on that later. I think I'll probably stop here for now, and just see how far people have gotten. Yeah, and by the way, if you cannot use more than one core for now, don't worry, I mean, we will soon push that gap, and I hope that the performance improvements will then be even bigger.

Yeah, it looks like it's working, and a few people have cloned into Posit Cloud, so I think they should be in pretty good shape there. Michael, I was just going to say, in Posit Cloud, there's only four cores available, so people can go up to that amount. I can see you have 14 on yours, I wasn't sure. Yes, sure, yeah, no, I mean, the reason why I have 14 is because I'm working locally on my MacBook Pro, but don't worry if you only have four, maybe even two or one. I just want you to get a general feeling of how this Shiny application is being set up with those three sliders, that you can configure the clustermq package by loading it and setting the appropriate scheduler, which for now is multiprocess, and then depending on how many cores you have and how many trials you define, the respective computation will be done, and the histogram will be plotted.

And by the way, this is also what will stay with you for the remainder of today's workshop, because we will stick with the same example for one or two more iterations before we leave it and go over to the mirai stuff. Yeah, Michael, a quick note here: the Workbench images, I think, only have two cores, and Posit Cloud can be maxed up to four cores. I thought it was pretty interesting that Posit Cloud, I think, will default to one. If you go up to the top right-hand side, there's a settings wheel, and you can change the resources or the cores to four. And when I did that, and I re-ran the Shiny app, the number of cores was automatically detected from what I had changed. So I thought that was pretty cool that the app could figure out automatically how many cores I had available for the computation. So if you want to see that in Posit Cloud, you can just add more cores if needed.

The parallelly package and core detection

Yes, and by the way, many people, if they do parallel computing for the first time with R, may end up eventually using the parallel package. And if they load the package parallel, which is a base R package, then they will run into lots of trouble, no matter which system they are on. The reason is that parallel is a base R package and has been part of R for a very long time, so it is not aware of modern ways of allocating CPUs and stuff like that. By default, parallel will use all the physically available cores on whatever server you have. However, as you probably know, when it comes to HPC clusters, the scheduler will maybe only allocate you two out of 20 cores on a computer. In Posit Cloud there are similar mechanisms: although the physical server behind the scenes is much larger, the parallel package will likely still detect all of those physically available cores, while your session only has the two or four cores that Phil was explaining to you.

So if you want to avoid any problems by using too many resources, I always recommend you use the parallelly package, which is a package from the Futureverse. Instead of detectCores, parallelly uses availableCores, and that has support for any scheduler, for Linux cgroups and stuff like that. So whatever parallelly detects is much better than what the parallel package is doing. That's why I'm setting a max value of parallelly's availableCores for the number of cores. And to Phil's point, this is precisely why I'm seeing 14 cores, because I have 14 cores available. Posit Cloud has two and Posit Workbench has four available. So just keep in mind: for figuring out available cores, always use the parallelly package.
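The difference can be checked on any machine with a short sketch like the one below. The variable names are illustrative, and the fallback to `NA` when parallelly is not installed is an addition for this example only.

```r
# What the machine physically has, regardless of any limits on the session.
physical <- parallel::detectCores()

# What the current session is actually allowed to use (scheduler allocations,
# Linux cgroups and similar limits are taken into account by parallelly).
usable <- if (requireNamespace("parallelly", quietly = TRUE)) {
  unname(parallelly::availableCores())
} else {
  NA_integer_  # parallelly is not installed in this session
}

cat("detectCores():   ", physical, "\n")
cat("availableCores():", usable, "\n")
```

In an app like the one shown, the second value is the natural upper bound for a "number of cores" slider.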


Setting up SSH access to the HPC cluster

Good point, Phil. Thanks. Cool. So, I think we have like a couple of people have probably succeeded running the app as it is, right? So, I at least know that Ivo, Mahesh Kumar and Lovemore Kava have succeeded. So, that's good. And I hope there's others also that are following along. Right. So, now, I mean, we have some nice toy example. And the next step now, we want to make use of some remote HPC cluster. And if we go back to this document that I started to share with you earlier, there is we now need to run a couple of commands on the terminal.

And the first step that we now do is we build an SSH connection to the HPC cluster. And maybe before we do that, you will need to first claim your user account name. I will put another link into the chat box here. This is an application on shinyapps.io. If you click on that app, it will contemplate a little bit, and after that, it will give you back a username. This username is different for each of you, and you just need to maybe write it down for now, because it will be important for what is coming next.

And so, while you claim your usernames, let me just go back to this document. So, the SSH connection to the HPC cluster. I'm sure that some of you have used SSH for various bits and pieces. By default, when you so-called SSH into your server, it will probably ask you for your username and password. You supply all that, and then everything is happy, and you enter the remote server. But SSH also supports key-based authentication, where you create a key pair consisting of a so-called private key and a public key. If you then store the public key on the remote server, then once you trigger an SSH connection, your local private key will be used to compare against the remote public key. And if they match, then you will be allowed in without any password. So, it's something that you should handle with care. But to make things easy here, I have prepared this command that you all can run. It should work on both Posit Workbench and Posit Cloud.

So, if I go back to my little thing here. And I hope that you have access to a terminal as well. So, if I go to the terminal here, I just interrupt the app for now. And I run this ssh-keygen command. In my case, it already has some SSH key generated, so I can just overwrite mine. And now it has created something locally. So, if I look into the .ssh folder, you should see some MyHPC stuff. So, it has created a MyHPC file that is the private key, and MyHPC.pub is the public key. If you want to see what that looks like, you can do cat on it. So, it's got lots of gibberish, I always say.

Michael, I was going to say, if you don't mind just sharing what we need to run in the terminal in the chat box, that might be good. Yes. Okay, cool. I'll do that. And then, just to make sure I'm on the same page. So, we went to the shinyapps.io link for the user account. It assigned us a user account. And then, we're going to use that in the terminal with the SSH. Yeah. I mean, for this step, you don't need to use it yet. Okay. It'll come in the next step, and I'll explain it to you. But for now, maybe focus on calling this ssh-keygen command.

And that should hopefully create something for you. And then, if you run this cat command as well in the terminal, you should also see similar gibberish showing the private and the so-called public key. Okay, amazing. So, just to make sure I'm on the same page. So, run the first ssh-keygen in the terminal. Let that run. And then, when that's finished, run the cat statement in the terminal. Okay. Yes, precisely. I mean, the cat command is not really needed. It's just to give you a little bit of confidence that something has happened and there is some stuff there.

And then, I mean, those two files, like the public private key, they will be your entry point to the remote HPC cluster in a moment. Okay. I tested those in PositCloud and it seems to be working great. So... Awesome.

Copying the SSH key to the remote cluster

The next thing that you now need to run is this command here. This is maybe a little bit more involved. And here, you need to make sure that the username is precisely the username that you got. So, in my case, it was Posit00007. So, in your case, it should be anything slightly above 007. And if I now run this command here locally, I can show it again.

I'm doing this thing and I go back to do Posit00007. Right. And now you see it's telling you what it's trying to do. Attempting to log in. One key remains to be installed. And then the password is a little bit super secure. So, secure that I put it in a document. I hope that IT security doesn't listen here.

All right. Let's just do the following thing. I mean, we already have close to the one hour mark. Maybe let's make let's do a five minute break and let's reconvene at a full hour. So, I will just look to see what's going on. And for those of you that need a quick bio break, we also can have a quick bio break. So, I'll be back in a moment.

I mean, Mahesh, I think MyHPC is not a public host. It is the private key that you have installed in your home directory under ~/.ssh/MyHPC. And the ssh-copy-id command basically takes all of those parameters, where -i specifies the private key, -p is the port number, 443, and username at this long thing is the username and the host name that you want to connect to. And that should be your Posit00 something at PWB NLB, blah, blah, blah.

Yeah. I do. Okay. So, this is the first app, which seems to be running fine. I had no issues with that. Here's the second app. renv and pak are a little bit strange in Posit Cloud, because you don't really have the idea of projects in Posit Cloud, because everything's managed and contained. So, sometimes I have to kind of work around some of the renv features.

So, here's the history. So, I ran in the terminal, this first function, which seemed to run just fine, if you can see that. And then I ran the second one. And then I ran this one, and I changed to my username that I got from the Shiny app. And then that's it. So, when I ran, it outprinted some of the text that's on my screen here. And it gave me this large private key, gave me a private key for this. And then, I think this is the part where I ran the new section. And it says, info to be installed, info attempting. And then I got this error, as it cannot connect host. It's actually timed out.

Ah, right. Hang on, guys. I think I know what's going on here. The problem is pretty much me. Okay. No, zero. I think I know what I need to fix. So, it should be... Okay, good. Phil, can someone try again and see if it works? I think it just worked on my screen. Okay, cool. Yeah, yeah. That's great. So, Phil, I mean, now you just can press... Just type in, type yes. Yes. And now it asks you for the password that I posted in the chat earlier. I'll do that once more. Hang on one moment. And then press enter once you paste it.

Great. Successful. Yeah, Ivo is also there. Great. Awesome. And now what we can do as a little test is run the following command on the command line to check if you actually can log in. So, if you run this SSH command, again replacing username with your actual username, then you should be able to log into the HPC cluster.

Logging into the HPC cluster without a password

Oh, Jennifer's also in. Great. You're making progress. You'll get there. You'll get there. Cool. Password? Is that the same password you just gave us? Yes. Sorry. And now you either can supply your same password and alternatively you also can do this thing. For those of you that want to see, want to get in without a password, I can run this command here. So, if you just press control C and we run the same thing with the dash I tilde slash SSH. Yeah. Control Evo will get you all the way immediately.

Yeah. And there you go. I mean, now just by running this SSH command and specifying our private SSH key, you are able to log onto the remote HPC cluster without any passwords. Sweet. Awesome. Okay. Cool. And this is like a one-time setup that we had to do. Going forward, whenever you use that server and you run SSH like we just did, you will be able to log into the HPC cluster without any password. So great. So maybe let me just take over the screen share a little bit and let's go back to my little Positron thing. So you also see, I only ran the ssh-copy-id command. And again, as a little sanity check, I do ssh -i for the key, -p for the port, and my username, and I'm in. Bang. And if you want to be facetious, you can change the username to whatever you want, for example, to mine. Well, I'm 100% sure that you will not get in, because my private key is different from yours.
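The one-time setup described above boils down to two terminal commands that share the same key, port, and target. The sketch below only assembles and prints them from R rather than running them. The host name and username are hypothetical placeholders (the real values were shared in the session chat), and the key file name `MyHPC` is written the way it is spoken in the session, so the actual spelling may differ.

```r
user <- "your_username"    # placeholder: the account name you claimed
host <- "hpc.example.org"  # hypothetical placeholder: real host not shown here
key <- "~/.ssh/MyHPC"      # private key as named in the session; ".pub" is the public half
port <- 443                # SSH port mentioned in the session

target <- paste0(user, "@", host)

# Step 1 (once): install the public key on the cluster; asks for the password.
copy_id_args <- c("-i", paste0(key, ".pub"), "-p", port, target)

# Step 2 (every time after that): log in with the private key, no password.
login_args <- c("-i", key, "-p", port, target)

cat("ssh-copy-id", copy_id_args, "\n")
cat("ssh", login_args, "\n")

# To actually run a command from R instead of the terminal (not executed here):
# system2("ssh", login_args)
```

Printing the commands first makes it easy to check that the username and key path are right before anything touches the cluster.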

Opening app-1 with SSH backend

Okay. Excellent. So now let's go to the next step. We already have this one application working. Now let's open app-1. And app-1 is pretty much the same as app-0. The exception is that this options thing has changed a little bit. And I will walk you through what that means here. So the backend scheduler is SSH. That means whenever clustermq wants to compute something, it runs some kind of SSH command. That's the first line. The second line is where you need to tell it which host to use. And again, in my case, it's posit-007 at something something. So in your case, you just need to make sure that the username up here is the one that you got assigned.

Then for debugging, which we may need in a moment or not, there's output being dumped into that file. And in order to hide all of the gory stuff that we had to do before, like which SSH key, which port number and many other things, all of that is now hidden in a so-called template, here ssh.tmpl. And you also see that in the same folder, you have this ssh.tmpl file. And here you see this looks very daunting. And I think it's very difficult to understand. I mean, for those of you that are more technically involved, here is the -p 443 and the -i option, which you probably still remember from the previous command. All the other things are kind of more gibberish. But what we are doing here is creating a so-called SSH tunnel to this remote host. And we are running some clustermq processes on the remote server. And I mean, all of those curly brackets are just some kind of templating with variables that are being taken care of within the clustermq package.

All right. Now let's do the following. Let's save the app and see if we can get it running. And I think the document that I prepared has one additional step. You will probably again see the same thing as me: connected to something, running one calculation. However, this is what happens behind the scenes here. As we mentioned previously, there has to be some kind of setup on the remote server. And maybe before I go further with the explanation, does anyone of you get any error message already? Or are we still okay? Or do you see the same thing as me? Okay. That's excellent. Cool. And I can also share this window here. Here I am logged into this HPC cluster. And if I do squeue here. Oh, right. And here, yeah, I can already see that two users are already running stuff. And I think that will improve very shortly.

At the moment, yeah. The HPC cluster that you're connected to is a cloud-based HPC cluster. And that means, in a cloud environment, not all of the compute nodes are up and running all the time. At the moment, only one compute node is up and running.

So whoever will run that user at the job 210.

Do we see? It's been canceled already. Okay. Anyway.

Okay. Now, what I'm trying to say here: because it's a cloud-based environment, not all the compute nodes are up and running all the time. So whenever there's a job coming, like you have three jobs now, they're all waiting. This column in the squeue output under ST, which stands for job state, reads CF for everyone. And CF is short for configuring. So that means at the moment, because you guys have submitted a job of sorts, the HPC cluster is spinning up and provisioning a compute node for you. And that typically takes about four minutes and a bit. Yeah, great. And we already see four users that have managed to do that stuff.
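As a small illustration of reading the ST column, the following Python sketch maps Slurm's short state codes to their meaning and counts jobs per state. The sample squeue output, including the job IDs, partition and user names, is invented for the example and is not taken from the workshop cluster.

```python
# Short job-state codes that Slurm's squeue prints in the ST column.
SLURM_STATES = {
    "PD": "pending",
    "CF": "configuring",  # node is being provisioned, as on a cloud cluster
    "R": "running",
    "CG": "completing",
    "CD": "completed",
    "CA": "cancelled",
    "F": "failed",
}

def summarize_states(squeue_text):
    """Count jobs per state from squeue output that has an ST header column."""
    lines = [line.split() for line in squeue_text.strip().splitlines()]
    header, rows = lines[0], lines[1:]
    st_index = header.index("ST")
    counts = {}
    for row in rows:
        code = row[st_index]
        state = SLURM_STATES.get(code, code)  # fall back to the raw code if unknown
        counts[state] = counts.get(state, 0) + 1
    return counts

# Invented sample output, for illustration only.
sample = """
JOBID PARTITION NAME USER ST TIME NODES NODELIST(REASON)
211 all cmq user-a CF 0:12 1 compute-1
212 all cmq user-b CF 0:10 1 compute-2
213 all cmq user-c R 1:05 1 compute-3
"""
print(summarize_states(sample))
```

Jobs that sit in CF for a few minutes are expected on a cluster that boots compute nodes on demand. They should flip to R once the node is ready.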

And once we are beyond the four or five minute mark, then we should see things running.

Let's watch that thing. I can do watch. So we should see it flipping to running at any given point.

Ah, there we are. So one person should already have their job running now.

And let's wait until the others are also starting to run. And while that is kind of doing things: I mentioned before that the HPC cluster does not have any information on what R packages you want to use. I mean, obviously we want to use the Mirai R package, right? Or rather, the clustermq R package. But we also need to use the Shiny package, maybe the palmerpenguins package and stuff like that. So in order to facilitate that, I have come up with a little bit of glue code, which is this HPC setup function that you can see in my screen share here.

HPC setup will predefine the following two things. It will generate a random UUID by which the whole Shiny deployment on the remote HPC can be identified. And the UUID is then used to generate a directory on the remote HPC where all of the packages will be installed. Additionally, I'm also taking note of all of the packages installed on my local server, in this case on Posit Workbench or Posit Cloud and stuff like that. And eventually it returns a package list and a package directory, and then it runs this init function, which is run remotely. And there it will install all of those packages in the respective library paths.
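The idea behind the setup step can be sketched in a few lines of Python. This is not the workshop's R code: the function name plan_remote_library is hypothetical, and the directory naming (.shiny- followed by the UUID, in the remote home directory) is an assumption based on the folder name mentioned later in the session.

```python
import uuid
from pathlib import PurePosixPath

def plan_remote_library(local_packages, remote_home="~"):
    """Pick a unique library directory and record which packages belong in it."""
    deployment_id = str(uuid.uuid4())  # random ID that identifies this deployment
    lib_dir = PurePosixPath(remote_home) / f".shiny-{deployment_id}"  # assumed naming
    return {
        "uuid": deployment_id,
        "lib_dir": str(lib_dir),
        "packages": sorted(set(local_packages)),  # de-duplicated list of local packages
    }

plan = plan_remote_library(["shiny", "clustermq", "palmerpenguins", "shiny"])
print(plan["lib_dir"])
print(plan["packages"])
```

A remote init step would then install the listed packages into lib_dir, and the workers would put that directory on their library path before running any code.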

Troubleshooting package installation errors

Error in pak, could not solve package dependencies. That is something that I also figured. I got the same message in Posit Cloud. I don't know if the person is working there, but I was able to get through it. Like I just worked beyond the error message and it seemed like things were still working okay. So I've noticed in the past, if you're in Posit Cloud, renv and pak seem to throw some strange errors just because Posit Cloud doesn't really have the idea of projects. That doesn't worry me too much here, to be honest, but let me try it here on the workshop thing as well, because there is one thing that is probably a problem here.

Yeah, I think you're right. I just got the same error message in the console. Yeah.

Yes. I just need to reconnect to my session that seems to have stalled on me.

Sorry about that problem guys, but I think this is the same issue I had when I prepared for this demo and I think we are entering a little bit of shaky territory here, so to speak, and I'll explain to you why that is in a moment.

So if I type .libPaths() here, what do we see? Okay, so we have those two folders and I check this thing here. Yes. Yes. Oh my God. Yeah. The reason, and I mean that kind of disqualifies the workshop environment here for sure, is this: if you think about an R installation, an R installation has the main installation folder where it installs the binary, the shared library, the R scripts and stuff like that, but it also has a so-called system library, which is this folder over here. In the system library, by default, only base and recommended packages are installed. While here, we see that we have 229 packages.

Unfortunately, there are only 30 base and recommended packages. So this workshop environment, and maybe it's the same with Posit Cloud as well, has a polluted system library, which is not in accordance with anything that I would call best practice. This pak tool that I'm using in the application code is trying to replicate on the remote HPC exactly the same packages that are installed locally. But because some of the package versions are incompatible with what you have in your R environment and stuff like that, pak cannot solve dependencies if everything is inconsistent. Okay. Let me just think how we can make this work.

I mean, if it comes to diversity, we probably need to switch to me doing the whole thing. I haven't fully given up yet. What is here?

By the way, can you guys also check what you have in this folder in your home directories on Posit Cloud? If there's anything, can I just ask you to remove that thing completely?

So the home directory in Posit Cloud defaults to, I think it defaults to the project folder.

Yeah. I mean, those of you that are working on the workshop environment should probably run this command here. The rm -r. Okay. Got it. Perfect.

Does it matter where the working directory is or do we run that in the console? Yeah. That's a good point. Let's do it like that.

That's easier, safer that way. So if you want to run it like that and see if that helps, because we need to get some kind of consistency here.

So run that in the terminal, because I think my terminal is still inside the HPC user account. Yes. So if it's there, then you just need to type exit or quit.

Okay. I mean, you should be properly local through your Posit Cloud thing. We've ordered this loading. Let me try, if I can fix it on Posit Cloud.

I think if you click on the link that I gave you, Michael, it will take you automatically into the environment that I got set up for today. Yeah, let me give you the link. It should take you right to where you need to go. Yeah. Here you go. I'm going to put it in the chat box for you. Thanks. The only problem with Posit Cloud, though, is that I only installed the tidyverse and not the other packages because of renv. So if you just click content at the top, yeah, and then start. Yeah, perfect. Cool, thanks.

By the way, has someone cleaned up the R folder? Do you still get the same error or when you did it, Phil? I haven't had a chance to do it in Posit Cloud. I'm going to see what the right location of the terminal is. I'm back in the cloud project's location and I'm just going to wait and double check and see what you do here. So yeah. Yeah, so this is where I'm at. Okay. There should also be a home folder here.

Okay. So I think we then need to go to Cloud Lib and remove that thing here. Well, let's just look what's there. Yeah. There's plenty of crap. We don't need that. It's just convoluting things. Okay.

And if you now do Session, Restart R in the console, then it's still pointing to those two things. But now let's look into this folder. I mean, this is kind of what you see here. This is a system library with exactly the 30 packages that I would expect. But then in the Cloud Lib thing, you should now have nothing left.

Do you mind, Michael, either freezing on this page for a second or giving me or pasting what we need to run in the terminal? Yes. The chat box might help a lot. Yeah. So, I mean, what we need to do is Cloud Lib. Yes. Oh, I mean, yeah, we are getting further. That's great. So, I mean, what we need to run is this thing here.

Okay. So the only other thing I think I need to do is I got to change. So my user directory defaults to the projects folder. So I just need to change it. Did you change it to the .libPaths folder in the terminal? Yeah. Well, let me just. Okay. No, no, no. I mean, I didn't change any .libPaths here. I just ran the rm command as I specified. Okay. So now let's just open the file. We are at app-1, right? app.R. Now if I go here, setwd. app-1. getwd. Right. That's good. Session. Restart R. Now let's maybe do renv::restore. Okay.

I think maybe I need to reinstall renv, if we are quick. That's a single dependency thing, so that should be okay. We do renv::restore. Yes. Activate. I've just been hitting yes on that. I don't know. Yeah. Do we need to restore? Yes. I mean, after we removed it, I would recommend that we restore in the app-1 folder as well because it's a separate R environment. Yeah. So it's downloading things here.

And when this completes, do you mind just letting me see your terminal? Yeah. I'm just going to take a peek. If you don't mind expanding that, I'm going to look at that real quick. I don't know what I did. Oh, there we are. Yeah, I see it. Well, I cannot type anything at the moment. Oh, maybe it is. Ah, okay. I can maybe try. New terminal.

Yeah, so I think maybe we just need to wait. Wait. Ah, okay. That can be a bit painful now because, I mean, that means we are actually running. This beautiful setup is using binary packages.

Then I can try to interrupt it and we can. There we go. Now I can type something. Oh, it's very slow. Is it also slow for you guys? It's running lots of GCC stuff now. Maybe Session, Terminate R. I will terminate it real quick and restart because that's way too painful.

Switching to a working demo

And now let's do options, repos.

Unfortunately, that may take a while, but I think maybe in the interest of time, I can switch to my Positron instance and walk you through what's happening next. If that's okay with everyone. And I mean, you can also do the renv::restore and hopefully it will eventually come back. So again, let me just. This is my app-run locally. I run the app again.

Right. And you see, it works for me now. And I mean, what has already happened behind the scenes is the following.

We are here. So, I mean, we have found some error here. Okay, so we have an error here. No package called palmerpenguins. So where does this appear? What is happening? app.R line 164. Ah, that's in the Q function. Okay, I think that's also some of the errors that we saw earlier, right?

Right. And this is now a little bit. I think you also see something like the. You also see the .libPaths set like that. Correct.

I mean, if you do see that, now let's just use the SSH command. Let's log on to the cluster. And I mean, those of you that came to the palmerpenguins error, if you log on to the HPC cluster using this SSH command as before, with the -i and -p options, you will find that there is a .shiny folder. And the .shiny folder is what we need to put into this .libPaths statement here. So instead of the clustermq libs path, we set it to this thing. In your case, it will be .shiny dash some UUID thing. And if I do it like that, I'll just copy and paste that into the chat box here. This is the piece of code, and the .shiny something will have a different UUID for each of you. And then if I rerun the Shiny app, it should be more likely to work.

Yeah, that's much better now. Great. I mean, as you can see, once I change it to the respective library path, it works. I think there's a missing bit in the code that I will fix after the workshop, and you can try it at your own leisure. So now we have the working Shiny app again. You see the look and feel is still the same.

Running jobs on the HPC cluster

And you see there's tooltips on the bottom that is currently running some stuff. And I hope that will come back. Yeah, there we are. Okay, and I think now we can do precisely the same stuff. We can do a thousand trials and immediately it reruns the computation. And for those of you that are logged onto the HPC cluster, you can see that there's now some jobs running, and I see that others are also resubmitting, so that's good to see. And my process is already running here and has just finished, and you see I get a new histogram.

And now I think we can beef up the number of cores to, let's say, three. And if I now go back to my little window, then you see I'm suddenly running three jobs. And that means that I'm using three cores instead of one.

Let's just rerun it again here. On three cores, and you can also watch the progress bar in a moment. You see the progress bar with three or four cores is fairly fast after the initial setup phase. It has run the computation in 20 seconds. Now let's rerun the same thing on one core. So again, 21 seconds. It should be somehow different. Oh, but we still run with 100 trials, so I don't expect it to be too different for now. We need to increase the number of trials to add more work, because one of the properties of that approach is that, in general, the more computation you have, the better it pays off to distribute it over many cores. So, yeah.
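Why 100 trials barely benefit from three cores can be illustrated with a simple runtime model: a fixed setup overhead plus work that divides evenly across cores. The numbers below are assumptions chosen only so that the model lands near the roughly 21 and 20 seconds mentioned above. They are not measurements from the workshop.

```python
def estimated_runtime(overhead_s, work_s, cores):
    """Fixed overhead plus evenly divided work: T(n) = overhead + work / n."""
    if cores < 1:
        raise ValueError("cores must be at least 1")
    return overhead_s + work_s / cores

OVERHEAD_S = 19.5  # assumed fixed cost: SSH tunnel, job submission, worker startup

# Assumed compute time on one core for a small and a ten times larger job.
for label, work_s in [("100 trials", 1.5), ("10x the work", 15.0)]:
    t1 = estimated_runtime(OVERHEAD_S, work_s, 1)
    t3 = estimated_runtime(OVERHEAD_S, work_s, 3)
    print(f"{label}: 1 core {t1:.1f} s, 3 cores {t3:.1f} s, speedup {t1 / t3:.2f}x")
```

With little work, the fixed overhead dominates and extra cores change almost nothing. As the work grows, the divided part starts to matter and the speedup becomes visible.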

Maybe now I'm also, now everyone is stuck on this spinning up thing. By the way, I haven't checked in the chat box. Maybe let's, does anyone have a working Shiny app, app-1 running? Or are you still working? Or do we get some error messages? I can't see anything from anyone, but I can also check a little bit on what you're doing. Because we should see.

And, Zulu dash L, Z, D, O, L, S, plus Z. Because, I mean, as we discussed earlier, there should be some cmq_ssh log files. Yeah, so a couple of you have that already. So, we can take a look at what posit-009 is doing.

There we are. I mean, that seems to have run. That seems to run okay. It's just submitted and waiting. Let's go. Here we have an error message. Argument successes missing. All the others seem to be. There's also argument successes missing. Why is that? But, I mean, we can also check one thing. So, if I go to the posit-009 user.

Because, I mean, one of the things that we haven't done yet is this: here you see the Slurm template that uses R to do stuff. In order to start running, to execute this clustermq package, it needs to be available somewhere.

So, what I will do here, and you can also follow along, is use the SSH command once more. So, I'm logged in as user posit-009. So, you don't need to do that stuff. But, if you then type capital R, then run install.packages with clustermq.

Because, there's also the problem that without this package pre-installed somewhere on your user account, the whole thing will not work. So, if you can follow that stuff and go to this SSH session, like here.

And, I will interrupt my SSH for now. So, if you run this thing here again, like that, of course with your Posit user, and I run the same thing, then I type R, install.packages, clustermq.

And, you see it asks me whether I want to have a personal library. I say yes, yes. Then, I'm installing that clustermq stuff in there. Try that and see if that works better.

Troubleshooting with participants

Any takers or anyone willing to put something in the chat? I mean, we also can get a screen share going with some of you, so we can see what's going on. Ah, for Evo, it works. That's excellent.

Okay, so we have Evo working. And then, Mahesh Kumar, you updated .libPaths. If you rerun stuff, does it work now or is it still a problem? And, Jennifer, you are also still getting the error.

I mean, maybe if either Jennifer or Mahesh Kumar wants to try, if you can share your screen, maybe we can take a look together.

Okay, so you're on Posit Cloud. So, renv::restore, yeah. Okay, that's great. And now, can you run the app as it is now? Yeah, you have that folder. That's great. Now, just run the app and see what's happening.

Okay, so the first thing is working. That's great. It's now starting the app.

Ah, okay, now we have an error. Oh, there's no package path.

Yeah, and this is. I'm sure that this is the same here. Ah, okay. Can you change into the .shiny folder, please? cd .shiny and then press the tab key, on the HPC here.

Okay, I think that we need to. I mean, what happened here? I think you previously probably got the error message, cannot resolve packages or version conflict and stuff like that. So it hasn't installed all of the needed packages.

I mean, there are two possibilities. Either you remove that .shiny folder, you restart the Shiny application, and it will try to reinstall the packages. Alternatively, we can also manually install the palmerpenguins package real quick, as you prefer.

Michael, I'm getting pretty close, but I think I'm getting the same issue that when my app two runs, it sets everything up. It looks like it's getting the remote environment and installing the packages, and then it fails on the Penguins package.

Mahesh and Phil, I think the reason why this doesn't work that way is the technical design here. I mean, first of all, you need to install that package remotely on the HPC cluster. And you need to install it into the same .shiny folder where BiocManager and pak are installed.

No, Mahesh, go back. Go to the other terminal where you're logged onto the HPC. And here you need to manually type capital R to launch an R session. Enter. And now you can set the .libPaths, like you would set it in the code.

And now you run install.packages with palmerpenguins.

Michael, do you mind putting in the chat box what you just did? The .libPaths piece, I think, is the bit that I'm stuck on. Yeah. The .libPaths is. Oh, great. A lot more. Also got it working. It's good. So the .libPaths is this thing. And .shiny something.

So we need to run this stuff on the HPC cluster in an R session. And the .shiny folder is then different for you.

So in the terminal, I'm in the cluster. Yes. And so if I run the .libPaths, .shiny dot dot dot, is that the right thing for me to execute? Yes.

Mahesh, I think to leave your, okay. To leave that session, just type q and round brackets open and close.

Can you type ls again, Mahesh? ls. Okay, now you have the palmerpenguins stuff. And now go back and restart the Shiny app again.

Mahesh, I mean, the thing that is maybe difficult to understand if you do that for the first time is that you have the R packages all set up on your Shiny server, on Posit Cloud or Posit Workbench or the workshop environment. And then there is the whole HPC stuff running on the remote HPC cluster, which also needs the R packages. There we are. Now it's working for you.

So, Phil, are you also making progress? It's not crazy about my .libPaths command, so I'm just trying to wrap my head around it. Sure, I mean, maybe do you want to share the screen and we can. Yeah, sure, I can real quick.

Maybe it's also helpful for others. I mean, that is. The .libPaths thing is also kind of an omission on my part. So, are you on the HPC cluster? Yeah. You're on the terminal.

So, first of all, do an ls -la. La? Yeah, like that. Enter. And now you see you have a .shiny folder somewhere up here. In the middle. You see over here. Right here. Yes. And now I want you to copy that, and now type capital R to launch an interactive R session.

Oh, capital R. Control C or enter. Okay. Capital R. Capital R, got it. That will launch a manual R session. Enter. And now type the .libPaths thing.

Okay. .libPaths. Oops, not that. Let me see. Okay, so dot, lib, capital P. Capital P. Capital P, gotcha. Okay. Open brackets, double quotes, and then your .shiny stuff. .shiny. Got it. Double quotes and close brackets. Cool. Enter.

I'm just going to copy this real quick because I need it. Okay. And then you can run install.packages with palmerpenguins.

Almost there. Almost. Let me see if I can. Yeah. Yeah, just making sure how to spell penguins. Cool. Yeah, there we go.

Cool, just like that? Yeah, like that. Enter. Okay. And you see it's now installing it in the .shiny folder. Sweet. Now you can go straight back to your Shiny app in your R console. Or you can leave that with q and go to the console and run the Shiny app proper.

Okay. So I think I've got it up. I've got it up here. I can just run the app. Yeah, exactly.

So setting up the remote environment. That's good. And it's doing the same error again now. Yeah. Do I need to restart the session? No. No, what I mean, the .libPaths, in the code, did you modify it? Oh, I know. I did not.

So I got this. I'm sure that's it. The clustermq libs thing here. Tell me. Yeah, there we are, line 150. That is what you'd also need to change, to tilde slash and then your .shiny stuff. Oh, the .shiny stuff. So, no, I don't think I saved it.

So, Phil, did you add that to your code, the .libPaths?

Oh, but, well, to your question, I mean, it prompts me to enter the password. I guess that's the password for the HPC login here. I mean, if that's the case, then I think your ssh-copy-id command has not worked. So, can you maybe re-execute the ssh-copy-id command and see if that fixes it?

And by all means, since Phil stopped sharing his screen, also feel free to share the screen. Sorry about that. I, for some reason, lost my screen share, but I'm back. Okay, cool.

Hang on two more minutes and we'll get Phil fixed up. Awesome. So, what do I replace here, Michael? You need to put in the .shiny stuff. Yeah, do I replace all of this cluster? All of that stuff. Just like this? Like this, precisely. And now you save that and rerun it. Forward slash at the end here? Okay. It doesn't matter. It doesn't matter. Okay, cool. All right. So, don't need to restart R? Just run the puppy? Just run the puppy, I guess.

Oh, my terminal. My terminal. Maybe interrupt it first. Yeah. Control C. Yeah, I hit that. It's still frozen here. Oh. Manually stop it.

So, if you could run this command here, in the workshop or Posit Cloud environment, in a terminal window, that would be good. And just make sure that you replace username with your Posit username here.

Okay, so I mean, Phil, I think so. I think my session is a bit frozen, but I think we're in good shape, so I'm going to run it and I'll let you know here in two seconds. Okay, awesome. Yeah. And I mean, if that's the case, I think we have another 15 minutes. So if you look at the project folder, I mean, I probably prepared a bit more than we.

Overview of remaining apps and the Mirai package

Michael, if you want to share your screen again, just FYI. Sure, I'll do that. So, I mean, if you look back, I think now we have good success with App0 and App1. And I would probably stop here for now, because the next apps would mean going to the Mirai package. However, you have access to the GitHub repository. And I will leave the HPC cluster running for the rest of the week. So that means you can come back and explore those other packages as well.

So the reason why I went with the clustermq package is that it has the ability to use this SSH connection from your Shiny server, and then locally uses the Slurm commands to distribute the jobs to the respective compute nodes. And that makes it, in my opinion, very elegant. And because things are so simple from that perspective, we also went through the hoops of using the init functions here, the init data, HPC setup functions, and the init function that will remotely create the .shiny folders and install all of the packages that you would need.

So I mean, that's just to keep that thing very clean and relatively easy to understand.

The Mirai package, and I can probably just gloss over it a little bit, is kind of different, because the Mirai package does not use any templates that would allow you to hide the complexity of some of the IT stuff. So you see I have some strange looking stuff here, like the MyHPC stuff, and the private key stuff is now hard-coded in the source code. However, the reason why I think Mirai is definitely worth exploring is because I believe it is kind of the future. And secondly, if you manage to define such a Mirai context, or if you're able to start so-called Mirai daemons that are, in that case, pointing to an SSH configuration that is doing all the remote SSHing and stuff like that, then this opens up a whole world of possibilities, because there is a package called future.mirai, where you can then directly use futures from within your Shiny app, and they will be automatically exported onto the HPC cluster without you ever thinking more about it.

So, which means instead of only having access to the resources of the local Shiny server, you can again make use of as many resources as you like on the HPC cluster, and you have it all in one unified environment. And secondly, and that's maybe adding a bit more of a Posit touch now, the Mirai package is also at the moment integrated into the so-called purrr package, which is... I should put it in the chat box.

What is this package here? Yes, and purrr is a package for functional programming, and there you have lots of map commands of sorts and many other things. And as of late, behind the scenes, it already launches a Mirai cluster if there's no Mirai context available, but if you predefine your Mirai daemons using the Slurm stuff, then any call to the purrr package will automatically use those daemons, and instead of being limited to local processes, you can make use of your HPC environment again. So, I think that's why I added this Mirai stuff.

The last reason why I didn't go all in on Mirai, which would have been an obvious thing to do, is because Mirai at the moment does not support the nice chaining of the SSH connection to the actual Slurm submissions. So, as a matter of fact, if you look at app3 and app4, app3 will allow you to launch those Mirai daemons over SSH connections and then launch the R processes locally on the login node, which may not be the best thing to do, but I just put it there as an example. And app4 is then doing the same thing on a Slurm cluster, where you would need to run the Shiny app locally on a login node where you can directly submit stuff to the HPC cluster.

Deploying to Posit Connect

But now I see Mahesh is asking a very good question. How will it work for a Shiny app deployed on Posit Connect? Yes.

I probably could turn the arrow around and say to you, Mahesh, in principle, now you have everything you need to know about how to make it work with Posit Connect. However, don't get me wrong. I mean, you still have the process of deploying the app to Posit Connect.

So, however, again, let's just take a step back for the last five or six minutes, how we made the app work. I mean, the app1 with the SSH connections. So, the app1 is using, I just go back here, it's using this SSH connection to do SSH tunnel and stuff like that. And the only thing it really needs is the location of this private SSH key.

If you now think about such a Shiny application being deployed on the Connect server, that means that the so-called application bundle that is created on the Workbench side of things needs to somehow contain this .ssh folder or the my.hpc SSH key. And so, the only change that you really need to make is to ensure that the my.hpc key is part of the application bundle. So, you probably want to copy it into the application directory, maybe make a .ssh my.hpc here, and make sure that it's also part of the files to be deployed. And then last but not least, you also need to modify the SSH template so that it does not have this tilde in front but instead uses a relative path. Because the Shiny app on the Connect server will be executed from some random directory that no one ever should know. So, this command will then use the .ssh thing via a relative path.
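The relative-path point can be illustrated with a short Python sketch. The key location .ssh/my_hpc inside the app directory and the function name bundled_key_path are hypothetical. The check simply rejects home-relative or absolute paths, since those would point outside the deployed bundle.

```python
from pathlib import Path

def bundled_key_path(app_dir, relative_key=".ssh/my_hpc"):
    """Resolve an SSH key that ships inside the application bundle."""
    if relative_key.startswith("~") or Path(relative_key).is_absolute():
        # A home-relative or absolute path would not exist on the Connect server.
        raise ValueError("key path must be relative to the application directory")
    return Path(app_dir) / relative_key

# The app directory on the server is unknown in advance, so it is only a parameter here.
print(bundled_key_path("/some/deployment/dir"))
```

Because the key travels with the bundle, it should be a key created for this purpose only, with access on the cluster restricted accordingly.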

Yeah. So, I think those are the two main things that need to change. And I mean, as a little homework, you guys probably have access to this document, right? I will just share it once more.

This document, you can make a bookmark locally. And for those of you that have survived until now, I will commit to add one example that you can deploy to your local Connect server.

And provided that this local Connect server is allowing SSH access to this Shiny HPC cluster, you should be able to deploy that. And if not, I'm happy to have an individual conversation with you guys.

Let me just maybe send an email to info at... I mean, Phil, would it best go to info at R/Pharma, or should I just let them have my email? Yeah, I would say either way is perfectly fine with us. If you're trying to get in touch with R/Pharma, I would probably say there's two ways to do that. One is our contact page, which I just put in the chat box. But then also, info at rinpharma.com will also go to the nonprofit. And we can send stuff to Michael. But feel free to reach out to Michael directly as well. Either way works perfectly fine.

Okay, cool. I mean, I posted my email address as well, so you can contact me offline. I mean, because we didn't have 100 people today, I mean, I see the traffic should be fairly small. But I mean, I'm interested to make sure that this Connect deployment also works. Yeah. So, I mean, give me maybe one or two days, and this document should also have an example. And the GitHub repository as well, an example that you can deploy to Connect and then remote submit to this HPC cluster.

Okay, I think this was rather fast. Two and a half hours. I hope that it was at least somewhat helpful for some of you. I think we at least had success with a couple of people. I mean, just watch this document. And this will be extended as we go along further. So, you can use that as your... And likewise, the GitHub repository as well, you can use as your go-to resource going forward, because I will also keep adding stuff to that as well.

I mean, with that, thanks for your patience. Thanks for the collaboration also from Phil and from R/Pharma. Thanks for hosting me today. And I'm almost curious to see if one of you will actually be using that stuff in your day-to-day work. If you do and you have success stories, feel free to share.

Closing remarks and community announcements

Absolutely. Thank you so much, Michael. I felt like I was doing the whole thing. So, I feel like I can claim the badge for this one because I completed it. But I very much appreciate you volunteering and giving the community your time and energy and knowledge today. I've just got a couple of quick things and I'll close the bridge. If you need a badge or want a badge, fill out the form that we put in the chat box. The second thing is we'll edit this video and get it posted on YouTube. It takes a month or two. So, if you want to revisit the material, feel free to check out our YouTube page in probably a month or so.

Our next event is going to be the Hangout. I posted that in the chat box. That's going to be on December 16th. The R/Pharma community gets together and has various topics. So, if you want to ask questions about HPC or whatever, that would be a great place to do it with other people from the pharma community. With that, thanks so much for coming. And I think, Michael, we can open it up for questions or end the bridge whenever people are ready. So, thanks again. And Michael, any final comments or any questions by the attendees?

As mentioned, thanks to R/Pharma for hosting and thanks for the patience of the people. And I think we at least got to some working applications, which is quite exciting, yeah? Thanks for everything. Right. And just on time, 1:30, we'll go ahead and end the bridge. And we'll see everybody next time. Thanks again, Michael. All right. Bye. Bye, guys.