Jeroen Janssens - Python Polars: The Definitive Crash Course - PyData Global 2025

Polars is a lightning-fast DataFrame library that is taking the data science community by storm. Its elegant and expressive API makes analyses pleasant to write and efficient to run. In this workshop, we'll demonstrate how Polars enables data scientists to go from raw data to reports by reading, transforming, and visualizing data.


Transcript

This transcript was generated automatically and may contain errors.

Welcome, welcome everyone. Without further ado, help me welcome Jeroen.

All right, let's get started. My name is Jeroen Janssens, and I'm honored to be spending the next hour and a half with you, telling you as much as time permits about Python Polars. This is going to be a crash course based on a book that I wrote a couple of months ago together with Thijs Nieuwdorp. Thijs is the one on the left; that's me on the right. The book contains a lot of information, and I've tried to extract the best parts of it. This is the first time I'm giving this crash course, so I may have way too much material, or not enough. I have no idea.

But that's okay. This is a tutorial, and we're dealing with a live audience. I want to make the most of that; this is not some pre-recorded video. So questions are very welcome and very much encouraged.

It's always a challenge when you're doing a tutorial like this, with so many people and a text chat. I'll do my best to look at the chat every now and then. If I forget, please forgive me, or just remind me that there are questions in the chat.

Oh, yes, a few words about myself so that you know where I'm coming from. I wrote the book Python Polars: The Definitive Guide. It came out earlier this year, and I spent the two years before that writing it.

I also wrote a book called Data Science at the Command Line. That one is a bit older: the first edition is from 2014, and the second edition came out in 2021. I work at Posit as the head of developer relations. And again, I'm very happy to be here.

Overview and agenda

This is the rough outline that I have in mind. But if a question makes me go in a different direction (makes is not really the right word, because I enjoy doing that), then we'll just do that. So again, questions: I love them.

We're going to start with the obvious: getting your data into Polars. I want to get straight to business here. Hopefully you have some data in some format, whether that's CSV, an Excel spreadsheet, or Parquet.

Once the data is in Polars, you query it, and those queries are done using expressions. Expressions are the building blocks for much of the Polars code that you will be writing, so we're going to spend a good amount of time looking at them.

I'll also explain the difference between the eager and the lazy APIs. If we get that far, I'm already pretty happy, because then you know 80% of the Polars that you need.

If there's time left, we can dive a bit deeper into selecting and creating columns, and filtering and sorting rows. I also have some code related to working with strings, datetimes, lists, arrays, and structs, and maybe some joining and concatenating. Again, we'll see where we end up.

Yes, Polars. It's often said: come for the speed, stay for the API. I'm going to assume that by now you have an idea of what Polars is; otherwise you wouldn't be spending an hour and a half with me learning about it.

So you know that it's fast, that it's built in Rust, and all that good stuff. I'm not going to focus on that. Instead, we're going to focus on the API, on writing actual Polars code. I can imagine it would be quite boring to watch me type, so I've prepared a complete notebook.

That reminds me: you have access to this notebook if you like. There's a QR code on this slide. I hope the resolution is good enough and that my face isn't in front of the QR code. I can move my own video out of the way, and I hope you can do the same, so that you can scan the QR code.

This QR code will take you to the GitHub repository that is associated with the book. In there, there's a directory called Crash Course.

It looks like this. On the left, you can see all the notebooks, one per chapter. All the code in the book is shared and available for free. The book itself, unfortunately, is not; you can get the first chapter, but that's it.

And if for some reason this QR code is not working, you can go to polarsguide.com.

Okay. But it's not at all necessary that you do that right now. You can just sit back and relax.

import polars as pl: that's the convention. Otherwise you would be typing polars all the time, so we like to abbreviate it.

The book itself, the one that's in stores, was built using Polars 1.20.0. I'm currently working with 1.33, and that's not even the latest. It's impossible to keep up with the pace at which the Polars team is releasing new versions.

I believe the newest one is 1.36, but hey, that's not a big deal for this tutorial.

Reading data into Polars

So reading and a little bit of writing data.

This table shows the variety of formats that Polars supports.

The usual suspects are in there, CSV, databases, Excel, and Parquet. Those are the formats that I typically work with most often.

There's a column for reading the data, and there's a column for reading the data lazily; those functions start with scan_. We'll get to what reading lazily actually means later. I can tell you that it's related to the lazy API.

CSV is a format that's still used a lot, despite its shortcomings, so why don't we start there? What I like about it is that you can actually look at the raw data.

So that's what I'm doing right here. I have this CSV data with a bunch of penguins, one per row, some columns, and this looks to me like it's formatted nicely.

I don't expect that Polars will have any problem with this file. In general, though, a different character may be used to separate the columns, or there might be a different encoding. Polars assumes UTF-8; if you have a different encoding, it's best to specify it.

There can be lots of different issues with a CSV file. Luckily, the read_csv function, a top-level function in the Polars namespace, accepts a variety of options if you need them. But let's see: this just works.

And now we get a nice-looking table. We see all the column names in bold and, immediately underneath them, their data types. CSV by itself doesn't store any data type information, so the types are inferred from the data itself, and that can cause issues every now and then.

So what do we have here? We've got an i64 column, that is, 64-bit integers, and a whole bunch of strings, even though length and depth look like they should be numbers, floating-point numbers to be precise.

But here on the fourth row there are some NA values, and I have a feeling that those are messing up the reading of the data.

Those NA values stand for missing values. With Polars, only empty strings are interpreted as missing values by default, so if there are other values that should be interpreted as missing, you need to specify them explicitly.

In our case, that's null_values="NA". Now, what do we see? I'm reading in the same data, but we get back a Polars DataFrame in which the data types of those columns now seem to be correct.

That's fantastic. Yeah, you can also see that those missing values are printed as null.

A very useful method on a DataFrame is null_count. I'm transposing the result here just so it's easier to read;

otherwise you get a very wide DataFrame. We can see that some columns indeed have missing values.

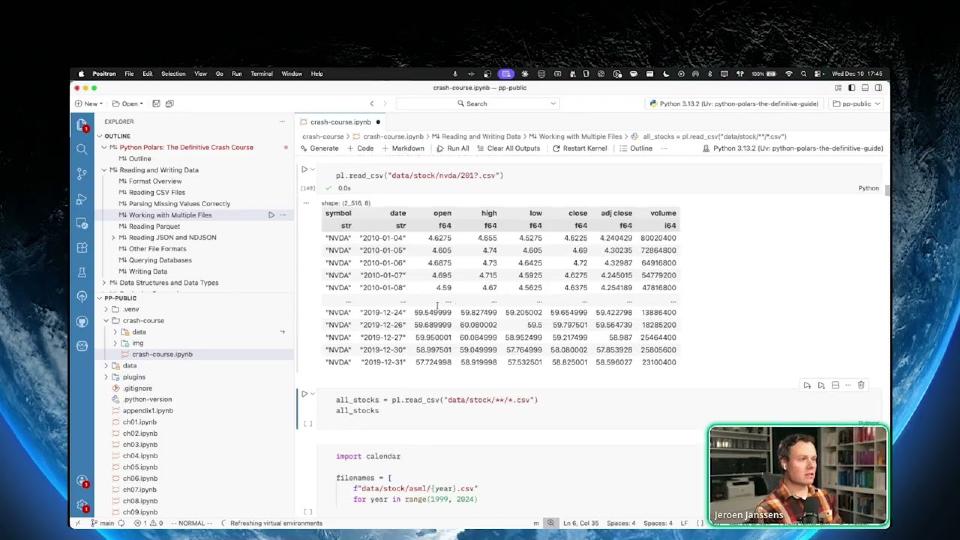

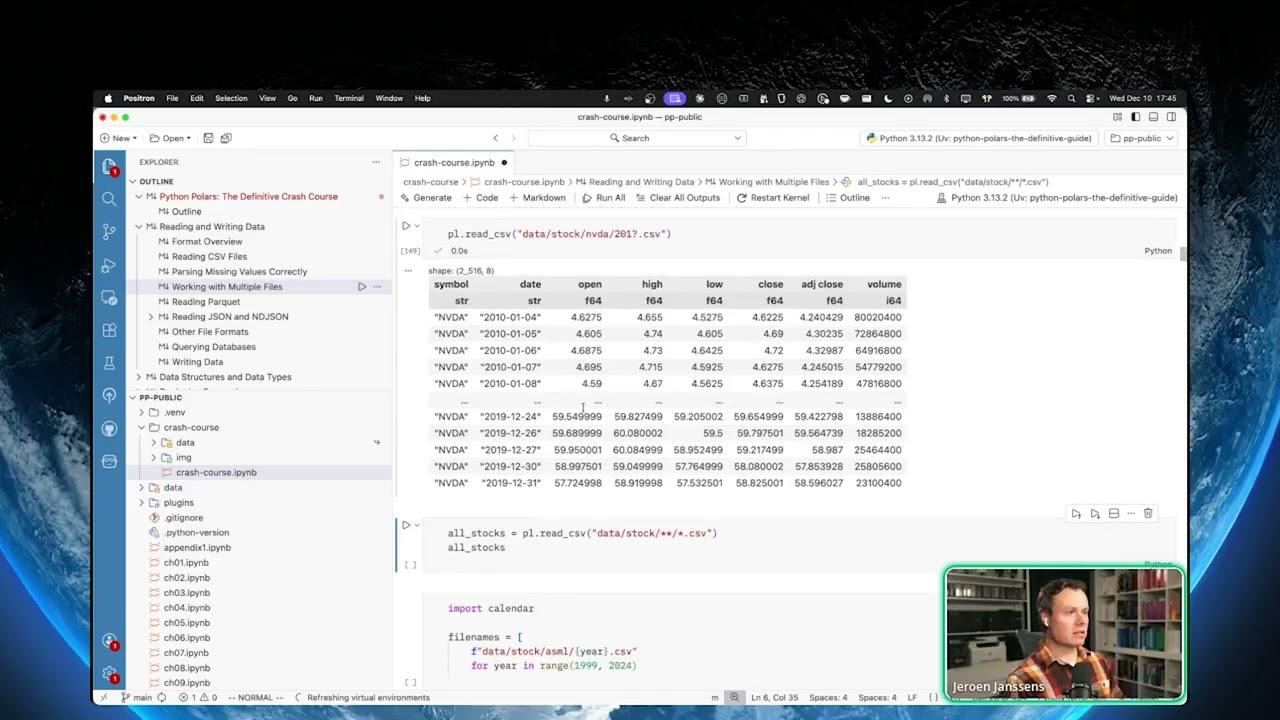

Okay. Let's have a look at how we can work with multiple files. It's quite common, especially when data comes in on a regular basis, that you have a collection of files.

There are different ways to read all those files in one go as one DataFrame. One is globbing: you specify a pattern that matches the file names that you want to read.

I have some NVIDIA stock data here. Because there's a question mark in the pattern, it matches every year in the 2010s: the question mark represents any single character.

Let me have a look at the questions; there are some here. Does read_csv detect BOMs? I don't know; I would have to look that up. Next: "if Polars had issues with the Palmer penguins..." Yes, I would be worried.

Well, "issues": I guess you're referring to the size. Polars is indeed capable of handling very large data sets, and the Palmer penguins data set is by no means big data.

As for null_values="NA": whenever Polars encounters the text NA, as it does here, it treats it as a missing value. This is without specifying null_values="NA",

and this is with it. You can actually specify multiple values. Say Adélie, one of the species here, should also be treated as missing: then all those values are interpreted as null.

It's best to specify this when you're reading in the data, because then Polars gets a chance to infer the correct data type for that column. You can always fix things later, once the data is loaded:

you can still replace values and cast your strings to numbers. That's all possible. But if you have the opportunity to do it when you're reading the CSV file, then it's best to specify it there.

If you know the answer to a question someone else has, you are absolutely welcome to answer it for me. So thank you, Ola. And Stephen, I hope that answers your question.

Oh, wait, where were we? Yes, reading multiple files. If you use a globbing pattern and multiple files match it, Polars concatenates them automatically.

This globbing pattern here means that Polars recursively goes into every subdirectory and reads all the CSV files it finds there. If that works and it's what you want, fantastic. There's a lot of flexibility there.

Alternatively, you can generate a list of file names. These are all the leap years that we have for ASML, just as an example. Then we have a generator expression, I guess.

For every file name in this list, it calls the read_csv function, which gives a bunch of tiny DataFrames. Then pl.concat, which stands for concatenate, stacks all these DataFrames into one big DataFrame.

So that's, of course, also an option that you can use.

Great. Parquet. If your data is in Parquet, that's fantastic, because Parquet, unlike CSV, does contain data type information, and then some. So you can be assured that the data types are correct.

Here there's another globbing pattern, and I'm timing it. Did you see how fast that was? This is just on a laptop: 1.3 seconds, for almost 40 million rows. Not a problem.

I just wanted to show off how easily Polars can handle this. From now on, I'll stick to smaller DataFrames, because those are much easier to present.

But now you can rest assured: 40 million rows covers a lot of use cases. Next up: JSON, or newline-delimited JSON. That's something you often encounter when you work with RESTful APIs, for example.

This is worth covering because JSON can be nested, and often is. Here we have the information for all the Pokémon; we're just looking at a single Pokémon, Bulbasaur, and you can see that there is some nesting going on.

Polars can read this, but it doesn't look pretty when you read it as is, without any options: it reads the entire JSON structure as a single value. That's because this JSON file has only one top-level key,

and that key holds all the Pokémon. So we need to do some work to turn this into a DataFrame that we can actually use. Two methods that help here are explode, which turns a list of items, for example a list of Pokémon, into rows,

and unnest, which turns object keys into columns. One makes the DataFrame longer; the other makes it wider.

Here's an example that uses both methods. First we explode the Pokémon key, the very first key, so every Pokémon gets its own row. Then all the keys for each Pokémon become columns.

I don't even need the select there; let me show you everything. It is pretty wide. Here we have Bulbasaur again, in a single row, and this looks pretty good.

You may need to do more unnesting or exploding depending on your use case, because there is still some nested data in here. But you get the gist.

Newline-delimited JSON is not that different, except that every line is a complete JSON object. So here we have very long lines; this is what one line looks like.

It's kind of the same data as the Pokédex file, but for this format Polars has a slightly different function: read_ndjson.

In this case, no exploding is needed, because every object is already on its own line. We only need unnesting to create some columns.

There are many other formats. I'm not going to cover spreadsheets or databases. But if there's a format that Polars doesn't handle, then as far as I'm concerned, it's totally fine to use pandas.

pandas has been around for over 15 years, so it can handle many more formats. And because you can use the from_pandas function to convert a pandas DataFrame into a Polars DataFrame, you're good.

In this example, I'm using the read_html function from pandas to turn an HTML table into a DataFrame, which is then converted into a Polars DataFrame.

This is a good opportunity to mention that there's also the opposite direction: you can go back to pandas with to_pandas. This is a strategy that my co-author Thijs Nieuwdorp and I have used in the past, when we worked for a client and needed to convert a large code base that used a lot of pandas.

Instead of converting everything at once, we took out little bits and pieces and said: here we convert to Polars, do some work, and then convert back to pandas. That allowed us to gradually convert this large code base from pandas to Polars. So there's a tip from the trenches.


Ola, yes: when your CSV files are not compatible, I believe you get an exception. There is still a way around that, by concatenating them and setting a parameter.

Let me jump to joining and concatenating. Besides horizontal concatenation, there's vertical, diagonal, and align. And this would be your situation.

In this basic example, we have two DataFrames that we create from scratch: we're not reading from a file but specifying the actual values. One has the columns id and value, and the other one has value and value_2, so they're incompatible.

If you use the concat function with how="align", Polars creates new columns where needed. Still, I advise you to double-check that this is indeed the intended behavior.

Data structures: DataFrames, Series, and LazyFrames

Moving on. I glossed over this, but that nice-looking table is a Polars DataFrame: a two-dimensional data structure that consists of rows and columns.

Under the hood, those columns are called Series. You can also have a Series by itself, but in practice that doesn't happen that often; usually you just work with a DataFrame.

A Series is kind of like a Python list, but a lot more restrictive: all the values in it need to be of the same type. In this case, they're 64-bit floats.

Besides the DataFrame and the Series, there's a third important data structure in Polars: the LazyFrame. A LazyFrame by itself typically holds no data. It's more like a recipe: it contains the instructions that Polars needs

to read some data and process it, but by itself it doesn't do anything. Let me give you an example. Before, we used the read_csv function; now I'm using scan_csv.

I also apply the with_columns method, which means I'm going to create a new column called is_heavy. We'll go into this later, don't worry. For now: reading is one instruction, and adding a new column is another.

And this is lazy. What gets printed is a big diagram, the query plan, but this is the LazyFrame, lazy_df. Again, this demonstrates that it doesn't do anything by itself.

It's a set of instructions. When you do want to execute it, that is, materialize your LazyFrame into a DataFrame, you call collect, and this triggers all the instructions.

Here you can see that this is a DataFrame. It prints nicely, but we started with something that was lazy. So those are the three main data structures.

Every Series, which is very often a column but not necessarily, has a data type associated with it. Types can be numeric or related to dates and times; they can contain nested data, which in turn can have other data types; and there are strings.

Boolean columns are very common too. So there are a lot of possibilities. And I believe that, with a very recent feature in the latest release of Polars, you can actually extend those types.

I haven't played around with that yet, but it's quite exciting. I saw an example where you can easily specify, say, a coordinate, which consists of two floating-point numbers. These are called extension types.

When you're working with a DataFrame, you do everything in an eager fashion: every instruction gets executed immediately.

This has the advantage that, especially when you're working interactively with your data, say in a notebook, you immediately see the result of what you're doing.

With a LazyFrame, by contrast, Polars sees the entire query before executing anything, which gives it room to optimize. For example, if you have a filter (say, I only want to keep these rows), Polars may be able to move that filter into the reading step, so that it only reads the data that's actually needed. That's quite exciting.

So there's a whole bunch of optimizations that can happen. Which ones exactly is a bit out of scope for this tutorial; the main thing to remember is that when you work in a lazy fashion, you give Polars the opportunity to apply all those optimizations.

Back to the data types: there's the array and there's the list. A list is like an array, except that its length can differ from row to row within the same column.

Here's an example of a struct. It's often used when you want to work with multiple Series at once. I know that sounds kind of vague.

Expressions: the building blocks of Polars

Okay. Yes, this is the moment that I have been waiting for. Expressions. I'm very excited about this.

When Thijs Nieuwdorp and I set out to write the book, we thought: expressions, sure, they're important, let's give them one chapter.

It turned out that we needed three chapters. So at least 300 pages in the book are dedicated to just expressions.

We underestimated how deep these things go and how important they are. They really are the building blocks when it comes to writing Polars code.

That means that when you understand expressions, you're halfway there.

I can imagine that some of you have some experience with pandas.

If you do, that's not always an advantage: there are some things you need to unlearn, because Polars is just different, and for a large part that is because of expressions.

I'm going to work with some fruit here; fruit is good for us. This is a simple DataFrame, 10 rows and 5 columns, and we're going to do some very common operations, like selecting columns.

The select method allows us to keep a subset of the columns of the original DataFrame. In this case, the first argument selects the column named name.

The next argument contains a regular expression, not to be confused with a Polars expression, which selects all the columns whose names contain the characters "or". In our case, those are color and origin.

The third argument selects the weight column and then divides it by a thousand. The first three arguments are all expressions.

You can tell because they start with pl.col, where col stands for column. The fourth one is not an expression; it's just a string. The select method is flexible enough to accept both expressions and strings.

Back to the weight column: we're dividing it by a thousand, turning grams into kilograms. That's only possible because it's an expression. With is_round we cannot do anything, because it's just a string.

Let's run this and see what we get. We get not four but five columns, because the second argument, the regular expression, selects both color and origin.

You can see that the weight column is indeed divided by a thousand. And that's about it. So this is one method call with three expressions, where, and I'll get to this later, the third one is actually a combination of expressions.

Now, second example, let's create some new columns. I'll first run it. That's perhaps easiest. So, you can already see what is going on here.

Okay. Again, we're passing in two arguments, this time to the with_columns method, and both are expressions. The first one uses pl.lit, which is another way of creating a new expression.

Before, we used pl.col to select an existing column from a DataFrame. pl.lit, which stands for literal, creates a Series that just contains the value true.

alias gives it a name. Otherwise, let's see, yes, it gets the column name literal, which is something you probably don't want.

So we can give a new name to a column by using the alias method. Or rather, to a Series; sorry, I often confuse myself here. The distinction will become clear later, when we get to the definition of an expression.

There's another way to give a Series a name, and that's with a keyword argument. Here we're saying: create a new column named is_berry, based on this expression.

It starts with the column called name and then uses the str.ends_with method. So this expression returns true when the value in the name column ends with berry, and false otherwise.

This may look a little odd: we see str and then immediately another period. Here, str is known as a namespace, and expressions have a couple of them.

Namespaces are mainly there to organize the many methods that expressions have. The str namespace contains a whole bunch of methods that have to do with strings: string operations.

One of them is ends_with. So that creates two new columns. Notice that they're added at the end. Later on, if I get there, I'll show you a trick to easily bring those two columns to the front.

Filtering rows. This expression here, which I can also put on one line, evaluates to either true or false. That's actually a requirement for filtering:

when it's true, keep the row; when it's false, discard it. What we're doing here is checking both whether a piece of fruit is heavier than 1,000 grams and whether it is round.

is_round is already a Boolean Series, so you can combine the two with the and operator, &. You combine two expressions into a single one that determines which rows you want to keep.

Here's another example: aggregation. When it comes to aggregation, you can use expressions in two different places. The first one is here. It's a long one, but it determines how to split the data into groups.

So what are we doing here? If we look at the origin, sometimes it consists of two words, like South America and North America. Both of those are turned into just America.

That's what this does: first the split method from the str namespace, and then the last method from the list namespace, because split creates a list. It's quite a lot, but just remember the result: North America and South America both turn into America, which becomes one group.

Then, per group, we compute two things: the number of rows in the group, using the pl.len function, and the average weight.

The mean method turns many values into one. Here we can see the result: all the groups, each producing one row, are combined into a single DataFrame.

Okay, Ola asks: does the user have to use pl.col? Yes, if you want to do anything with that column, that is, if you want to apply some operation to it.

If you just want to use the column as is, which is quite common when you're selecting columns, and you don't want to apply any transformation, you can just use its name.

And then the last example of where expressions are used a lot, that's when it comes to sorting.

Yeah. So, here we are sorting by the length of the name. Yeah. I'm not sure how useful that is, but I just wanted to show you something here.

What's interesting here, by the way, is that this creates a new Series with just the length of each name, which is then used for sorting.

But we never get to see this Series. That's an important property, and it's why there is a distinction between Series and columns.

Yeah. So, every column is a series, but not every series actually becomes a column.

But I think we can do a little bit better. I want to spend a little bit of time here going into what an expression actually is.

And we have come up here with this definition. An expression is a tree of operations that describe how to construct one or more series.

Wow. Okay. Okay. So, there are a few things here to unpack.

First of all, Series: that's what comes out of an expression. Sometimes a Series becomes a column, and sometimes not.

Second, it's a tree of operations. Consider this expression: we're adding 3 and 5, and we divide the result by 6.

Well, we're not actually doing that. We just create the expression, but the expression by itself doesn't do anything. It's a description, which is actually my third point here. It's just a description of what to do.

But there's a nifty method we can use here, meta.tree_format, and we can see that those operations are indeed in a tree-like shape, upside down.

So, we start with these literal values all the way at the bottom. 5, 1, 5, and 3. And then the first two are combined into one using the add operator.

And the same for the third and the fourth value. And then in turn, those two new expressions are combined into a single one using the division operator.

Now, "describe" as in: an expression by itself doesn't do anything. It is lazy. I might have an example of this later.

Actually, let me do it right away. is_orange is just an expression, that's it. This is what gets printed when we print the object is_orange.

It's just a Python object, so we can pass it to a method such as with_columns. Remember, with_columns creates new columns.

But we can use that same expression to filter and only keep the orange fruits. We're passing in the same Python object, but now it's executed by a different method.

So if expressions are like recipes, the methods are the cooks: they decide what to do with them. There's another part that plays a big role in determining the output,

and that is, of course, the DataFrame itself. You can reuse expressions on other DataFrames. Here I have a brand-new DataFrame with just three flowers.

Let me print this DataFrame first: three flowers. If we apply the filter is_orange, we keep two rows. And all this time, it was the exact same expression.

This is very powerful. Being able to give expressions names, especially complicated expressions that combine all sorts of things, can make your code a lot cleaner.


Back to the definition: an expression constructs one or more Series. With a single expression, you can apply the same operations to multiple columns.

Here's an example. I'm specifying a dictionary to create a DataFrame with two columns, A and B, and I multiply both columns by 10.

I select all the columns using pl.all, multiply them by 10, and then change the column names. Otherwise the original columns would be overwritten, because the results would still be called A and B.

Since I'm adding a suffix, times 10, I get two new columns. That's quite awesome: you don't have to repeat yourself when you want to do the same thing to multiple columns.

The two most common ways to start an expression are pl.col and pl.lit, for literal values. I think I can go a little faster here. You can also get an error when a column doesn't exist: smelly is not a column in this DataFrame, so we get an exception.

pl.all, which we saw before, is a way to select all the columns. You can also select all the string columns, by passing a data type to pl.col. Data types are also part of the top-level Polars namespace.

You can also pass multiple data types or multiple column names. This is all cool, but what's even cooler (I hope to show you in a moment, but I'm already giving you the name)

are column selectors. If we don't get there, write it down and look it up: column selectors. They give you a lot more flexibility when it comes to selecting columns according to certain rules.

Right. Literal values. There are two ways to give a new name to such a column; otherwise it will be called literal. Sometimes you'll see the alias method, and sometimes you'll see a keyword argument.

What I like about the keyword argument is that you're upfront about what you're creating. But this approach has some limitations: the name has to be a valid Python identifier,

so it cannot start with a number, for example. And if you want to select other columns after it, you also run into trouble. I cannot show that here with pl.select, because that just executes the expression without a DataFrame.

But I do want to give you an example: fruit.select. We can even rename in here, right? So we can say fruit_name=pl.col("name"). Let me try this one. Yes, so we can rename at the same time.

We can actually create new columns within the select method; that's what I'm doing here. But if I then say, well, I'm also interested in the origin, you get a syntax error. That's just not allowed in Python, and there's nothing Polars can do about it: a positional argument cannot follow a keyword argument.

So there are pros and cons to both approaches.

Renaming expressions. So, this is actually an interesting one. I learned something today as I was preparing for this tutorial.

This used to give an error, and it was a longstanding issue on the Polars GitHub repo.

But when I tried it out today, it actually worked. There was a problem where you could not chain multiple naming methods, but now you can. So that's fantastic. Go Polars team!

Expressions are also what idiomatic Polars code is built on. If you're familiar with pandas, you may recognize this syntax here, with lots of brackets. That's what pandas code usually has: lots of brackets.

So you may be tempted, or just out of habit, to try this. And you know what? It works: we can extract a single Series from a DataFrame using the bracket notation.

The ampersand, the and operator, works on those two Series: it creates a new Series, which is passed to the filter method. So there is no expression in this code cell.

This is all evaluated eagerly: first this part, then this part, then they're combined, and then the result is passed to the filter method. It works.