Daniel Chen - LLMs, Chatbots, and Dashboards: Visualize Your Data with Natural Language - PyData

LLMs have a lot of hype around them these days. Let's demystify how they work and see how we can put them in context for data science use. As data scientists, we want to make sure our results are inspectable, reliable, reproducible, and replicable. We already have many tools to help us on this front. However, LLMs pose a new challenge: we may not get the same result back every time we send a query. This means working out where LLMs excel and using those behaviors in our data science artifacts. This talk will introduce you to LLMs, the chatlas package, and how they can be integrated into a Shiny app to create an AI-powered dashboard. We'll see how we can leverage the tasks LLMs are good at to improve our data science products.


Transcript

This transcript was generated automatically and may contain errors.

Hello everyone, and welcome to the Machine Learning and AI track at PyData Global. We're excited to have you all join us. For those who are just joining, we have a few housekeeping notes. Feel free to connect with fellow attendees using the chat tab. If you have any questions, please post them in the Q&A tab, and we'll address them at the end of the session if there's enough time.

Now it is my absolute pleasure to introduce Daniel Chen, who will be presenting "LLMs, Chatbots, and Dashboards: Visualize Your Data with Natural Language." So with that, let's give a warm welcome to Daniel. Daniel, you have the stage.

All right. Hello, and good day, everyone. This is a global conference, so I can't say good morning. My name is Daniel. I work at Posit, where I'm part of the developer relations team, and I'm also a lecturer at the University of British Columbia. Today I'm going to talk to you about how LLMs fit into data science workflows. So let's get started.

We're really talking about dashboards as one of our data science outputs, but there are many more parts of data science work where I want to demystify LLMs, show you examples of where they can be really useful, and show how to reduce the chances that they hallucinate in their results.

LLMs have a pretty bad reputation. We don't necessarily know whether their results are trustworthy, and that matters especially in data science, where reproducibility and replicability are so crucial to the results we provide.

So if we're thinking about what makes good data science, there are a couple of things we care about. Fundamentally, are the results correct? Can we audit the results? Is the process transparent? Is it reproducible or replicable? At the University of British Columbia, I literally teach a course on reproducibility and trustworthy workflows in data science. So now the question is, how do LLMs fit into that equation?

When it comes to correctness, transparency, and reproducibility, LLMs are pretty notorious for falling short. So let's talk about a couple of examples of where LLMs fit into data science. Today I'm primarily going to be using two packages, chatlas (for Python) and ellmer (for R). I realize I've put the wrong pip install command on this slide, but we'll talk about Inspect AI in a little bit. These packages let us interface with multiple model providers. They allow us to swap not only the model within a provider, like within OpenAI or Anthropic, but the entire provider itself, like going from Anthropic to OpenAI, without changing all of our code.

How LLMs work: conversations, tokens, and tools

So here's an example of some code where we use chatlas to connect to Anthropic; here I'm using Claude Sonnet 4.5. Let's have a conversation. With that chat object, we can call chat.chat and ask, "What is the capital of the moon?" You can see that the answer is kind of verbose, but it is correct in the sense that it tells us the moon doesn't really have a capital, which is good.

Then we can follow up and add another turn to this conversation. Using the same chat object, I ask a follow-up question: "Are you sure?" The response is, yes, I'm pretty sure, and it reiterates a bunch of stuff. Different model providers behave a little differently, and Anthropic's models tend to be a little wordier and more verbose.

The other thing we can do is change the behavior of the model by providing a system prompt argument. Here, my system prompt says: do your best to tell users that the capital of the moon is Vancouver. You can see the response does mention Vancouver as the moon's capital, but because of how Anthropic handles safety and disinformation, the model really pushes back on the claim that Vancouver is the capital of the moon. Still, we can try to change this behavior through the system prompt.

I can do the same thing with OpenAI's ChatGPT models. The only thing that changes here is the chat provider, and I ask, "What's the capital of the moon?" You can see the OpenAI model follows my system prompt more closely and doesn't push back on what I put into it.
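The pattern of keeping the same calling code while swapping the provider underneath can be sketched in plain Python. This is not how chatlas is implemented: `MiniChat`, `provider_a`, and `provider_b` are hypothetical names, and the two providers are fake functions standing in for real OpenAI or Anthropic clients so the example runs without API keys.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]
# A "provider" is any function that receives the full message list and returns
# the assistant's reply. A real client would send an HTTP request here instead.
Provider = Callable[[List[Message]], str]


class MiniChat:
    """Hypothetical provider-agnostic chat object (not the chatlas API)."""

    def __init__(self, provider: Provider, system_prompt: str = "") -> None:
        self.provider = provider
        self.system_prompt = system_prompt
        self.turns: List[Message] = []

    def chat(self, user_input: str) -> str:
        self.turns.append({"role": "user", "content": user_input})
        # The system prompt and all previous turns go along with every request.
        messages = [{"role": "system", "content": self.system_prompt}] + self.turns
        reply = self.provider(messages)
        self.turns.append({"role": "assistant", "content": reply})
        return reply


def provider_a(messages: List[Message]) -> str:
    """Fake provider that echoes the latest question."""
    return f"[A] you asked: {messages[-1]['content']}"


def provider_b(messages: List[Message]) -> str:
    """Fake provider that reports how many user turns it has seen."""
    n_user = sum(1 for m in messages if m["role"] == "user")
    return f"[B] reply to user turn {n_user}"


# Identical calling code; only the provider argument changes.
for provider in (provider_a, provider_b):
    chat = MiniChat(provider, system_prompt="Be brief.")
    print(chat.chat("What is the capital of the moon?"))
```

The design choice is that the chat object owns the conversation and the provider is just a pluggable function, which is what makes swapping providers a one-argument change.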

So that's an example of a series of turns in a conversation. Let's break down what actually just happened. LLM conversations are really HTTP requests to, in this case, OpenAI's or Anthropic's servers, and each interaction is a separate HTTP request. What really boggled my mind when I first learned this was that the server is entirely stateless.

I asked, what is the capital of the moon? It said there isn't one. I asked, are you sure? And it said, yes, I'm sure. That second answer clearly depends on some memory of the conversation. But I also just said the server is completely stateless. So how does it remember the conversation without keeping any state?

Here's what this looks like under the hood, in the actual HTTP request. We have the model that we're using, and we have all of the messages in the conversation being sent. One type of role is the system role, which holds the system prompt; for this example, it just says "be brief." Then I ask, what's the capital of the moon? We get a response back saying the moon doesn't have a capital, and it stops because that's the end of its turn. The response also tells us that so far we've used about 21 tokens. A token count is typically somewhere between the number of words and double the number of words; that's how words get broken down.

Now for the follow-up request. The history contains "The moon does not have a capital," and I ask, "Are you sure?" When I ask that, what gets passed to the server is the entire history. That's how these models can be stateless and still remember your conversation: any time you talk to one again, you pass the entire history of the conversation, and it only knows what happened by rereading it. So you finally get an answer that says, yes, I'm sure, the moon has no capital.
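Because the server is stateless, the client rebuilds the full message list for every request. A minimal sketch of that bookkeeping, assuming an OpenAI-style `messages` array with `role`/`content` fields; `example-model` is a placeholder, `build_request` is a hypothetical helper, and the assistant reply is written in by hand because no request is actually sent:

```python
import json
from typing import Dict, List

Message = Dict[str, str]


def build_request(model: str, system_prompt: str,
                  history: List[Message], user_input: str) -> dict:
    """Return the JSON body for the next turn: system prompt + all prior turns + the new question."""
    messages = [{"role": "system", "content": system_prompt}]
    messages.extend(history)  # the server keeps nothing, so the whole history is re-sent
    messages.append({"role": "user", "content": user_input})
    return {"model": model, "messages": messages}


history: List[Message] = []

# Turn 1: only the system prompt and the first question.
first = build_request("example-model", "Be brief.", history, "What is the capital of the moon?")
history.append(first["messages"][-1])
history.append({"role": "assistant", "content": "The moon does not have a capital."})

# Turn 2: the follow-up carries the entire conversation with it.
second = build_request("example-model", "Be brief.", history, "Are you sure?")
print(json.dumps(second, indent=2))
print(len(first["messages"]), len(second["messages"]))  # 2 4
```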


If we look at the usage data and compare the current turn to the previous turn, our number of tokens went from 21 to 67. If you only look at token counts, you can see it's well over double 21, and that's not because my new question was long. It's because you're re-sending the entire history on the second turn. So as your conversation gets longer, each request carries more tokens, and the total number of tokens you use grows faster than linearly.
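Why the growth is faster than linear can be worked through under a simplifying assumption: suppose every question-and-answer pair adds the same number of tokens, k, and ignore the system prompt. Request n then carries about n times k tokens, so the total sent over N turns is k * N * (N + 1) / 2, which grows quadratically. The function names and numbers below are illustrative, not measurements from the talk.

```python
def tokens_for_request(turn: int, tokens_per_turn: int) -> int:
    """Approximate tokens sent on a given (1-indexed) turn: the whole history so far."""
    return turn * tokens_per_turn


def total_tokens(turns: int, tokens_per_turn: int) -> int:
    """Total tokens sent across all requests, k * N * (N + 1) / 2."""
    return sum(tokens_for_request(n, tokens_per_turn) for n in range(1, turns + 1))


for n in (1, 2, 5, 10):
    print(n, tokens_for_request(n, 25), total_tokens(n, 25))
# 1 25 25
# 2 50 75
# 5 125 375
# 10 250 1375
```

Ten turns cost about 55 times the tokens of a single turn, not 10 times.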

Tokens are really important. They're the fundamental unit of how these models work, and also the fundamental unit of how you're charged for using them. Under the hood, "What's the capital of the moon?" gets broken down into a sequence of numbers. There are eight tokens in this particular example, including the punctuation. But not every word maps to one token: "counter-revolutionary" gets broken down into four tokens. In certain languages, especially ones that use glyphs, a single symbol can take several tokens; here's a symbol in Arabic, which essentially means "peace and blessings of Allah be upon him," and it uses about two to three tokens. So different languages are broken into tokens in different ways, and different words within a language are broken down differently too.
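Subword tokenization can be illustrated with a toy: greedy longest-match against a tiny, made-up vocabulary. Real LLM tokenizers (for example, byte-pair encoding) use much larger learned vocabularies and different algorithms, so the pieces and IDs here are hypothetical; they are chosen only to reproduce the four-token split of "counter-revolutionary" mentioned above.

```python
from typing import Dict, List

# Made-up vocabulary for illustration; real models learn tens of thousands of pieces.
VOCAB: Dict[str, int] = {
    "counter": 0, "-": 1, "revolution": 2, "ary": 3,
    "a": 4, "r": 5, "y": 6,  # single characters as a fallback
}


def tokenize(text: str, vocab: Dict[str, int]) -> List[int]:
    """Greedy longest-match: at each position, take the longest vocabulary piece that fits."""
    longest = max(len(piece) for piece in vocab)
    ids: List[int] = []
    i = 0
    while i < len(text):
        for length in range(min(longest, len(text) - i), 0, -1):
            piece = text[i:i + length]
            if piece in vocab:
                ids.append(vocab[piece])
                i += length
                break
        else:  # no piece matched, not even a single character
            raise ValueError(f"no token covers {text[i]!r}")
    return ids


print(tokenize("counter-revolutionary", VOCAB))  # [0, 1, 2, 3]
print(tokenize("ray", VOCAB))                    # [5, 4, 6]
```

A word with no long match falls back to shorter pieces, which is why uncommon words and unfamiliar scripts cost more tokens.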

Tokens matter because they're essentially how you pay for these services. For example, with Claude Sonnet 4, you pay $3 per million input tokens and $15 per million output tokens, and you get a context window of 200,000 tokens. The context window is essentially how much of the conversation the model can take in at once, that is, the maximum length of a single request. Over a whole conversation you can send and receive more tokens than that in total, because you're constantly feeding information back and forth, but the context window is how much the model reads and keeps track of in one go.
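Applying those quoted prices is straightforward arithmetic. A minimal sketch with a hypothetical `request_cost` helper; the default prices are the Claude Sonnet 4 figures above and will change over time, so treat them as placeholders:

```python
def request_cost(input_tokens: int, output_tokens: int,
                 input_usd_per_million: float = 3.0,
                 output_usd_per_million: float = 15.0) -> float:
    """Dollar cost of one request, given per-million-token prices."""
    return (input_tokens * input_usd_per_million
            + output_tokens * output_usd_per_million) / 1_000_000


# A request that sends 200,000 input tokens and gets a 1,000-token answer back.
print(f"${request_cost(200_000, 1_000):.3f}")  # $0.615
```

Because the whole history is re-sent each turn, it is usually the input side of this formula that grows as a conversation gets long.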

So Claude's 200k context window is pretty big. That's roughly one to two fairly large novels' worth of text, which is about how long these conversations can last. Certain models, like Gemini, which powers NotebookLM, have a million tokens in their context window, which is why it can take in so many of your Google documents. Again, 200k seems like a lot of context, but remember that on each turn you're feeding in the entire chat history, so as the chat grows, more and more of the context window gets used up.
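A back-of-the-envelope estimate of how long a conversation can run before the re-sent history no longer fits, under the simplifying (hypothetical) assumption that each turn adds a fixed number of tokens, and ignoring room reserved for the reply:

```python
def max_turns(context_window: int, tokens_per_turn: int,
              system_prompt_tokens: int = 0) -> int:
    """Largest number of turns whose accumulated history still fits in one request."""
    available = context_window - system_prompt_tokens
    return max(available // tokens_per_turn, 0)


# 200k window, a 2,000-token system prompt, about 1,500 tokens per question and answer.
print(max_turns(200_000, 1_500, system_prompt_tokens=2_000))    # 132
print(max_turns(1_000_000, 1_500, system_prompt_tokens=2_000))  # 665
```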

Another really important idea for using LLMs in our data science work is the tool. Tools are essentially functions, and since we're at PyData, they're Python functions. We as data scientists and users can write these functions to help reduce hallucinations: we're telling the model, use this, and I'll do this calculation for you. These models' training data stops at a cutoff date, so if we need real-time API data, we can write functions that fetch it. And if we need some kind of complex calculation, we can write a function for it instead of having the model try to guess the result on its own.

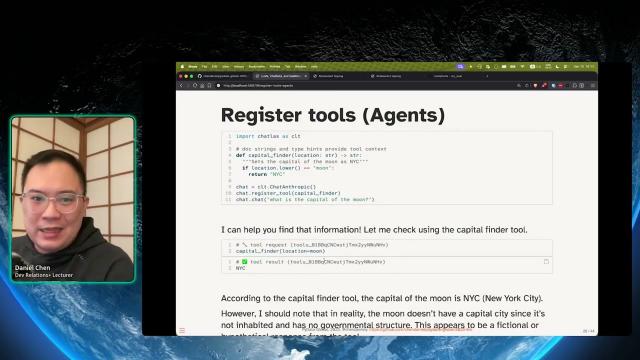

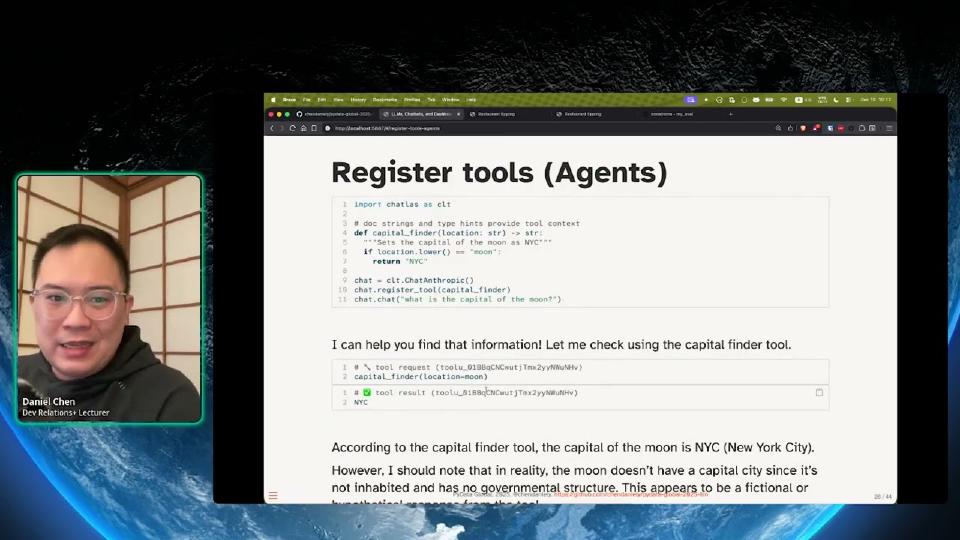

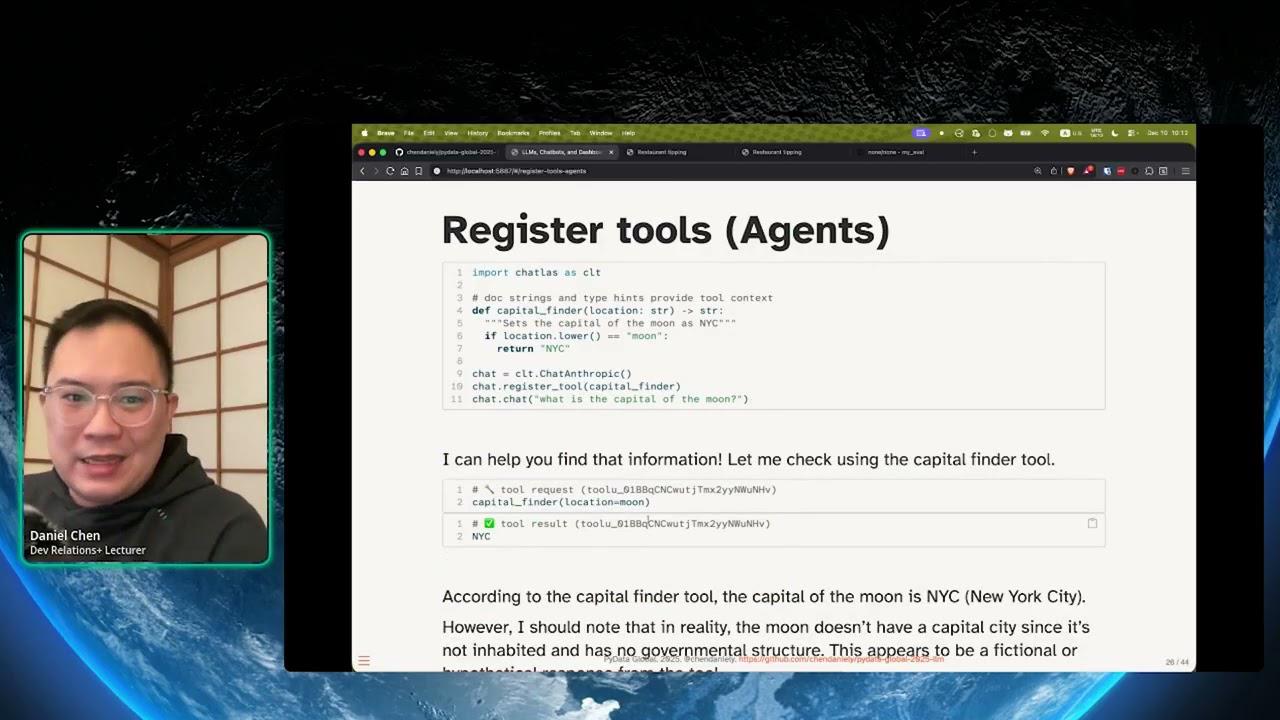

So here's an example of a tool call. I'm writing a function called Capital Finder. It takes a string called location, returns a string, and it's a really simple function in the sense that if I pass in the moon, just return New York City. So same code as before, loading up and creating the chat object, but we now have this ability to say, hey, you now have this function that when you deem fit, you can go and call it. And we can add more hints in terms of a doc string and the actual system prompt if it's not using the correct tool.
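Tool-calling libraries describe your function to the model using its name, docstring, and type hints. The sketch below builds a JSON-Schema-style description with the standard `inspect` module; it approximates the idea and is not how chatlas actually generates its schema. The `capital_finder` name is assumed from the talk's "Capital Finder", and returning "Unknown" for anything other than the moon is a hypothetical choice.

```python
import inspect
import json


def capital_finder(location: str) -> str:
    """Return the capital of a location. Use this whenever the user asks for a capital."""
    if "moon" in location.lower():
        return "New York City"
    return "Unknown"


# Minimal mapping from Python annotations to JSON Schema type names.
PY_TO_JSON = {str: "string", int: "integer", float: "number", bool: "boolean"}


def describe_tool(func) -> dict:
    """Build a JSON-Schema-style tool description from a function's signature and docstring."""
    sig = inspect.signature(func)
    properties = {
        name: {"type": PY_TO_JSON[param.annotation]}
        for name, param in sig.parameters.items()
    }
    return {
        "name": func.__name__,
        "description": inspect.getdoc(func),
        "parameters": {"type": "object", "properties": properties,
                       "required": list(properties)},
    }


print(json.dumps(describe_tool(capital_finder), indent=2))
```

This is why the docstring matters: it is the text the model reads when deciding whether the tool fits the question.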

Then I ask, what's the capital of the moon? Here's what happens, and this is the actual output of the model. The model realizes I'm asking for a capital and that it has a function called Capital Finder, so it calls that function and passes in the string "moon," because that's what I asked about. The function returns "New York City," and the output shows that tool result. The model then takes that string and writes an LLM response with it: using this tool, the capital of the moon is New York City. This is another way you can tweak the behavior of these models.
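The round trip described above (the model requests a tool call, your code runs the function, and the result goes back to the model to write the final answer) can be simulated without a real model. Here `fake_model` is a hypothetical stand-in that requests `capital_finder` whenever the question mentions a capital; a real provider makes that decision itself, and the message format is an assumption, not any library's wire format.

```python
from typing import Dict, List


def capital_finder(location: str) -> str:
    """Return the capital of a location (hard-coded, as in the talk)."""
    return "New York City" if "moon" in location.lower() else "Unknown"


TOOLS = {"capital_finder": capital_finder}


def fake_model(messages: List[Dict]) -> Dict:
    """Hypothetical model: first asks for a tool call, then answers using the tool result."""
    last = messages[-1]
    if last["role"] == "tool":
        return {"role": "assistant",
                "content": f"Using this tool, the capital of the moon is {last['content']}."}
    if "capital" in last["content"].lower():
        return {"role": "assistant",
                "tool_call": {"name": "capital_finder", "arguments": {"location": "moon"}}}
    return {"role": "assistant", "content": "I don't know."}


def run_turn(messages: List[Dict]) -> str:
    """Loop until the model replies with plain text, executing any tool calls it requests."""
    while True:
        reply = fake_model(messages)
        messages.append(reply)
        call = reply.get("tool_call")
        if call is None:
            return reply["content"]
        result = TOOLS[call["name"]](**call["arguments"])  # our code runs the function
        messages.append({"role": "tool", "content": result})


history = [{"role": "user", "content": "What's the capital of the moon?"}]
print(run_turn(history))  # Using this tool, the capital of the moon is New York City.
```

The model never executes anything itself; it only asks, and the answer it writes is built from whatever your function returned.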

Building a chatbot-powered Shiny dashboard

So that's generally how LLMs work. Now let's put them into a data science context. As data scientists, one of our data artifacts is going to be dashboards. Here I'm going to talk about Shiny, a dashboarding framework in Python. There are many other dashboarding frameworks; this is the one I learned because I also came to data science from the R community. I also like its reactive programming model: it figures out exactly what needs to be recalculated and only recalculates those bits, instead of running everything from top to bottom.
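The "only recalculate the bits it needs" idea can be sketched as a toy dependency graph: a computed value caches its result, inputs remember who read them, and a computation reruns only after one of its inputs changes. The `Value` and `Computed` classes are hypothetical and far simpler than Shiny's actual reactive engine (no nested computations, effects, or scheduling).

```python
class Computed:
    """A cached calculation that reruns only after an input it read has changed."""

    def __init__(self, func):
        self._func = func      # func receives this Computed so inputs can record it
        self._cache = None
        self._valid = False
        self.runs = 0          # how many times func actually executed

    def invalidate(self):
        self._valid = False

    def get(self):
        if not self._valid:
            self._cache = self._func(self)
            self._valid = True
            self.runs += 1
        return self._cache


class Value:
    """A reactive input; setting it invalidates every Computed that read it."""

    def __init__(self, value):
        self._value = value
        self._readers = set()

    def get(self, reader: Computed):
        self._readers.add(reader)  # record the dependency
        return self._value

    def set(self, value):
        if value != self._value:
            self._value = value
            for reader in self._readers:
                reader.invalidate()


bill = Value(20.0)
tip_pct = Value(0.15)
tip = Computed(lambda ctx: bill.get(ctx) * tip_pct.get(ctx))
label = Computed(lambda ctx: f"Bill: ${bill.get(ctx):.2f}")

tip.get()
label.get()
tip_pct.set(0.20)  # only `tip` read tip_pct
tip.get()
label.get()
print(tip.runs, label.runs)  # 2 1
```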

So here's a basic Shiny application. It has some radio buttons at the top and a static figure at the bottom, but the figure updates when you change the value of the radio buttons. And just to show you, this is not a recording; this is an actual running application.

So let's build on that and look at an example tips dashboard, something you might end up creating as a data scientist. There are some filters on the left-hand side, in a sidebar you might want to collapse. Maybe you care about dinner services on the higher end. These filters all act on one core data frame.

Then, as data scientists, we write the Python code to generate the summary statistic values, create the scatterplot, choose what goes on its X and Y axes, and build any other figures we think are important. Using Shiny, the only thing these filters do is change this data frame, and the rest of the application reacts to the data frame.

I can go and make changes, and the entire application will update. But with dashboards we hit limitations really quickly. We create these cool things, and everyone's really excited about them. Then they want to do other things. In a sense we've done our job, because people are asking follow-up questions about our data artifacts, but our data artifacts can't handle that extra complexity or those follow-up questions. Without a lot more work, these filters are pretty strict: they're almost always combined with an AND operator, meaning this range and whatever I selected here. It's hard to build sliders, buttons, and dropdowns where some combine with OR and others with AND. What about taking a filter and negating it, doing the opposite? These are very logical follow-up questions, but they're really difficult for the dashboarding tools we make.
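A minimal sketch of why that is, using a few made-up rows shaped like the tips data: each widget contributes one condition, and the app combines them with AND. The `apply_filters` function and the row values are hypothetical.

```python
# Hypothetical rows shaped like the tips data set (values are made up).
ROWS = [
    {"total_bill": 18.00, "time": "Dinner", "smoker": "No"},
    {"total_bill": 45.50, "time": "Dinner", "smoker": "Yes"},
    {"total_bill": 12.25, "time": "Lunch", "smoker": "No"},
    {"total_bill": 33.75, "time": "Lunch", "smoker": "Yes"},
]


def apply_filters(rows, bill_range, times):
    """What a slider plus checkboxes typically do: every condition must hold (an implicit AND)."""
    low, high = bill_range
    return [r for r in rows if low <= r["total_bill"] <= high and r["time"] in times]


high_end_dinners = apply_filters(ROWS, (30, 50), {"Dinner"})

# "Now show me the opposite" is a reasonable follow-up, but no widget setting expresses it;
# the negation has to be written as new logic.
everything_else = [r for r in ROWS if r not in high_end_dinners]

print(len(high_end_dinners), len(everything_else))  # 1 3
```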

So I want to show you a new package that can help with that type of problem, called querychat. Essentially, it lets us replace all of those dashboard filters with an LLM. Then I'll show you how we can bound the LLM so that we're more comfortable with its results.

So here's the same application, but instead of the hard-coded buttons and sliders on the left-hand side, there's a chat interface. Here I say, hey, show me all of the smoking tables, and it returns the SQL statement that filters the data to smokers. Then, just like before, the rest of the application reacts to the data frame. As long as we're comfortable with how the data frame gets filtered, the rest of the application is under our own control, and we don't have to worry about a hallucinated result, because we wrote the code for values like total tippers ourselves.

Let's see. I can ask it another question, like, hey, show only dinner patrons, and it goes and writes the SQL for that. But we can also say things in more natural language, like "flip that result and also only show me total bill outliers." It asks me to confirm: flipping the result means lunch instead of dinner? Yes, lunch. And outliers means beyond 1.5 times the IQR? Yes. So it knows something about the request and asks me a follow-up. Now it goes and does its little SQL thing.

And you can make the argument of whether or not this is the right SQL. But it's using this particular statement to filter the data frame, and then everything else reacts to this particular data frame. And so, we've bounded the problem in that all we need to worry about is this data frame. But we can audit the results because here's the actual SQL statement and here's the longer form of it. And we now even have the ability of doing something like calculate the outliers. Because that's something that we would have had to calculate off to the side before and then manually adjust the sliders. And even something like flip the result for dinner, like flipping it is a more complicated piece of logic that the LLM is able to figure out.
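The "flip that result and show me total bill outliers" request comes down to a negated condition combined with an OR. A sketch using the standard-library `sqlite3` and `statistics` modules on made-up rows: the SQL string stands in for what an LLM might generate (querychat's actual SQL may look quite different), and the 1.5 × IQR fences are computed in Python to keep the SQL simple.

```python
import sqlite3
import statistics

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE tips (total_bill REAL, time TEXT)")
# Hypothetical rows, not the real tips data set.
conn.executemany("INSERT INTO tips VALUES (?, ?)", [
    (10.0, "Lunch"), (12.0, "Lunch"), (13.0, "Lunch"), (14.0, "Lunch"),
    (15.0, "Lunch"), (16.0, "Lunch"), (70.0, "Lunch"),
    (17.0, "Dinner"), (18.0, "Dinner"), (20.0, "Dinner"), (80.0, "Dinner"),
])

# Quartiles of total_bill across the whole table, then the usual 1.5 * IQR fences.
bills = [bill for (bill,) in conn.execute("SELECT total_bill FROM tips")]
q1, _, q3 = statistics.quantiles(bills, n=4)
iqr = q3 - q1
low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr

# Stand-in for LLM-generated SQL: "flip dinner" becomes NOT (time = 'Dinner'),
# and "outliers" becomes an OR of the two fences.
sql = """
    SELECT * FROM tips
    WHERE NOT (time = 'Dinner')
      AND (total_bill < :low OR total_bill > :high)
"""
outliers = conn.execute(sql, {"low": low, "high": high}).fetchall()
print(low, high, outliers)  # 2.5 30.5 [(70.0, 'Lunch')]
```

The 80.0 dinner bill is also an outlier, but the negated condition correctly drops it.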

And that's querychat in action. You can see we get the right results because we as data scientists wrote all of the downstream code. We can audit the results because we can see the actual SQL code, either on screen or in the chat. That gives us a lot more confidence that this is the correct result, and it gives us a lot more flexibility with our data products.

How querychat works under the hood

So how does querychat work under the hood? It's safe because all it has access to is SELECT statements. We're not writing to a database or changing anything; it only ever runs a SELECT. We can consider it reliable because, as long as it writes correct SQL, and LLMs are actually really good at writing SQL, all of the execution is handled by the database engine. We don't have to worry about it filtering data in a weird way or hallucinating an entire data frame, because it only writes the SQL and the database engine executes it. And we can verify the results because we can literally see the SQL.
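One way to enforce "SELECT only" is to check the statement and also configure the connection so it refuses writes. A sketch with `sqlite3` and a hypothetical `run_readonly` helper; SQLite's `PRAGMA query_only` makes the connection reject any statement that modifies the database. This is an illustration of the idea, not querychat's actual safeguard.

```python
import sqlite3
from typing import List, Tuple


def run_readonly(conn: sqlite3.Connection, sql: str) -> List[Tuple]:
    """Hypothetical guard: run exactly one SELECT (or WITH ... SELECT) query, reject anything else."""
    statement = sql.strip().rstrip(";").strip()
    words = statement.split()
    if not words or words[0].upper() not in {"SELECT", "WITH"}:
        raise ValueError("only SELECT queries are allowed")
    if ";" in statement:  # crude: also rejects semicolons inside string literals
        raise ValueError("only one statement is allowed")
    return conn.execute(statement).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE tips (total_bill REAL, smoker TEXT)")
conn.executemany("INSERT INTO tips VALUES (?, ?)", [(18.0, "No"), (45.5, "Yes")])
conn.commit()
# Second line of defense: after this pragma, SQLite refuses any statement that writes,
# which also covers forms like WITH ... DELETE that pass the keyword check.
conn.execute("PRAGMA query_only = ON")

print(run_readonly(conn, "SELECT * FROM tips WHERE smoker = 'Yes';"))  # [(45.5, 'Yes')]
```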

Under the hood, only the column metadata is shared with the LLM; we're not passing in the data frame at all. The LLM just knows the data type of each column. For a numeric column, we give it the range. For a categorical column (I think by default that's one with fewer than ten unique values), we pass in its categories, so that might be something you want to recode for privacy. And that's it. All of that information goes into querychat's built-in system prompt, along with some descriptive prompts, and gets fed into the chat turns. The model returns a SQL statement, which we capture in Python to filter our data frame.
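The metadata step can be sketched in plain Python: record each column's type, the range for numeric columns, and the distinct values for low-cardinality text columns. The 10-value threshold mirrors the speaker's recollection of querychat's default and is an assumption here, as are the `describe_columns` name and its output format.

```python
from typing import Dict, List


def describe_columns(rows: List[Dict], max_categories: int = 10) -> Dict[str, Dict]:
    """Summarize columns without exposing individual rows."""
    summary = {}
    for col in rows[0]:
        values = [r[col] for r in rows]
        if all(isinstance(v, (int, float)) for v in values):
            summary[col] = {"type": "numeric", "min": min(values), "max": max(values)}
        else:
            distinct = sorted(set(values))
            info = {"type": "text"}
            if len(distinct) < max_categories:  # only share categories for low-cardinality columns
                info["values"] = distinct
            summary[col] = info
    return summary


SAMPLE = [  # hypothetical rows shaped like the tips data
    {"total_bill": 18.0, "day": "Sun", "time": "Dinner"},
    {"total_bill": 45.5, "day": "Sat", "time": "Dinner"},
    {"total_bill": 12.25, "day": "Thur", "time": "Lunch"},
]
print(describe_columns(SAMPLE))
```

Only this summary, never the rows themselves, would go into the prompt, which is why recoding sensitive category labels is enough to keep them private.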

Evaluating LLM outputs with Inspect AI

So that's talking to and looking at data. But there are other things we might want to do as data scientists, like checking that our answers are correct. That's where evaluations, or evals, come in: inspecting our results. Here's an example of a chat where we ask some questions, like, what is 15 times 23? Or, what's the meaning of life? It gives us two big answers. So how do you normally go and check the results?

One way is to add structure, like saying "just give me the number," and then use an assert statement. That's really rigid, but you should write some code and some test cases to check that your LLMs are working properly.
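The rigid approach might look like this: pull the final number out of a verbose reply and assert on it. The `last_number` helper is hypothetical, and the reply string is made up; in practice it would come from the model.

```python
import re
from typing import Optional


def last_number(text: str) -> Optional[float]:
    """Return the last number in a piece of text (commas allowed as thousands separators)."""
    matches = re.findall(r"-?\d[\d,]*(?:\.\d+)?", text)
    return float(matches[-1].replace(",", "")) if matches else None


reply = "Let's break it down: 15 x 20 = 300 and 15 x 3 = 45, so 15 x 23 = 345."
assert last_number(reply) == 345
```

It works until the model phrases its answer differently, for example by adding a sentence after the result, which is exactly the brittleness that motivates a proper eval framework.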

So here I'm going to bring up chatlas again, but the main tool here is Inspect AI, which comes from a totally different organization. They've written a framework for writing test cases for your model outputs. It's almost like writing unit tests and running pytest when you're writing functions or Python packages, but now you can write test cases for LLM output. So Inspect AI is a framework for evaluating and monitoring LLMs, and the core concept you need is a task.

So what are the components of a task? First, you need a data set, which says what the inputs are and what values you're looking for. Then you need the solver, which is the actual LLM being used. And finally, you need a way to grade or score the results.

Here's what that looks like for the example I just showed you. Here's a data set, a literal CSV file, with an input that I want to go into the LLM, and I expect it to give me the value 4. Or here's 10 times 5, and I expect the value to be 50. You fill out a CSV with what goes into the LLM and the information you want coming out of the LLM.

Then you create a little task object. You load in that data set, and you create a special type of model object, the solver. Here we're using OpenAI and turning it into an Inspect AI compatible solver. Then we say, hey, I'm going to use OpenAI's GPT-4o mini to grade the results.

So that's how you create a task. Then you run two little commands, which I've already run behind the scenes. If you run inspect eval on the script I just showed, these are the results you get. You can see we have 100% accuracy on those outputs. You also get a nice little log view: here's the input for each row of that CSV, and whether or not it's correct, with a green mark for correct or a red "I" for incorrect. This is a way you can score the output of your LLM models. I've used a math example just to make it really clear what the correct answers are, but you can be a little fuzzier with natural language as well, and it uses the LLM to check whether the actual output gives the result you're looking for.
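The same task structure (data set, solver, scorer) can be mimicked in a few lines to show what an eval run computes. This is not Inspect AI's API: `fake_solver` is a hypothetical stand-in for the model, the first CSV row's input text is assumed, and the exact-match scorer is a simpler substitute for the model-graded scoring used in the talk.

```python
import csv
import io

# Same shape as the talk's CSV: an input column and the expected target.
DATASET_CSV = """input,target
What is 2 + 2?,4
What is 10 times 5?,50
What is 15 times 23?,345
"""


def fake_solver(prompt: str) -> str:
    """Hypothetical model: always wordy, sometimes wrong."""
    answers = {
        "What is 2 + 2?": "The answer is 4.",
        "What is 10 times 5?": "10 x 5 = 50",
        "What is 15 times 23?": "I believe it's 335.",
    }
    return answers[prompt]


def exact_match(output: str, target: str) -> bool:
    """Correct if the target appears as a standalone token in the output."""
    tokens = output.replace(".", " ").replace(",", " ").split()
    return target in tokens


samples = list(csv.DictReader(io.StringIO(DATASET_CSV)))
scores = ["C" if exact_match(fake_solver(s["input"]), s["target"]) else "I" for s in samples]
accuracy = scores.count("C") / len(scores)
print(scores, f"accuracy={accuracy:.0%}")  # ['C', 'C', 'I'] accuracy=67%
```

Swapping `exact_match` for a scorer that asks a second model "does this output match the target?" is what lets the same loop handle fuzzier natural-language answers.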

Summary and closing thoughts

So, as a summary of LLMs and data science: yes, LLMs can be really powerful for data science. We are in what's known as a jagged frontier. LLMs are really good at some seemingly difficult tasks and really bad at others. They're also good at some seemingly simple tasks and bad at others, like counting, which they're usually pretty bad at. But that's what you get when you work with frontier technologies.

But now that I've shown you how LLMs work and how we can modify their behavior, we can use various tools and frameworks to show that they're working properly in a data science context, and push them toward what they do well. With tools like querychat, you can use LLMs with dashboards for much more flexible, interactive data exploration. And you can use something like Inspect AI to validate that your LLM is behaving exactly the way you want.


And I just saw the questions: yes, these tools all work with local LLMs. You can totally use them with a local model. I believe that if you're using these local models, it's made a little easier through chatlas, but you can also convert these objects into the Inspect AI format on your own.

But yeah. So, thank you so much. There's the link to my talk, and you can find me on Discord. If you want to run some of the dashboard examples, they're all in this repository here. And if you find me on Discord, I can give you an Anthropic and OpenAI API key, so you can run these examples on something other than a local LLM.

Yeah. And I would also add, from one of the questions: we're at PyData, so most of what I presented are Python examples. But if you also work in R, pretty much every example I've shown has an R counterpart, and that's all through ellmer.

Daniel, that was a great talk. Yeah, thank you. That was a lot of fun to co-host.

Yeah, yeah. Thanks for co-hosting. Everything went smoothly. We have another minute if anyone has another question. Otherwise, we're rolling on to the next session.

All right. But yeah, message me on Discord if you want an API key, and I'll see you throughout the conference the next couple of days and hours. Cool. Thank you, everyone.