From messy to meaningful data: LLM-powered classification in R (Dylan Pieper) | posit::conf(2025)

Speaker(s): Dylan Pieper

Abstract: Transform messy, unstructured data into meaningful, structured data using ellmer, Posit's R package for interacting with large language models (LLMs). LLMs can quickly convert thousands of texts, PDFs, and images into the data you need for your analysis. This talk demonstrates the practical benefits and addresses the common pitfalls of using LLMs through three examples: classifying images of Iris flowers and text descriptions of disease symptoms and crimes. Learn structured outputs, prompting strategies, model accuracy and confidence measurements, and validation techniques that blend generative AI with traditional ML concepts. Perfect for both AI-curious beginners and experienced data scientists.

Materials: https://github.com/dylanpieper/posit-25

Transcript

This transcript was generated automatically and may contain errors.

Hi, my name is Dylan Pieper. Thanks for joining my virtual conf session. Today I'm going to be guiding you through how to use LLMs to classify data in R.

Sometimes as a data scientist, I wish I had a magic wand that could easily transform messy, unstructured data, which could be in open text fields, PDF documents, or even the contents of an image, into some meaningful data object or data frame so I can get on with my analysis or work. If you buy into the hype of LLMs, that's what they offer: an accessible, low-cost way to process thousands of texts, documents, and images. And one model can pretty much do any task you ask of it.

So, is this hype worth it? Can we do it in R? These are some of the questions I've had, and I've really explored the most robust way to do it.

So, what I'll cover today is using the tidyverse package ellmer to do robust data classification, specifically focusing on the data structure that we get as a result, how to prompt the model to get the best data, how to evaluate the accuracy and the confidence of the model's predictions, and ultimately how to validate its responses.

Traditional machine learning concepts

So, first let's take a step back to understand some traditional machine learning concepts. First is extraction. We want to extract the distinct features of an object. In the traditional Iris dataset, there are morphological characteristics, such as the length and width of the flower petals, that we'd use to predict the class or the species of flower. So, when we predict the species of flower, we would get probabilities, right? Probabilities of which species it should be out of the possible options.

So, for all of these, I'm like 95% positive that they're the correct flower because I got these images from Wikipedia and I pretty much trust that Wikipedia has it right, but I'll leave some room for uncertainty. They could have uploaded the wrong picture or something.

Classifying iris flowers with ellmer

So, that's what it looks like traditionally. Now, how do we do this in R? First, ellmer starts with a chat object, and I won't show how to set up the API key or choose a model. You can learn how to do that in the GitHub repo for this talk, but you need to set up the chat. And then the most important thing is you need to define the type object, which consists of different properties. Here, the genus, the species, and the features I want, and I'm just asking the LLM for string data. And without any further prompting, other than that it should focus on the morphology, it provides the structured data after I use chat_structured() and provide the path to the file and the type object. You get this list of the predictions, which is correct: it's an Iris versicolor, along with some of the features that it extracted.
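A minimal sketch of that setup with ellmer, not the exact code from the slides. The helper name describe_iris(), the file name iris.jpg, and the wording of the property descriptions and prompt are assumptions for illustration; the chat object is whatever you created by following the setup in the repo.

```r
library(ellmer)

# Type object with three string properties, as described in the talk.
# The description text is illustrative wording, not the speaker's.
type_iris <- type_object(
  genus = type_string("Genus of the flower in the image"),
  species = type_string("Species of the flower in the image"),
  features = type_string("Distinct morphological features visible in the image")
)

# Hypothetical helper: `chat` is an ellmer chat object and `path` is a
# local image file. Returns a named list matching `type_iris`.
describe_iris <- function(chat, path) {
  chat$chat_structured(
    "Classify this flower. Focus on its morphology.",
    content_image_file(path),
    type = type_iris
  )
}

# Usage (needs an API key, so it is not run here):
# chat <- chat_openai()
# str(describe_iris(chat, "iris.jpg"))
```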

Now, this isn't quite the traditional machine learning probability structure that I showed you. So let's see how it does that. We're going to define a slightly more complicated type object using type_enum(), for enumerated, so it can choose between these three different categories of species, and type_number(), so it can provide a probability that the iris is that species. And we can see that it correctly guesses this iris flower again, and it has a pretty high probability prediction.
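A type object along those lines might look like the sketch below. I'm assuming the three categories are the species from the classic Iris dataset (setosa, versicolor, virginica) and that the probability is requested on a 0 to 1 scale; neither detail is spelled out in the transcript.

```r
library(ellmer)

# Enumerated species plus a numeric probability for the chosen species.
# Category names and the 0-1 scale are assumptions for illustration.
type_iris_prob <- type_object(
  species = type_enum(
    values = c("setosa", "versicolor", "virginica"),
    description = "Predicted Iris species"
  ),
  probability = type_number(
    "Probability, between 0 and 1, that the flower is the predicted species"
  )
)

# Usage (needs an API key, so it is not run here):
# chat <- chat_openai()
# chat$chat_structured(content_image_file("iris.jpg"), type = type_iris_prob)
```

Because the enum restricts the answer to a fixed set of labels, the result can be compared directly against known classes without cleaning up free text.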

Now, can we extend this to different species of iris flower? Sadly, the answer is no. It actually predicted all three iris flowers as versicolor, so only one of them was correct. Now, this may be unfortunate if you're trying to classify iris flowers, but we're not necessarily. We're just understanding the limits of the LLM. So here we take a step back and ask it to do something a little simpler, which is to classify a flower as an iris or a rose. Here we give it the options and provide it with the two images, which I should mention I didn't in the previous slide. This function, parallel_chat_structured(), allows you to provide a list of prompts to process several at the same time and get a data frame. So here it processes both of these images and makes the correct classification.
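A rough sketch of that parallel step. The helper classify_flowers(), the prompt wording, and the file names are hypothetical, and I'm assuming each prompt can be a list that mixes text with image content; check the talk's repo for the version actually used.

```r
library(ellmer)

# Two-way choice: iris or rose.
type_flower <- type_object(
  flower = type_enum(
    values = c("iris", "rose"),
    description = "Kind of flower shown in the image"
  )
)

# Hypothetical helper: builds one prompt per image and sends them together.
# With the default conversion, the result is a data frame with one row
# per prompt.
classify_flowers <- function(chat, paths) {
  prompts <- lapply(paths, function(path) {
    list("Is this flower an iris or a rose?", content_image_file(path))
  })
  parallel_chat_structured(chat, prompts, type = type_flower)
}

# Usage (needs an API key, so it is not run here):
# chat <- chat_openai()
# classify_flowers(chat, c("iris.jpg", "rose.jpg"))
```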

Prompting strategy

So now we kind of know the limitations. And this is what I think is really important. As you can see here, I didn't use much prompting. I think less is more. You should focus on a clear data structure and the limitations of your task. Some of this is backed up by a recent evaluation Simon did for his blog, and he was creating an application to use LLMs to create tidy model predictions. And he found that providing it with paragraphs and paragraphs of context actually hurt performance.

So I think you should only add context when it's really needed, such as when you want the model to handle edge cases or focus on something particular. Like if there are multiple categories that fit, you should tell it how to prioritize which category to pick in that scenario. Or, for example, when I had it extract the features, I told it what to focus on. So with things like that, you can sort of guide it, but you don't want to provide too much context.

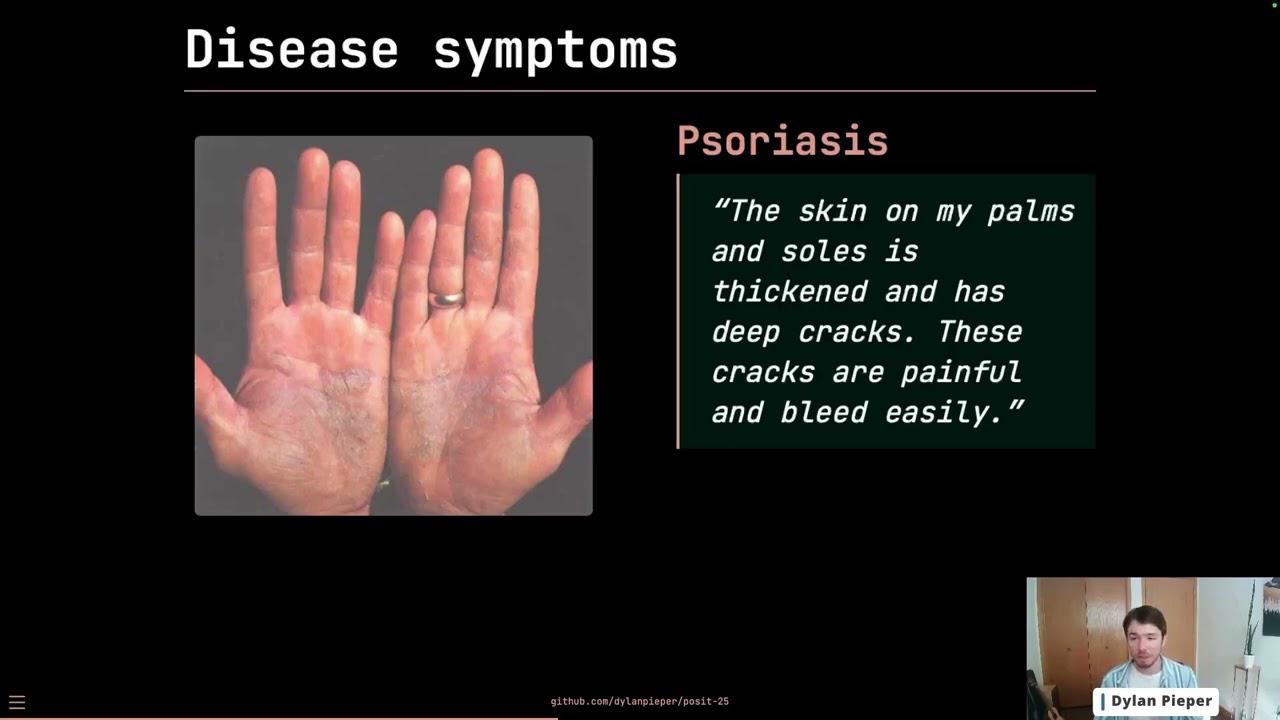

Disease symptom classification

So let's focus on a real-world example with a lot more data. Here we're going to look at disease symptoms and try to diagnose the disease based on the text description, like you see here in the example of psoriasis. We'll read this data set, which is free and open source from Kaggle. It has the correct classification for every description, so we can compare the accuracy. Then we define the type. We ask for one diagnosis out of the 24 possible, and we also ask how certain the model is of its response, which could be an interesting signal of the model's ability to predict. So we ask it to err on the side of caution and provide a score of uncertainty.

So to get the data, we run it through parallel_chat_structured() again, this time changing to the OpenAI GPT-5 model. I pass it the prompts, all of the text descriptions, as a list, and I ask it to return the cost as well. Then I look at a few data points: the accuracy compared to the true label, the confidence derived from that uncertainty score, and the cost.
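The type definition and the parallel call could be sketched roughly as follows. The disease vector below lists only the conditions named in this talk as a placeholder for the full set of 24, the 0 to 1 uncertainty scale and the helper name diagnose() are assumptions, and include_cost is an argument I believe is available in recent ellmer versions.

```r
library(ellmer)

# Placeholder labels: the real data set has 24 diseases.
diseases <- c("psoriasis", "pneumonia", "jaundice",
              "malaria", "bronchitis", "asthma")

type_diagnosis <- type_object(
  diagnosis = type_enum(
    values = diseases,
    description = "Most likely disease given the symptom description"
  ),
  uncertainty = type_number(paste(
    "Uncertainty in the diagnosis from 0 (certain) to 1 (very uncertain).",
    "Err on the side of caution."
  ))
)

# Hypothetical helper: one prompt per text description, with the cost
# of each request returned alongside the structured data.
diagnose <- function(chat, descriptions) {
  parallel_chat_structured(
    chat,
    as.list(descriptions),
    type = type_diagnosis,
    include_cost = TRUE
  )
}

# Usage (needs an API key, so it is not run here):
# chat <- chat_openai(model = "gpt-5")
# results <- diagnose(chat, symptoms$text)
```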

So the accuracy was actually unfortunate. It was very low: 62% compared to the true values. Compare this to a traditional machine learning model that I ran in tidymodels, which got 93%, and we're doing pretty poorly. We also got a low confidence score, which is actually better news, because the model is telling us, hey, I'm not doing well at this task. So the confidence that we asked for was more or less a true signal of its performance. The cost was fairly low, only 95 cents.

And you can see that it actually wasn't bad at every kind of disease. Diseases such as pneumonia and jaundice it predicted with 100% accuracy. But on others, such as malaria, bronchitis, and asthma, it did very, very poorly. So it could work in some situations, but really we'd want a better model that could handle these more difficult scenarios as well.
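The overall and per-disease numbers can be computed along these lines in base R. The data frame here is made-up toy data standing in for the LLM output joined to the true labels, so the values are not the talk's results, and treating confidence as 1 minus uncertainty is an assumption.

```r
# Toy stand-in for the LLM results joined to the true labels.
results <- data.frame(
  truth = c("pneumonia", "pneumonia", "jaundice",
            "malaria", "asthma", "asthma"),
  diagnosis = c("pneumonia", "pneumonia", "jaundice",
                "jaundice", "bronchitis", "asthma"),
  uncertainty = c(0.1, 0.2, 0.1, 0.6, 0.7, 0.3)
)

# A prediction is correct when it matches the true label.
results$correct <- results$diagnosis == results$truth

# Overall accuracy and mean confidence (assumed to be 1 - uncertainty).
accuracy <- mean(results$correct)
confidence <- mean(1 - results$uncertainty)

# Accuracy broken down by true disease.
accuracy_by_disease <- tapply(results$correct, results$truth, mean)

print(accuracy)
print(confidence)
print(accuracy_by_disease)
```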

Now, again, going back to that uncertainty score that we asked for, the confidence of the LLM actually correlates with the accuracy of its predictions. So when the LLM is very confident in its predictions, it also has more correct responses.
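One simple way to check that relationship is to correlate confidence with correctness and to compare accuracy across confidence bins. This is a sketch on made-up toy values, not the talk's data, and the bin cutoffs (0.5 and 0.75) are arbitrary assumptions.

```r
# Toy confidence scores and whether each prediction was correct.
confidence <- c(0.95, 0.90, 0.85, 0.80, 0.60, 0.55, 0.40, 0.30)
correct <- c(TRUE, TRUE, TRUE, FALSE, TRUE, FALSE, FALSE, FALSE)

# Correlation between confidence and correctness (coded 0/1).
confidence_cor <- cor(confidence, as.numeric(correct))

# Accuracy within low, medium, and high confidence bins.
bin <- cut(confidence,
           breaks = c(0, 0.5, 0.75, 1),
           labels = c("low", "medium", "high"))
accuracy_by_bin <- tapply(correct, bin, mean)

print(confidence_cor)
print(accuracy_by_bin)
```

If the score is a useful signal, accuracy should rise from the low bin to the high bin.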

Crime classification

So let's switch again to a new example, one that's a little different from the health diagnosis because we don't have the true classification. So we're working in this space where we won't ever have a true baseline to compare against. As part of some grant work that I've done at the University of Pittsburgh, we looked at descriptions of crimes from police reports. This example here, you can see, involves harassment.

And what we want to ask the LLM to do is tell us what type of crime it is, such as a violent crime, a traffic crime, etc. So this was the task that I asked the LLM to perform. I use this schema again, this time asking for a category of crime such as violent, property, drug, etc. And then I added some additional context because certain crimes could belong to multiple categories. Somebody could be driving under the influence, which is a DUI offense, but they could also have done something violent while under the influence. So I tell it that if a crime is violent and also another type, it should choose violent, but only if the intent seems to be to harm or injure, because that's really core to the definition of violence. Based on some previous piloting I did with this data, I also tell it that threats, harassment, stalking, etc. should all be considered violent, because it tended to misclassify those as public order. And then I ask again for a score of uncertainty.
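A schema with that extra context might be sketched like this. The category list is partial and assumed (only some categories are named in the talk), and the description wording paraphrases the rules above rather than quoting the original prompt.

```r
library(ellmer)

# Partial, assumed category list; the talk names only some of these.
crime_categories <- c("violent", "property", "drug",
                      "traffic", "public order", "other")

type_crime <- type_object(
  category = type_enum(
    values = crime_categories,
    description = paste(
      "Type of crime described.",
      "If the crime is violent and also fits another category,",
      "choose violent, but only if the intent seems to be to harm or injure.",
      "Threats, harassment, and stalking count as violent."
    )
  ),
  uncertainty = type_number(
    "Uncertainty in the category from 0 (certain) to 1 (very uncertain)"
  )
)

# Usage (needs an API key, so it is not run here):
# chat <- chat_openai()
# parallel_chat_structured(chat, as.list(crime_descriptions), type = type_crime)
```

Putting the tie-breaking rule in the property description keeps the added context short and attached to the field it affects.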

So I process all of the data again using the parallel_chat_structured() function. Like I said, there was no true baseline for this data. But one of the cool things I did was compare against an existing machine learning tool that's trained on hundreds of thousands of data points to classify crime descriptions into those categories. The LLM agreed with that model about 81% of the time. So we're hitting a lot better target, I think, in this case, even though both sources, the LLM and the traditional ML tool, could have errors. The LLM was also 81% certain. Again, the uncertainty score seems to be a fairly good signal of how well it's doing. And the cost was, again, very low, under a dollar.

So what's really cool is that when we have this data from the LLM and from another machine learning tool, we can see where they disagree and where they might be misclassifying. Here, in this example of animal cruelty, the LLM classified it as violent, which I believe to be true, whereas the traditional machine learning tool classified it as public order, which I don't think is necessarily the case.
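Measuring agreement and pulling out the disagreements takes only a few lines of base R. The labels below are made-up toy values, not the police report data, and the column names are assumptions.

```r
# Toy labels from the LLM and from the existing ML tool.
crimes <- data.frame(
  id = 1:6,
  llm = c("violent", "property", "violent", "drug", "traffic", "property"),
  ml = c("violent", "property", "public order", "drug", "traffic", "drug")
)

# Share of descriptions where both sources give the same category.
agreement <- mean(crimes$llm == crimes$ml)

# Rows where the two sources disagree, for closer inspection.
disagreements <- crimes[crimes$llm != crimes$ml, ]

# Cross-tabulation showing which categories get confused with which.
confusion <- table(llm = crimes$llm, ml = crimes$ml)

print(agreement)
print(disagreements)
print(confusion)
```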

Again, the certainty scores for all of these are very high, and that high certainty does not mean a correct classification. So sometimes it's a signal, but it's not a signal for everything, and this disagreement can also really help. Now, if we look at the certainty of the traditional machine learning model and the LLM, they don't correlate very well, and they're uncertain about different descriptions. So really, I think this speaks to the internal mechanisms of the models. They operate very differently and make decisions very differently.

LLMs and traditional ML in tandem

So I think we can use LLMs in tandem with traditional machine learning models, in both development and production, to help our workflows. For example, when developing a traditional machine learning model, we can use the LLM to classify data initially, have a human review and correct that data, and then use traditional machine learning algorithms to train a model, which is really nice because those models will typically be free to use. And in production, we can add a step at the beginning that uses our existing machine learning model to classify the data and then, like in the crimes example that I showed, also uses an LLM to classify the data. Then we compare them for disagreement and different levels of certainty, have a human review those responses and fill in the correct decision, and retrain the machine learning model so that it gets better.
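The comparison step in that production workflow could be sketched as a small rule that sends a row to human review when the two models disagree or when either one is unsure. The function name flag_for_review(), the column names, and the 0.7 certainty threshold are all assumptions for illustration, and the data is made up.

```r
# Hypothetical rule: review when labels differ or either certainty is low.
flag_for_review <- function(llm_label, ml_label,
                            llm_certainty, ml_certainty,
                            threshold = 0.7) {
  disagree <- llm_label != ml_label
  low_certainty <- llm_certainty < threshold | ml_certainty < threshold
  disagree | low_certainty
}

# Toy batch of classifications from both models.
batch <- data.frame(
  llm = c("violent", "property", "drug", "violent"),
  ml = c("violent", "property", "drug", "public order"),
  llm_certainty = c(0.95, 0.60, 0.90, 0.92),
  ml_certainty = c(0.90, 0.85, 0.88, 0.91)
)

batch$needs_review <- flag_for_review(
  batch$llm, batch$ml, batch$llm_certainty, batch$ml_certainty
)

# Rows a human should look at before the model is retrained.
review_queue <- batch[batch$needs_review, ]
print(review_queue)
```

Checking disagreement as well as certainty matters here because, as noted earlier, both models can be highly certain and still disagree.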

Caveats and limitations

So what's the catch? Frontier models are a black box. Pretty much everything that's happening inside them, the decision making, is obscured by billions of parameters, unknown training data sets, and secretive training and fine-tuning algorithms, with documentation for only very small pieces of this. Some of the frontier model companies are more transparent about this than others. But sometimes even they have to do research on their own models to understand what's going on inside. On the other hand, local open-source models tend to perform poorly, so it's best to be really careful with them or even avoid using them altogether.

Last, LLMs are not deterministic, so they're not always consistent or reliable. Here's an example. If we ask an LLM, in this case GPT-5, to tell us a joke, the first run gives us: why don't scientists trust atoms? Because they make up everything. And in the second run, the model provides a different joke: why did the scarecrow win an award? Because he was outstanding in his field. So basically, we provide the same input and get a different output. This tends to happen more when we give it a general prompt like this, and it becomes less frequent, I think, when we ask for structured data and provide a little more prompting around it. But it means that we won't always get the same answer. We really need to validate and look at our responses and understand that they could change even from one run to another.
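For classification, one way to see how much this matters is to run the same prompts several times and count how often the label stays the same. This is a sketch on made-up labels, assuming you have already collected three runs over the same four items; it is not something shown in the talk.

```r
# Toy labels for the same four items across three repeated runs.
runs <- data.frame(
  run1 = c("violent", "property", "drug", "traffic"),
  run2 = c("violent", "property", "drug", "public order"),
  run3 = c("violent", "property", "drug", "traffic")
)

# An item is consistent when every run returns the same label.
consistent <- apply(runs, 1, function(labels) length(unique(labels)) == 1)

# Share of items with a stable label, and which items changed.
consistency_rate <- mean(consistent)
unstable_items <- which(!consistent)

print(consistency_rate)
print(unstable_items)
```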

Key takeaways

So what do I think we can take away from this exploration of using LLMs to classify data? I think we can learn that they are very accessible. And I think this is where the hype is true in some ways: they make it so accessible to do unstructured data analysis, to get your feet wet, to explore. And that's, I think, a really cool thing about them.

We actually want to use minimal prompting that focuses on the data structure and the task boundaries or limitations. So when we start to break down the hype, that means we have to be really careful about what data we're asking for and how well the model is performing on the tasks we're asking it to do. A few ways we can do this: we can operationalize uncertainty scores for human review to evaluate the LLM output, and we can use existing machine learning tools to compare against the LLM's responses. And we can use LLMs in tandem with existing ML tools. I think really, they should be supporting them; they shouldn't replace traditional ML. But I think they can either be a good starting place or another tool to use in our existing methodologies and workflows.

So thank you for listening to my talk. It was such a pleasure to be able to do this for you. If you want to reach out to me over email or Bluesky, you can check out the repository for this talk, and I'll be happy to stick around to discuss LLMs.