Building the Future of Data Apps: LLMs Meet Shiny

GenAI in Pharma 2025 kicks off with Posit's Phil Bowsher and Garrick Aden-Buie sharing a technical overview of how LLMs can integrate with Shiny applications and much more!

Abstract: When we think of LLMs (large language models), usually what comes to mind are general purpose chatbots like ChatGPT or code assistants like GitHub Copilot. But as useful as ChatGPT and Copilot are, LLMs have so much more to offer, if you know how to code. In this demo Garrick will explain LLM APIs from zero, and have you building and deploying custom LLM-empowered data workflows and apps in no time.

Resources mentioned in the session:

* GitHub Repository for session: https://github.com/gadenbuie/genAI-2025-llms-meet-shiny
* {mcptools} - Model Context Protocol servers and clients https://posit-dev.github.io/mcptools/
* {vitals} - Large language model evaluation for R https://vitals.tidyverse.org/

image: thumbnail.jpg

Transcript

This transcript was generated automatically and may contain errors.

Right, welcome. Thank you so much. We're just going to give everyone a minute here to get on the bridge and we're going to kick things off with an awesome session today. I've got a few words that I'll mention and then we'll pass it over to Garrick for the session. Thank you so much for coming to the Gen AI Day by R/Pharma. My name is Phil. I help to run the non-profit with many people here that are behind the scenes helping to make today possible.

Open Source in Pharma is the group that helps make this event and other events a part of the R/Pharma open source community. Today, we've got a really exciting program and some very technical sessions for everybody to learn about some of the new tools. It's amazing that this event started out last year just as a couple people, Anna, Victoria, Harvey, Jared, Eric, myself. We were just chatting during one of the Hangouts and we said, why don't we get a few people together for Gen AI Day just to learn about how pharmas are actually using these tools.

We don't really want to learn too much about general applications in the industry, but actual technical details of how people are implementing Gen AI. And so last year, we hosted this single day event. We were expecting probably around 50 to 100 people coming. We almost had a thousand people register. Again this year, same boat. It's just amazing to see the community come out to learn about the intersection of pharma, open source, and Gen AI, and we're really excited to mix real applications by pharmaceutical companies with Gen AI, but also have some technical sessions.

So I'll introduce now Garrick Aden-Buie, who's here today, an engineer at Posit who works on the Shiny team and is doing some really amazing work with Shiny, LLMs, and Gen AI. He's going to take us through a technical deep dive today and give everybody a little bit of a behind-the-scenes, lift-up-the-hood look into the world of open source R and LLMs. So with that, I will pass it over to you, Garrick, and we'll kick off the session for today.

Awesome. Well, thanks, Phil. It's a pleasure to be here, and I'm really excited to be talking about LLMs, Generative AI, and Shiny, and all of that together, because those are basically all of my favorite things these days.

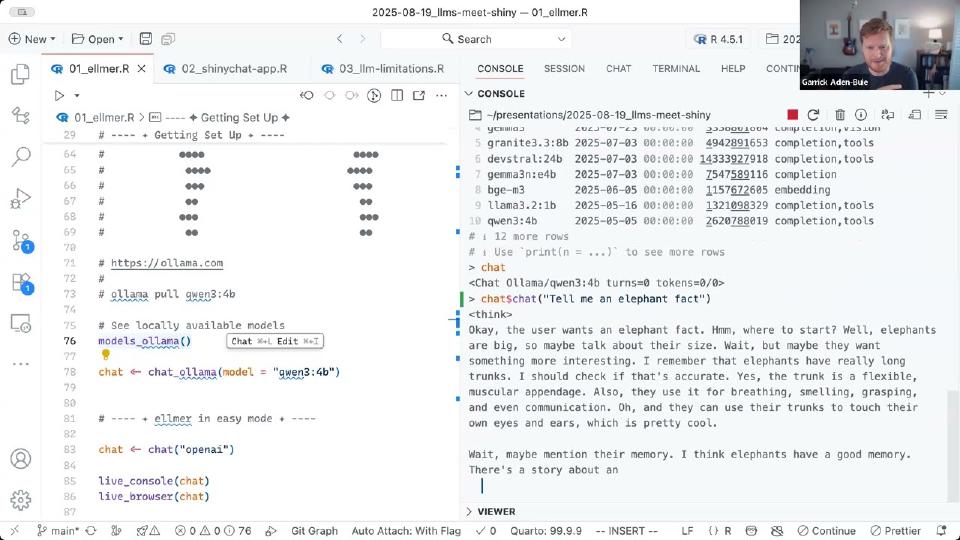

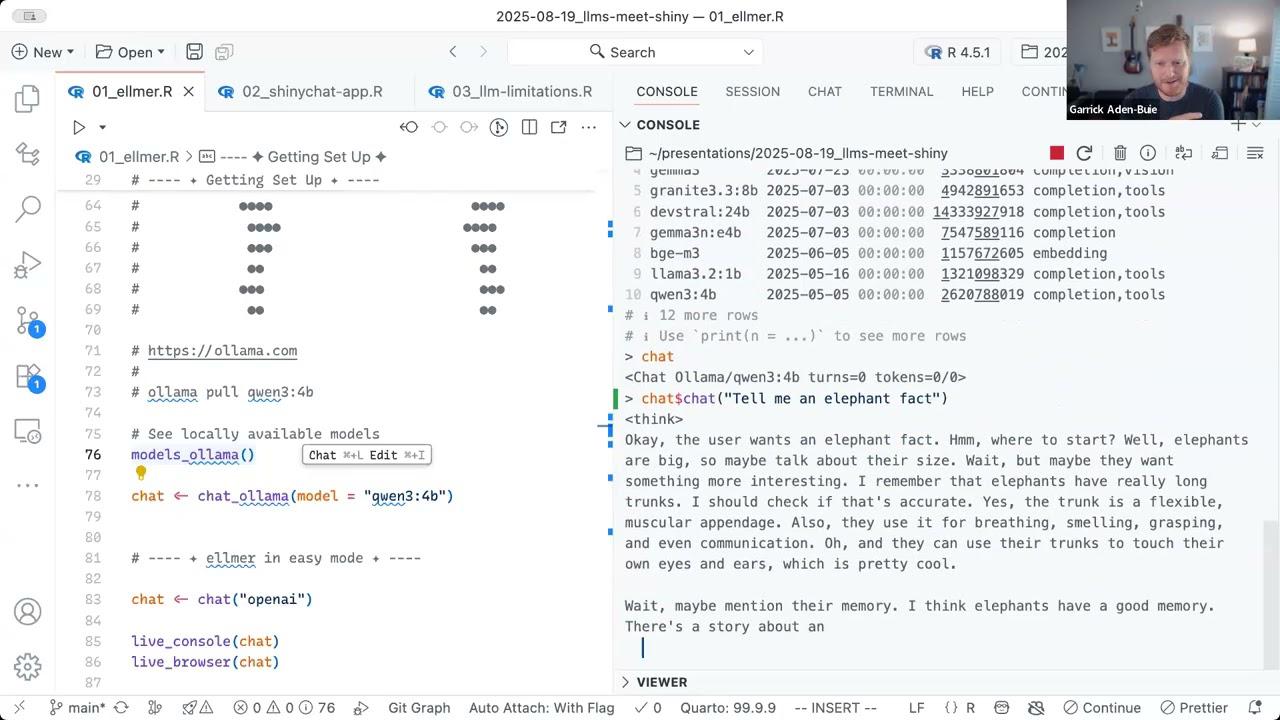

Getting started with ellmer

Okay, so you should be able to see my IDE. I'm using Positron. If you haven't seen Positron before, it looks kind of like this. I've zoomed it in quite a bit, but we have some files that we're going to be working through on the left-hand side. I have an editor over here. You should see a little elephant ASCII drawing, and I have an R console over here on the right. And yeah, so I'm going to do something which might not be the best advice to do in general. I'm going to do some live coding and live demonstrations, and to make it even more fun, I'm going to mix in some live demonstrations of AI. So we never know how these are going to go. Hopefully everything goes great, but we'll see.

So thank you for coming along the journey with me. I'm going to start by talking about just some real basics, like how do you use LLMs and generative AI in R? And the key here is a package called ellmer. When you load ellmer, it makes interacting with LLMs pretty easy overall.

ellmer has these chat functions, and you can just type `chat_`, and you can see all of the different AI providers that are available inside of ellmer. There are a ton. Each of these are places, basically companies, that give you API access to interact with an LLM. Many of these companies also train large language models and develop them, but some of these are places that just sort of run other people's models for you. Two of the biggest providers are OpenAI and Anthropic.

So I can create a chat instance for OpenAI, and ellmer comes with these great defaults that just make it super easy to get started. It automatically picks a reasonable model for you. So here it picked GPT-4.1 for me. And then to actually talk to the model, I'll call `chat$chat()`, and then I can ask it a question or say something to the model. And I'll just run this line, and I'll ask the model to tell me an elephant fact. And it says, sure, elephants are the only animals that can't jump. Okay, cool. Yeah, they're too big to get all four feet off the ground at the same time.

Okay, so I can just as easily pick an Anthropic model and talk to an Anthropic model here. I'm going to talk to Claude Sonnet 4, which is the default that ellmer picked for me. And I'll ask Claude for an elephant fact. And we can see, yes. Okay, so Claude picked: elephants have incredible memories and can recognize themselves in mirrors. They also remember other elephants they haven't seen for decades. So pretty cool.

All right. So we started the talk off by learning two pretty cool elephant facts. And you can see how easily, as an R programmer, you can switch between these models, right? When I talked to the model, I used the same method. The only part that changed here was which provider I'm connecting to. So ellmer makes it really easy to experiment, to try out, to, like, get used to how LLMs work. And it kind of makes the whole thing fun.

Okay, I want to show you something else about this chat object. It actually keeps the chat history going. So if I ask again, something else, let's see, what am I trying to ask: how long does an elephant remember? And I'll get another answer. This is still from Claude. And it says the exact duration isn't totally known, but potentially 60 to 70 years, general knowledge, something about 40, 50. Okay.

But here's what's kind of cool: if I just look at chat, you can see, first of all, it gives me a little header that shows me which model I've been talking to, how many turns have happened, how many back and forths I've had with the model, how many tokens I've used, and an estimate of how much it costs. In this case, it's only 106 tokens in and 247 tokens out. So this hasn't cost me much money at all. But you can see my first message was asking for an elephant fact, and then the follow-up messages are shown here. So you can use this to debug. And also, it's a really natural way to have a conversation with an LLM, natural in the sense of, as an R programmer, being used to running code in the console.
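To make the demo above concrete, here is a minimal sketch of what was typed in the console, assuming the ellmer package is installed and API keys for both providers are already configured. The default model each constructor picks depends on your ellmer version, and the answers will differ from run to run.

```r
library(ellmer)

# Each constructor reads its key from an environment variable
# (OPENAI_API_KEY, ANTHROPIC_API_KEY).
chat <- chat_openai()
chat$chat("Tell me an elephant fact.")

# Switching providers only changes the constructor; the method stays the same.
chat <- chat_anthropic()
chat$chat("Tell me an elephant fact.")

# The chat object is stateful, so a follow-up question keeps the context.
chat$chat("How long does an elephant remember?")

# Printing the object shows the model, the turns so far, and token usage.
chat
```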

Running models locally with Ollama

We're going to see another way that you can interact with LLMs through ellmer in just a second. So to get set up, okay, you cannot just run this code and have it work the first time you've installed ellmer. Unfortunately, you do need to give your credit card information to somebody like Anthropic or OpenAI. And what is cool about ellmer is that if you look at the help for these functions, like `chat_anthropic()`, you'll see in the description it will talk about how to get those API keys. So every chat function has a little bit of instruction about how you should get it set up.

So in my case, I went to these two links. I went to OpenAI, and I got an OpenAI API key, and then I went to Anthropic, and I got an API key. And there's a neat function in usethis called `edit_r_environ()`. You can run that, it opens up your `.Renviron` file, and you can put those API keys there, and then forget about them. And from then on, you can just call these chat functions and get started.
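As a rough sketch of that setup step, assuming the usethis package is installed: the environment variable names below are the ones ellmer's OpenAI and Anthropic chat functions look for, and the values are placeholders, not real keys.

```r
# Opens your user-level .Renviron file in the editor.
usethis::edit_r_environ()

# Add lines like these to the file (placeholders, not real keys),
# save it, and restart R so the variables are picked up:
# OPENAI_API_KEY=<your-openai-key>
# ANTHROPIC_API_KEY=<your-anthropic-key>

# After restarting, check that a key is visible without printing it.
nzchar(Sys.getenv("OPENAI_API_KEY"))
```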

Okay, so you might be saying like, hey, I don't actually really want to give my money to OpenAI or Anthropic yet, I just want to try out LLMs and see how they work and get a feeling for them. And what's really cool is you can do that too. There is a project called Ollama. If you go to ollama.com, you can install Ollama on your computer, you can do that really easily. It doesn't take long to get started. And then from there, you can install lots and lots of different models that you can run locally on your own computer.

And the command to do that is something like this, `ollama pull`, and then you give it a tag that identifies a specific model. And then it goes and it downloads the model for you. These models are something like, this is a 4 billion parameter model that I have listed here, it's Qwen3 at the size of 4 billion parameters. And I feel like that one was probably about three gigabytes to download, something like that. The bigger the model, the more there is to download. And roughly, you can think of the number of parameters, in billions, as roughly equivalent to how many gigabytes of RAM you're going to need to run the model. So this model takes about four gigabytes of RAM to run, which means it's kind of small, and it's snappy.

And I might even be able to run it right now in this session. So I'm going to start up a chat here. Okay, with, oops, with Qwen3. But you might be wondering, like, which models can I use? And how do I figure out which models I can use? And ellmer has some help for that, too. There's a `models_` prefix for the functions. And where ellmer supports listing models, you can see the associated providers here, right? So if you want to pick a specific model from Anthropic or OpenAI, you can use `models_anthropic()` or `models_openai()`.

By the way, GitHub has pretty great free access to LLMs as well. So if you want to try this out with just some models, I recommend using GitHub, actually. But you can see, if I use `models_ollama()`, it will show me, that's not a great view. So I'm going to do `as_tibble()`. It will show me a table of all of the models that I have installed locally. It also tells me how big they are, in terms of size; that's like, I think that's 13 gigabytes, basically. And you can find my Qwen3 here is about two gigabytes.

Okay, so let's make sure I'm talking to Ollama here, and I can say, tell me an elephant fact. And we'll see what the smaller model comes up with. And actually, I think we're gonna see something pretty interesting here. It turns out that Qwen3 is a thinking model. So it does this whole step of reasoning or thinking, which is basically like, it just writes a lot more words initially, as part of its thinking step. And you can see, it's writing about some facts that it knows about elephants, and then it is second guessing itself multiple times until eventually it might come up with an answer. It probably won't, though. Because sometimes it gets stuck in this loop of, but wait, I need to check. But wait, I need to check. Oh, we got one. Okay, so elephants have long muscular trunks. Okay. Yeah. Oh, it contains over 40,000 muscles, which gives the trunk incredible precision and strength. Cool. Okay, so now we learned something else about elephants. I mean, you might want to fact check this one, because this is a smaller model.
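A minimal sketch of the local workflow described above, assuming Ollama is installed and running on the default local port. The tag `qwen3:4b` is an assumption for the 4 billion parameter Qwen3 model mentioned in the talk; check the Ollama model library for the exact tag you want.

```r
library(ellmer)

# In a terminal first (not in R): ollama pull qwen3:4b

# List the models installed locally, including their size on disk.
tibble::as_tibble(models_ollama())

# Talk to the local model with the same interface as the hosted providers.
chat <- chat_ollama(model = "qwen3:4b")
chat$chat("Tell me an elephant fact.")
```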

System prompts and model parameters

Okay. So, alright, let's go back to OpenAI. ellmer recently introduced a new function called `chat()`. It's nice that you can use `chat_` to find all of the providers that are supported. But `chat()` is sort of like a single front door entrance to all the models. So you can give `chat()` "openai", or you can say "anthropic", or you could say "github". Oops. Not that right now. So we'll go with OpenAI. And if you want to pick a specific model, you can give a slash afterwards and say GPT. And maybe I want 4.1, but a smaller size, so I don't pay so much money while I do this demo. And I'll give it `gpt-4.1-nano`. So now I'm talking to OpenAI GPT-4.1 nano.
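As a sketch of that single entry point, assuming a recent ellmer release that includes `chat()` and a configured OpenAI key, the provider and model are combined into one string separated by a slash:

```r
library(ellmer)

# "provider/model": the part before the slash picks the provider,
# the part after it picks a specific model from that provider.
chat <- chat("openai/gpt-4.1-nano")
chat$chat("Tell me an elephant fact.")
```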

Okay. Typing `chat$chat()` and then giving your questions is a little bit annoying. So there are two things that you can do in ellmer that are pretty neat. The first one is you can have an actual conversation by running `live_console(chat)`. And it's kind of like the same interface you'd have with ChatGPT, except it's in your console. So you can say, tell me an elephant fact, and it will answer you. And then you can keep talking and have more conversations. You type Q to get out of that.

Okay. And if I go back and look at my chat, you can see I now have my message, and then the message from the LLM or assistant. And now I'm going to take that same chat, and I'm going to call `live_browser()`. And this launches a Shiny app. It's just a really simple Shiny app that uses shinychat and has a little chat interface. And it includes the messages that we've already had in this conversation. And yeah, again, it's going with memory and intelligence. Tell me another fact about how elephants hear.

Yeah. Cool. So it gave me another fact, and I can have that conversation. I'm going to do Control-C to get out of this. And I can go back and look at my chat object and see that it now has the two back and forths, or four turns, that I had with the model, and still about 200 tokens total and not enough money to count.
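The two interactive modes shown here can be sketched as follows, assuming ellmer and shinychat are installed and an OpenAI key is configured. Both calls block the R session until you quit, and both reuse whatever history the chat object already holds.

```r
library(ellmer)

chat <- chat_openai(model = "gpt-4.1-nano")

# A chat loop in the R console; type Q to leave it.
live_console(chat)

# The same conversation, continued in a small Shiny chat app in the browser.
live_browser(chat)

# Afterwards, the chat object holds every turn from both sessions.
chat
```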

Okay. So this is, like, I called this easy mode. When you're programming with LLMs in general, you are going to want to customize their behavior. First of all, you'll end up wanting to pick the right model for your task or the thing that you're working on. So, like we were just testing out and trying different models here, you'd want to do that same process to get a sense of which model you want. And some models will end up being better depending on the task that you're wanting to do, whether you're wanting to write code or do something that's more like document summarization. Depending on the thing that you're trying to work on, you'll pick a different model.

Right. So we've seen how you can pick models. So I have here `chat_openai()`, and I'm going to just give it the specific model I want. This time I'm saying GPT-5 nano. GPT-5 just came out two weeks ago. And it's a pretty decent model. So we'll try it and see how it works.

And I guess that's also one of the nice parts about using ellmer: you have access to the new models as soon as they're available in the API. So the same day that GPT-5 came out, we were testing it with ellmer and seeing how it works. Right. So you have another tool as a programmer, which is that you can set a system prompt. It's essentially like a message from the user, except it's from the developer, the person who is doing the programming with the LLM. It's shown to the model in the same way that a user message is, but it is not usually shown to an end user. So if someone is using your Shiny app, and you've given a system prompt, only the model will see the system prompt and the person using your Shiny app will not. But functionally, it's very similar to just a regular message that you send to the LLM. It is usually where you give the model some instructions about how you want it to behave in the conversation, essentially how you want it to talk and what you want it to do. So here, I'm going to give the model the instruction that it is a trickster who turns what the user says into a silly riddle.

The other nice part about using ellmer is that you have access to different parameters that are available through the API. So this is an API parameter that many models support. It's called temperature. You can kind of think of it like creativity, where a number closer to one means the model will pick more random words, and you end up getting an answer that feels more creative. And a value close to zero means the model will pick mostly the most likely words, and you tend to get something that's a little bit more dry. So given that this is a sort of creative task of turning things into riddles, I'm going to give it a temperature of one to dial up the creativity. And we'll see what happens.

So I want to clear my console really quickly. And you can see I'm now talking to GPT-5 nano. We haven't had any turns, but there is a system prompt that is telling the model that it's a trickster, right? So I'm going to give it just one word and it will make me a riddle. I hope so. One thing that I've noticed with GPT-5, the newer model, is that it takes a little while to have it actually stream tokens back to you. So usually there's a little bit of a delay with GPT-5 that I don't typically see in GPT-4.1. But here you go. Here's a silly riddle spun from your prompt. I'm gray and grand, the Savannah's own parade. And the answer obviously is an elephant.
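Here is a rough sketch of the riddle setup, assuming a recent ellmer release that provides `params()` and a configured OpenAI key. The system prompt wording is paraphrased from the talk, not copied from the session's code.

```r
library(ellmer)

chat <- chat_openai(
  model = "gpt-5-nano",
  # Instructions from the developer; end users of an app would not see this.
  system_prompt = "You are a trickster who turns whatever the user says into a silly riddle.",
  # Higher temperature means more random word choices.
  params = params(temperature = 1)
)

chat$chat("elephant")
```

For a less creative task such as writing code, the same pattern applies with a different system prompt and a lower temperature, on models that accept one.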

Okay, this is way more useful than just making riddles. This is very useful when you are trying to guide the conversation. As a programmer, one of the best ways that you can use a system prompt is to tell the model how you want it to behave, for example that it's an expert programmer and statistician and I want it to explain things clearly and concisely. Most importantly, though, I want it to use the latest features of R, including the pipe operator. I definitely prefer the tidyverse style instead of packages. And please write nicely formatted code, use a functional style, and prefer things like vectorization. So basically I can say, hey, look, this is how I want you to write code for me if I ask you to write code. And I can pick the model that I want. And I can dial down the creativity this time because this is not as much of a creative task.

Building a Shiny chat app

Okay, so let's see how we can use ellmer in a Shiny app and create a whole interface and experience around an LLM. So I have the Shiny extension installed. You can see if I go to my extensions, I have installed Shiny. And what's cool about that is it gives me a little snippet where I can just type shinyapp and hit tab and I get the snippet. And then I'm going to change from `page_fluid` to `page_fillable`. I like that better here. And cool. Now I have the skeleton of an app.

I'm going to use the shinychat package. So I'm going to add in shinychat here. And you can grab shinychat from our GitHub repo. It's at posit-dev/shinychat/pkg-r. And you can use pak to install that. And then we have a nice module for creating a chat interface. Basically you call `shinychat::chat_mod_ui()`, and you give it an ID. And we put that in our UI. And then in our server, we're going to do `chat_mod_server()`. Let me load shinychat, so I get good completions here.

And `chat_mod_server()` is going to take an ID and then we need a client object, so like the connection to a model through ellmer. So I'm going to come back up to my imports. And I'm going to `library(ellmer)`. And I'm going to create a client. And here I'll do `chat_openai()`. And let's stick with 4.1 nano because it streams a little faster. And yeah, so everything is connected now. So I have this ellmer chat client, and I have my UI and my server. And if I go up here to the run button, I can run my Shiny app. And we will see. Ideally. Yeah, here we go. We've got a little Shiny app. And I'll ask for an elephant fact.

Nice. Okay, so what is this? Let's see, there's one important thing about how I have set this up. You'll notice that I am creating the client to the LLM provider here inside of the server. And that means that every new Shiny session, every new app session, every new user that comes to look at this app gets their own fresh chat session. We saw before that ellmer's chat objects are stateful. So they record the history and the conversation that you've had with them before. So if I accidentally create the client outside of the server function, I'm basically using one client for everybody. And that means that everybody's conversations are going to get interleaved. And that would not be great. You probably don't want to do that. So make sure you create your client inside of your server function. And then I'm passing it to `chat_mod_server()`.
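Putting the pieces of this section together, a minimal sketch of the app might look like the following. It assumes shiny, bslib, ellmer, and a shinychat version that provides the `chat_mod_ui()` and `chat_mod_server()` module functions, plus a configured OpenAI key. The module ID `"chat"` is an arbitrary choice.

```r
library(shiny)
library(bslib)
library(ellmer)
library(shinychat)

ui <- page_fillable(
  chat_mod_ui("chat")
)

server <- function(input, output, session) {
  # Created inside server(), so every session gets its own client
  # and therefore its own private chat history.
  client <- chat_openai(model = "gpt-4.1-nano")
  chat_mod_server("chat", client)
}

shinyApp(ui, server)
```

Moving the `client <-` line above `server` would share one stateful chat object across all users, which is the interleaving problem described above.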

LLM limitations and tool calling

Neat. Okay, so it'd be really fun to do more things with LLMs. But first, I want to take a small detour to talk about some of the things that LLMs aren't great at. So I'm going to create another chat instance to GPT-4.1 nano. Let's make sure. Okay, I'm talking to 4.1 through OpenAI. And I'm going to ask it something like, hey, what has Posit been up to lately? And the model says something interesting. It says, as of October 2023, Posit, which was formerly known as RStudio, has continued... this is pretty bland. We've definitely been up to something more specific than just generally developing the tidyverse and related packages.

So I'll ask the model to read this URL and figure out what we're doing. And right away, the model says, hey, I can't do that for you. I'm unable to access external websites directly, including that blog link that you gave me. In fact, if I even ask it like, hey, what is today's date? Okay, apparently, today is April 27, 2024. So it's not 2024, first of all, and second of all, it's kind of disappointing that this model can't go read the website. The reason that this happens is because training is a very expensive operation. Models are trained on lots and lots of data, and then training only happens once every once in a while, basically prior to every new model release. So by themselves, an LLM, a large language model, basically just writes text. That's what it can do. It can write text, but it can't go out in the world and do things for you.

Right? It also isn't really a database, either. So I'm going to make a little chat app. And I'm going to ask the model to figure out all the zip codes in the US that start with one eight and have a population of more than 20,000. And what's interesting is the model does give me an answer. But the knowledge that the model stores is not like a database. A large language model is not going to pull from a knowledge store and pick out facts; it writes words that seem to be plausible and realistic. Right? So this is not going to be generally a good way of figuring out which zip codes start with one eight and have a population of more than 20,000. But what I can do is ask the model to write some R code, using the tidyverse and tidycensus, to find these zip codes. And yeah, so now we've shifted the problem into something that models are actually really good at. So I've just asked the model to write some R code for me.
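For a sense of what such generated code could look like, here is a hand-written sketch, not the model's actual output from the session. It assumes tidycensus, dplyr, and stringr are installed and a Census API key is configured. It uses ZIP Code Tabulation Areas (ZCTAs) as a stand-in for zip codes, and the survey year is an arbitrary choice.

```r
library(tidycensus)
library(dplyr)
library(stringr)

# Requires a Census API key, e.g. set once with census_api_key("<your-key>").
# B01003_001 is the ACS total population variable.
zcta_pop <- get_acs(
  geography = "zcta",
  variables = "B01003_001",
  year = 2022,
  survey = "acs5"
)

# Keep ZCTAs whose code starts with "18" and whose population exceeds 20,000.
zcta_pop |>
  filter(str_starts(GEOID, "18"), estimate > 20000) |>
  select(GEOID, estimate) |>
  arrange(desc(estimate))
```

The point is that the answer now comes from a data source that can be checked, rather than from plausible-sounding text.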

And even GPT-4.1 nano, a relatively small model, is going to be able to write some code that is going to get me pretty close to what I want. I think we're getting close to the end. Okay, so now it's going to explain all that to me. I'm going to try to just come up and grab this code here. And I'm going to create a new R file and paste in that code. And I'm going to take a look. I'm going to get rid of all this stuff, because I have a census API key ready. What else am I going to do? I'm going to start a new R console. This is one of the really cool things about Positron, that I can have multiple R consoles at the same time. So I have this one that's running my Shiny app. And now I'm going to go into a fresh R console and try some stuff. And we're going to YOLO this, we're just going to run some code. There's no tidyverse. That is disappointing, but I can fix it.

Okay. So, alright, let's go back to OpenAI. So tools, sometimes they're called functions, or tool calling or function calling. But the goal of a tool is that it is a way to bring real-time or up-to-date information to an LLM. So we've seen that they don't have access to real-time information or knowledge of what is going on in the world. So tools let you bring that information to a model. What else is really cool is that you can write tools that let the model go out and interact with the world too.

To understand how this works, I'm going to take one little step back. And we're going to imagine, you know, imagine you're talking to ChatGPT. So you send a message. You type in a message at ChatGPT. It looks a little bit like a text message. That message is sent to a server. The server then goes and talks to the LLM. The LLM gives an answer, writes more words. That response comes back through the server and makes its way all the way back to you where ChatGPT shows it to you in the UI. Cool. Except we're not writing ChatGPT.

We just saw that we can write R code and have shinychat be the thing that is replacing ChatGPT. So we're kind of here as the developer. We're writing some R code. We just saw how you can write a little bit of R code to connect ellmer to a chat provider and create a whole chat interface with it. And that's something that you can, like, deploy and give to your users, right?

So we're going to focus on this spot here, on the person writing the R code. And if you are a person who is writing R code, then you're writing R code on a server where you can also run R code. And if you can run R code, you can do things like write code that pulls in data. Or you could talk to a weather API and get up-to-date information from a weather API. Or you can write R code that sends emails. And you can do all of this in the same exact place where you are currently connecting to an LLM. So you're in charge, essentially, of writing these tools and then connecting them to the LLM. But the tools run in the same place that your R code runs.

Okay. To, like, walk through this, we're going to try this question. We're going to basically see, like, how do tools work through asking an LLM, is today a good day to go to the pool? So I'm going to, let's see, hop back. I need to run this app really quickly. You're not seeing it, but it is starting up in the background. And as soon as it does, I will be able to do this.

And here we go. I'm going to make it really big. Okay. So I have a little Shiny app. And I have told the model that I can look up the weather for the model. And if it wants to know the weather, it should write this special message, `get_weather` with the zip code. Right? Okay. So we're going to see what happens. Is today a good day to go to the pool? And I live in Woodstock, Georgia. And it writes back, I'm going to need the weather, please. It says get weather for this zip code, which happens to be the correct zip code for Woodstock, Georgia. So I can hop over to the National Weather Service and look for the forecast today, and I could just, like, copy this text here, go back to the chat, and give the model the answer. And it will say back, okay, looks like there's a 40% chance of showers after two. So maybe, yeah, maybe go earlier in the day if you can.

And this is essentially how tool calling works. The model itself is not going to actually call the tool. The model is going to send a special message back that says, I would like you to call the tool for me with these parameters. And then we run some code and send the answer back. And I say we, but really, ellmer is going to do this for us, which is awesome. Right? And there's also another small part of this: there's a process that explains how to use the tool to the model. So, you know, I explained it here in text, but there's a similar process that explains how and when to use the tool, and that gets the model to write a message that looks sort of like that.
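The hand-rolled version of this exchange can be sketched in plain R with no LLM involved. Everything here is hypothetical and for illustration only: the `get_weather(<zip>)` message format, the zip code, the forecast text, and the helper names are stand-ins, and real providers use a structured message format rather than a pattern in the reply text.

```r
# Stand-in for a real weather lookup; the forecast text is made up.
get_weather <- function(zip) {
  paste0("Forecast for ", zip, ": 40% chance of showers after 2pm.")
}

# If the model's reply contains a request like get_weather(30188),
# run the function ourselves and return the result; otherwise return NULL.
handle_tool_request <- function(reply) {
  pattern <- "get_weather\\(([0-9]{5})\\)"
  match <- regmatches(reply, regexec(pattern, reply))[[1]]
  if (length(match) == 0) {
    return(NULL)  # a plain answer, nothing for us to run
  }
  get_weather(match[2])
}

# The model asks for the tool; we run it and would send this text back.
model_reply <- "I'm going to need the weather, please. get_weather(30188)"
tool_result <- handle_tool_request(model_reply)
tool_result
```

The model only ever writes text. The code that receives the reply decides whether to run anything, which is the part a library can automate.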

Creating a tool with ellmer

Okay. So, hopping back to my slides, the process looks a little bit like this. We as the user will write, is it a good day to go to the pool? The LLM writes back, let's get the weather for that zip code. We have a function that we can write that goes to call the weather API, and we get the data back, and we send that back to the LLM, and then the model interprets that data and comes back and says, hey, yes, good day to go to the pool. Right? What is awesome about ellmer and chatlas is that this process of receiving the special message from the LLM and calling the right function and sending the data back to the LLM, ellmer and chatlas (chatlas is like the Python version of ellmer) do this for us. We don't have to do this at all. So, we just have to worry about the part that we're good at, which is writing functions that can talk to the weather API, and ellmer and the LLM will work it out and figure out how to connect the dots for us.

So, we're going to actually do this right now, very quickly. Let's see, should I stop that app? I think I should stop that app. Okay. And I'll start just a new console because that way I know I'm clear. Okay. So, this is a package that I really like called weathR, weather without the E and with a big R. And let's say I know that Woodstock, where I live, is at this latitude and longitude. And there's this function, `point_forecast()`, from weathR. If I look at the help page for it, you can see it takes latitude and longitude and it's going to give back a forecast.

Cool. So, let's do that now. So, Woodstock, latitude, and if I run, oops, I need to get rid of that help. And if I run that, you can see, let's make this a little bigger, I get back temperature, dew point, wind speed, humidity, and the forecast, basically. Which is really cool. Okay. So, to turn this into a tool, I'm going to load ellmer. And basically, there's this really neat function in ellmer called `create_tool_def()`. And I give it a function, and I'm going to run this. And you can see, I turned on verbose. So, you can see that ellmer gives the help documentation for this function to a model, and it writes a tool definition for us. So, I'll just copy and paste that in.
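A short sketch of these two steps, assuming ellmer and weathR are installed and an API key is configured, since `create_tool_def()` itself calls an LLM to draft the definition. The coordinates are approximate values for Woodstock, GA, chosen here for illustration.

```r
library(ellmer)

# Call the function directly first to see what it returns.
weathR::point_forecast(lat = 34.10, lon = -84.52)

# Ask a model to draft a tool() definition from the function's help page.
# The printed code is a starting point to copy, review, and edit.
create_tool_def(weathR::point_forecast, verbose = TRUE)
```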

Okay. And now, a tool is basically a wrapper around a function with a whole bunch of other stuff that describes the function to the model. Let's just change this to get forecast data for a specific latitude and longitude. I'm going to clean this up a little bit. Okay. I don't want the model to have to pick time zone or these other things. I'm going to delete them from the arguments, and I'm going to do something really neat. I'm going to make a little lat lon. I want the model just to focus on latitude and longitude. And now, get out of the way. Now, if I run that, I now have a tool definition. You can see it has a description, and we've described the arguments. We've told the model that I need a number for latitude and longitude. We've described the tool overall. And what's really neat is I can actually call this tool directly. So, I could give it some latitude and longitude, run that code, and I get my forecast.
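To make "a wrapper around a function plus a description for the model" concrete, here is a small sketch in plain Python. The `Tool` class, its field names, and `point_forecast_stub` are hypothetical and are not the ellmer or chatlas definitions; the forecast values are canned and the coordinates are illustrative, not the ones used in the talk.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict

@dataclass
class Tool:
    """A function bundled with the metadata a model needs to use it."""
    fun: Callable[..., Any]
    name: str
    description: str
    arguments: Dict[str, Dict[str, str]]

    def schema(self) -> dict:
        """What would be described to the model: everything but the function."""
        return {
            "name": self.name,
            "description": self.description,
            "parameters": {
                "type": "object",
                "properties": self.arguments,
                "required": list(self.arguments),
            },
        }

    def __call__(self, **kwargs: Any) -> Any:
        """Let the tool be called directly, like the function it wraps."""
        unknown = set(kwargs) - set(self.arguments)
        if unknown:
            raise TypeError(f"unexpected arguments: {sorted(unknown)}")
        return self.fun(**kwargs)

def point_forecast_stub(lat: float, lon: float) -> dict:
    """Placeholder for a real forecast lookup; returns canned values."""
    return {"lat": lat, "lon": lon, "temperature_f": 75, "forecast": "partly cloudy"}

# Only latitude and longitude are exposed; options such as time zone are
# deliberately left out so the model has fewer decisions to make.
get_weather = Tool(
    fun=point_forecast_stub,
    name="get_weather",
    description="Get forecast data for a specific latitude and longitude.",
    arguments={
        "lat": {"type": "number", "description": "Latitude in decimal degrees."},
        "lon": {"type": "number", "description": "Longitude in decimal degrees."},
    },
)

print(get_weather.schema()["parameters"]["required"])
print(get_weather(lat=34.0, lon=-84.5))
```

Trimming the argument list, as done in the demo, narrows what the model has to decide and makes a malformed call less likely.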

So, to connect this tool to an LLM, I'm going to create a chat object. Then I'm going to register the tool, get weather. And up here, I'm going to do one thing just to make this really explicit. I'm going to say echo = "output" so that I see every time the tool is called. Then I'm going to register the tool with the chat object. And now I can ask the model, is today a good day to go to the pool in Woodstock? And you'll see, basically, the model decides to call this function, and we get back an answer. For some reason, it decided to call it again. That's fine. It does that sometimes. And now, having looked at the weather twice, it says, this indicates that it's not ideal for swimming at the pool in Woodstock. Okay. So, we've decided it's not great.

I'm going to do this one more time. I'm going to create a new chat object. I'm going to register the tool. And then I'm going to call live_browser() on the chat object. And I'll ask it here, is today a good day to go to the pool? And then, of course, it says, where? In Woodstock, GA. Here we go. And now, you'll see that in shinychat, we now have an interface that will show you the tool calls as they're being made. You can open them up and see this is what the model called, and this is what we sent back to the model. And if I collapse that, then you can see now we have the answer from the model.

So, I think I have reached the end of my time. I do have a repository for all of this. I will share the link in the chat right now. Okay. I just dropped that into the chat so you can get back to it. And there's even some more code in there if you want to explore these ideas more, to see how you can customize the tool display and do some other really fun things with tools. So, it's been a pleasure chatting with everyone, and thank you very much for being here.

Q&A

Hey, Garrick. Awesome session. Great comments in the chat. We've got a few questions for you that we thought we would tackle. Sure. If you can see them, feel free to just take a look in the chat. Otherwise, I'm just going to read a few out to you. Does that work okay?

Sure. All right. So, the first one, someone asked, do you need an API key from those providers, or is installation automatic? Yes, in almost all cases, you need to go out and get an API key, which is also equivalent to giving someone else your credit card information or an account or something. I think one of the few places where you can get started right away, like I mentioned, is with GitHub Models. As long as you have a GitHub token, which many people do already because we've used those for a while in the R world, then you can probably get started right away with GitHub Models and you can get pretty far in their free tier.

I always think this is such a tricky thing, like, where did the API key come from that I'm using? And what was it like to navigate the website and sign up for the right thing? Because when I go there, sometimes there's all these different options. So, yeah, this is definitely not the clearest thing for a lot of people to tackle. Yeah. No. And every provider is a little bit different in terms of how you are supposed to get everything set up, which is why the function documentation in ellmer is probably the best place to get started. Because whenever a new provider is added to ellmer, we figure out where you should go to get that API key. We have to do it at least once, and we write that down.

That's great advice. All right. Let's keep going with the questions here. How would you deploy an app? Yeah. So, okay. Very quickly, do not use live_browser() or shinychat's chat_app() as a way to deploy an app. Those are designed for local use, like you're just testing things out yourself locally. That's fine. On the other hand, if you're writing a Shiny app, like we saw, the second example was something that you could deploy easily. And it was only a few lines of code to get that started. So if it's a Shiny app where you have done the normal Shiny app things, like there's a file that starts with library(shiny) and it ends with shinyApp(ui, server), that's something you can deploy. You can put that on Posit Connect or anywhere else you deploy your Shiny apps.

Awesome. Another question, is choosing a model based on data size, what is the standard SOP for choosing the right model? Yeah. Great, great question. Experimentation is the right way to do this. You try, try lots of models, see what works. There's always, basically with every model, there's a trade-off between size, speed, cost, and intelligence. And none of those things are necessarily linearly related, and they're all task dependent. So it really comes down to trying, like you almost could never go wrong with using the biggest model that you have access to. But that also turns out to be the most expensive model usually. So it's still, it still comes down to testing and experimentation and figuring it out.

And I saw someone in the chat asked about how do you test models? Oh, they're asking about how do you test the tools? But yeah, how do you test models? Simon Couch, who's also in the chat and can drop a link, has been working on a package called vitals, which is a great way to test different models with different tasks and see how they do and compare them. And that's a good place to start if it's really important to you to optimize which model you're using.
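The idea of trying several models on the same tasks and comparing them can be sketched as a tiny harness. This is plain Python and not the vitals API: `small_model`, `large_model`, the task list, and the exact-match scoring are hypothetical stand-ins chosen only to make the comparison runnable.

```python
from typing import Callable, Dict, List, Tuple

Model = Callable[[str], str]
Task = Tuple[str, str]  # (prompt, expected answer)

def small_model(prompt: str) -> str:
    """Toy stand-in for a cheaper model: it only handles one prompt."""
    return "4" if prompt == "What is 2 + 2?" else "I don't know"

def large_model(prompt: str) -> str:
    """Toy stand-in for a larger model: it handles both prompts."""
    answers = {"What is 2 + 2?": "4", "What is the capital of France?": "Paris"}
    return answers.get(prompt, "I don't know")

TASKS: List[Task] = [
    ("What is 2 + 2?", "4"),
    ("What is the capital of France?", "Paris"),
]

def accuracy(model: Model, tasks: List[Task]) -> float:
    """Fraction of tasks where the model's answer matches exactly."""
    correct = sum(model(prompt).strip() == target for prompt, target in tasks)
    return correct / len(tasks)

def compare(models: Dict[str, Model], tasks: List[Task]) -> Dict[str, float]:
    """Score every candidate model on the same fixed task set."""
    return {name: accuracy(model, tasks) for name, model in models.items()}

scores = compare({"small": small_model, "large": large_model}, TASKS)
print(scores)
```

In practice the scoring step is usually the hard part, since free-text answers rarely match a target string exactly; speed and cost per call would be recorded alongside accuracy to capture the trade-offs mentioned above.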

All right. A couple more questions here. Will you share the scripts afterwards? Yes. Yeah. They are already online at the repo that I linked to. It's in my GitHub account. And I'll drop the link one more time in the chat because I still have it in my clipboard.