Shiny community, hackathons, and his AI mindset | Joe Cheng | Data Science Hangout

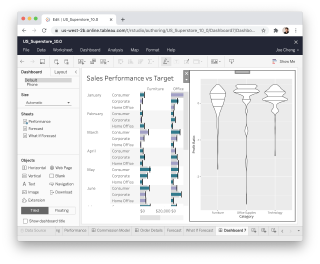

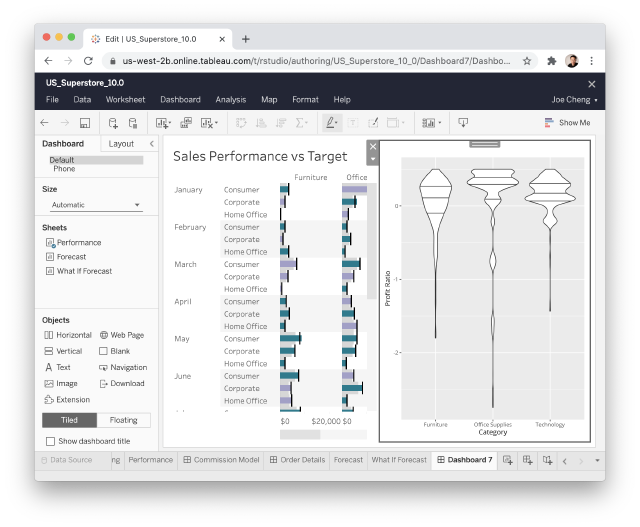

To join future Data Science Hangouts, add the event to your calendar here: https://pos.it/dsh - All are welcome! We'd love to see you!

We were recently joined by Joe Cheng, CTO at Posit, to chat about the Shiny contest, the use of AI in data science, and designing hackathons for learning new technologies. We were joined by several past and present Shiny contest winners who gave great advice on how to get started if you want to participate (and we really hope you do)!

In this Hangout, we explore the evolution of the Shiny contest since its inception, including what made the 2024 submissions unique and the ways the contest encourages community contribution and learning. Joe also shared his personal journey from feeling skeptical about AI to seeing and embracing its potential.

We got some amazing questions from the Hangout attendees! We hope you join us live next time to ask some of your own questions.

Resources mentioned in the video and Zoom chat:
2024 Shiny Contest Winners → https://posit.co/blog/winners-of-the-2024-shiny-contest/
Joe's AI Hackathon Slides → https://jcheng5.github.io/llm-quickstart/quickstart.html
Shiny Assistant → https://gallery.shinyapps.io/assistant/
Isabella's blog post on prototyping with Shiny Assistant → https://posit.co/blog/ai-powered-shiny-app-prototyping/
Posit Conf Workshops → https://reg.rainfocus.com/flow/posit/positconf25/attendee-portal/page/sessioncatalog?tab.day=20250916&search.sessiontype=1675316728702001wr6r
Shiny Conference 2025 → https://www.shinyconf.com/
Call for Speakers Shiny Conf 2025 → https://sessionize.com/shiny-conf-2025/
Shiny Tableau → https://rstudio.github.io/shinytableau/
echarts4r → https://echarts4r.john-coene.com
ellmer package on GitHub → https://github.com/tidyverse/ellmer

All the Shiny app links mentioned in the video and Zoom chat:
Eric Nantz 2021 Shiny Contest Submission → https://forum.posit.co/t/the-hotshots-racing-dashboard-shiny-contest-submission/104925
Eric Nantz's R/Pharma conference keynote on AI → https://youtu.be/AfMa1CVUdXU?si=ThLsKFyonntxzBUF
Eric Nantz's Haunted Places app → https://youtu.be/vX09QGMuOfo?si=K5_uPfK5bcfZZ92l
Umair Durrani's Shiny Storytelling app → https://umair.shinyapps.io/storytimegcp/
Umair's Bluesky profile → https://bsky.app/profile/transport-talk.bsky.social
Umair's Shiny meetings project on GitHub → https://github.com/shiny-meetings/shiny-meetings
Abby Stamm's Shiny Accessibility app → https://github.com/ajstamm/shiny-a11y-app

If you didn’t join live, one great discussion you missed from the Zoom chat was about everyone's favorite interactive plotting tools. Someone asked whether Plotly was the best option, and lots of people said they loved ggiraph, echarts4r, ObservableJS, and others. What about you?! What's your favorite interactive plotting library?

► Subscribe to Our Channel Here: https://bit.ly/2TzgcOu

Follow Us Here:
Website: https://www.posit.co
Hangout: https://pos.it/dsh
LinkedIn: https://www.linkedin.com/company/posit-software
Bluesky: https://bsky.app/profile/posit.co

Thanks for hanging out with us!


Transcript

This transcript was generated automatically and may contain errors.

Welcome back to the data science hangout everybody. We are so happy you're here. I am Libby. I'm a community manager here at Posit helping to foster our vibrant hangout community and I'm based in San Antonio, Texas. Posit, if you don't know, builds enterprise solutions and open source tools for people who do data science with R and Python. And in case you didn't know, we are the company formerly known as RStudio. So if you've used RStudio, you've used Posit.

I'm Rachel. I lead customer marketing at Posit and I'm based in the Boston area. The hangout is our open space to hear what's going on in the world of data across all different industries, get to chat about data science leadership, and connect with other people here who are facing similar things as you. And we get together here every Thursday at the same time, same place. So if you are watching this as a recording on YouTube and you want to join us live in the future, we would love to have you here.

Wanted to say a big thank you to everybody who has made this the friendly and the welcoming space that it is today. We are all really dedicated to keeping it that way. If you have feedback about your experience here today, good or bad, we would really like to hear from you. There's going to be a survey that pops up in your browser as soon as you leave the Zoom call.

This is the point of the call. This is a community discussion. It doesn't matter your years of experience, your title, your industry, what languages you work in or don't work in. We want to hear from you. We also really encourage you to connect with each other. This is a community spot. So introduce yourself. Feel free to share your LinkedIn, a link to your GitHub repo, link to your personal website.

I'm also going to do a quick shout out that the registration for PositConf 2025 is now open. We'll be in Atlanta this year.

But with all that, we are so excited to be joined by our co-host today, Joe Cheng, CTO at Posit. And we're also lucky to be joined by a few of the 2024 Shiny contest winners today. Joe, I'd love to kick it off with having you introduce yourself first and share a little bit about your role here at Posit, but also something you like to do for fun outside of work.

Joe Cheng's background and AI journey

I am the CTO of Posit. I was the first employee back in 2009. Almost my entire time at Posit has been focused on web stuff, especially Shiny. And in the last, I don't know, maybe year, I've been focused on AI, and it took all my energy not to roll my eyes when I said that. But it is true. I have been exploring at first the intersection of Shiny and AI, and then it kind of turned into like AI across Posit. What does this crazy technology mean for us? Outside of work, my hobby aspirationally is cycling.

So before we jump in, and I'm sure there'll be a lot of AI questions as well, but we mentioned the Shiny contest already. And I think it'd be great to pull in Curtis, who has run this contest for a few years now. Curtis, would you want to jump in and share a little bit about what the Shiny contest is?

The Shiny contest

Yeah, absolutely. I'm Curtis. I work at Posit on the marketing team and community team. On the point of the Shiny contest, I should give credit where it's due. Joe and Mine Çetinkaya-Rundel, who I think many of you also know, did the first Shiny contest ages ago. And I've kind of picked up the mantle, and then taken it to, like, tables.

So, when we think about the kind of community events we'd like to support and organize ourselves here: most of Posit are engineers who are making software, open source software. And open source software benefits a lot as people contribute to the code base. You could contribute directly by contributing code, you could create supporting packages, you could do bug reports, feature requests, there's all sorts of ways you could contribute. But there's interestingly also a user community and a super user community. They're folks who might not be actually contributing to the GitHub repo and directly to code or a supporting package, but they have a ton that they could contribute.

We were just kind of thinking, what's a good way that we could kind of plant a flag to kind of call for people to contribute and to kind of show what they do? So, you know, for example, how do you create Shiny apps? What Shiny apps are you creating? How do you organize your code? And we were kind of thinking, is there a way you could do it that it's fully reproducible and people could dive into the code and kind of learn how you approach things?

So that's kind of what the Shiny contest and a lot of our other contests are doing, where we have a submission deadline that is essentially a forcing function to encourage people to submit and actually get their work done and share by a certain date. In 2024, we had over 100 submissions. They were both R and Python. An interesting statistic: there were over 20 countries represented. And that's just looking at the addresses that people asked for their stickers to be sent to.

I find it amazing that the core incentive that's built into this, like, why are people contributing? It does feel like altruism, right? Like, basically you're being recognized, you know, and then you get some stickers. We are just blown away by all the sharing that people do and just really appreciate it.

Joe, I have a question for you. We're talking about the Shiny contest. You have seen a lot of Shiny over the years. So, what was different about this year's crop of winners?

Yeah, absolutely. First, I want to acknowledge this contest doesn't happen without Curtis, for sure. He's the driving force behind making it all go. And then this year's contest would not have happened without Garrick Aden-Buie, who is on our call, who not only helped judge but kind of built all the infrastructure for judging this year.

This year, 2024, I think every year, I think the submissions are really awe-inspiring. And I'm surprised every year. I think what struck me about this year was that the level of sort of design polish and technical expertise and impressiveness is as high as ever. But it felt like these applications, especially among the winners, felt real. Like, it didn't feel like somebody just put together a demo or somebody just put together something for the sake of, you know, trying out a new technique or a new function. But they really felt like they were built for a purpose and were used by someone.

So, our grand prize winner, Curb Cut, was designed to help with analysis of urban areas along different dimensions, you know, emissions and sustainability and things like that. And it clearly was built with a passion for helping these municipalities figure out, you know, different aspects of how they can make their cities more sustainable. Or like, on the other end of the spectrum, there was a Pomodoro timer that was so polished and so kind of dialed in that clearly somebody had thought about all the kind of use cases. And yeah, just like every one of them, it felt like it either really engaged in someone's hobby in a way that you could feel like, oh, this person like needed to use this.

Yeah, sure. So, hi, everyone. My name is Garrick Aden-Buie. I have been on the Shiny team now for almost exactly two years at this point. I had a lot of fun looking at these apps this year. I've been a judge for a couple years now for everything. And I think, like, especially being on the Shiny team and being kind of in the trenches of GitHub issues and, you know, seeing little snippets of apps and of Shiny code that people are writing and often helping them kind of try to debug or figuring out what we did wrong. In those cases, it's just very refreshing to see these, you know, well done, full apps that do something useful that help people in their daily lives or help them realize, you know, a creative idea that they had.

Absolutely. I put a question into the chat for some of the Shiny contest winners or anybody who's participated, but I would love to hear what advice you might give to somebody who's considering entering the contest in the future.

What does a CTO do?

We had one anonymous question come in already, Joe, and I think it's good to cover up front. What does a CTO do and what does CTO stand for?

Nobody knows, honestly, what I mean. So, no, I'm sorry. It really can mean a lot of things, and I think it's different than a lot of other C-suite titles.

Traditionally, CTO can mean one of a number of things. First is you start a company. There's two of you. There's a technical person and a non-technical person. The technical person is the CTO. That's not me. This company was started by J.J. Allaire, the most technical person I know. Another kind of CTO is where you have kind of an engineering department and a VP of engineering who's kind of over that. And then the CTO is kind of like department of architecture. And they're either sitting there pontificating about the future or making architecture decisions that kind of then get projected onto the engineering department. And I don't believe in that kind of CTO.

And then the third kind is basically your engineering department is in trouble and you need someone to come in and make a lot of unpopular changes. So you appoint the CTO. So I'm none of those things. But I do have to admit, especially over the last, I don't know, year and a half, this AI stuff kind of was not a natural technology for us to ingest as a company. And I think like a year ago, we were all like, boo, AI, you know, we're all kind of very skeptical, except for like three people in the company who are really excited about it. And I kind of felt like compelled, like, I at least have to spend some time checking this out and then realize like, oh, crap, like, no, we are missing something very, very important. And it's not something that like we in the boardroom need to understand. It's like this whole company, we need to build understanding from top to bottom about this technology.

So my job went from, I'm helping the Shiny team execute on, you know, the packages that we build and giving talks and things like that to like, how do I take this whole company of really smart, opinionated people and help us go from a world where like, boo, AI sucks to, yeah, there's some like really heavy reservations we have about it. And yet, we have to look at the opportunities here and, you know, upskill ourselves and turn that into, you know, a set of plans, a set of prototypes, you know, a set of strategies. And so there have been like a lot of points over the last year where I'm like, this feels like CTO work more than it feels like being a lead developer.

AI skepticism and the right mindset

I'm still an AI skeptic. I feel like code generation, it's good at, like you gave a good demo, I think, at PositConf about how you can turn like words into like a .db query or whatever. But other than code generation, I just am very skeptical. I feel like Apple Intelligence is just comically messing up summaries all the time. I don't think they can think. I don't think they can reason. So was it more of the code side, or is there something else that you saw that kind of changed your tone on large language models?

Yeah. I mean, I think what started the change for me is that there were a couple of true believers that just really were, as soon as, you know, ChatGPT and really like Gen AI stuff before that came out, who were really intensely attracted to this technology. Winston Chang on my team, I work very closely with, and he was one of these people that just, I think as every new development came up, he was like, oh, look at this. This is amazing.

I realized after a while that there was a big difference in mentality between the people who are getting excited and people who were sort of getting annoyed. And I think the difference is, so you go to use ChatGPT for the first time, or whatever, and you ask it three questions and it gets two of them wrong, right? And I think most people are like, oh, this thing is useless. It was wrong. Like I asked it something and it was wrong. And the people who really got it were the people who were like, oh my gosh, it got one of them right. That's really interesting. What else can it get right?

The more I started to see people around me gradually, like the results that they were getting by like, yeah, yeah, yeah, it makes mistakes. Keep pushing. And, you know, like knowing that it's going to make mistakes, what can you get out of it, is a totally different mentality than like expecting it to be perfect at anything. So I don't think that makes it good enough to be a game-changing, you know, technology. But it made it interesting enough to be like, if you could save, you know, two hours coding something up, even if it took you six turns in a conversation instead of one turn, like it's, you'd be foolish not to take advantage of that.

So I want to be clear, I don't at all think that all the kind of breathless hype that's out there, like most of it is nonsense. Certainly anything with AGI, I'm just, I'm not even interested in following that discussion. Like that's just not an interesting, that's like philosophy, and I always hated philosophy.

So yes, for me, I think, yeah, what I've been really interested in is the more coding aspects of things. I am really interested in, number one, I think helping our users do what they do more effectively using AI. It was really the release of Claude 3.5 Sonnet, I think maybe in March last year, that represented like, this is a step change in ability to code. Before that, it was like, this is not just wrong, it's annoyingly wrong. Even the best models were like, this is just irritating. It's wrong. And then when I tell it how to fix it, it fixes it, but then breaks something else. Whereas Claude was this sudden step change, and it's right so much more often. And it's so much more stable when you ask it to make changes.

So a lot of people, I think that are complaining like, oh, this thing never helps me write code. It might be like, you need to update your priors with like the latest models, especially Claude, which I still feel like is head and shoulders above everything else that I've tried. And then finding that we can sort of find these narrow use cases, like I talked about at PositConf last year, where we like, restrict what it's doing to taking your like, human intent and translating it to SQL.

I'll spill a little bit of a secret, like one of the things that I'm working on is this chatbot that mostly focuses on doing like dplyr and ggplot type stuff. And like, if you think about what you can do with dplyr and ggplot, that's a lot. There's all sorts of questions you can do in exploratory data analysis with just those two tools. And those two tools, like Claude is very, very good at writing ggplot and dplyr code.

So I think it's like sort of ignoring the fact that there are all these things that AI is terrible at, and will be terrible at for, you know, the foreseeable future, and saying like, wait, what are the good parts? Like, and can we sort of create scenarios where people are a little bit shielded from the bad parts? And or at least their expectations are properly set, that like these things this bot is going to be good at.
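The narrow "human intent to SQL" use case described above can be sketched roughly as follows. This is a minimal illustration, not the code from the PositConf demo: `fake_model` is a hypothetical stand-in for a real LLM call, the `tips` table and the `is_single_select` check are invented for the example, and the check is a deliberately crude guardrail (it would also reject valid queries such as ones starting with `WITH`).

```python
import sqlite3

# Hypothetical example table; not from the talk.
SCHEMA = "CREATE TABLE tips (day TEXT, total_bill REAL, tip REAL)"


def build_system_prompt(schema: str) -> str:
    """Give the model one narrow job and the schema it needs for that job."""
    return (
        "You translate the user's request into a single SQLite SELECT "
        "statement. Reply with SQL only.\n\nSchema:\n" + schema
    )


def fake_model(system_prompt: str, user_request: str) -> str:
    """Hypothetical stand-in for a real LLM call; returns canned SQL."""
    return "SELECT day, AVG(tip) AS avg_tip FROM tips GROUP BY day ORDER BY day"


def is_single_select(sql: str) -> bool:
    """Crude guardrail: accept exactly one statement that starts with SELECT."""
    body = sql.strip().rstrip(";").strip()
    return body.lower().startswith("select") and ";" not in body


def answer(conn, user_request, model=fake_model):
    """Ask the model for SQL, refuse anything that is not a lone SELECT, run it."""
    sql = model(build_system_prompt(SCHEMA), user_request)
    if not is_single_select(sql):
        raise ValueError(f"Refusing to run non-SELECT output: {sql!r}")
    return sql, conn.execute(sql).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute(SCHEMA)
conn.executemany(
    "INSERT INTO tips VALUES (?, ?, ?)",
    [("Fri", 20.0, 3.0), ("Fri", 30.0, 5.0), ("Sat", 40.0, 6.0)],
)

sql, rows = answer(conn, "What is the average tip per day?")
print(sql)   # the user can read exactly what was run
print(rows)
```

Returning the SQL alongside the rows is one way to set expectations properly: the model only ever produces a query, and the numbers come from the database rather than from the model.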

Yeah, I think I agree with most of that. I mean, I do think that the ability to generate code in SQL benefits from the fact there's not a lot of satirical code out there. I mean, the equivalent of "put glue on your pizza" in SQL does not exist, right? So I think that's one reason that's pretty good. I think you are embracing the good and running with it. And I am like, yeah, there's this good part, but most of it is kind of overhyped, and they still can't pay the bills. So I remain skeptical, but I think I appreciate what you're saying about there is some utility there.

Yeah, yeah. And I just want to acknowledge, like, a lot of people have objections to AI on the grounds of like copyright infringement, or environmental impact, or like, what does this mean for the future of the human race? You know, like, those are all totally valid complaints, and I will not attempt to argue against that. Like, those are absolutely things that we should all be wrestling with. But this sort of like, can it be useful? I think that question needs to be answered with a lens of, like, you need to get over being offended when this thing is wrong, you know, like, take into account when it's wrong and take into account, like, it can't be trusted for these things. But if you allow that to stop your exploration, then you're missing out on a lot of good things that the models can do.

Probabilistic models and mental models

My question is, is one that comes a little bit out of frustration as well. One of the challenges that I see around it is not the technology itself. I think it does everything that it's meant to do the way it's supposed to do it. It's a probabilistic model. What I think the issue is, is people's understanding of probabilistic models and what they can and cannot do. So if your expectations of AI are that it's going to talk to you like a person and have the judgment of a human and all of that. Yeah, you're going to be very disappointed. But these are really great language models that are just models of language. They predict what something is based on a huge set of data. So I think the problem is the expectation of it. And so I'm wondering how you've dealt with kind of that question in talking to folks that are maybe a little less technical.

Yeah, I actually fundamentally disagree with that framing. I mean, well, sorry. What you said is true. These are absolutely probabilistic models. They are effectively like very, very, very large pattern matching machines. There's no doubt that that statement is true. However, I found that, as of like a year ago, that framing stopped being useful.

We've been doing an internal hackathon series, myself and some members of the Shiny team, once we realized like, oh, there's stuff here that we need the whole company to understand. We do like a two and a half hour boot camp and then set people free for two days to hack on LLMs. And the very first thing I say is to resist this framing. So especially people inside Posit, a lot of them know how these things are coded. They know the math. You know, they know what a feed-forward network is. They know, you know, how attention works. And if you know a little bit too much, it actually gives you really bad intuitions about what this technology is capable of.

Because I think probably at least a year ago, we surpassed the point where these things can only do what you would intuit a pattern matching machine to be able to do. They can already go beyond those things. So I encourage people: understand that that's true. But when you are wondering, I wonder if a model can do this, don't let that intuition stop you from trying things.

Really lean into being empirical about this. Don't think about like my mental model for what a Markov chain scaled up can do is this. So I'm not even going to try asking it this kind of question. Like I think we've far, far exceeded what you would normally intuit these models to be able to do. And I think the way you can see that is that every generation of new foundation model that's come out, there's been some gotcha question that has been like, oh man, it does these amazing things. And then this simple question completely fools it. And there's always a host of people posting, well, of course it can't do that. All it is is a stochastic parrot. And then what happens is the next generation of models comes out and they can completely, you know, not just solve that question because they've seen that question, because people have talked about it, but do that type of sort of quote unquote reasoning, which again, not technically true. It is not reasoning. And yet your mental model is better served by pretending that it's reasoning and then just not being surprised when the facade kind of falls down.

A lot of what we've encouraged people to do is to just sort of don't say no for the model, like let the model try and you might be surprised what happens. And every once in a while you're surprised that you think that it should be able to do something and it can't because in the end it is just a pattern matching engine. But I really encourage you not to go into it, putting those limits on the model. You're going to miss out on a lot of things that it can do amazingly.

The internal AI hackathon format

Yeah, absolutely. So basically we let anyone in the company sign up, whatever role they are in the company, any department, any level of seniority, whether they can code or not. And we start with a two and a half hour boot camp where we introduce ourselves and then in a group of eight to ten people, I think eight is about the optimal size for me, we basically give them everything they need in two hours to be able to start hacking on LLMs. And not just like using LLMs through ChatGPT or Copilot, but I believe that a lot of the interesting stuff that these LLMs can do, they only arise when you program against them, they only arise when you use LLM APIs. And I felt so strongly about this that I strong-armed Hadley into creating the ellmer package with me so that our users can do this.

And so in two to two and a half hours, I sort of go through everything that I feel like you need to know to get started hacking on these things, including you know like shift your mindset from thinking of this as a stochastic parrot to think of it as a reasoning human being even though it's not. And then I can share the slides, I'll share the slides in the chat after I answer this. And then we give them a two-day period where we ask them to spend four hours minimum doing some kind of exploration with AI. And then we come back and have an hour and a half sort of show and tell where everybody gets five to ten minutes to show what they've done.

Now I'll say that the most valuable part of this by far is just creating the space for people to have the freedom to explore. I think you know Posit, we're like a fully remote company, everybody's very conscientious. So the idea of like let me put my normal responsibilities to the side for a little bit, even for four hours over a two-day period, and just start hacking. That is a really difficult thing for people to do without feeling really guilty, even if it's something that everybody acknowledges is an important thing. So I think one of the reasons I've been told this effort has been really successful is I have the CTO title, so it can feel a little bit like this is official, like you're officially being asked by one of the executives to dedicate this time and really spend it to learn this one particular thing.
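To make "programming against an LLM" a little more concrete, here is a minimal sketch of the bookkeeping a program typically does around a chat-style API: it owns the system prompt and the full message history, and sends that history on every turn. Everything here is an assumption for illustration. The `Chat` class and `echo_backend` are hypothetical, the role/content message shape only approximates what chat-style APIs tend to use, and this is not how ellmer or chatlas are implemented.

```python
from dataclasses import dataclass, field
from typing import Callable

Message = dict[str, str]  # {"role": ..., "content": ...}


def echo_backend(messages: list[Message]) -> str:
    """Hypothetical stand-in for a provider call; reports what it was sent."""
    user_turns = [m for m in messages if m["role"] == "user"]
    return f"(turn {len(user_turns)}) you said: {user_turns[-1]['content']}"


@dataclass
class Chat:
    """Keeps the conversation state that the program, not the model, owns."""

    system_prompt: str
    backend: Callable[[list[Message]], str] = echo_backend
    messages: list[Message] = field(default_factory=list)

    def __post_init__(self):
        # The system prompt is set by code and is always the first message.
        self.messages.append({"role": "system", "content": self.system_prompt})

    def chat(self, user_text: str) -> str:
        # The whole history is passed on every call; the backend is stateless.
        self.messages.append({"role": "user", "content": user_text})
        reply = self.backend(self.messages)
        self.messages.append({"role": "assistant", "content": reply})
        return reply


chat = Chat(system_prompt="You are a terse assistant for Shiny questions.")
print(chat.chat("What is a reactive expression?"))
print(chat.chat("Show me a small example."))
print(len(chat.messages))
```

Because the history is plain data, a program can inspect it, trim it, or swap the system prompt between experiments, which is much harder to do from inside a chat UI.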

Yeah, I loved it because I think sometimes we don't take enough time to step back and learn something new. Like we're just doing our regular jobs every week and you get so caught up in that, so it was really nice to like take a step back and think about what's a problem that I've been wanting to solve and I haven't given it enough time. And I'll even share like one quick example, like right away was with the Hangout chats, like Libby and I always see all these great resources, but the chat is such an amazing conversation that's happening, it's hard for us to go and pull out those helpful links. And one quick way we used it right away is to say like, what were all the resources shared in the chat? So now that we could share them all with you on LinkedIn as well.

Yeah, I got a chance to do it and for me personally, I did see something in the chat when people were talking about prompting. The way I like to look at working with LLMs is like training a pet. Like the pet already knows a few things, like its biological needs and stuff like that, but then how do you actually make it follow directions, right? So how well you train it or how well you do the prompting actually affects a lot how well it does what you want. And the way I actually have found it has worked really well is when the answers I'm looking for are something where I already know what I'm expecting. If it's something that's like super unknown, then it's going to be really hard to validate that. So if there's something where I know what I'm expecting, I can build some validation on top of it, and it becomes really easy to go back and change the prompting to make sure I can tune it to get what I expect.
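The "validate against answers you already know" idea can be sketched as a tiny evaluation loop. This is an illustrative sketch under stated assumptions: `fake_model` is a hypothetical keyword rule standing in for a real LLM call, and the sentiment cases and prompt template are invented for the example.

```python
from typing import Callable

# Cases where the right answer is already known (invented for this sketch).
CASES = [
    ("Great app, works perfectly!", "positive"),
    ("It crashes every time I click the button.", "negative"),
    ("Love the new charts.", "positive"),
]


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for an LLM call: a crude keyword rule."""
    text = prompt.rsplit("Review:", 1)[-1].lower()
    return "Negative." if "crash" in text else "Positive."


def normalize(reply: str) -> str:
    """Strip whitespace, trailing periods, and case before comparing."""
    return reply.strip().strip(".").lower()


def evaluate(template: str, model: Callable[[str], str] = fake_model):
    """Run every known case through the prompt template and collect failures."""
    failures = []
    for text, expected in CASES:
        got = normalize(model(template.format(review=text)))
        if got != expected:
            failures.append((text, expected, got))
    pass_rate = 1 - len(failures) / len(CASES)
    return pass_rate, failures


template = "Classify the sentiment as positive or negative.\nReview: {review}"
pass_rate, failures = evaluate(template)
print(f"pass rate: {pass_rate:.0%}")
for text, expected, got in failures:
    print(f"expected {expected!r}, got {got!r}: {text}")
```

With a loop like this, changing the prompt becomes an experiment: edit `template`, rerun `evaluate`, and compare pass rates instead of eyeballing individual replies.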

That's a really good point. I was also in one of the hackathon groups and I think for me, one of the core things that I really enjoyed about it was just Joe's articulated this mentality of questioning your own mental models and using experimentation as a way to find out how LLMs work and the ways in which they work the best and just really kind of approaching it with a curious mind and also just trying it out and seeing what happens. And I enjoyed the format so much that as soon as I heard that Joe was going to be leading a workshop at PositConf, I offered to join in with him. So I'm really excited that this year at PositConf, we're going to be hosting a workshop that is basically distilling the experiences that we had from the hackathon into a one-day workshop on programming with LLMs. And there's a lot of really cool tools that we've been working on. Joe mentioned ellmer that Hadley's been working on. And also on the Python side, we have a similar package called chatlas. There's a lot of cool things in Shiny that we've been adding to work with LLMs and they're all going to be wrapped up in this one-day workshop.

Spreading AI knowledge across the organization

It did sound like you mentioned a more holistic learning process for the organization. You said, you know, we need to embrace at least knowing about AI from the top all the way through the org. Like what does that look like for you all? And are there resources that are good to that end?

Yeah. So the link that I shared in the chat are my slides for the kickoff. This so far really is the way we are trying to spread this information. I think once people start using these models in anger, the rest comes relatively easily. That being said, one conclusion that I've come to about our hackathon format and this set of slides is that they're very effective for people who know how to code. And they're okay for people who are not coders. I feel like it's maybe a little bit interesting, but it's like a lot of time is spent on things that are not going to be relevant to you if you're never going to write a line of code.

So for the big chunk of the company that there's no coding in their lives at all, I think that's like a to-do for us. How do we come up with a hackathon format that really makes the maximum amount of sense? I think Rachel happened to do a hackathon project that involved no coding and was spectacularly successful at that. So that is definitely a to-do for us in this sort of winter-spring season to figure out how do we get people who have no coding knowledge to come in and really use these off-the-shelf tools more effectively or to build mental models differently.

And the thing that we've pointed people to the most is Google NotebookLM, which, I don't know, it seems to be like a particularly good implementation of RAG and a number of other techniques and a really nice UI. So I think so far, we've had the most success basically giving people a sense of what RAG is, how system prompting works and stuff like that, and then saying, just go see what you can do with Google NotebookLM. With Google NotebookLM, it's so easy to bring in anything from your Google Drive, YouTube videos. And at least in our company, we use so much Google stuff that everybody has relevant information in Google Drive that they could do some interesting experiments with.

Yeah, Joe, actually, I will say NotebookLM, that was my thing that put me over the edge from AI skeptic into, well, this is actually useful, now I get it type of thing. After being grumpy for several years about it and being like a curmudgeon, being able to just add a bunch of sources and have it be so user-friendly with a good user experience is amazing. So if you're out there and you're skeptical, like I was, maybe go explore NotebookLM.

Advice from Shiny contest participants

I wanted to steer back to Shiny for just a minute because we asked in the chat, like, hey, Shiny contest people, do you have any advice? And Eric does. So I'd love him to hop in really quickly and share.

Yeah. Hi. Thanks, Libby. So I've been a Shiny user from the very, very beginning, back to the Google mailing list. So shout out to those that were on the Google mailing list back in the old days. Nonetheless, yeah, the Shiny contest, I'm so glad that it was started. And for me, having been on the inside of it as a judge one year as well as being a submitter, some of the advice I can give to those of you that are interested in trying this out is to find something, maybe based on one of your hobbies, or an interesting dataset that really excites you. Don't just use some data source that is kind of boring to you. Find something that you really have some excitement about.

When I submitted, even though I was a judge there, I just figured I'd submit it for fun. I was involved in, of all things, an online racing league during the COVID pandemic, and we wanted a cool app to visualize the race results and track how the races were going throughout the season. So we figured out how to do OCR on the game screenshots. And I thought, OK, well, I'm going to bring Shiny into this and not just have my compatriot in Europe do all the Python fun with it. So I built a Shiny dashboard that leverages Plotly and Reactable, and uses things that I wish I could share more fully from my day job. Some of you know I come from life sciences, and I can't share, like, 99% of what I do there. But I worked a few things that I learned from some of those projects into that Shiny contest app. So it was about finding a motivating dataset or other rich source of inspiration and just being as creative as possible with it.

Interactive plotting alternatives to Plotly

Mostly, any sort of Shiny app that I make or try to make defaults to doing the plotting in Plotly. And it, like, gets the job done. But I feel like it's something that has been around for quite a while and doesn't seem to be getting much development or many enhancements. And I was just wondering, is there anything that anyone else uses that seems to be better? There seems to be ggiraph, which has a fun name, and people seem to like using it. So I'm just kind of wondering, are there any plotting alternatives, stuff that works better, stuff that's in development, cool stuff to check out there?

Yeah, I definitely sympathize with that. I feel like Plotly is just good enough that I don't, like, bother learning anything else. But it definitely, you know, it definitely has its rough edges. And there are definitely times where I'm like, oh, you know, I wish it was a little bit better in this or that area.

I love the advice that's here, you know, ggiraph and echarts4r. I don't have a ton of experience with either, but I've heard really good things about both. And for those of you who have been around for a long time, we took a shot at this internally with ggvis years and years ago. Hadley kind of famously declared on stage at useR! that 2014 was the year of ggvis. And then we hit some kind of technical challenges, I don't even remember what they were, and kind of backed away slowly. So, for years, he caught a lot of ribbing from question askers at conferences, like, what happened to the year of ggvis? It's always something that we're very tempted to return to, but it's always, oh, the year after this year, you know. There are always these other things first, and then we can say, let's build the world's best interactive graphing library.

This same problem exists in the Python space. I feel like Plotly plays the same role there, where it's probably the most popular interactive one, even though everyone's like, yeah, it's good enough-ish, you know. But all the competing libraries have their own pitfalls or particular features that they don't have. So, I always keep my eye on the ones that are based on Vega and Vega-Lite, just because it feels like there's a really principled base that they're building on. But at least last I checked, being able to do custom interactive stuff, like reacting to clicks and things like that, still felt a little bit manual.

Advice from Shiny contest winner Umair

Thanks, Libby. So, my suggestion is, if you have any idea at all, don't wait until you have all the required skills to make that app. Just start. And once you have some bare-bones working app, get community feedback. The DSLC Slack is very useful. Bluesky is very active now. You can get some feedback and improve from there. When I was working on my app, the one about story time with AI, I did not know how to render Quarto slides in the app. And I actually had a very challenging time using Quarto to not only generate the slides but also render them in the app, because Quarto, unlike R Markdown, limits where your output directory can be. But eventually, I figured it out. So, my suggestion is just start. Don't hesitate.

Thank you so much, Umair. I love that advice. Joe, do you have anything to add?

I was going to say, definitely check out Umair's app. It is a beautiful piece of work. It is such a simple user interface. But the whole thing just felt like a lovely little nugget of Shiny, plus LLMs, plus the idea to use a Quarto slide deck to render the story output, which I thought was a really inspired choice. Because you get these really nice transitions and this, you know, really nice formatting. So, I really appreciated that app. I thought it was super cool.

Yeah, I think there are several points. While developing the app, the first thing was how to render slides. When I was working through that, I had it working locally, but not on the deployed app. So then I asked on the Slack for people to try out the app, and there were several people that actually helped test it. And on that point, I also want to add that I recently started a project called Shiny Meetings, and I posted about it on Bluesky as well. The goal is to do TidyTuesday-type projects every 15 days, where you can collaboratively work on an app, the UI part and the server part, and I think there's a lot of opportunity to practice there.

The Shiny Assistant and its future

I do have a question. Well, I love the Shiny bot, or the Shiny Assistant. Like, it is my best friend. It is my god, basically. I pray to the god of the Shiny Assistant. And on that topic, I was wondering if there are any plans to build on it. I noticed that basically, if you refresh, then you lose all of your conversation, and you have to start over. And sometimes when I reload, it reloads where I was, and sometimes it reloads where I was a month ago. And so, yeah, I was wondering if it's going to continue to be free. Second, whether it's going to have some sort of agency, like, oh, I want this to use Plotly libraries much more here, or I want to upload my code there so that it knows my style. Because I think that R is really, really poorly understood by Claude or OpenAI models and stuff, but it's magical with the Shiny Assistant.

It's funny you say that. With the Shiny Assistant, almost all the intelligence is just Claude. There's very little that's custom. We know that there are a few common mistakes that it makes with Shiny, and we instruct it not to make them, but other than that, it's mostly just Claude doing what it knows about Shiny. But I'm glad you're finding it useful.

For the sort of hitting reload and having it start over, that is – I mean, that is intentional. We assume that if you hit reload, it's because you want to start over. And if you're seeing it, sometimes when you hit reload, it reloads – you know, it continues where you left off. That's because if you disconnect, we assume that that was accidental. So, if you put your laptop to sleep or you just, you know, walk away for a few hours and come back and it's gray, we assume that if you hit reload then, then that's because you want to resume where you left off. And you can see the difference because in the URL bar, you'll see a huge URL after the pound sign. That means that it has state that it's waiting to restore you to. Whereas if you just see, like, the bare URL, if you hit reload, it's going to start clean.
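The rule described above (a bare URL starts clean, a URL with state after the pound sign resumes) can be sketched roughly as follows. This is only an illustration, not the Shiny Assistant's actual code: the function name `restore_plan`, the `example.com` URLs, and the `key=value` encoding of the fragment are all assumptions, since the real state format is not described here.

```python
from urllib.parse import urlsplit, parse_qs

def restore_plan(url):
    """Decide how a reload should behave based on the URL fragment."""
    fragment = urlsplit(url).fragment  # everything after the '#'
    if not fragment:
        # Bare URL: the reload is treated as a deliberate fresh start.
        return {"action": "start_clean", "state": {}}
    # Assumed encoding: key=value pairs, as in a query string.
    state = {key: values[0] for key, values in parse_qs(fragment).items()}
    return {"action": "restore", "state": state}

# Hypothetical URLs for illustration only.
fresh = restore_plan("https://example.com/assistant/")
resumed = restore_plan("https://example.com/assistant/#session=abc123")
```

Keeping the state in the fragment means the browser holds it and a plain reload carries it back to the page, which fits the "resume after an accidental disconnect" behavior described.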

Yeah, as for whether it'll remain free, it is quite expensive to run. It is currently costing us, I think, a couple thousand dollars a month and growing quite a bit each month. So, I'm not sure what the future holds there. Worst case, I think, is you'll have to bring an API key from Anthropic and then it'll cost you, you know, dollars a month at the most. I mean, it's not that expensive for an individual. It's just that scale really adds up.

But I think our focus right now is there are a lot of things that it can't do because it's running in a browser. There's a lot of code that it can't run. It can't access your data, things like that. So, one of the things that we are actively working on now is thinking about how do we bring that experience local so that you're running Shiny Assistant on your own machine and it can run whatever R or Python code you could run on your machine. Like, that's what it can write and let you preview. And then in that case, I mean, we will definitely be asking you to provide an API key or something like that. You know, if you're running on your machine, then we want you to connect directly to Claude or whatever's in the back end.

And as far as getting better, I don't know. Like, we're not doing a great job of sort of analyzing what people have written into Shiny Assistant. So, like, we reserve the right to, like, analyze your logs, but we have not as of yet done any of that. So, right now, we're a little bit blind as to what Shiny Assistant is doing well and not well.

Posit's positioning in the Gen AI stack

For background, my team has historically been doing data science, but we've gone very heavily into Gen AI sort of workflow development. So, I'm a big believer and user of this technology. And I've been using Posit products for a long time. I remember when Posit Connect first came out. So, I really, I see how this product fits in the enterprise, and I'm seeing this new capability emerge. And so, my question to you, Joe, is how are you guys positioning yourself as part of the Gen AI stack? It's different than Shiny. It's a completely different ecosystem, set of users, but you guys have something here that other people don't. You're in enterprises. You have the ability to host Python apps. You have so many things going for you. How are you trying to take advantage of this?

Yeah, absolutely. So, I think of us as wanting to be at the AI integration layer. We don't want to develop foundation models, and we don't even really want to be in the model hosting business. Each of the cloud providers has a very strong solution with Bedrock, Vertex, and Azure AI. We can't compete with that, even in terms of getting it past IT. They want the models to run there. So, what we want to do is take this raw capability of an LLM endpoint and empower people to build custom front ends and custom workflows that take advantage of those LLMs.

I think right now, a lot of people who don't really get hands-on with the technology think of an LLM as, I want ChatGPT in the enterprise. We think of this as, sure, you can have that, but there are so many more interesting capabilities if you narrowly focus these LLMs on solving a particular workflow problem. The one that I demonstrated last year was having a sidebar in your Shiny app that lets you ask data questions. But in our hackathons, in our internal explorations, there are just a million things where you don't want some off-the-shelf AI solution. You want to build something that's bespoke to your organization, where LLMs are one ingredient of what you're building. So, that's one part of it. That's what I'm most excited about, being a Shiny person. But there are also other possibilities, where Connect itself could gain LLM capabilities. Connect itself could let you ask questions of the content that you've published, for example. Those are things that we're actively exploring as well.
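As a rough illustration of "LLMs as one ingredient", here is a sketch of a narrowly scoped data-question helper of the kind a sidebar could call. It assumes one common design, where the model only writes SQL and the app runs it. That design, the `sales` table, the prompt text, and the `answer_question` and `fake_llm` names are all assumptions for illustration, not the implementation that was demonstrated.

```python
import sqlite3

# The system prompt narrows the model to a single job on a single table.
SYSTEM_PROMPT = (
    "You translate questions about the table sales(region TEXT, amount REAL) "
    "into a single SQLite SELECT statement. Reply with SQL only."
)

def answer_question(question, llm, conn):
    """Ask the model for SQL, refuse anything but SELECT, then run it."""
    sql = llm(SYSTEM_PROMPT, question).strip().rstrip(";")
    if not sql.lower().startswith("select"):
        raise ValueError("model returned a non-SELECT statement")
    return sql, conn.execute(sql).fetchall()

def fake_llm(system_prompt, question):
    """Stand-in for a real model call; always returns the same query."""
    return "SELECT region, SUM(amount) FROM sales GROUP BY region ORDER BY region;"

# Made-up sample data in an in-memory database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?)",
    [("east", 10.0), ("west", 5.0), ("east", 2.5)],
)
sql, rows = answer_question("What are total sales by region?", fake_llm, conn)
```

Passing the model in as a plain callable keeps the sketch independent of any provider; in a real app, `fake_llm` would be replaced by a call to whichever LLM endpoint the organization has approved, and the app would display both the SQL and the rows.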

Thank you all for such great questions. I can't wait to go back and look through the chat here and see all the conversation happening. But thank you so much, Joe, for joining us as our featured leader, and Garrick for jumping in here, too, and to all the Shiny contest winners who joined today as well and shared their experience. Yep. Thank you, everybody. See you next week.