How to develop and deploy a machine learning model with Posit

While data scientists are often taught about training a machine learning model, building a reliable MLOps strategy to deploy and maintain that model can be daunting. It doesn’t have to be this way! Join us with Julia Silge at Posit on Wednesday, April 24th at 11 am ET to learn how Posit Team provides fluent tooling for the whole ML lifecycle.

1. Develop an ML model using Posit Workbench and a recent Tidy Tuesday dataset!
2. Version, deploy, and monitor that model with Posit Connect
3. Maintain reproducible software dependencies throughout the ML lifecycle with Posit Package Manager

No registration is required to attend - simply add it to your calendar using this link: pos.it/team-demo

Helpful resources:

- Vetiver - https://vetiver.posit.co/
- Julia's YouTube Channel - https://www.youtube.com/c/JuliaSilge
- To join us at posit::conf(2024) - https://posit.co/conference/
- Follow along blog post - https://juliasilge.com/blog/educational-attainment/
- Live Q&A Room - https://youtube.com/live/-6lqzW1iV7E?feature=share
- If you're interested in learning more about Posit Workbench, Posit Connect, or Posit Package Manager - pos.it/chat-with-us
- Subscribe to learn more about Posit events: https://posit.co/about/subscription-management/

We host these end-to-end workflow demos on the last Wednesday of every month. If you have ideas for topics or questions about them, please comment below!

image: thumbnail.jpg

Transcript

This transcript was generated automatically and may contain errors.

Hey everybody, thank you so much for joining us today. It's nice to see you, even if it is just seeing your names pop up in the YouTube chat. But it was also fun to see how excited everybody was for this session and all the responses to my email letting people know about it.

So if you're wondering how you can make sure you're notified of events like this one too, you can subscribe to Posit event emails and I will include that in the YouTube details below.

Before we jump in today, I also wanted to use this opportunity to remind everybody that posit::conf(2024) registration is now open, if you'd like to join us in Seattle in August. Julia and Isabel are actually leading one of the one-day, in-person workshops there, an intro to MLOps with Vetiver.

I'm always so impressed with Julia's Tidy Tuesday YouTube videos. So I had reached out to her to see if she'd be interested in joining forces this month for our workflow demo. So I encourage you to also subscribe to Julia's channel and I'll put that in the YouTube details too.

If you have questions during the demo today, you can use the Slido link shown on the screen or ask questions in the YouTube chat. And right after the demo in about 30 minutes or so, we will jump over into a live Q&A room too. If you have specific questions about Posit Team, I welcome you to book time to chat with our team after as well. But thank you all so much for choosing to spend time with us today. I will turn it over to Julia and see you all in a bit.

Hi, my name is Julia Silge and I'm a data scientist and software engineer at Posit PBC. And today in this screencast, we are going to use tidymodels and Vetiver to explore the machine learning lifecycle. You start with some data, you develop a model, and then you deploy that model.

This screencast is going to have an emphasis on using the Posit Team tools because it turns out that a lot of things that can be difficult about managing the whole lifecycle of a machine learning model can be addressed with the kind of tools you use. So we're going to be using Posit Workbench as a place for model development, Posit Connect as a place to publish and put our models into production, and then Posit Package Manager, which helps us manage reproducibility and package versions.

We're using a recent Tidy Tuesday dataset that is on educational attainment in the UK. Basically, towns in the UK, how do they differ in terms of educational attainment? How is that related to the size of the town, the income of the town, and other characteristics that we have? So let's get started.

Exploring the data in Posit Workbench

All right, here I am logged into Posit Team, which is three products together. So here I am in Workbench. In Workbench, I'm using the RStudio IDE. And so, you know, this is where we start, right?

So I am going to get started with a recent Tidy Tuesday dataset here where we will look at educational attainment in the UK, specifically with a focus on small towns. So we are going to – let me read in the data here from Tidy Tuesday. And what we are going to do is we're going to try to predict the educational attainment in a town based on some of the things that we know about that town.

So in a real modeling project, I would spend quite a bit of time exploring the data. Let's just do a little bit of a glimpse here to get a feel for what is in the data. But, of course, this is a super important part of the modeling process. So we'll glimpse kind of quickly here.

So we've got a town ID, a town name, the population size, whether it's small, medium, or large, the region. Is it a coastal town? We've got other kinds of information here. Is it residential versus other? Is there a university there? That's kind of interesting. An income flag about whether it's like a more – a town that has more deprivation and poverty or not. And then there are a bunch of different ways of measuring the educational attainment here.

This is younger kids, middle kids. Then this is like the end, what people are doing. All of this is kind of aggregated into something called an education score. I think that's what we'll use for the modeling, the education score, because it is a kind of aggregated number.

So like we could do, for example, a histogram of this, what this looks like. So you see that they have centered this value at zero, this sort of aggregated kind of number. And you can see kind of we've got a bigger hump down here of lower score towns. So there's like more to the – it has a – it's not symmetric here. There's more towns that have something on the lower end than the higher end.

Let's just look at this a little bit more. Let's look at that income flag. I mean I'm sure – I'm 100% sure that will affect this. Let's make a box plot here.

Okay, so cities are separate from towns here. This data set as I understand it largely is about towns. So the cities are kind of just here for comparison. But we certainly do see that lower deprivation towns have the higher education scores, higher deprivation lower.
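
The code on screen is not captured in this transcript. A minimal sketch of the exploration described so far might look like the following. The file location (the Tidy Tuesday release for 2024-01-23) and the column names `education_score` and `income_flag` are assumptions, so check them against the output of `glimpse()` before relying on them.

```r
library(tidyverse)

# Assumed location of the Tidy Tuesday file (week of 2024-01-23)
education <- read_csv(
  "https://raw.githubusercontent.com/rfordatascience/tidytuesday/master/data/2024/2024-01-23/english_education.csv"
)

# One row per town; confirm the column names used below
glimpse(education)

# Distribution of the aggregated education score
ggplot(education, aes(education_score)) +
  geom_histogram(bins = 30)

# Education score by income/deprivation group (cities appear as their own group)
ggplot(education, aes(income_flag, education_score)) +
  geom_boxplot()
```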

So this is just a bit of an exploratory plot. We would typically spend a huge amount of time on this if we were thinking about the full machine learning life cycle. But for now, we're going to look at these higher deprivation towns. It looks like you might have a relationship here maybe, but look how wide that is. So hard to say.

But these are the kinds of interactions and relationships we care about: maybe the size of the town makes a difference in educational attainment if there's more poverty, but otherwise it doesn't. That's the kind of thing we want to learn with a model that is flexible and allows us to learn those kinds of relationships. So let's now start building a model.

Building the model with tidymodels

So I'm going to use tidymodels here. And the first thing I'm going to do is filter. I'm going to take education, and I am going to filter so that I only get the towns here. Income flag, I'm going to say deprivation towns. So now I am just getting the towns.

And I am going to now, I'll just take the things I'm going to use in the model. So education score is the thing that we're going to predict. We're going to try it for any town. Let's predict the education score. Let's definitely take that income flag. Let's take the size flag. Yeah, let's say whether it's coastal. Let's say whether there's a university there. And then let's look for regional differences as well. So these are the things we're going to use in our model.

And then we're going to create a split object here. We have a certain amount of data and we're spending our data budget here, so we're going to split our data into training and testing. A split object keeps track for us of which observations are in training and which are in testing.

So I need to set a seed because this has randomness here. Then we will make a training set and a testing set like that. And then we'll make some folds. So let's say edu_folds. A good default is v-fold cross-validation. The training data set is what we use to create folds for resampling. And we are stratifying.

You notice I stratified both when I created the split and when I did this. Stratification often helps and rarely hurts. So it's a good default option to take here. And I'm going to set the seed again just in case I run this separately.

Okay, this data set isn't huge. If I'm looking at the towns, there's only a thousand. So that would mean when I'm doing resampling, I would train on this, estimate on this, train on this, estimate on this. You know what, I'm going to actually switch to bootstrap resamples because I think that's going to give me a little better estimate of performance here because this is what we're estimating on. So this is like you've got the bias-variance tradeoff when you have cross-fold validation and bootstrap resampling. And in this case, I think I would – this is a little small, I think, so I'm going to go with this to kind of make a different choice there.
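
To make the tradeoff concrete, here is a small sketch on simulated stand-in data (not the real towns), assuming a training set of about 800 rows. With 10-fold cross-validation each performance estimate comes from only a tenth of the rows, while each bootstrap resample holds out roughly 37% of them. Bootstrap estimates tend to have lower variance at the cost of some pessimistic bias, which is the choice being made here.

```r
library(rsample)

set.seed(234)
# Simulated stand-in for a training set of about 800 rows
toy <- data.frame(score = rnorm(800))

cv_folds   <- vfold_cv(toy, v = 10)
boot_folds <- bootstraps(toy, times = 25)

# Average number of rows held out for performance estimation per resample
held_out <- function(rset) {
  mean(vapply(rset$splits, function(s) nrow(assessment(s)), integer(1)))
}

held_out(cv_folds)    # 80: each fold holds out one tenth of the 800 rows
held_out(boot_folds)  # roughly 37% of 800: the rows not drawn into each bootstrap sample
```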

Okay, so that is spending my data budget, deciding which variables I'm using, which I would do based on exploratory data analysis, deciding – creating my training and testing and resampling folds. Now it's time to make my model.
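
As a sketch of the data-budget steps just described, assuming `education` is the Tidy Tuesday data frame read in earlier and that the columns are named as below (the names are assumptions, not shown in the transcript):

```r
library(tidyverse)
library(tidymodels)

# `education` is assumed to be the Tidy Tuesday data frame read in earlier
set.seed(123)
edu_split <- education |>
  # keep only rows whose income flag mentions deprivation towns (drops cities)
  filter(str_detect(income_flag, "deprivation towns")) |>
  # outcome plus the predictors named in the narration
  select(education_score, income_flag, size_flag,
         coastal, university_flag, rgn11nm) |>
  initial_split(strata = education_score)

edu_train <- training(edu_split)
edu_test  <- testing(edu_split)

set.seed(234)
# bootstraps() creates 25 resamples by default
edu_folds <- bootstraps(edu_train, strata = education_score)
```

The seed values are arbitrary; any fixed seed makes the split and resamples reproducible.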

Okay, so let me – I'm going to create a workflow like this. So I'll say workflow. And this is – I'm going to do a pretty simple model here. I am just going to predict the education score with everything that I have. So I'm not doing feature – you know, significant feature engineering.

So if I do look at the training data set, you know, we've got six variables. I'm predicting this one, which is a number, so I'll use regression. And then I'm predicting it with all these flags. So it's all these categorical variables. So I'm just going to use a simple formula. And then I'm going to use random forest here, which is a great default option for problems like this, of this size.

You know, and random forest, you really often don't even need to tune hyperparameters that much as long as you get – have enough trees. So I'm going to set the trees pretty high. And then I will just go with that. You know, these are some just sort of good default options for getting a, you know, a reasonable performance model using the, you know, this kind of data that I have available.

So now what I want to do is use my training data, specifically the resampling folds, to estimate how well this kind of model will perform. So I am going to fit using my workflow, and I'm going to fit to my resamples. It will fit this fairly simple random forest model 25 times, once to each of my resamples, and then I'll get 25 estimates of performance, which will help me understand how well this basic, default kind of model is doing.

So I am going to set a seed again because random forests involve randomness. It's kind of right there in the name, right? And then once I'm done, I'm going to collect the metrics off of this like that.

Okay, now it's fitting. It's fitting my model 25 times. It's done. It's not a huge data set, and the random forest model is reasonably fast. And so here are the results that I get.
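
A sketch of the workflow and resampling fit described above, assuming `edu_folds` holds the bootstrap resamples of the training data. The number of trees is not stated in the transcript, so `trees = 1000` is a placeholder, and the default ranger engine is assumed to be installed.

```r
library(tidymodels)

# Formula preprocessor plus a random forest specification
# (trees = 1000 is an assumed value; the default engine is ranger)
edu_wf <- workflow(
  education_score ~ .,
  rand_forest(mode = "regression", trees = 1000)
)

set.seed(123)
# `edu_folds` is assumed to exist: one model fit per bootstrap resample
edu_res <- fit_resamples(edu_wf, edu_folds)

# RMSE and R-squared, averaged across the resamples
collect_metrics(edu_res)
```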

So this is on the order – if we go back a couple plots to the first plot I made. This one. So this RMSE is on the scale of this. So the root mean squared error is on this scale 2.7. So, you know, this is not the most highest performing best model you've ever heard of, but like that's what we're able – that's how well we're able to estimate the education score of a town based on these inputs that we use, the six inputs.

Here's the R squared here. Again, you know, it is what it is. It is what it is. It's certainly good – certainly we're able to move forward with this and see how we would – like how would we take this model if we decide it's good enough and move forward with it.

So I am going to – so far I've been using the resamples, and so I am going to now fit it to my training data. Let's say I've decided – I've done model development. I've decided this is good enough, and so I am going to make a last fit. So my last fit I take the workflow, and now I do use the split, which is what I was like accidentally doing before. And I can do the same thing here. I can collect the metrics on this last fit.

So now instead of fitting it 25 times, I'm fitting it one time to the training data, and then these metrics are estimated using the testing data. So this is what I got from resampling. This is what I get from the testing data. So the – so it doesn't look like I've overfit here, which of course would be difficult when I use the resampling to – like this gives me a very reliable estimate here. So looking pretty good, like in terms of I have not overfit my data here.

One thing that might be interesting: this particular data is towns in the U.K., and so depending on how these things are related to each other spatially, it might be interesting, instead of doing something like bootstraps, to do a spatial resampling approach. Educational attainment, like I'm sure so much else in the world, exhibits spatial autocorrelation, where things that are close together tend to be more similar to each other. And so if you do random resampling, you can actually get a slightly overoptimistic estimate of performance. We're not going to dig into that today, but that would be an interesting thing to look at here.

Let's just do one more quick plot so we can actually get the predictions from the final fit. So this is the predictions on the testing data, like this. So notice this is the number of elements we had in our testing data, and we can compare that, like this is the predicted value, and then this is the real one. So we can make a quick plot of that if we would like to be able to understand visually what those metrics kind of look like.

Okay, so this is interesting. And so what I can look at here and see is what we're having the hardest time predicting are these guys over here, which are the high education score, like the high educational attainment towns. We have a hard time distinguishing those. Like the spread is wider at the real high education attainment end than at the lower. So you often see this when you're really approaching a real-world machine learning problem that it's more difficult to predict one kind of thing than another kind of thing, and we definitely see that here.
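
The final fit and the predicted-versus-observed plot might be sketched as follows, assuming `edu_wf` is the workflow and `edu_split` is the split object from the earlier steps, and that the outcome column is named `education_score`:

```r
library(tidymodels)

# Fit once on the training data, evaluate once on the testing data
edu_fit <- last_fit(edu_wf, edu_split)
collect_metrics(edu_fit)

# Predicted versus observed scores for the held-out towns;
# points far from the dashed line are the towns the model predicts poorly
collect_predictions(edu_fit) |>
  ggplot(aes(education_score, .pred)) +
  geom_abline(slope = 1, linetype = "dashed", alpha = 0.7) +
  geom_point() +
  coord_fixed()
```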

Deploying the model with Vetiver and Posit Connect

All right, so let's say during – so, so far, what have we done? We've explored the data, we've built a model, and now it's time for us to deploy our model. So far in terms of like what am I doing with Posit Team, I've been all here in Workbench, which is my environment for model development. And now it is time for me to work on deploying that model out of my environment where I work on – where I work and develop and iterate to a production environment.

So I'm going to use Vetiver for this. And the first thing I'm going to do is, oh, I have to extract the workflow from my final fit. So what this gives me is the fitted, trained workflow here. And then I'm going to pipe that to vetiver_model() like this. And I need to give it a name. I'm going to call it UKEDU Random Forest. So like, you know, UK Educational Attainment Random Forest. So let's save this as v, like so.

So this is a bundled workflow that is ready for deployment. And, you know, it's saving a fair amount of metadata here about what this model is like. But I actually, like, could add in my own metadata if I want that as well. Maybe I'll show how to do that in just a moment.

So let us load pins as well. And I am going to create a board. So pins is a way for us to think about storing objects, versioned objects with metadata. And so now I am finally thinking about going somewhere else. So this is the Connect instance that I am – so over here where I was working on Posit Workbench, now I'm talking about publishing. So I'm over here on Connect. So I have connected to Connect. Man, I'm always saying things like that.

But what I'm going to do here is I am going to write my Vetiver object to the board like this. So if I run all this, it is going to pin the model object over on my Connect. So I'm storing a versioned model with metadata about how it was trained. Notice that I'm getting this prompt about how to create a model card, and there's an R Markdown template in Vetiver as a place to start for this. I highly recommend, if you're thinking about the whole machine learning lifecycle, to dig into this and think about model documentation as well.

But if we go over here and I look at my stuff, it's here now. It's over here on Connect. So this is – there's some – it shows me how I might connect to this. It tells me information about how it was stored, what it's like. I can click here to download it here. I can control who has access to it. Let's say I need all our users to be able to control it. I can look at the metadata here. You know, it's got – all this was automatically generated, including what kind of packages I need to make a prediction.

But I can also go up here and I can customize the metadata. So let me say that I want to pass in some custom metadata. Let's say I want to store the metrics from when I trained it. So this – if I take this, this will be the training, the metrics from the testing data. And so if I do all this again – whoops, that's wrong. I have to – metadata is a list, metrics like this. There we go.

Okay. So now I'm going to reload this, and you're going to see that the metadata has changed because now I added some new metadata, which is, you know, these values, RMSE and R-squared. And I can read this back out. Like if I need to get to this model later, I can read it back out.
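
A sketch of the versioning step, assuming `edu_fit` is the result of the last fit. The pin name `"uk-edu-rf"` and the `user.name` prefix are placeholders rather than values confirmed by the transcript, and `board_connect()` needs a reachable Connect server with credentials.

```r
library(tidymodels)
library(vetiver)
library(pins)

# `edu_fit` is assumed to be the last_fit() result; "uk-edu-rf" is an assumed name
v <- extract_workflow(edu_fit) |>
  vetiver_model(
    "uk-edu-rf",
    # custom metadata must be passed as a list
    metadata = list(metrics = collect_metrics(edu_fit))
  )

# Uses the CONNECT_SERVER and CONNECT_API_KEY environment variables,
# or an account already registered with rsconnect
board <- board_connect()
vetiver_pin_write(board, v)

# Later, read the versioned model back; on Connect, pins are
# addressed as "user.name/pin-name" (placeholder shown here)
# v_again <- vetiver_pin_read(board, "user.name/uk-edu-rf")
```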

Okay, so what we've just done is we've versioned our model. And now what we're going to do is we're going to deploy our model. So I'm going to use the function vetiver_deploy_rsconnect() like this. And I'm going to say, okay, go to my board and go to this object here. So it is, I'll just copy it here. This is the object that is a model. So what I'm saying with this is I want you to deploy my Vetiver model. And where is my Vetiver model? It's on this board, and it has this name.

There are other optional arguments here. If you want to say some specific things about the kind of predictions that are made, I'm just going to do one here so that you can see more informative errors. So if you set debug equals true, that lets us set up the API so that it passes the errors from R through to what we see from the API. So if we don't do this, if debug is false, it's safer in terms of how much information about your model gets through to outside of the API. But if you say debug equals true, you get more informative errors. So let me start this.
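
The deployment call might look like the sketch below. The pin name is a placeholder in the `"user.name/pin-name"` form that Connect uses, and the call only works against a real Connect server.

```r
library(vetiver)
library(pins)

board <- board_connect()

vetiver_deploy_rsconnect(
  board,
  "user.name/uk-edu-rf",             # placeholder: "<Connect user name>/<pin name>"
  predict_args = list(debug = TRUE)  # forwarded to vetiver_api() for more informative errors
)
```

Leaving `debug` off is the safer choice once the API is stable, since less information about the model leaks through error messages.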

And now we're finally at a point where we're thinking about the packages that we're using. So this is using renv under the hood. It's finding 83 dependencies that I need for this model. And if I go back over here, it's now using Package Manager.

Let me go back over here so that we can look at this and just talk a little bit about what's happening here with the packages. It's finding exactly the right versions of the packages so that I can be confident that my model, once deployed, uses the versions that I expect. This can be a common failure mode in deploying models: you can lose reproducibility because your packages are not really locked down and the same in both places.
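
The transcript does not show how the repository is configured. As a rough sketch of the same idea in a project of your own, assuming the public Posit Package Manager and a dated snapshot URL (an organization's own Package Manager URL will differ), packages can be pinned like this:

```r
# Assumed repository: the public Posit Package Manager, frozen at a date
# so that every install resolves to the same package versions
options(repos = c(CRAN = "https://packagemanager.posit.co/cran/2024-04-24"))

# Record the exact package versions used by the project in renv.lock
renv::snapshot()
```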

Notice that not only do I have these guarantees around reproducibility and using the right packages because I'm in this unified environment where I have all these things together. But also, that was pretty fast. That was pretty fast. It is really deployed now. Here it is. Here it is over here.

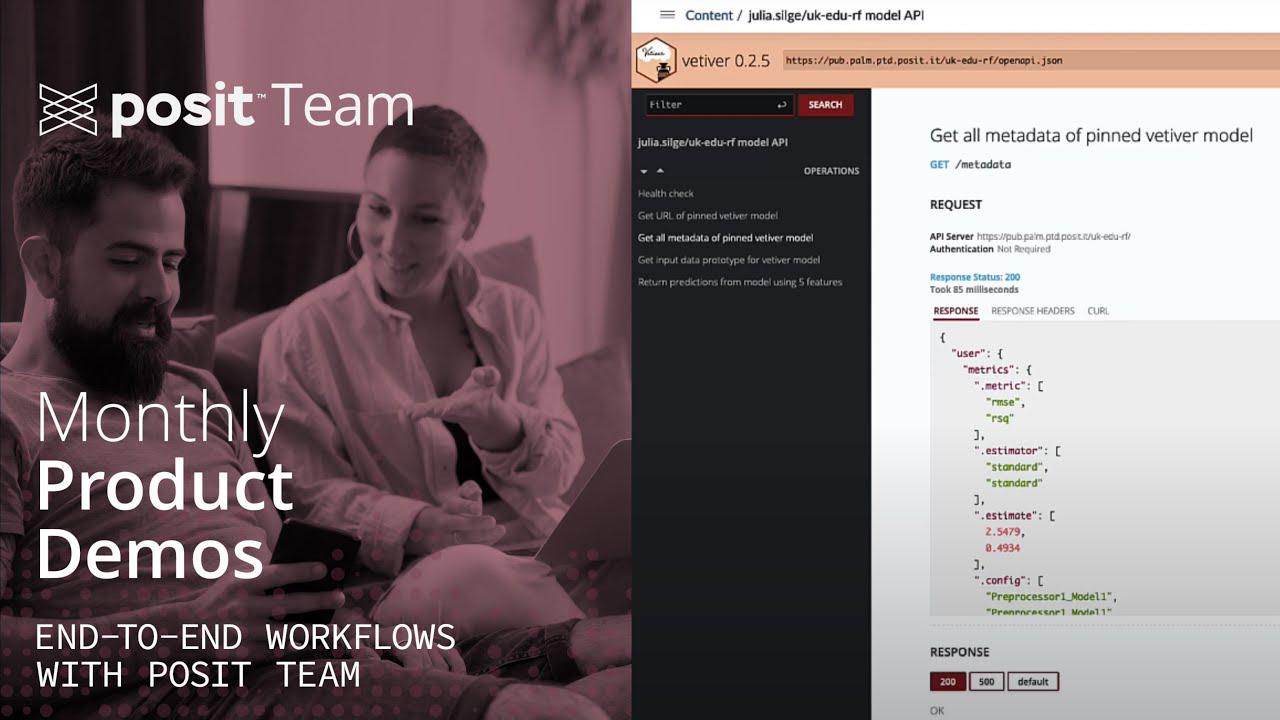

So I can do things like, let's say, uk.edu.rsa here. So I'm making a nice URL for it. Again, let me say that all users can get to it, but login is required. Let me save that. And then I can interact with this here. If I click this, it'll be in its little standalone window. And we've got all these API endpoints that were automatically created for me. We've got a ping endpoint that tells me whether the model is online or not, the URL, that same metadata that we had before. I can get it through the API in case that's a better fit.

The input data prototype is telling me what's the shape of the data that needs to go into the model. Notice that by default, we don't put possible values here, but that's actually something you can also control with an optional argument. Here's where the really big thing happens here. So this is the predict endpoint. These are all kind of supporting, and then this is the main one here.

Okay, so let's say higher deprivation towns, small towns. Okay, so higher deprivation towns. And this one's capitalized, yes. Okay, small towns. Let's say coastal. Let's say university. And let's say northwest, like this. Okay, so if we try this, it's going to get me a prediction. So notice this is on the lower end here. If we are looking at a lower deprivation town, it's quite a bit on the higher end here.

Think of what we're looking at right now as visual documentation for your model. This is the kind of thing you can use when you're collaborating with a software engineer coworker who needs to know how to generate predictions from your model. Don't think of this as like a Shiny app, but do think of this as a way for you to be a good collaborator when it comes to the MLOps lifecycle, so that you can give your software engineer coworkers what they need to integrate predictions into the rest of your system.

So this is just a regular API. So depending on who this is made available to, anybody inside of your organization can access this from Python, from JavaScript. It is an API, but you also can access it from R. So let's talk about how you do that as kind of this last little thing.

So let me get the URL here. So I'm going to create a URL, which is just a string. So this is the main URL, and then let's say I want to get predictions from it. So I'm going to use the predict endpoint. I'm going to create an endpoint. Let me create a Vetiver endpoint object with the URL, and then we can look at what that looks like here. So this is a model API endpoint for prediction. So I have not called it yet, so let me do that right now.

So we predict using this endpoint, using the predict function, just like it was a model that was in memory. And so instead of putting the model here, we will say slice, let's say slice sample of the testing data and n equals 10 like this. If we had the model here, we could predict on it. So this is predicting with the model that's in memory in R right now that I have. I can get some answers here.

Now I can actually also predict on the endpoint, which is so convenient and nice. Now I think since I set this to you have to be logged in, this that I'm about to do is going to fail because it's like you're not logged in. You need to ‑‑ I'm not going to give you access to this. So instead what I can do is I can use authentication here. So I'm going to create something called my connect authentication.

And I am going to use httr. I'm going to add headers that have my authentication here. If I remember right, this might take me a sec to do. I have an environment variable. I'm not going to show you what it is, but I have an environment variable in here for authentication in this .Renviron file. And I'm going to get it. It's called CONNECT_API_KEY. Like that. So I'm not going to print this out because I don't want to put my API key on YouTube. Talk about a security fail.

Now with authentication, I'm going to predict on that endpoint, and it is calling the API. It's taking my data from R, converting it to JSON, sending it to the API, and then sending the predictions back. And so I have taken a different sample, so the values are different, but we are getting these predictions here as well.
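
A sketch of calling the deployed API from R with authentication. The URL is a placeholder, the column names and category labels in `new_towns` are assumptions based on the values typed into the visual documentation, and the API key is read from the environment so it is never printed.

```r
library(vetiver)
library(tibble)

# Placeholder URL: use the content URL shown in Connect, followed by /predict
endpoint <- vetiver_endpoint("https://connect.example.com/uk-edu-rf/predict")

# One new town; column names and labels are assumed, not confirmed by the transcript
new_towns <- tibble(
  income_flag     = "Higher deprivation towns",
  size_flag       = "Small Towns",
  coastal         = "Coastal",
  university_flag = "University",
  rgn11nm         = "North West"
)

# Connect expects an "Authorization: Key <api key>" header
connect_auth <- httr::add_headers(
  Authorization = paste("Key", Sys.getenv("CONNECT_API_KEY"))
)

# Extra arguments to predict() on an endpoint are passed on to httr::POST()
predict(endpoint, new_towns, connect_auth)
```

In the demo the new data is a random sample of the testing set, for example `slice_sample(edu_test, n = 10)`, rather than a hand-built row.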

Wrapping up

So just to highlight again, I did model development in Posit Workbench. I published to Posit Connect, and if I go back to content and look at what I have here, these are my things. I have two artifacts here, the binary model object together with its metadata and the deployed API. And especially when it came to deployment, being able to use Posit Package Manager solved some reproducibility and speed challenges that we often get with maintaining correct versions.

All right. We did it. We started with this data about educational attainment in the U.K. We spent a little bit of time exploring that data to understand it. Then we moved into model development. So these pieces of EDA and model development happened on Workbench, and using a Workbench that's easily connected to these next steps really sets us up for success. We then deployed the model. We were managing a couple of artifacts, including the model binary and the API that serves predictions using that model binary. We published those to Connect, which were nicely authenticated to each other, so we had this smooth experience. And then throughout this process, we used Posit Package Manager, which lets us have confidence about exactly what packages are being used.

It gives us confidence about reproducibility and also makes installation really fast, which sometimes can be a struggle if you've ever spent a long time building a Docker container. Now, the steps that I showed you here use open source software. A great thing about this kind of approach is that you're not locked into one set of professional tools or one vendor, but as someone who uses our tools, I do think we solve a lot of problems that can be quite challenging. I hope this was helpful, and I'll see you next time.

Thank you so much, Julia. Now we're going to go jump over to our live Q&A, where Julia will join us in that Q&A room. YouTube should automatically push you over there, but if for any reason that doesn't happen, the link to the Q&A room will be in the YouTube details below, and we'll put it into the chat right now, too. We'll see you over there in just a second.