tidymodels: Adventures in Rewriting a Modeling Pipeline - posit::conf(2023)

Presented by Ryan Timpe

An overview of the benefits unlocked on our data science team by adopting tidymodels.

Data science sure has changed over the past few years! Everyone's talking about production. RStudio is now Posit. Models are now tidy. This talk is about embracing that change and updating existing models using the tidymodels framework. I recently completed this change, letting go of our in-production code and re-envisioning it with tidymodels. My team ended up with a faster, more scalable pipeline that enabled us to better automate our workflow and increase our scale while improving our stakeholders' experiences. I'll share tips and tricks for adopting the tidymodels framework in existing products, best practices for learning and upskilling teams, and advice for using tidymodels packages to build more accessible data science tools.

Materials: https://www.ryantimpe.com/files/tidymodels_adventures_positconf2023.pdf

Presented at Posit Conference, between Sept 19-20 2023. Learn more at posit.co/conference.

Talk Track: Tidy up your models. Session Code: TALK-1082

image: thumbnail.jpg

Transcript

This transcript was generated automatically and may contain errors.

My name is George Stagg. I'm a software engineer at Posit, and today I'm going to be chairing this session, which is named Tidy Up Your Models. So the first person we have today to speak to you is Ryan Timpe, and I'm going to pass straight on to him to get started. Thank you.

Thank you. So last night at the reception, someone ran up to me, super excited to meet me. I was like, yes, I made it, I'm nerd famous. But they thought I was Hadley Wickham. So just pointing that out there. I'm not Hadley Wickham. My name is Ryan Timpe. I am a lead data scientist at the Lego Group. My team and I build models to help our business understand how all of our marketing, our trade promotions, our product launches, all that impacts our sales and our brand health. I've been at the Lego Group for almost five years, and over that time, the field of data science has really changed. As you might have noticed, RStudio is now Posit. My managers are always talking about production, and if you checked out the title of my talk before you sat down, our models are now Tidy. And this change can be challenging, especially when it impacts our work, but it can also be kind of fun and rewarding to rethink how you do your day-to-day job.

So this talk is about that: some of the recent fun and challenges I had while adapting to the changing world of data science over at the Lego Group. So let's start with that first adventure. And I'm going to call my changes adventures. It gets more fun. So when I joined the Lego Group, my first job was to build an R package to do our modeling better. And I did that. I built a package. It was simple, but it worked, and it answered some important questions for our business.

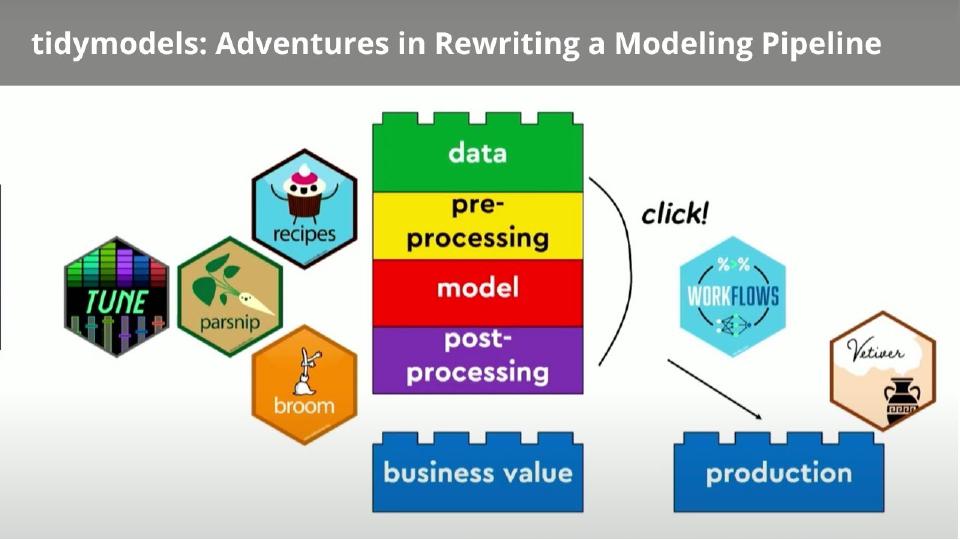

For no special reason at all, I'm going to represent that model on the screen as a red Lego brick. No reason. And then over the four years, we had to do more with this package. We had to add ways to add more data to the package and the model. We had to add more pre-processing. We had to add more post-processing. And then we had to do more things to add business value to make sure our stakeholders were actually using the models and driving change in the organization. Then we kept doing this over and over and over, more data, more pre-processing functions, more post-processing functions. And eventually, our pretty simple model and package looked a lot more like this. And this happened because we grew our package organically. It did everything we needed it to do. It ran some pretty awesome models, but it was getting very difficult for my team and I to navigate this and have this package grow with us.

So as we needed to make more changes and add more things, we needed to find all those connections and figure out where to put each new step. And we came to the point where we weren't effective data scientists and we needed to make a change. And so four years later, we had matured, we knew exactly what we needed out of our package, and it was time to start over. So to do that, we identified tidymodels. tidymodels is a framework that adds organization to your models. So it takes that big messy pile of bricks and it stacks them. I had never used tidymodels before, and I needed to use it on my job. So to help me, I used this book by Max and Julia, who you will see on the stage in a few minutes.

What tidymodels is and isn't

So today's talk, it's not a tutorial. I'm not going to show you much code. I'm not going to show you how to really get started with tidymodels. But after this conference, and if you're still super excited about tidymodels, definitely check out this book, check out these websites, and it will have everything you need to get started. Because that's what I did. I took this book, I followed it cover to cover, took all those examples, applied them to my work at the Lego Group, and it was a really good way to get started with tidymodels and to get started rewriting my package.

The tidymodels packages and what they solved

So tidymodels itself is a collection of packages. And each of those packages really helped solve some big problem I was having with my models. So if you look at that red pre-processing, or sorry, the yellow pre-processing brick, recipes was a huge help here. So pre-processing is a step that takes your raw data that might be human-readable, adds feature engineering, and gets it ready to be machine-readable for your model. In my old package, the one with the big pile of Lego bricks, I had manually written a bunch of functions using dplyr and tidyr to do this pre-processing. And then every time I needed to access my data at different parts of the modeling process, I'd call that function, and it would run through all the pre-processing steps. But as my data sets got bigger and bigger, this became a lot of overhead. It took way too long to run. My models were taking a lot of time to run. It was also getting very difficult to control for data leakage at each of those steps. So pre-processing, sorry, recipes solves that: you just list out your instructions in one spot, and then the tidymodels packages will call that whenever needed, whenever the models need your data.
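The talk shows no code, so the following is only a small Python sketch of the idea described above, not the speaker's package or the recipes API. It assumes one way to control data leakage: estimate a pre-processing step's statistics once, from the training data only, and then reuse them on any later data. The class `NormalizeStep`, the column name `spend`, and the data are hypothetical; the method names `prep` and `bake` are borrowed from the recipes verbs of the same name.

```python
from statistics import mean, stdev


class NormalizeStep:
    """Hypothetical pre-processing step: center and scale one numeric column."""

    def __init__(self, column):
        self.column = column
        self.center = None
        self.scale = None

    def prep(self, training_rows):
        # Estimate the statistics from the training rows only.
        values = [row[self.column] for row in training_rows]
        self.center = mean(values)
        self.scale = stdev(values)
        return self

    def bake(self, rows):
        # Apply the stored training statistics to any rows (training or new).
        if self.center is None:
            raise RuntimeError("call prep() on training data before bake()")
        return [
            {**row, self.column: (row[self.column] - self.center) / self.scale}
            for row in rows
        ]


# Made-up data for illustration only.
training = [{"spend": 10.0}, {"spend": 20.0}, {"spend": 30.0}]
new_data = [{"spend": 40.0}]

step = NormalizeStep("spend").prep(training)
baked_training = step.bake(training)
baked_new = step.bake(new_data)  # scaled with the training mean and sd
```

Because the new rows never contribute to `center` or `scale`, nothing about them leaks into the fitted pre-processing.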

Let's take a look at the red brick right here. And parsnip was a huge help here. So this red brick is the core chunk of code in my package that runs the modeling algorithm. In my old package, this chunk of code was deeply nested in a series of functions. Or, in my Lego analogy, that one red brick was buried deep in a pile of other bricks. And so every time I needed to call or make any edits to that model, either small edits or big edits, I also had to change every single function that relied upon it. parsnip solves this by providing a uniform syntax for all the different modeling types in R, whether it's XGBoost or it's a Bayesian model. And with that, it helps you make sure your modeling process is modular. So now I can swap out that red brick with other features or swap out the modeling algorithm entirely and not have to worry as much about the data and the pre-processing and the post-processing and all the other steps. There are only very minor changes elsewhere when I swap out that red brick.
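As a rough illustration of that modularity (a Python sketch under assumed names, not parsnip itself), the two toy models below share one `fit`/`predict` interface, so the surrounding pipeline code does not change when one is swapped for the other. `MeanModel`, `LineModel`, `run_pipeline`, and the data are all hypothetical.

```python
from typing import Protocol, Sequence


class Model(Protocol):
    """The uniform interface every model brick is assumed to expose."""

    def fit(self, x: Sequence[float], y: Sequence[float]) -> "Model": ...

    def predict(self, x: Sequence[float]) -> Sequence[float]: ...


class MeanModel:
    """Baseline model: always predicts the training mean of y."""

    def fit(self, x, y):
        self.mean_ = sum(y) / len(y)
        return self

    def predict(self, x):
        return [self.mean_ for _ in x]


class LineModel:
    """Least-squares straight line with a single predictor."""

    def fit(self, x, y):
        x_bar = sum(x) / len(x)
        y_bar = sum(y) / len(y)
        sxx = sum((xi - x_bar) ** 2 for xi in x)
        sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
        self.slope_ = sxy / sxx
        self.intercept_ = y_bar - self.slope_ * x_bar
        return self

    def predict(self, x):
        return [self.intercept_ + self.slope_ * xi for xi in x]


def run_pipeline(model: Model, x, y, new_x):
    # Everything around the model stays the same, whichever model is passed in.
    return model.fit(x, y).predict(new_x)


# Made-up data for illustration only.
x = [1.0, 2.0, 3.0, 4.0]
y = [2.0, 4.0, 6.0, 8.0]

baseline_prediction = run_pipeline(MeanModel(), x, y, [5.0])
line_prediction = run_pipeline(LineModel(), x, y, [5.0])
```

Swapping the "red brick" here is a one-argument change to `run_pipeline`, which is the property the paragraph above describes.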

That was a huge help. tune, again, was a huge help. My first time around, I had manually written a simulated annealing algorithm to help me do my hyperparameter tuning, and that was a really good experience for me. I got to learn how that algorithm works. But this time around, I didn't want to do that again. It was a lot of work, and I already knew how to do it. I didn't want to manually write that a second time, so I just used tune. For post-processing, I used broom, which takes all your raw model output and puts it into a tidy format, so you can grab your residuals easily. You can grab your coefficients very easily. And probably my favorite, though, is workflows, which takes a bunch of those bricks and clicks them together. Now you have one single object that you can use for your modeling process. And that object can then be passed into other functions to help drive business value, or it can even be passed into a production environment, and vetiver can help here.

So in the end, I had completely rewritten all my code. My package still did the same thing, though. It just did it in a much more organized way, and it was a lot easier for my team and I to now grow this package and evolve with it. So now, as we get more requests from our stakeholders and we have to add more things to the package, we know exactly where in this pipeline they go.

Surprise benefits of the switch

But my favorite part is that this is all the advertised benefits of tidymodels. This is what the book will tell you. As my team and I started using this more and more, we discovered a bunch of new benefits that were not told to us. They were all the surprise benefits from using tidymodels. So this brings us to our second adventure. And as you know, sequels are always better than the original. No laughing.

So we had rewritten my modeling package. It was a lot more organized now. We discovered all these new benefits. This is going to sound weird, but I was really helped by what tidymodels could not do for me. So I'm a data scientist, but I'm also a Lego data scientist. So a lot of my value as a lead comes from knowing how the world of data science fits into the Lego business. And tidymodels really helped me do this better. So wherever I could, I used the open source tools available with tidymodels. And there are, of course, more packages than this, but for simplicity, I used tidymodels packages wherever I could, and those functions wherever I could. And by doing this, I saved a bunch of time, and I got to use that time to focus on my expertise, which is a little bit of modeling, but mostly it's that I know everything about Lego, and I know how Lego can use these models.

So I got to spend all this extra time I earned from using tidymodels to focus on what I could add to these models to really help the Lego Group. So for example, if you look at pre-processing again, recipes is essentially a bunch of these step functions that you can pipe together to transform your data. And these are step functions that are used by data scientists everywhere. For example, step_normalize() will take your numerical data and give it a mean of zero and a standard deviation of one, and these are very common things that data scientists everywhere use. And this freed up my time to focus on custom step functions, because in my domain, I have some very specific transformations I have to use. I'm in marketing, so we have to add carryover effects to our data, we have to add diminishing returns, and these are more specific to my domain. So I used all my free time, well, not free time, but the extra time that I earned from using the open source tools, to make my custom step functions. And this really added the Lego twist to my new modeling package.
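To make those three kinds of steps concrete, here is a minimal Python sketch. The talk does not show the actual transformations, so the specific forms are assumptions: a geometric decay for carryover and a simple saturating curve for diminishing returns, both common choices in marketing models. The function names, the `decay` and `half_saturation` parameters, and the spend figures are all hypothetical.

```python
from statistics import mean, stdev


def carryover(spend, decay=0.5):
    """Geometric carryover (assumed form): each period keeps `decay` of the
    previous period's accumulated effect."""
    effect, carried = [], 0.0
    for s in spend:
        carried = s + decay * carried
        effect.append(carried)
    return effect


def diminishing_returns(effect, half_saturation=100.0):
    """Saturating curve (assumed form) for non-negative inputs: the response
    reaches 0.5 at `half_saturation` and flattens as the input grows."""
    return [e / (e + half_saturation) for e in effect]


def normalize(values):
    """Center to mean 0 and scale to a sample standard deviation of 1."""
    center, scale = mean(values), stdev(values)
    return [(v - center) / scale for v in values]


# Steps are applied in order, similar in spirit to piping step functions.
steps = [carryover, diminishing_returns, normalize]

weekly_spend = [100.0, 0.0, 0.0, 50.0]  # made-up data
feature = weekly_spend
for step in steps:
    feature = step(feature)
```

The generic step (`normalize`) is the kind of thing an open source library already provides, while the two domain-specific steps are where custom work would go.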

And I found opportunities to add this Lego value add everywhere across this modeling pipeline. For example, my data: our team has thousands of possible data series we could put in our models. You can't put that much data in your models. So we had to spend a lot of time figuring out which data sources belong in our models, and I was able to write tools to help us do that better. The modeling process: I could spend more time making sure my model was better specified, making sure our residuals were behaving better. Post-processing is huge for us. We have to take all of our raw model output and make it make sense for the organization, like calculating media uplifts and calculating return on investment coming out of the models. We have a lot of functions here. I got to spend a lot of time on this very important part of the modeling process. Business value: I had more time to work with our stakeholders by leveraging the open source tools. And then on the flip side, when you leverage these open source tools, you get their help with all the bugs. You outsource your bug squashing, because you're going to hit fewer errors in the process. And when you do hit errors, there's a lot more documentation out there on how to solve those errors. Whereas if you're writing your own manual modeling functions, it's a lot harder to solve some of these errors. So by leveraging this open source community and tools whenever I could, I just became a much better Lego data scientist.

Speed gains

Another big benefit I saw was speed. So no one else is going to stand on the stage and tell you how fast tidymodels is. Julia warned me about that. It adds overhead. But by making the swap, we actually unlocked a lot of speed in a few different ways, and I want to talk about those. First, our process. Since I wasn't running my own dplyr and tidyr code over and over for my data processing, my functions just ran a lot faster. And that was very noticeable to us. And it was a huge win when we discovered that. Second, our algorithm. Originally, we used Bayesian models, and we had manually written Stan code to do that. That was really awesome, because we got to customize every piece of that code or that model. But rstanarm works a lot better with parsnip in tidymodels. And that's a bunch of Stan models prewritten and precompiled by experts out there. So we leveraged those models, that algorithm, instead this time around, and that really sped us up.

And then just our ways of working were a lot faster. So with the swap to tidymodels and the workflows process, we were now able to keep every single part of the modeling process and development process on the same platform. We can basically do our entire development in one R notebook. And that really just saved our data scientists tons of time iterating over models and developing models, because we could move a lot more quickly, without switching environments and everything.

So I can quantify this change. We had a batch of models for one project that used to take about 30 minutes to run from start to finish. Now they take just 60 seconds. That's a 30-fold increase for us and a really measurable increase that helps our team work better.

If we add that into the earlier example of the Lego value add, we have a pretty awesome list of benefits. As you know, though, sometimes benefits come with costs. This was no exception. We did have a few new hurdles with the tidymodels swap. And so we had to kind of rethink our ways of working. We had been used to solving some specific problems one way for four years, and we had to come up with some new ways. One example is that algorithm. When we swapped from our manually written Stan code to rstanarm, we gave up a lot of control. With Stan, we could customize really every single prior distribution type based on a variable type. And when we swapped to rstanarm, we were a lot more limited in what we could do. But whenever we came across these types of challenges, we were able to kind of step back and ask: can we solve this problem that we're used to solving one way, a new way? And every time we came across these problems, the answer was yes, we could work with this. And by far, everywhere, the benefits of the tidymodels swap really outweighed the costs. So no regrets there.

The stealth adventure: updating a live product

And this brings us to our final part. So we had rewritten our code. It was a lot more organized. We had all these new benefits that we weren't expecting. There was a twist, though. I was making all these changes to a live product. So we had stakeholders we were working with in real time. So this is my stealth adventure. It's my stealth adventure because my original goal was to sneak into our product in the middle of the night, swap out the old models for the new ones, and hope no one noticed. Kind of like this. I'm Tom Cruise coming with my tidymodels. And we could have succeeded with this. We could have swapped out our old models for the new ones, and no one would have known the difference. But along the way, we noticed that all the benefits that my team experienced with tidymodels, we could actually share those benefits with the stakeholders. So rather than sneak in and swap out the models, we could actually get them just as excited as we were.

And we did this in a few different areas. One is that we could build better models now. tidymodels is written by modeling experts across the globe, experts who are better at modeling than I am. So I'd be silly to ignore their expertise. So once we knew that with the new package we could reproduce our old results, and that was an important step for us, we then took it a step further and used all the tools that tidymodels offered us to build better models. So we got better residuals. We could get better fits. And so now our stakeholders did see some changing numbers. But it was for a good reason. And we could talk them through those changing numbers and sell them on it.

tidymodels enabled faster, more frequent updates. So, sorry, since our data scientists could work faster, we could work faster with our stakeholders. So as long as our stakeholders keep on feeding us new data and new ideas, we can work with them almost in real time to take their ideas and data, spin it through the models and see what happens. Is this change worth it or not? And this has improved our stakeholder engagement so much.

The ability to automate and to put into production. In our old modeling package, production still had some very manual steps. I was getting my fingers in all those steps. But now with tidymodels, it's a lot easier to do that. So we can work with our engineers, work with that workflows object, automate our data and flows, pass them that object, and they can help us put into production a lot more easily than we could before.

Probably my favorite as a data scientist is that we can now answer more complex questions with these tidymodels. So with tidymodels, it's a lot easier to make assumptions and change assumptions on the fly. And so, for example, our stakeholders love what-if scenarios. So what if we had doubled social media spend last Christmas? Or what if we had moved this promotion a few weeks earlier? What would have happened? So now we can go into our modeling pipeline, go to that green brick at the top, shake around some data, and it will flow through all the rest of the modeling steps, even including the trained model, and tell us exactly what the model thinks would have happened in these scenarios. So being able to give almost real-time simulations and optimizations to our stakeholders was a huge win for us.
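A what-if scenario of this kind can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the speaker's pipeline: the model is a toy straight-line fit, the data are made up, and `fit_line`, `predict`, and `what_if` are hypothetical helpers. The point is only that the input data are edited while the already-trained model is reused without refitting.

```python
import copy


def fit_line(x, y):
    """Least-squares straight line; returns (intercept, slope)."""
    x_bar = sum(x) / len(x)
    y_bar = sum(y) / len(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


def predict(model, x):
    intercept, slope = model
    return [intercept + slope * xi for xi in x]


def what_if(rows, week, multiplier):
    """Return a copy of the history with spend scaled in one week."""
    scenario = copy.deepcopy(rows)
    for row in scenario:
        if row["week"] == week:
            row["social_spend"] *= multiplier
    return scenario


# Made-up history for illustration only.
history = [
    {"week": "W49", "social_spend": 10.0, "sales": 120.0},
    {"week": "W50", "social_spend": 20.0, "sales": 140.0},
    {"week": "W51", "social_spend": 30.0, "sales": 160.0},
    {"week": "W52", "social_spend": 40.0, "sales": 180.0},
]

# Train once on what actually happened.
model = fit_line(
    [row["social_spend"] for row in history],
    [row["sales"] for row in history],
)

# Change the inputs, then reuse the trained model without refitting.
baseline = predict(model, [row["social_spend"] for row in history])
doubled = what_if(history, "W52", 2.0)
scenario = predict(model, [row["social_spend"] for row in doubled])
uplift = sum(scenario) - sum(baseline)
```

In a real pipeline the scenario data would also pass through the same pre-processing steps as the training data, and predictions far outside the observed spend range deserve extra caution.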

And then finally, this is my day-to-day job, so I'm still learning these new... I'm still unlocking new value with our stakeholders. And so ask me again in a few months. I'll probably have some brand-new lists of benefits to share with you. So that's it. Thank you so much. This was, of course, a talk about tidymodels, but it was also really about embracing change in data science. That change can be scary, but we came out so much better for it, and both our stakeholders and I benefited. So thank you. And if you want to talk about tidymodels afterwards, find me.