Open Source Property Assessment: Tidymodels to Allocate $16B in Property Taxes - posit::conf(2023)

Presented by Nicole Jardine and Dan Snow

How the Cook County Assessor's Office uses R and tidymodels for its residential property valuation models.

The Cook County Assessor's Office (CCAO) determines the current market value of properties for the purpose of property taxation. Since 2020, the CCAO has used R, tidymodels, and LightGBM to build predictive models that value Cook County's 1.5 million residential properties, which are collectively worth over $400B. These predictive models are open-source, easily replicable, and have significantly improved valuation accuracy and equity over time. Join CCAO Chief Data Officer Nicole Jardine and Director of Data Science Dan Snow as they walk through the CCAO's modeling process, share lessons learned, and offer a sneak peek at changes planned for the 2024 reassessment of Chicago.

Materials: https://github.com/ccao-data

Presented at Posit Conference, Sept 19-20, 2023. Learn more at posit.co/conference.

Talk Track: End-to-end data science with real-world impact. Session Code: TALK-1147

image: thumbnail.jpg

Transcript

This transcript was generated automatically and may contain errors.

We are so excited to be here. I'm Nicole Jardine. I'm the Chief Data Officer for the Cook County Assessor's Office. Soon I'll introduce Dan, our Director of Data Science.

And right off the bat, does anyone here currently live in Chicago, or has anyone ever lived in the Chicagoland area? Oh wow, okay, so about 10% of you, okay. We're excited to answer questions. We love to talk about this topic. We have time at the end to talk about anything related to Chicago home ownership.

Today Dan and I are going to talk about something that affects equity, housing affordability, and generational wealth for a couple million people here in Chicago and the surrounding suburbs. And that thing is property taxes.

Property tax bills here in Illinois depend in part on the government's assessment of how much your property is currently worth. Just to set the stage a little bit, in Chicago in 2015, the average home was assessed as if it was worth roughly $237k, and the average homeowner paid about $3,600 in property taxes.

The tax divide problem

The problem was identified using something called a ratio study. The assessor's job in Illinois is to have assessments that follow the market. Every couple of years, the assessor assesses property values and adjusts them so that they're roughly in line with where the real estate market has actually gone. And in a ratio study, you take the properties that have actually sold, which is roughly 5% or so of homes here in any given year, and you compare the estimated value of each property to its actual sale price.

And this is a New York Times graphic, we've done some light modifications, and that ratio should be roughly 1. And what the New York Times and Chicago Tribune and others have found is that in the past, an average home was right around that 1 mark. That's about where it should be.

But what about other homes? It's really important to think about homes on the other sides of the distribution, not just the average homes. So here's an example of a modest home on the south side of Chicago. Let's say in 2015, it sold for about $50K. On the other end of that distribution, we have a nice home on the North Shore that maybe sold for more like a million dollars. And the fundamental question is, what's that assessment ratio? Is it going to be close to 1?

So what that would mean, just to unpack that ratio a little more, if that 50K home actually was assessed at 75K, that would mean a ratio of 1.5. And if that home that sold for a million dollars was assessed as if it was worth 800K, that would mean a ratio of 0.8.
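As an editorial illustration of the arithmetic just described, here is a minimal Python sketch of the assessment ratio. The function name `assessment_ratio` is an assumption made for this example, and this is not the CCAO's code (their production model is written in R).

```python
def assessment_ratio(assessed_value: float, sale_price: float) -> float:
    """Ratio of the assessor's estimated value to the actual sale price.

    A ratio above 1 means the home was over-assessed relative to its sale;
    a ratio below 1 means it was under-assessed.
    """
    if sale_price <= 0:
        raise ValueError("sale_price must be positive")
    return assessed_value / sale_price


# The two examples from the talk.
modest_home = assessment_ratio(assessed_value=75_000, sale_price=50_000)
north_shore_home = assessment_ratio(assessed_value=800_000, sale_price=1_000_000)

print(f"Modest home ratio: {modest_home:.2f}")
print(f"North Shore home ratio: {north_shore_home:.2f}")
```

The first ratio comes out to 1.5 and the second to 0.8, matching the figures in the talk.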

Here's what the New York Times actually published. There was a higher ratio of assessments to sale price for the homes with lower sale values. So these homes that are on the more affordable side of the price spectrum were historically, according to this reporting, over-assessed. That can lead to over-taxation. And on the flip side, these higher-priced homes were being under-assessed, leading to under-taxation. That's the problem.
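To make the pattern concrete, here is a small Python sketch of a ratio study grouped by sale price. The sales data below is entirely hypothetical and invented for illustration (it is not Cook County data), the helper name `median_ratio_by_group` is an assumption, and this is not the method used by the newspapers or the CCAO.

```python
from statistics import median

# Hypothetical (sale_price, assessed_value) pairs. NOT real Cook County data.
sales = [
    (50_000, 75_000), (60_000, 81_000), (80_000, 100_000),
    (240_000, 235_000), (250_000, 255_000), (260_000, 260_000),
    (900_000, 765_000), (1_000_000, 800_000), (1_200_000, 1_020_000),
]


def median_ratio_by_group(sales, n_groups=3):
    """Sort sales by price, split into equal-sized groups, and return
    the median assessment ratio of each group, cheapest group first."""
    ordered = sorted(sales)  # tuples sort by sale price first
    size = len(ordered) // n_groups
    medians = []
    for g in range(n_groups):
        start = g * size
        # The last group absorbs any leftover rows.
        end = (g + 1) * size if g < n_groups - 1 else len(ordered)
        group = ordered[start:end]
        medians.append(median(assessed / price for price, assessed in group))
    return medians


low, middle, high = median_ratio_by_group(sales)
print(f"low-price group:  {low:.2f}")
print(f"mid-price group:  {middle:.2f}")
print(f"high-price group: {high:.2f}")
```

In this made-up data the median ratio falls as sale price rises, which is the shape of the curve the reporting described: ratios above 1 at the low end and below 1 at the high end.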

The Chicago Tribune referred to this as the tax divide. And that tax divide is why we're here. So again, the issue, according to the Tribune, as they stated it, is Cook County failed to value homes accurately for years. And the result was a property tax system that harmed the poor and helped the rich. The problem lies with the fundamentally flawed way that the County Assessor's Office valued property.

So what's the cause? It could actually be a number of interesting causes. It could be issues with the characteristics data that the Assessor's Office uses to estimate property values. It could be issues with the way other offices actually adjust assessed values. But we're here today because we want to talk about one of those issues, and that one is the modeling.

So recall that the Assessor's Office uses sales, again, roughly 5% of homes in a given year, to estimate property values for everyone. In the past, this was done with regression modeling. This is actually a snippet of code. It was closed-source SPSS with OLS-based models, and, you know, we're talking 50,000 lines of manual fixed effects, property-specific overrides, and more.

After the Tax Divide was published, voters elected a new County Assessor, Fritz Kaegi, and Fritz started the Data Department. The Data Department's first Chief Data Officer was Rob Ross. I'm the current Chief Data Officer, and Dan and William and I have been on this journey together for about four years. And we're here because tech people and academics recognized that this is a data and a modeling problem, and that maybe we could actually help solve it. So our goal was to improve the model and transparency, and right now I'm super excited to hand it off to Dan, who's going to tell us about the model.

Rebuilding the model with R and tidymodels

All right, hello, everyone. I am Dan Snow. I'm the Director of Data Science at the Assessor's Office. And so Nicole has described the problem, basically lower-value properties broadly were over-assessed, higher-value ones broadly were under-assessed. I am going to talk about what we did to fix it.

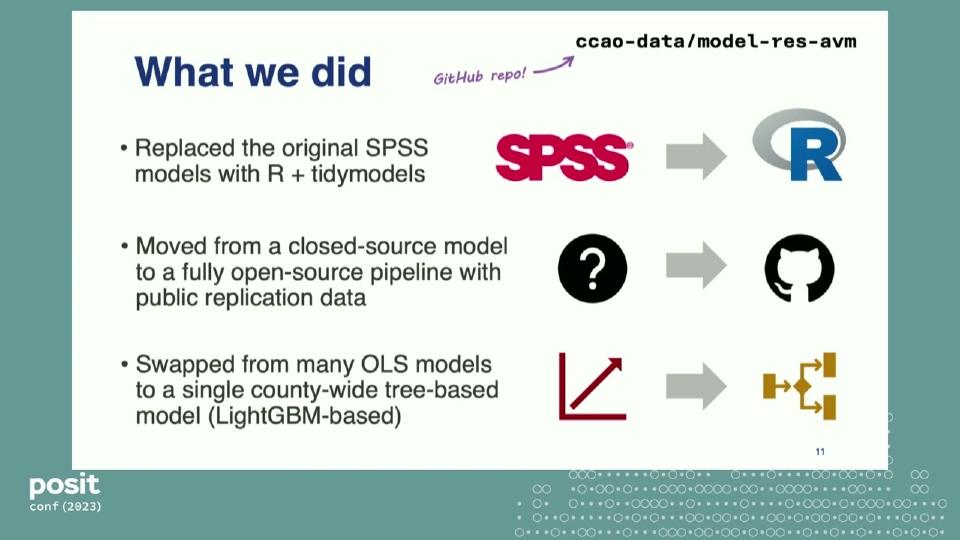

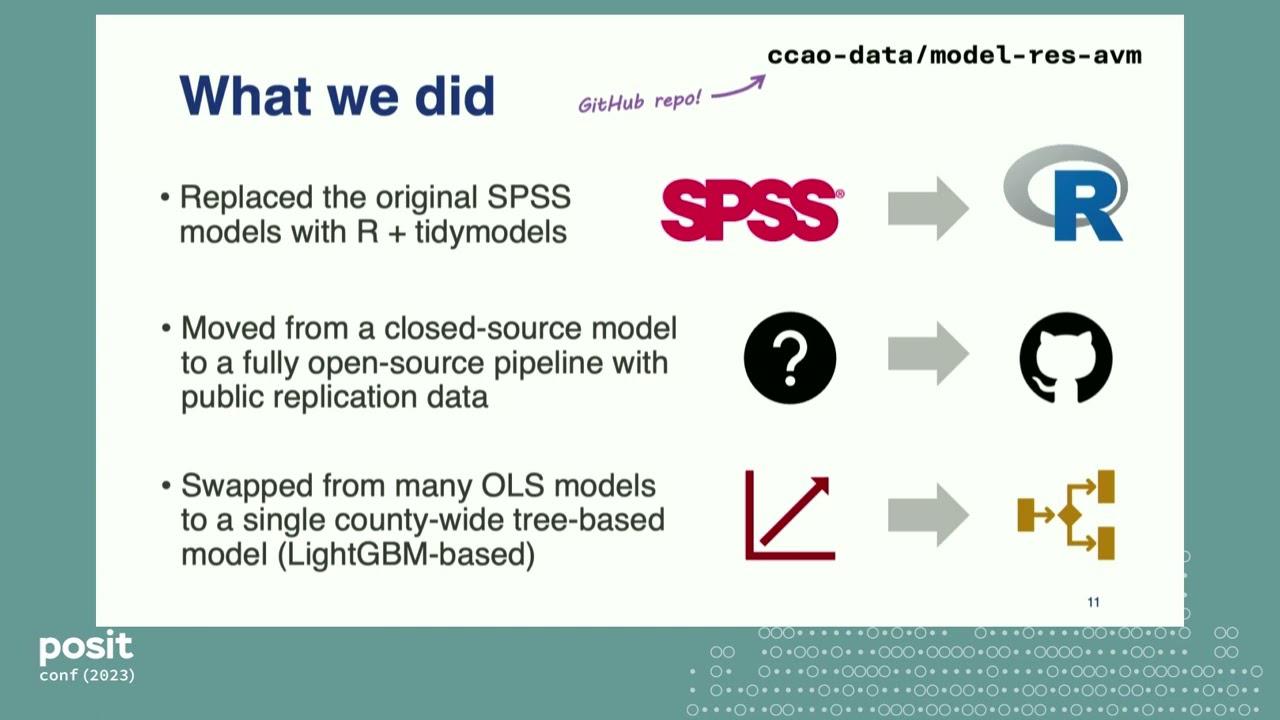

So the first thing we did was we got rid of the SPSS model. That is gone. And we replaced it with R. And if you would like to see what that model actually looks like, you can visit this GitHub repo at the top. That is the actual production model that is running right now. Go through the README, check it out.

We also moved from a closed-source, fully opaque model to one that is completely open-source and that includes data to replicate it. So again, at that link at the top, this is the live-running model. On that repo, you can also find all of the data necessary to run the full pipeline to get the values that we basically mailed to taxpayers with some adjustments.

And we swapped out the old OLS model for a LightGBM-based, county-wide, tree-based model. So the old system had a sort of regimes-based OLS model. Different regions had different regressions. Now there is one big model for the entire county using LightGBM.

And to see what that actually looks like, this is the old code. It was, you know, 50,000-some lines of this, probably more. You can see that there are no comments whatsoever. It is basically just constructing manual time-fixed effects and then doing a regression on those. All of the code looked roughly like this. It was very unintelligible.

In 2019, the first thing we did was replace it. We replaced it with base R because tidymodels was very nascent at that time. And that code looked a lot like this. It improved the performance of the model pretty substantially, but this is not super easy code to read. It was not especially replicable outside of our office. And so we needed something better.

So in 2021, we replaced that base R system with tidymodels, and the code looked like this. Much more commenting, much simpler. And we continued down that path, so if you're looking at the code today, it looks something like this. So big improvement, and we will talk about what that actually gained us in terms of performance.

That's what the model sort of looks like. These are some of the things that go into the model. This is a regression on sale price, right? So sale price is the outcome, the thing that we are trying to predict. And the predictors are sort of things that you would expect for a sale price regression. They're like the size of the property, the year it was built, and basically where it is. Those are the big three.

Those are indicated in blue with the lightning bolt, and all of the stuff in green is stuff that we have added to the assessor's system of record database. So these are spatial features and stuff that we've imputed, or data that we have collected, and added with the goal of improving the accuracy and performance of the model. The things in red are things that we are interested in collecting or have been asked about, but for a number of reasons have not been able to include.

How tidymodels powers the pipeline

All right, but what about tidymodels? This is supposed to be a tidymodels talk. So how do we actually use tidymodels? Well, as I said, tidymodels has fully replaced our sort of base R modeling code base. And that has been a huge, huge boon for us.

It has completely abstracted away all of the complicated ML logic that used to be in that old code base, and that is stuff like cross-validation, you know, tuning hyperparameters, all sorts of things like that. It has allowed us to move much, much quicker and with more confidence, so we can sort of focus on the feature engineering and data collection side of things rather than being worried about shooting ourselves in the feet at every step.

And importantly for us, it has also made the code much more understandable. We are a public agency, right? And we want the code to be intelligible to the greatest number of people possible. I think that tidymodels helps us accomplish that by putting it into a standardized framework that other people are familiar with.

One example of that is time-based cross-validation. So this is sort of how we do our cross-validation split using our data. It's like an expanding window of the training set with the last 10% held out as a validation set. This is not something that would be super easy to program outside of tidymodels, although we tried.
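For readers who want to see the idea in code, here is a minimal Python sketch of one way an expanding-window split could work. It is an interpretation of the description in the talk, not the CCAO's implementation (which is in R with tidymodels). It assumes the rows are already sorted from oldest to newest sale, and the names `expanding_window_splits`, `n_folds`, and `val_percent` are hypothetical.

```python
def expanding_window_splits(n_rows, n_folds=4, val_percent=10):
    """Yield (train_indices, val_indices) for rows sorted oldest to newest.

    Each fold uses a window that starts at the first row and grows with
    every fold. The most recent `val_percent` of each window is held out
    for validation, so the model is never validated on sales that are
    older than its training data.
    """
    if n_folds < 1 or not 0 < val_percent < 100:
        raise ValueError("need n_folds >= 1 and 0 < val_percent < 100")
    for fold in range(1, n_folds + 1):
        window_end = n_rows * fold // n_folds          # window grows each fold
        val_size = max(1, window_end * val_percent // 100)
        split = window_end - val_size
        if split < 1:
            continue  # window too small to leave any training rows
        yield range(0, split), range(split, window_end)


splits = list(expanding_window_splits(n_rows=100, n_folds=4))
for train_idx, val_idx in splits:
    print(f"train rows 0-{train_idx[-1]}, validate rows {val_idx[0]}-{val_idx[-1]}")
```

With 100 rows and 4 folds, the training sets grow from 23 to 90 rows while each validation set is the most recent slice of its window. The design choice that matters is that validation rows always come after training rows in time, which a random shuffle would not guarantee.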

We also made an R package. This is our R package called lightsnip. It is basically a shim between LightGBM and parsnip to allow us to do all sorts of fun things similar to bonsai, if you're familiar with that package. We made it because we wanted to use some of LightGBM's more advanced features. Specifically, we wanted to use early stopping.

So if you are training a model and after a certain point, the model is not improving in performance, LightGBM can automatically stop further training rounds and save you a lot of time. In our case, implementing early stopping decreased our training time by roughly two-thirds. This was a huge boon for us as a full training run with cross-validation was taking 60 or 70 hours.
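The stopping rule itself is simple enough to sketch in plain Python. This is only an illustration of the general idea, not LightGBM's internals or the lightsnip code; LightGBM handles this natively. The validation losses below are hypothetical numbers, and `patience` is a name chosen for this example to mean "how many rounds without improvement to tolerate".

```python
def rounds_before_early_stop(validation_losses, patience=3):
    """Walk through per-round validation losses and stop once the loss
    has failed to improve for `patience` consecutive rounds.

    Returns (best_round, rounds_run), both counted from zero-based
    round indices (rounds_run is a count of rounds actually executed).
    """
    best_loss = float("inf")
    best_round = -1
    rounds_run = 0
    for round_idx, loss in enumerate(validation_losses):
        rounds_run = round_idx + 1
        if loss < best_loss:
            best_loss, best_round = loss, round_idx
        elif round_idx - best_round >= patience:
            break  # no improvement for `patience` rounds: stop training
    return best_round, rounds_run


# Hypothetical validation losses for nine boosting rounds.
losses = [0.50, 0.40, 0.35, 0.34, 0.36, 0.35, 0.37, 0.33, 0.32]
best_round, rounds_run = rounds_before_early_stop(losses, patience=3)
print(f"best round: {best_round}, rounds actually run: {rounds_run} of {len(losses)}")
```

Here training stops after round index 6 because the loss has not beaten the value from round index 3 for three rounds. The example also shows the trade-off: the later, slightly better rounds are never reached, which is the price paid for the time savings.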

Results

That's what we did. Now let's go back to the example properties that Nicole showed. These are actually real properties in Chicago. So in 2015, that property in the lowest decile was assessed for 53K. Its 2021 estimate was lower than that. It was around 34K. And it sold in 2022 for 33K. So it had a ratio of right around one.

That larger property was actually the Home Alone house, which is in Winnetka. And it sold in 2012 for around $1.56 million. Its estimate in 2018 before the new model, to be clear, was around $1.5 million. But if you are familiar with property markets at all, and especially the property market after the Great Recession, that does not make sense. It should probably be higher than that. So its 2022 estimate was $2.3 million, which we cannot calculate a ratio on because we don't have a recent sale. But we think that it is much closer to its actual market value.

So that's the micro-level results. On the macro level, this is a map of Chicago. For those of you familiar with the map of Chicago, it probably looks very familiar. Broadly speaking, the change in the modeling and some other things resulted in decreased assessments, and ultimately tax bills, on the south and west sides of the city. Will that trend hold? It is hard to say. This map is going to change in 2024 since Chicago is reassessed every three years. We are preparing to do the 2024 model right now.

Transparency and open data

Other things that we have done, nearly all of the assessor's data is now online and public. You can visit this link if you want to check it out. We have a public data portal, all sorts of property characteristics, tax history, spatial stuff that we've imputed, tons of things. I cannot express to you how big of a change this is from the old office.

We also publish a bunch of open source R and Python packages. These are mostly things related to assessment and property taxes, but they are very useful for academics and journalists in the space.

And so what is the point of all this? What are the lessons to take away? To me, our work is proof, it is a case study of the effectiveness of technical teams in local government. I think that if you can get into the right place with the right people at the right time with a lot of leverage, you can make a huge impact in local government.

And I would encourage anyone on the fence to try joining us because data skills are very desperately needed inside of state and local governments. I cannot express to you how true that is. And that is in all sorts of different areas like operations, policymaking, program evaluation, tons of different places.

And if you are really a glutton for punishment, you can join us in the assessment industry because this problem that we described about Chicago, this is nationwide. These are other graphs from that same New York Times article from other cities. And you can see that their ratio curves look equally bad.

So if you're interested in learning more, I will take questions, and Nicole and I will stick around after the talk to chat. I also have some rare LightGBM hex stickers, if anyone wants them. So thank you, and I will be happy to take questions.

Q&A

Big thank you, Dan and Nicole. And we have time for questions. And we have a lot of questions.

So, how much were you inspired by private companies like Zillow and Redfin, who also do assessment? Or where did you go to figure out how to do this?

Oh, that is a great question. I mean, significantly inspired. They're building very similar predictive models. They're not predicting exactly the same thing. We are predicting the universe of all properties. Zillow is sort of predicting the universe of properties that are going to sell. And so they're different markets, not necessarily perfectly analogous. I think that our job is actually harder, personally, because, yes, we just have to cover a much wider swath of properties. Their blog is cool, though. Their blog is very cool. And they have a neat model.

No, I don't think so. I think generally the things that you would expect to be important to property values stayed about the same in terms of their importance. It is exactly the things I said before. It is the size of the property, its relative age, and where it is. Those are sort of the big determinants of property value almost anywhere.

One more question. Do you use any model explainability methods, for example, to help with conversation with public stakeholders?

We do, although we have not made a lot of those results public, and we are sort of working on that. I'm happy to answer questions on that afterwards. Thank you so much.