Hitting the Target(s) of Data Orchestration - posit::conf(2023)

Presented by Alexandros Kouretsis. We are living at a time when the size of datasets can be overwhelming. Add to this that processing them involves linking together different computing systems and software, and integrating dynamically changing reference data, and you certainly have a problem. Reproducibility, traceability, and transparency have left the building. This is where Posit Connect, along with the vast R ecosystem, comes to save the day, allowing the creation of reproducible pipelines. In this presentation, I will share my first-hand experience, in particular how we used Targets in Posit Connect combined with AWS technologies in a bioinformatics pipeline. The result? An effective and secure workflow orchestration that is scalable and advances knowledge. Presented at Posit Conference, Sept 19-20, 2023. Learn more at posit.co/conference.

Talk Track: Leave it to the robots: automating your work. Session Code: TALK-1148


Transcript

This transcript was generated automatically and may contain errors.

I'm a huge, huge fan of The Lord of the Rings. And every time I see this image, part of my brain thinks it is the Eye of Sauron.

But actually, this is the first image of a black hole. It represents an extreme case of a data pipeline, where petabytes of data were shipped across four continents to a supercomputer, where they were processed and synthesized into this image of a few kilobytes.

My name is Alexandros Kouretsis. My background is in astrophysics and cosmology, which is how I entered the fascinating world of data science, and now I'm working as an R Shiny developer at Appsilon.

In this presentation, I'm going to walk you through some real examples and try to pass on some hands-on experience of how to build effective, efficient, and secure data science workflows using the vast and mature ecosystem of R and Posit Connect, augmented by the game-changing package targets.

The data orchestration challenge

So, we are living in an era where the size and complexity of data have grown exponentially. In addition, we have a multiverse of different computing systems and data technologies. Tapping into the value of data requires careful planning and execution, so that all these systems and data technologies are linked together effectively and efficiently.

If you don't pay attention to this, you may end up facing chaos.

Conversely, if you follow best practices in the way you orchestrate your data science workflows, you get multiple benefits: you increase reproducibility and transparency, you improve data quality and data integrity, and of course, you streamline your processes. All of this tends to lead to much more reliable, stable, and up-to-date downstream applications.

The project: a pharma data pipeline

So, let me be more specific now. What's the task? We're at Appsilon, and we have a project with a big pharma company. The task is to automate a process that accesses a secure data lake, and this data lake contains quite sensitive data. There are GxP data about clinical trials, there are cancer treatment data, and even the patients' genomes are in there.

What we want to do is grab some views from there, some summaries that can be made publicly available, and unload them to external file storage: an AWS S3 bucket, AWS's efficient cloud storage service. Then we copy this data to a downstream database, a Redshift cluster, where we do our pre-computations and optimizations. And, of course, we build downstream applications with Shiny that we want to be reliable, up to date, and so on.

And we will use R and targets as our Swiss army knife to glue all this together.

Requirements: transparency, security, automation, efficiency

So, let's see the requirements in more detail. We want transparency. By transparency, we mean that whenever this process runs, we want to produce a report that lets other people inspect what happened during the pipeline run.

We want security. Here we also have quite a constraint, because we are talking about GxP data in a data lake: whatever action is performed in the data lake must be traceable to a particular person. So you cannot blame the robot if something goes wrong.

We also want automation: we want to run this once per day. And, of course, we want efficiency. By efficiency, we mean that our genomic data can go up to billions of rows, and this process can take hours.

So, we deployed this pipeline as a Quarto document in Posit Connect. And Posit Connect really served us well in terms of security because I can control who can see this process and I can specify the users there.

Also, I can securely store environment variables. From the Posit Connect UI, we can easily store our personal credentials. So now I have a nice synergy: I can put my personal credentials there but control who can run this process. I set it up so that only I can run this process, and everyone else can only see a static HTML report generated by the process.
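A minimal sketch of how pipeline code might read such credentials at run time, assuming they are stored in Posit Connect as environment variables with the hypothetical names `DATALAKE_USER` and `DATALAKE_PASSWORD` (the talk does not name them). The helper `get_credentials()` is also hypothetical:

```r
# Hypothetical helper: read personal credentials that Posit Connect exposes
# as environment variables. The variable names are assumptions.
get_credentials <- function(vars = c(user = "DATALAKE_USER",
                                     password = "DATALAKE_PASSWORD")) {
  # unset = NA distinguishes "not set" from an empty string
  values <- Sys.getenv(vars, unset = NA)
  missing <- vars[is.na(values) | values == ""]
  if (length(missing) > 0) {
    stop("Missing environment variables: ", paste(missing, collapse = ", "))
  }
  # Return a named list so callers can use creds$user and creds$password
  as.list(stats::setNames(values, names(vars)))
}
```

The returned list could then be passed to a database connection such as `DBI::dbConnect()`. Failing loudly when a variable is missing keeps a scheduled run from silently trying to connect with empty credentials.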

Automation is also very easy: from the UI, you can schedule a report to run once per day. And, of course, report versions are very easy to inspect; you can go back through the history of the reports. So Posit Connect really served us well in terms of security and automation.

The efficiency problem: Groundhog Day

And we followed best practices. We wrote our unit tests, our integration tests, and data validations. But something is missing here: what about efficiency?

So, the next day, the pipeline triggers and everything is computed correctly. But do I really want to recompute everything? Because, for example, if the inputs have not changed, then I already know the results from the previous day.

Looking closer at Posit Connect, at bottom it behaves like a cloud worker. A cloud worker triggers a process, does some computations, and of course writes some data to the file system. But when the worker stops and the process finishes, everything it wrote to the file system vanishes.

This means there is no easy way for me, or for Posit Connect, to remember what happened the previous day. So here we are trapped in Groundhog Day, right? Where you have to live the same day over and over again.


And this gets even worse for the development process, because even if I make the slightest change to the pipeline, I have to recompute everything. This turns the joyful process of building a data science workflow into a Sisyphean one, where I have to recompute everything over and over again.

Directed acyclic graphs and the desired behavior

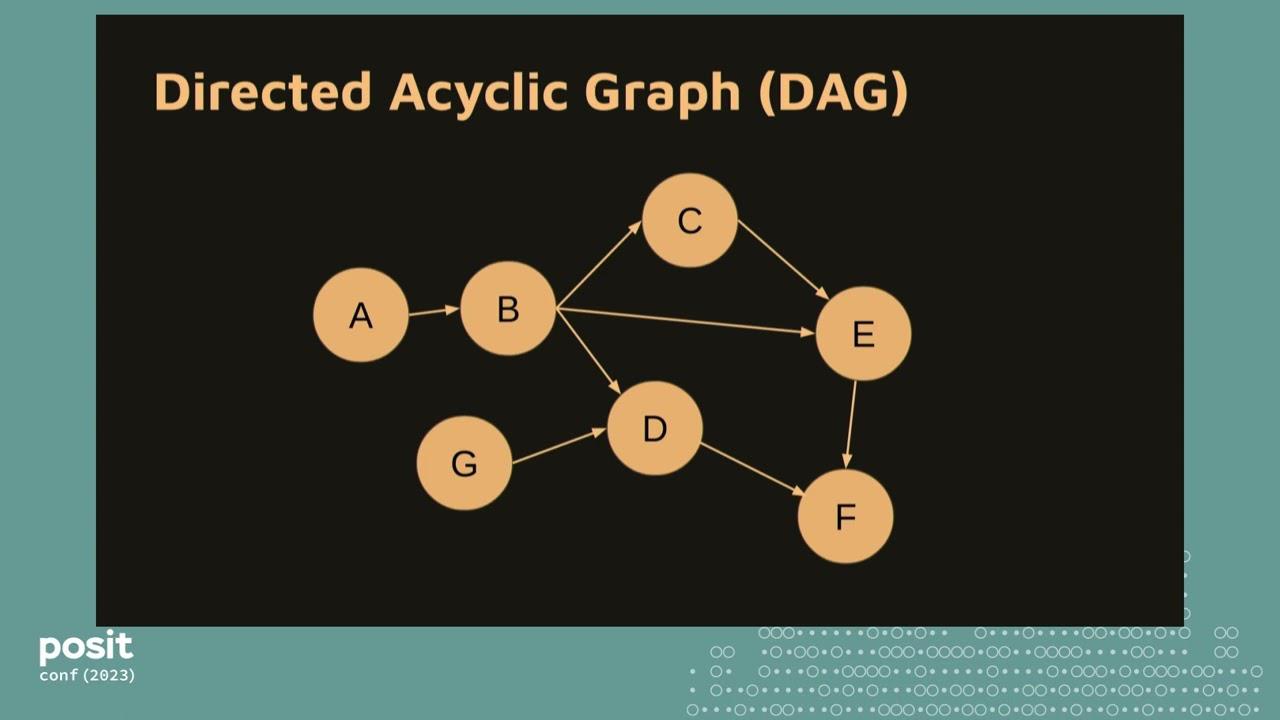

So, let's see what went wrong here and what options we have to get out of this trap. There is a concept from ETL pipelines, a very simple one, called a directed acyclic graph (DAG). Consider two inputs, two tables called Data1 and Data2. I read this data, I process it, and I join it. You can see the logic flowing from left to right, and this is a directed acyclic graph.
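To make the Data1/Data2 example concrete, here is a minimal sketch that writes it as a pipeline with the targets package (introduced later in the talk) in a temporary directory and asks targets for the edges it infers. The file names, column names, and processing steps are hypothetical stand-ins, not the project's actual code:

```r
library(targets)

# Work in a throwaway directory so the demo does not touch a real project.
demo_dir <- tempfile("dag_demo_")
dir.create(demo_dir)
old_wd <- setwd(demo_dir)

# Two small input tables standing in for Data1 and Data2.
write.csv(data.frame(id = 1:3, x = c(10, 20, 30)), "data1.csv", row.names = FALSE)
write.csv(data.frame(id = 1:3, y = c("a", "b", "c")), "data2.csv", row.names = FALSE)

# Write _targets.R: read -> process -> join, flowing left to right.
tar_script({
  list(
    tar_target(file1, "data1.csv", format = "file"),
    tar_target(file2, "data2.csv", format = "file"),
    tar_target(data1, read.csv(file1)),
    tar_target(data2, read.csv(file2)),
    tar_target(processed1, data.frame(id = data1[["id"]], x = data1[["x"]] * 2)),
    tar_target(processed2, data2[data2[["y"]] != "c", ]),
    tar_target(joined, merge(processed1, processed2, by = "id"))
  )
}, ask = FALSE)

# The edges come from the symbols each command uses; no order is declared.
edges <- tar_network(targets_only = TRUE)$edges
print(edges)

tar_make(reporter = "silent")
joined <- tar_read(joined)
setwd(old_wd)
```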

I can go even further and generalize this notion, where A, B, C, D can be any kind of step. In our case, B could be loading some big genomic files into the S3 bucket, and another step could be computing correlations and regressions for genes in the database, which can take hours, et cetera.

So, what I have created here is an all-or-nothing scenario: whenever I trigger this pipeline, everything is invalidated and everything is processed again. What is the desired behavior? Consider the G node here, a step that just reads a small file from the file system and takes seconds. When this file changes, this node is invalidated. What I want is to recompute only the nodes that depend on this part of my workflow and skip the other steps, which can be big computations that take hours.
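As a sketch of this desired behavior, assuming the targets package and hypothetical file names, the pipeline below has an expensive branch and a cheap branch that reads a small file (the G node). After a first run, only the small file is changed, and `tar_outdated()` lists the targets that `tar_make()` would rerun:

```r
library(targets)

demo_dir <- tempfile("skip_demo_")
dir.create(demo_dir)
old_wd <- setwd(demo_dir)

writeLines(as.character(1:1000), "big_input.txt")  # stand-in for the expensive input
writeLines("threshold: 0.5", "small_config.txt")  # the small file behind node G

tar_script({
  list(
    tar_target(big_file, "big_input.txt", format = "file"),
    # Stand-in for an hours-long step such as gene correlations.
    tar_target(heavy_result, sum(as.numeric(readLines(big_file)))),
    tar_target(small_file, "small_config.txt", format = "file"),
    tar_target(config, readLines(small_file)),
    tar_target(report, paste(config, heavy_result))
  )
}, ask = FALSE)

tar_make(reporter = "silent")

# Change only the small file, then ask which targets are now out of date.
writeLines("threshold: 0.95", "small_config.txt")
outdated <- tar_outdated(reporter = "silent")
print(outdated)

setwd(old_wd)
```

The expensive `heavy_result` target should not appear in the list, so a second `tar_make()` would skip it and rerun only the small branch and the final `report`.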

Going back to my previous experience in research and data science, what we usually tend to do is introduce some kind of abstraction that we call a job, a chapter, or something like that. We break the work down into steps: load some data, process it, and save the results. Then, if you want to extend your project, you continue from there.

You introduce a new job: you load some data again, process it, save it. This goes on and on, and you start creating something like a higher-order graph where you do things manually. You have somehow broken out of the Sisyphean cycle of the development process, but you still have to write custom scripts to declare the sequence of jobs, custom scripts to skip steps, and custom scripts to, for example, cache workflow data.

And you have to be careful: if you are on a cloud worker, for example, you must push all the saved files to external file storage that is persistent. And this is just part of a real pipeline in the wild. This is a small pipeline, and these are its components and how they are connected together. I think you will see a bigger view of this in the next presentation.
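For contrast, the hand-rolled job approach might look like the hypothetical sketch below: each job loads, processes, and saves an `.rds` file, and both the job order and the skip rule (reuse an output if it is at least as new as its inputs) are custom code. The names `run_job()`, `job_load()`, and `job_summarise()` are illustrative only:

```r
# Hypothetical helper: run a job unless its saved output looks up to date.
run_job <- function(name, inputs, fun, cache_dir = "cache") {
  dir.create(cache_dir, showWarnings = FALSE)
  out_path <- file.path(cache_dir, paste0(name, ".rds"))
  # Hand-rolled skip rule: reuse the saved output if it exists and is at
  # least as new as every input file (timestamps only, no content hashes).
  if (file.exists(out_path) && all(file.mtime(out_path) >= file.mtime(inputs))) {
    return(readRDS(out_path))
  }
  result <- fun(inputs)      # load + process
  saveRDS(result, out_path)  # save, on local disk only
  result
}

demo_dir <- tempfile("jobs_demo_")
dir.create(demo_dir)
old_wd <- setwd(demo_dir)
write.csv(data.frame(g = c("a", "b", "a"), v = 1:3), "input.csv", row.names = FALSE)

runs <- 0  # counts how many jobs actually executed
job_load <- function(paths) {
  runs <<- runs + 1
  read.csv(paths)
}
job_summarise <- function(paths) {
  runs <<- runs + 1
  d <- readRDS(paths)
  tapply(d$v, d$g, sum)
}

# The job order is declared by hand: "summarise" reads the file "load" saved.
for (day in 1:2) {
  run_job("load", "input.csv", job_load)
  totals <- run_job("summarise", file.path("cache", "load.rds"), job_summarise)
}
setwd(old_wd)
```

Every rule here, from the timestamp comparison to the hard-coded job order, has to be maintained by hand, and the `cache/` folder disappears with everything else when a cloud worker shuts down.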

Do I really want to take on all this complexity? It is prone to errors, and it makes collaboration with other team members much more difficult. This is the chaos I was talking about.

Targets to the rescue

So, here is where Targets comes into play. Targets is an opinionated framework for structuring your data science workflows and data pipelines. If you follow its conventions and the way it suggests you build your workflows, you get many benefits out of the box.

Targets will automatically infer the directed acyclic graph for you. It will strategically skip steps from the pipeline for you. It will also keep strong reproducible evidence for you. And it has great facilities to integrate with cloud storage. And of course, it has great facilities for distributed computing.

So, how does Targets achieve this? Targets introduces a simple abstraction called a target. A target is just a step, typically a function call, that outputs an object, and by convention, this object is saved to your persistent file storage.

So, let's see a Targets script in action. The first three lines just set the scene: you load targets, you set your options, and with `tar_source()` you load your custom functions. The list is where all the action takes place; this is where I define my targets.

In this line of code, `tar_target()` declares a target, and then you have `get_data()`, the function that outputs an object, the data object. So here we have this duality: the `get_data()` function outputs the data object. In turn, `get_data()` takes as input the file object generated by a previous target, and this goes on and on. Targets automatically infers the directed acyclic graph for you and puts all these objects in the correct order. So file now comes before data, and everything is computed correctly.
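A minimal sketch of such a script, written to a temporary directory with `tar_script()` so it can run on its own. The function `get_data()` and the file `raw_data.csv` are hypothetical; in a real project `get_data()` would sit in the `R/` folder and be loaded by `tar_source()`, but here it is defined inline to keep the example self-contained. The `data` target is deliberately listed before `file` to show that targets, not the list order, decides the run order:

```r
library(targets)

demo_dir <- tempfile("targets_demo_")
dir.create(demo_dir)
old_wd <- setwd(demo_dir)
write.csv(data.frame(gene = c("BRCA1", "TP53"), expr = c(2.5, 7.1)),
          "raw_data.csv", row.names = FALSE)

tar_script({
  # Set the scene (tar_script() also adds library(targets) at the top).
  tar_option_set(packages = "utils")
  # Hypothetical custom function; normally loaded from R/ via tar_source().
  get_data <- function(file) read.csv(file)

  list(
    # get_data() takes the file target as input and outputs the data object.
    tar_target(data, get_data(file)),
    # A file target: edits to the CSV invalidate everything downstream.
    tar_target(file, "raw_data.csv", format = "file"),
    tar_target(data_summary, summary(data))
  )
}, ask = FALSE)

# Inspect the declared targets, then run them in dependency order.
print(tar_manifest())
tar_make(reporter = "silent")
data <- tar_read(data)
setwd(old_wd)
```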

Cloud storage integration and distributed computing

Targets also has cloud storage integration. By this we mean that Targets sends all the metadata and intermediate files it has computed to persistent file storage. Here we use an S3 bucket, so you just set the options and declare your S3 bucket.
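A configuration sketch for the S3 setup, assuming the paws.storage package is installed and AWS credentials are available through the standard AWS environment variables. The bucket and prefix names are hypothetical. These lines would go near the top of `_targets.R`:

```r
library(targets)

# Store target objects in an S3 bucket instead of the local _targets/ folder.
# Bucket and prefix are hypothetical placeholders.
tar_option_set(
  repository = "aws",
  resources = tar_resources(
    aws = tar_resources_aws(
      bucket = "my-pipeline-bucket",
      prefix = "genomics_pipeline"
    )
  )
)
```

With this setting, target objects are uploaded under the given prefix rather than kept only on the worker's disk. Turning on versioning is a setting of the S3 bucket itself, not of this code.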

And now you have many benefits. For example, your storage can scale up to petabytes, and you can switch on data versioning. And, of course, you have portability: you can run this pipeline on different machines, and developers can share cached results. So if I have run the pipeline and pre-computed something, my colleague can reuse the cache and save hours of development time.

Targets also has great facilities for distributed computing. It uses crew, and it's very easy: you just go to the options and set the number of workers. Here we set two workers, for example. Targets will start these two workers and check whether a worker is available; as soon as one is, it sends the next target there.

Let's consider a very simple case. I have some data, and I feed two different models with it, where fitting each model can take some time. Targets checks whether a worker is available and sends these computations there, so they run in parallel. You can also go further, to more classic distributed computing, such as Slurm clusters, et cetera.
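A minimal sketch of the two-model example, assuming the crew package is installed. `crew_controller_local(workers = 2)` starts two local worker processes; the simulated data and the model formulas are hypothetical:

```r
library(targets)

demo_dir <- tempfile("crew_demo_")
dir.create(demo_dir)
old_wd <- setwd(demo_dir)

tar_script({
  library(crew)
  # Two local workers; targets sends each ready target to a free worker.
  tar_option_set(controller = crew_controller_local(workers = 2))

  list(
    tar_target(data, data.frame(x = 1:100, y = 2 * (1:100) + rnorm(100))),
    # Both models depend only on data, so they can run in parallel.
    tar_target(model_linear, lm(y ~ x, data = data)),
    tar_target(model_quadratic, lm(y ~ poly(x, 2), data = data))
  )
}, ask = FALSE)

tar_make(reporter = "silent")
fits <- list(linear = tar_read(model_linear),
             quadratic = tar_read(model_quadratic))
setwd(old_wd)
```

Moving to a cluster would mean swapping the local controller for a cluster controller (for example, one from the crew.cluster package, which supports Slurm among other schedulers), while the target definitions stay unchanged.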

Deployment with Posit Connect

Deployment is also very easy. You just put one line of code in the Quarto document, `tar_make()`, and this triggers the pipeline and does all these nice things. So you can easily automate it in Posit Connect. You can also easily read any object that the pipeline has computed and display it in your report.

For example, here we summarize the data object that we computed in the pipeline. Now everything is inspectable: you can create codebooks, you can create validation reports, and you can extend the report that is generated during the pipeline run.
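A sketch of the R code such a Quarto chunk might contain. For a self-contained demo, a tiny hypothetical pipeline with a `data` target is written first; in the deployed report, `_targets.R` would already sit next to the `.qmd` file:

```r
library(targets)

# Demo setup only: a throwaway directory with a one-target pipeline.
demo_dir <- tempfile("report_demo_")
dir.create(demo_dir)
old_wd <- setwd(demo_dir)
tar_script({
  list(tar_target(data, data.frame(patient = 1:4, dose = c(10, 20, 20, 40))))
}, ask = FALSE)

# --- chunk contents start here ---
tar_make(reporter = "silent")  # rerun only outdated targets
data <- tar_read(data)         # load the stored object, no recomputation
summary(data)                  # shown in the rendered HTML report
# --- chunk contents end here ---

setwd(old_wd)
```

`tar_read()` only reads the stored value, so rendering the report adds nothing beyond what `tar_make()` had to rerun. If the visNetwork package is installed, `tar_visnetwork()` can also draw the dependency graph in the report.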

So, going back to our case where we deployed this pipeline in Posit Connect: moving, refactoring, and reframing our processes into a Targets project completes the picture. With minimum effort, we now have maximum efficiency and scalability.


And yes, I couldn't resist sharing this meme from my social media. Now we have a nice pipeline. It is also open source and written in R, which means it is extensible. It is also resilient: you can integrate it with any system you want. And you can move on to building your perfect Barbieland dashboards and share them with your organization so that everyone benefits from this process.

So the next time you have a project that consists of logical steps, a workflow, or a data pipeline, you can just use Targets and reframe it as a Targets project. Even if it is medium-sized or small, you will get all these benefits, and it will take you out of the mindset of creating ad hoc, manual scripts for your workflows and linking them together.

So, if you want, please reach out to me on LinkedIn. This is my LinkedIn profile here. And yes, use Targets. Thank you.

Q&A

So a quick question. How was the experience of integrating Targets with Connect for the team?

It was quite nice. The biggest bottleneck, one of the things you have to solve, is that you want to store this data somehow. So you will need persistent file storage, or, if you have a friendly IT team, you can request write access in Posit Connect to a directory at an absolute path. That is the main thing.
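As a sketch, assuming the IT team grants write access to a persistent directory on the Connect server (the path below is hypothetical, with a temporary folder standing in for it), `tar_config_set()` can point the targets data store there so results survive between scheduled runs. Cloud storage such as S3, discussed earlier in the talk, is the alternative:

```r
library(targets)

demo_dir <- tempfile("store_demo_")
dir.create(demo_dir)
old_wd <- setwd(demo_dir)

# Hypothetical persistent location; a temp folder stands in for it here.
persistent_store <- file.path(tempdir(), "shared_storage",
                              "genomics_pipeline", "_targets")

# Writes _targets.yaml in the project folder so every tar_make() uses this
# data store instead of the default _targets/ folder in the working directory.
tar_config_set(store = persistent_store)
configured <- tar_config_get("store")

setwd(old_wd)
```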