dbtplyr: Bringing Column-Name Contracts from R to dbt - posit::conf(2023)

Presented by Emily Riederer starts_with(language): Translating select helpers to dbt. Translating syntax between languages transports concepts across communities. We see a case study of adapting a column-naming workflow from dplyr to dbt's data engineering toolkit. dplyr's select helpers exemplify how the tidyverse uses opinionated design to push users into the pit of success. The ability to efficiently operate on names incentivizes good naming patterns and creates efficiency in data wrangling and validation. However, in a polyglot world, users may find they must leave the pit when comparable syntactic sugar is not accessible in other languages like Python and SQL. In this talk, I will explain how dplyr's select helpers inspired my approach to 'column name contracts,' how good naming systems can help supercharge data management with packages like {dplyr} and {pointblank}, and my experience building the {dbtplyr} to port this functionality to dbt for building complex SQL-based data pipelines. Materials: - https://github.com/emilyriederer/dbtplyr - https://emilyriederer.com Presented at Posit Conference, between Sept 19-20 2023, Learn more at posit.co/conference. -------------------------- Talk Track: Databases for data science with duckdb and dbt. Session Code: TALK-1098

image: thumbnail.jpg

Transcript#

This transcript was generated automatically and may contain errors.

Alright, hi everyone, thank you for coming, and today I'm happy to be here to talk about my work translating some of my favorite dplyr functions to dbt, and more broadly what I've learned about the power of translating syntax to share ideas between different communities in the data space.

And I think one of the reasons it's really exciting to be back here at positconf is we all share the same language, so when we're talking to each other in the hallway, we don't have to talk about, oh you know that guy who's written all the packages that have hex logos, we can just say Hadley, and everyone knows who we're talking about.

And similarly, beyond kind of those fun concepts and that internal language, this also helps elevate important concepts and accelerates more technical conversations. For example, instead of manually describing, oh that certain way to structure data, if we just say tidy data, we know what we mean, it elevates that concept, and we can springboard into whatever we're saying about that concept.

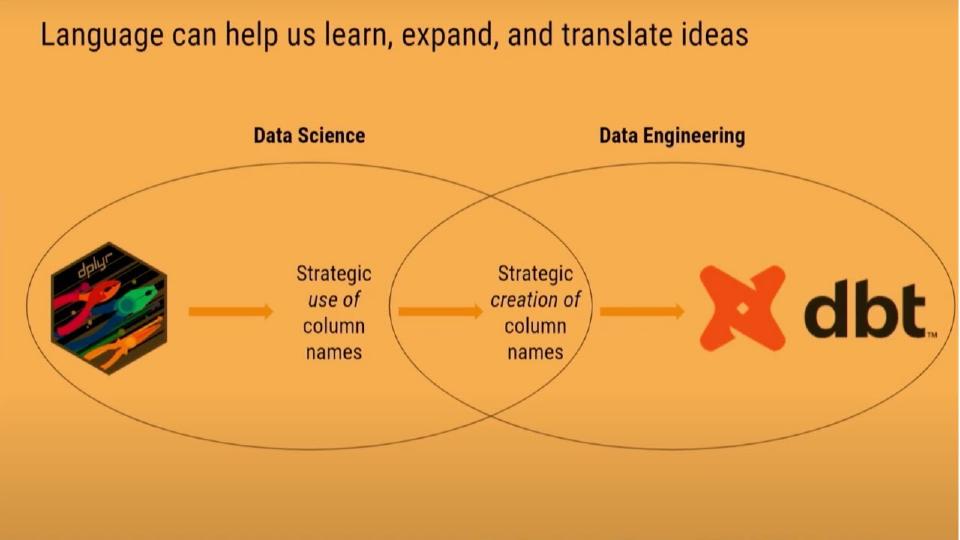

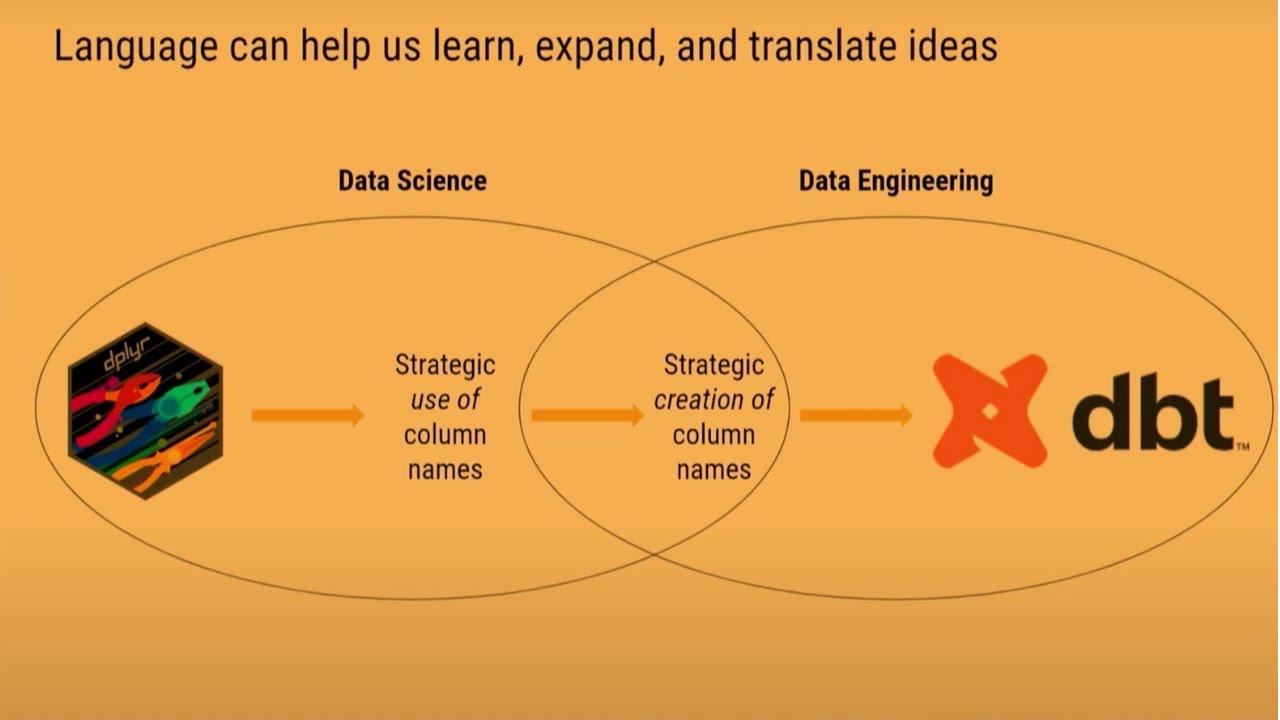

But while these internal languages can really help communication within a community, sometimes they can make it actually harder to communicate with our peers that come from other backgrounds, such as data science versus data engineering, or R versus Python. And what I've found as I've moved between some of those different job families and responsibilities and languages in my own work, is that translating syntax between languages can be a powerful way to transport concepts across communities.

And one recent experience I had with this was when I translated some of my favorite functions, functionality, from dplyr to dbt, which is the data engineering and data pipelining framework. Coming from the dplyr world, I found that I could find a lot of really useful and interesting workflows with the strategic use of column names. Then as I began to produce more data sets for consumption by other people, I began to realize that I could pull this idea further upstream into the strategic creation of column names.

But to get others in the data engineering and analytics engineering space to do the same, I had to build that same incentive structure that existed in dplyr in the stack of tools used by data engineers, which motivated me to make the translation to dbt.

So for the rest of our time today, I want to tell three parts of this story. First, the underlying concepts that I was able to learn from the dplyr language. Secondly, how I realized column names themselves could be a type of micro-language to communicate between communities. And then finally, how I encouraged myself, wearing an engineering hat, and other analytics engineers to do the same by bringing dplyr functionality to dbt.

dplyr's tidy-select helpers

But first, let's start with dplyr. As many of you may have seen in your own work, dplyr contains a number of helpful tidy-select functions, which kind of imply the existence of column names having meaning and being something we can act on and operate. So we have functions such as starts with, ends with, and contains. And how these work is that these selectors, when applied to a dataset, help us pick out column names that follow certain patterns.

For example, finding all count variables that maybe start with something like n, or finding all variables that relate to some concept, like containing the word spend somewhere within them. And this simple idea of expecting column names to have a pattern and have a meaning unlocks the idea of them being something we can program against and operate on. And it incentivizes the strategic use of column names for this general pattern of find columns and do something.

And this became very useful in many aspects of my data science, data analysis work. In transformation, in modeling, and in validation. So just to give a few examples, using something like dplyr for data wrangling, we can find columns and then use that for more efficient transformation. If we want to do something as simple as the data aggregation, instead of writing the same aggregation function, for example, taking the mean of every indicated variable to calculate a proportion, we can write that line of code once and have it applied to all relevant variables.

In the modeling context, this unlocks different capabilities of find columns and do something else, like prevent model leakage. Often when I'm modeling, I like to keep variables in my dataset that should not be used as predictors in that model, but might be useful to help me diagnose weak spots in the model as I'm looking to enhance future performance. But with a package like recipes, to ensure that I don't accidentally include these as predictors, I can name those features variables strategically, find those columns, and assign them to a role that ensures that they don't enter into the wrong part of the modeling pipeline.

And finally, for something like data validation, I can find columns and do something like double check that those columns, those variables are behaving as I expected, using a package like pointblank. For example, if I think all of my variables that start with N should be counts, I can test that hypothesis in a single line of code, and then if I update those variables later, if I add more columns into my dataset that fit this pattern, my checks will automatically expand to comprehensively test all of the variables in my dataset, even as the individual variables change.

Column names as a micro-language

So in my own work in the data science and data analysis space, I found this simple idea of being able to find column names and expect meaning in those to be really useful, so much so that I started to think about column names in and of themselves as a kind of language. So as I moved in and out of the data science roles into at times more analytics engineering or data engineering type responsibilities, I thought about how I could operationalize this concept to not just have meaningful column names for myself, but to better communicate between data producers and data consumers by embedding meaning in those column names for others.

Column names are themselves a form of abstraction that capture the messiness and the complexity and the rich underlying meaning of a dataset. So to do this, I started thinking about names not just as words, but more like sentences or paragraphs. So I started thinking about defining sets of stubs that can encode different types of semantics and different types of performance contracts about my data, and then when going to name columns for a dataset I intended to publish, permuting those together to create a rich combinatorial explosion of intuitive and really meaningful column names.

Column names are themselves a form of abstraction that capture the messiness and the complexity and the rich underlying meaning of a dataset.

So to just give a few examples of how this is useful, you can imagine maybe defining one layer that captures something like the rough types or intended use cases of variables. So for example, ID, indicator, count, date, hopefully many of these seem fairly intuitive. That's the goal. But beyond intuition alone, we can define very explicit semantics for these variables so people know exactly what these things should mean when they look at them.

For example, an indicator variable would be a 0, 1 indicator, and the rest of the name would always describe the positive case. So we could push towards more specific names and not have ambiguity of which case encodes the positive versus the negative. But beyond semantics, we can also use these stubs to make promises to the users of our data. So for example, indicator variables perhaps always being non-null or dates always being in everyone's favorite ISO 8601 format.

And similarly, you can imagine doing the same process as other layers as it best relates to your specific use case and tackling some of those gnarly problems in our enterprise such as everyone having a different definition of what is the user or what is the customer. If we define for a specific stub a singular meaning for something like a user, we can then use that concept and that definition consistently throughout all of our datasets to push towards more clarity and again that better communication across different job families and areas of responsibility.

And beyond encoding semantics and contracts, we can also encode the consequences of those choices. So for example, if users are identified by IP addresses, sometimes that may result in inconsistencies in identification across devices. And so forth and so on. You can imagine this process playing out differently in different enterprises. But the idea being for once, define and align on this common language and then use that to create rich, latent communication between different families.

Translating the concept to dbt

And the benefits of this pattern really, really cleared me, having come from the perspective first of that data science and that data analyst user of how they would interpret and use these names. But to encourage others on the data production side to use this same pattern, I found that I had to make one more set of translation and translate this concept into tooling used by analytics engineers and data engineers so they could also benefit and really see it and experience for themselves the value of this sort of workflow and this sort of pattern.

And that's what encouraged me to take those tidy select helpers that inspired my own workflows and translate them into a dbt package called dbtplyr.

Now for those that aren't familiar with dbt, I'll just say a few brief words about what that is. Dbt is a data engineering framework that largely sits on top of the SQL language and helps people build better quality data pipelines by encoding software engineering best practices such as enabling clean code with variables and control flow like if statements and for loops that you can't necessarily do natively in SQL, promoting more organized projects for better structure and reproducibility, and adding some syntactic sugar for better developer workflows with things like testing, logging, and orchestration.

So although it's a different language and a different framework, I felt like the dbt community and the dbt language already shared a lot of the same underlying values as the R community in the way that we valued the scientific quality and reproducibility and the idea of bringing these best practices from these different fields into the data space.

And just to give one example of how dbt does this, you may be familiar with a traditional SQL query that really doesn't follow the dry principles of do not repeat yourself. There's a lot of redundancy, a lot of repeated copy-paste business logic, which can cause a lot of room for error. But dbt, by adding variables and adding for loops, we're able to get a lot closer to that dry streamlined workflow that is probably more comfortable to many of us coming from more traditional coding languages, as well as encoding a lot of our business logic in macros and packages.

And because of this packaging ecosystem, I was able to write a dbt package called dbtplyr, which translates all those select helpers that I knew and loved and found so many applications for in my R-based workflows to dbt. So we have all those same functionalities like starts with, ends with, and contains. And what this same pattern looks like as it plays out in the dbt workflow is something like this. Once again, we can find columns and do stuff, whatever that stuff may be. First, we can find column names that match some sort of a pattern. Next, in a select statement, we can apply whatever transformation we wish to all of the variables matching that pattern. And similar to dbtplyr, if we so choose, we can do similar things in filter statements and where clauses.

But to me, the most exciting part of making this transformation wasn't simply copying the functionality of dbtplyr to unlock the same workflows, but rather seeing by translating the concept of column names to dbt and therefore to the analytics and data engineering community, finding slightly different applications for them when used and applied in that space. And in turn, these applications helped better soothe common pain points of communication between data engineers and data scientists.

But to me, the most exciting part of making this transformation wasn't simply copying the functionality of dbtplyr to unlock the same workflows, but rather seeing by translating the concept of column names to dbt and therefore to the analytics and data engineering community, finding slightly different applications for them when used and applied in that space.

So for example, tackling problems such as data sets with inconsistent naming, unreliable and untrustworthy meaning, and unvalidated or poorly tested data sets. So to just show how this fully played out in the dbt context, you can imagine as a data user how frustrating it can be when your column names don't follow a good pattern. I'm sure many of us have been in the case where our code breaks your errors out because we failed to replicate some error that exists upstream in a data set.

But with the package, but with simply the idea embedded in dbt now of column names really being this thing we can act on and structure, instead of writing this really manual redundant SQL code that tends to lend itself to those sorts of sloppy typos, we can pull in the variables and macros and control flow that's embedded within the dbt framework to only write that code once and loop over all of the relevant variables. So by embedding the concept of having very structured column names, we're incentivized to change our code to fit that pattern and create and avoid those typos for our users.

But beyond typos, even more frustrating for data users is broken contracts that lie to our users. So for example, if we have a variable that's called a date but is actually cast as a timestamp, this is more than a mild annoyance. If someone makes the mistake of trusting you and filtering that column as if it were a date, they may risk throwing away all of their data and not retrieving any of the records that they intended to.

But by the combination now of dbt and dbtplyr, we can catch this process earlier on in our data pipeline and avoid this type of error. So for example, we could think about manually casting every variable that we're calling a date to a date, but this could get a little fragile and a little sketchy if we add a variable to our dataset and forget to adjust this sort of transformation. But with the power of dbtplyr, we can find all of our variables that match pattern and write dynamic and flexible code that will ensure that we enforce that contract universally, no matter how that shape of our dataset changes or evolves over time.

And yet, you can probably also imagine a time where this could be a really bad idea. For example, imagine trying to automatically cast a date variable to make sure variables called n stays integers. If something upstream in your pipeline is broken and those variables aren't actually integers, you wouldn't be solving a problem by doing this sort of casting, you'd just actually be covering it up and making it harder to debug.

So with dbt's testing infrastructure, we can write tests that run in our pipeline before we execute certain transformations. For example, we could check that no matter what type of variable it's currently cast at, we could make sure it's sufficiently close to an integer that we're comfortable doing that casting operation. And once again, with the framework of dbtplyr and with this concept of operating and programming against our column names as this shared latent form of communication throughout our data space, we can automate this check to make sure it, too, runs comprehensively.

So as you move throughout different roles and responsibilities in your career, or as you work in cross-functional initiatives, I found that aligning on these core ideas across our different communities and creating shared languages for them, both in words, in frameworks, and in syntax, can be a really powerful tool for collaboration. So thank you all for your time. If you're interested in talking more about dbt, dbtplyr, or select helpers or column names, please find me any time throughout the conference. And I've linked in my slides a number of resources kind of about the smattering of topics that I covered today. Thank you.