Automating the Dutch National Flu Surveillance for Pandemic Preparedness - posit::conf(2023)

Presented by Patrick van den Berg. The next pandemic may be caused by a flu strain, and with thousands of flu patients in Dutch hospitals every year, it is important to have accurate and current data. The National Institute for Public Health and the Environment of the Netherlands (RIVM) collects and processes flu data to support pandemic preparedness. However, the flu reporting process used to be very laborious, taking precious time away from epidemiologists. While automating this data pipeline, we learned that collaboration was the most important factor in getting to a working system. This talk sits at the intersection of data science and epidemiology and offers a valuable opportunity to learn from our experiences. Presented at Posit Conference, Sept 19-20, 2023. Learn more at posit.co/conference.

Talk Track: Leave it to the robots: automating your work. Session Code: TALK-1150

Transcript

This transcript was generated automatically and may contain errors.

Ah man, if you're like me, you remember that time during COVID-19 when we were locked down. It was terrible. I felt like I was trapped behind glass. I couldn't go outside. I hated it.

But of course, I didn't have it as bad as some people did during COVID. They lost loved ones, and of course they could get sick themselves. So it's kind of strange that we're now back to a situation where we can have a conference and go out to bars and have fun. For a lot of people, it feels as if the pandemic never happened, or as if one will never happen again.

For the people who think a pandemic will never happen again, I have some bad news: pandemics are inevitable. The good news attached to that is that we can reduce the risk.

This is a quote from the leader of the PANDEM-2 project, who is responsible for increasing pandemic preparedness in the EU. What does reducing the risk mean in this case? I think it's very important to understand that. It means that we can try to mitigate a pandemic when it comes, so it's very important to have really good data when you are confronted with a situation like that. But if we're very lucky, it might also mean that, depending on our response, we can stop a pandemic from ever happening.

This is still very far away from what I do day to day, but I'm working towards that goal. So who am I? I work at RIVM, the National Institute for Public Health and the Environment of the Netherlands. Because I know there are a lot of Americans in here, I put up a little map to show you where the Netherlands actually is: it's this tiny little country in Europe.

Background and the existing flu pipeline

RIVM hired me two and a half years ago to work on the COVID-19 pipelines. Pipelines were a completely new concept at RIVM: previously we did a lot of things manually, or sometimes in SAS scripts, but not in a very automated way. So I was hired to help set them up and run them.

Now that COVID-19 has died down a lot, fortunately, we have switched our efforts to other diseases. Today I'm going to talk about the influenza pipeline, so the common flu. Why the flu? Because the flu has actually been one of the main sources of pandemics in recent history.

There was already a pipeline for this. Very similar to the example you saw before, it was a mess of data going in: you had to do a bunch of magic, and then a bunch of outputs came out for different purposes. The data could come from hospitals, GPs, nursing homes, municipalities, or population statistics. And it reaches us in many different ways: from a database, over FTP, or, for example, simply as CSV files attached to emails. We cleaned it up and then created reports for experts to analyze, so they could make sure the situation was not getting out of control.

So a pipeline was already in place. How bad can it be? I have a tiny little demo here of an actual job that someone had to do every single week. Nobody had ever figured out how to put the numbers above the bars of a bar chart, so the solution was to open graphics software and type in the numbers from an Excel sheet by hand. Of course, you can make typos when you do this, so this is obviously very dangerous. And it's just because she inherited the job this way; this is simply how she was taught to do it.

Designing the minimum viable pipeline

So obviously, we need to automate this whole process; this is not OK. We learned a lot from COVID, when things were messy and very chaotic. Now we have time to design our pipelines properly. So let's automate the whole thing.

And I'm kind of a nerd. I go to these conferences and I get all these ideas, some of them quite obvious: Databricks, Airflow for orchestration, and so many other things that I want to implement. But the epidemiologists' reaction is more like this. The reason is that the epidemiologists are involved in this in the first place because they have to stay really close to the data and to what is being done with it.

I will explain the reason for that a bit later. But they have to be the ones running the pipeline and actually constructing the data themselves; that's the way it's set up at RIVM right now. So my list of tools doesn't work here. Instead, I proposed a few steps for a properly planned pipeline, and I will go through them one by one.

The first step is to determine the requirements for a minimum viable product, or a minimum viable pipeline, if you will. To explain that, I'll go back to COVID-19 again, because that's where I have the most experience at RIVM, and because I was in a very similar situation to the one the epidemiologists at RIVM are in right now.

When I started two and a half years ago, one of the tasks we were handed was this: someone from my team had to sit at the computer every day at 10 a.m. and press Control-Enter. We ran one script and sat there watching it go. The reason was that things were moving so fast, and we were constantly adding to our code, which kept running into errors. If we had run it automatically, the job would fail, and we would have had to figure out where the bug was and then run the whole thing again. But the job took two hours, and we needed the data within three hours. So that was very challenging.

So what went wrong? Our robustness to errors was really low, because we were constantly changing the code. Because of that, we had to run it manually, which meant I was wasting a lot of time. And our pipelines were not reproducible at all, because we were changing them on the fly. We didn't have logs, and we didn't have version control for them. So that was pretty bad.

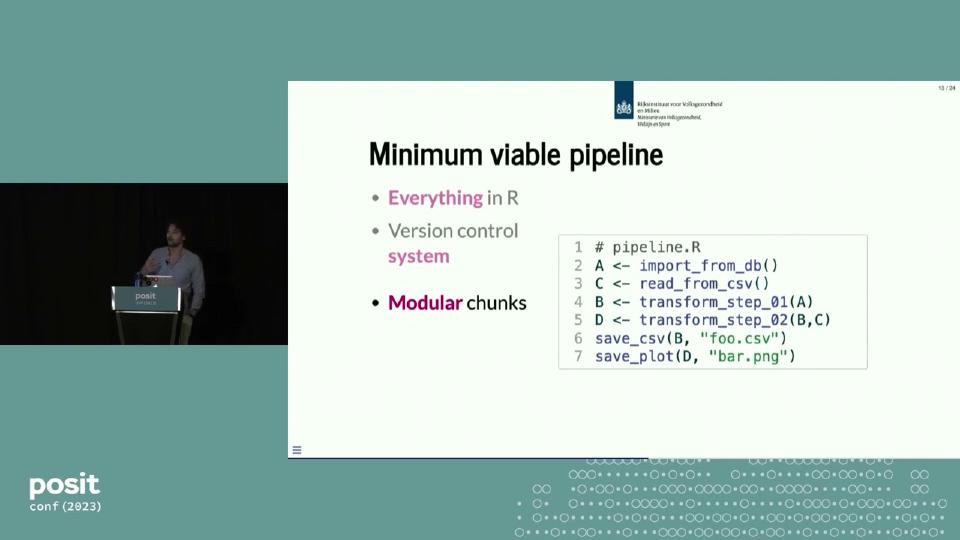

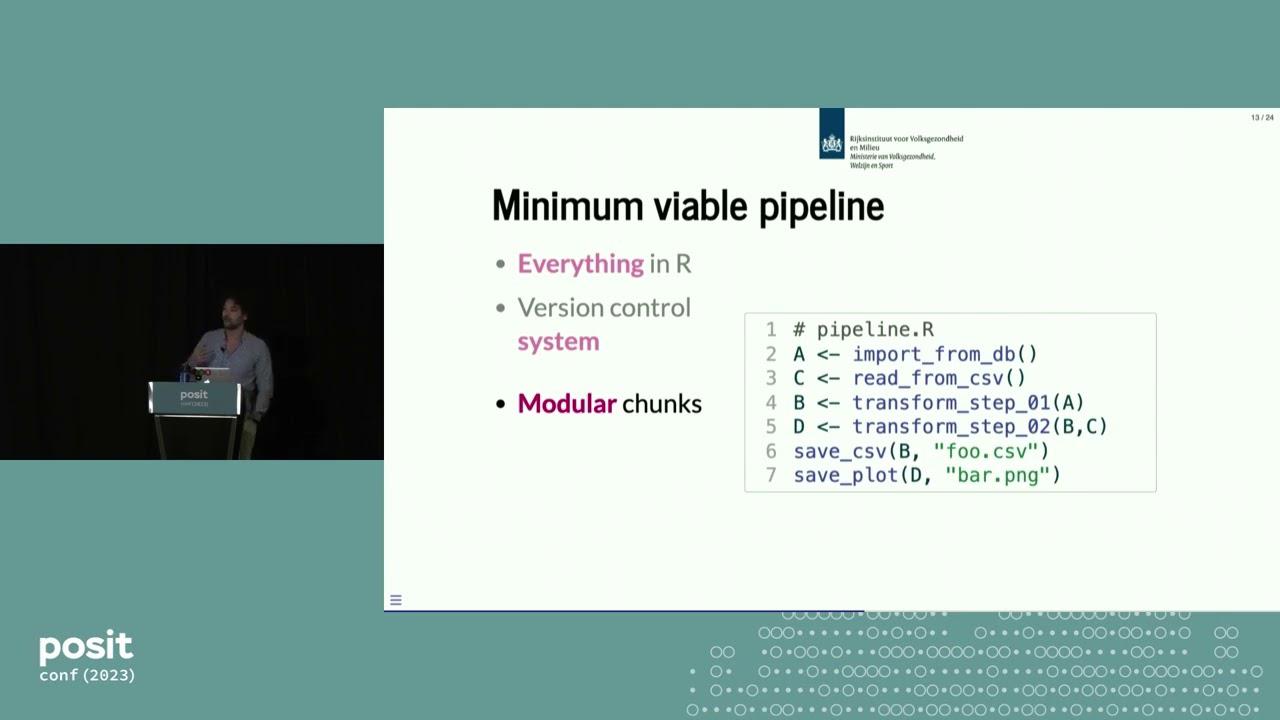

These were the main issues we had to tackle for our minimum viable pipeline. Some of them are simply agreements within the team, among whoever is working on it. For example, we decided we would no longer use GraphPad or image-editing software, and that we would do everything in R. We also have to have a version control system, and that doesn't just mean installing Git and running it once in a while: we need agreements about how to actually use it.

Modular pipeline design

I wanted to break our pipeline down into more modular chunks. The little piece of pseudocode on this slide is very similar to what our old pipeline looked like, the thing I had to run at 10 in the morning. We import a bunch of data, we transform it, and then we save all of it. If something went wrong during the huge transformation step in the middle, I would have to go in, fix it, and then rerun that step and every step after it.

So we proposed doing it in a manner very similar to the targets package, to separate this out. This is also what JD talked about this morning in his talk: separate concerns and minimize complexity. By creating modular chunks and breaking the problem down, we separate concerns, so each step is smaller and easier to tackle.

So basically, I'm saying I'm just as much of a genius as JD. What we have here is a definition file that says each step, script one, script two, has an input and an output. Then we have a way of running this as a pipeline, very similar to tar_make(). From those definitions we get a directed acyclic graph (DAG), and that graph is what gets run. That is now our minimum viable pipeline.
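As a rough illustration of the definition-file-to-DAG idea, here is a minimal sketch in Python, chosen only for brevity; the RIVM tooling itself is written in R. The step, script, and dataset names are hypothetical, and a plain dict stands in for the definition file. The sketch matches each step's inputs against the other steps' outputs to build the graph, and the standard-library `graphlib` module (Python 3.9+) then yields a run order in which every step follows the steps it depends on.

```python
from graphlib import TopologicalSorter

# Hypothetical definition, standing in for the definition file: each step
# names its script, the datasets it reads and the datasets it writes.
# Step, script and dataset names are made up for illustration.
PIPELINE = {
    "clean_hospital": {
        "script": "script1.R",
        "inputs": ["hospital_raw"],
        "outputs": ["hospital_clean"],
    },
    "clean_gp": {
        "script": "script2.R",
        "inputs": ["gp_raw"],
        "outputs": ["gp_clean"],
    },
    "weekly_report": {
        "script": "script3.R",
        "inputs": ["hospital_clean", "gp_clean"],
        "outputs": ["report"],
    },
}


def build_dag(definition):
    """Map each step to the set of steps whose outputs it reads."""
    producer = {}
    for step, spec in definition.items():
        for output in spec["outputs"]:
            if output in producer:
                raise ValueError(
                    f"{output!r} is written by both {producer[output]!r} and {step!r}"
                )
            producer[output] = step
    # Inputs that no step produces are raw sources (database, FTP, e-mailed CSV)
    # and therefore add no edge to the graph.
    return {
        step: {producer[name] for name in spec["inputs"] if name in producer}
        for step, spec in definition.items()
    }


def execution_order(definition):
    """Return an order in which every step runs after the steps it depends on."""
    # static_order() raises graphlib.CycleError (a ValueError subclass) on a cycle.
    return list(TopologicalSorter(build_dag(definition)).static_order())


print(execution_order(PIPELINE))
```

Raw sources such as `hospital_raw` have no producing step, so they add no edges. A cycle, or an output declared by two different steps, is rejected before anything runs.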

Scaling across the organization

The next step is to design a framework around that which scales across the organization. This is what I mentioned earlier: the epidemiologists actually need to be involved. The reason is that we're not just doing influenza; we have a lot of diseases to monitor, each with its own quirks and unique data sources. It is really the epidemiologists' task to stay close to each data source and be in direct contact with its providers.

There's a lot of emailing back and forth that we data scientists can't take on, so we rely on the epidemiologists to know what's happening, and our job is to make their lives easier. So we have to create a framework where they can focus on just the most important steps. They have to understand what they need to create, and we help them break it down into smaller steps and build the graph. That way they can focus on what script one does and what script two does, in this example, and we try to abstract away everything around it.

One of the most important things missing from the COVID-19 scripts, as I mentioned earlier, was logging, so we simply add it as a layer. We also need to get from the definition file to the DAG in the first place, so that's something we handle. And we help with importing and exporting the data files. We try to set things up so that it doesn't matter what kind of data you have or how you bring it in, and so that exporting stays just as flexible in the future.
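One way to make import and export independent of the file format is a small registry of readers and writers keyed by file extension. A step then asks for records, and the framework picks the right handler. The sketch below is a hypothetical Python illustration, not RIVM's package. `import_data`, `export_data`, and the registries are invented names, only CSV and JSON are registered, and the records are made up.

```python
import csv
import json
import tempfile
from pathlib import Path

# Hypothetical format-agnostic I/O layer: steps ask for "records" and the
# framework picks a reader or writer based on the file extension. Only CSV
# and JSON are registered here; a real layer would also cover databases, FTP.


def _read_csv(path):
    with open(path, newline="", encoding="utf-8") as handle:
        return list(csv.DictReader(handle))


def _write_csv(records, path):
    fieldnames = list(records[0]) if records else []
    with open(path, "w", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=fieldnames)
        writer.writeheader()
        writer.writerows(records)


def _read_json(path):
    with open(path, encoding="utf-8") as handle:
        return json.load(handle)


def _write_json(records, path):
    with open(path, "w", encoding="utf-8") as handle:
        json.dump(records, handle, indent=2)


READERS = {".csv": _read_csv, ".json": _read_json}
WRITERS = {".csv": _write_csv, ".json": _write_json}


def _lookup(registry, path):
    suffix = Path(path).suffix.lower()
    if suffix not in registry:
        raise ValueError(f"no handler registered for {suffix!r} files")
    return registry[suffix]


def import_data(path):
    """Read a list of records from any registered file format."""
    return _lookup(READERS, path)(path)


def export_data(records, path):
    """Write a list of records to any registered file format."""
    _lookup(WRITERS, path)(records, path)


# Round trip with made-up records: CSV in, JSON out, same records back.
with tempfile.TemporaryDirectory() as tmp:
    csv_path = Path(tmp) / "hospital.csv"
    export_data([{"week": "2023-38", "admissions": "12"}], csv_path)
    records = import_data(csv_path)
    json_path = Path(tmp) / "hospital.json"
    export_data(records, json_path)
    round_trip = import_data(json_path)

print(round_trip)
```

With this design, supporting a new delivery format means registering one more reader or writer, without touching any step's script. CSV values come back as strings, so a real layer would also need to handle column types.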

So, besides making the DAG and the separate scripts, the epidemiologists just have to call run_pipeline(), the counterpart of tar_make() in the targets package. In the back end, we created an internal tooling package containing that run_pipeline() function. It restarts the logs, interprets all of the steps, and loops through the DAG to import the data, execute each script, and export the data.
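Below is a minimal Python sketch of what such a runner could do. The `run_pipeline` name follows the talk, but its parameters and behavior here are assumptions, not the internal package's API. Plain callables stand in for the epidemiologists' scripts, and a dict plays the role of the data layer. The runner restarts the log, orders the steps from their declared inputs and outputs, and then imports, executes, and exports for each step.

```python
from graphlib import TopologicalSorter


def run_pipeline(definition, steps, store):
    """Hypothetical runner modelled on the description in the talk.

    definition: step name -> {"inputs": [...], "outputs": [...]}
    steps:      step name -> callable(dict of inputs) -> dict of outputs,
                standing in for the epidemiologists' scripts
    store:      dict acting as the data layer (imports read from it,
                exports write to it)
    Returns the run log as a list of strings.
    """
    log = []  # restart the log: every run begins with an empty one
    producer = {
        output: name for name, spec in definition.items() for output in spec["outputs"]
    }
    dag = {
        name: {producer[i] for i in spec["inputs"] if i in producer}
        for name, spec in definition.items()
    }
    for name in TopologicalSorter(dag).static_order():
        spec = definition[name]
        log.append(f"start {name}")
        try:
            inputs = {key: store[key] for key in spec["inputs"]}  # import
            outputs = steps[name](inputs)  # execute
            for key in spec["outputs"]:
                store[key] = outputs[key]  # export
        except Exception as error:
            # Stop at the first failure. Outputs of steps that already
            # finished stay in the store, and the log names the broken step.
            log.append(f"failed {name}: {error!r}")
            return log
        log.append(f"done {name}")
    return log


# Made-up two-step example: drop missing counts, then sum them.
definition = {
    "clean": {"inputs": ["raw_counts"], "outputs": ["clean_counts"]},
    "summarise": {"inputs": ["clean_counts"], "outputs": ["weekly_total"]},
}
steps = {
    "clean": lambda data: {"clean_counts": [n for n in data["raw_counts"] if n is not None]},
    "summarise": lambda data: {"weekly_total": sum(data["clean_counts"])},
}
store = {"raw_counts": [3, None, 4]}
log = run_pipeline(definition, steps, store)
print(log)
print(store["weekly_total"])  # 7
```

Because the log records which step failed, a broken run points directly at the responsible script. The scripts were run at 10 a.m. during COVID-19 without any logs, so this was missing back then.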

Extensions and current status

That brings us to the third step: leaving room for extensions. This is the pipeline as I showed you before, and we wanted to allow for specific extensions that are really important to us. For example, you can imagine they want to visualize the DAG, which is relatively simple to do, so that you can see what they're doing. You might want to add static code checks as an option, to make sure the scripts do what they say they do. And a very important one is adding metadata: when a script runs, metadata can be collected and published to an internal RIVM-wide database, or used for scheduling, for example.

The idea is that the epidemiologists don't have to change their code. They just update our internal package, and run_pipeline() then adds these extra features. The static code check would be something like a check_steps() function, metadata collection would just be one more part of the loop, and visualization could be a separate function. And of course, there are many more ideas floating around that we want to implement.
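To illustrate the kind of static check described here, a hypothetical `check_steps()` could compare what each script actually reads and writes with what its step declares in the definition. The sketch below is in Python. It assumes an invented convention in which scripts only access data through `read_input("name")` and `write_output("name", value)`. Neither helper is a targets function, and the talk does not say that RIVM's package uses them.

```python
import re

# Hypothetical convention for this sketch: scripts only touch data through
# read_input("name") and write_output("name", value). These helper names are
# invented here; they are not real RIVM or targets functions.
READ_CALL = re.compile(r'read_input\(\s*["\']([^"\']+)["\']')
WRITE_CALL = re.compile(r'write_output\(\s*["\']([^"\']+)["\']')


def check_steps(definition, sources):
    """Compare what each script reads and writes with what its step declares.

    definition: step name -> {"inputs": [...], "outputs": [...]}
    sources:    step name -> source text of that step's script
    Returns a list of human-readable problems (empty if everything matches).
    """
    problems = []
    for step, spec in definition.items():
        code = sources[step]
        read = set(READ_CALL.findall(code))
        written = set(WRITE_CALL.findall(code))
        declared_in, declared_out = set(spec["inputs"]), set(spec["outputs"])
        for name in sorted(read - declared_in):
            problems.append(f"{step}: reads undeclared input {name!r}")
        for name in sorted(declared_in - read):
            problems.append(f"{step}: never reads declared input {name!r}")
        for name in sorted(written - declared_out):
            problems.append(f"{step}: writes undeclared output {name!r}")
        for name in sorted(declared_out - written):
            problems.append(f"{step}: never writes declared output {name!r}")
    return problems


# Made-up example: the script quietly reads a dataset it never declared.
definition = {"clean_gp": {"inputs": ["gp_raw"], "outputs": ["gp_clean"]}}
sources = {
    "clean_gp": """
raw <- read_input("gp_raw")
population <- read_input("population")
write_output("gp_clean", raw)
"""
}
print(check_steps(definition, sources))
```

A text-based regex check like this misses dataset names built at runtime, for example with `paste0()`. A sturdier version would inspect the parsed R code rather than the raw text.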

So what is the current status? We have now finished fully automating the flu surveillance, at least in the form of the minimum viable pipeline, and we have started on 20 new pipelines. So there's quite a lot happening in parallel: many epidemiologists are working at the same time, while we are developing the package. So we're quite busy.

The first big extension to the package will be metadata. We're now working on defining what metadata means in our case, and how we store it and share it across RIVM.

To summarize my talk: using collaboration and clever design, we created a scalable framework for disease surveillance that gets us one step closer to preventing future pandemics. Well, preventing... I hope so. But that's a long summary, and I like the seven Ps of the military, so I summarized it even further: planned pathogen processing pipelines power pandemic preparedness. Much easier to remember, right?

Okay, so thank you for your time.

Q&A

The other pipelines you're creating right now: are they still about surveillance, or are you trying to track something else?

Right now, we're mostly focused on getting surveillance out there. Maybe I didn't make this clear enough, but we want everything to be as standardized as possible so that data scientists can keep helping the epidemiologists. If they start building their own thing, it becomes really hard to support them. So it has to be as standardized as possible.

So we're focusing now on getting surveillance pipelines up and running, but there is also modeling happening, which we might extend our functionality to in the future. There's also research going on, which we probably won't support much, because it's unique every time and won't involve much repeated pipeline work. So we're focusing on surveillance first.

And what was the reasoning behind using a YAML file for the definitions?

The targets package uses R code instead. I looked at a few alternatives; I think targets is the only really mature option for R, but other programming languages have similar tools. I just find a definition in YAML much more readable; that's a personal preference. For example, Kedro, a Python package, also uses YAML, I think. So for me it's mostly about readability.