Barret Schloerke: Lessons Learned Testing 2500+ Shiny Apps Every Day

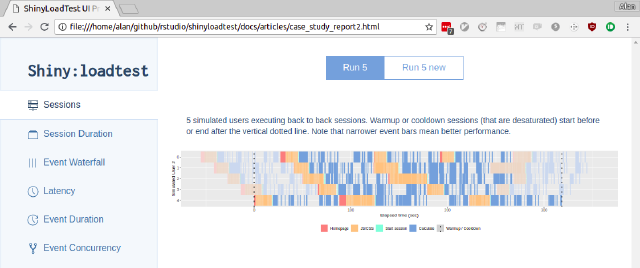

About the Talk: The Shiny team tests 2500+ different combinations of Shiny Applications, R versions, and operating systems to verify no feature regressions occur within the bleeding edge of the Shiny-verse. Over the past few years, we have learned a few lessons to keep our tests robust and honest. There is a non-zero chance that a test failure is not your fault. When working with CI systems, external influences such as installation failures or slower computing power will prevent tests from executing properly. A picture is worth a thousand tests but visual testing is known to have a non-zero false-positive rate. To address this, we have utilized threshold based image comparisons for flaky tests. We have found that it is best to test the minimal amount of content where possible. Changes in App package dependencies can produce unintended changes in expected outputs. These hard learned lessons have reduced unreliable test results allowing the Shiny team to quickly respond to feature regressions.

Speaker's bio: Dr. Barret Schloerke is a Software Engineer at Posit. He currently develops and maintains many R packages in the Shiny ecosystem at RStudio including shiny, reactlog, plumber, learnr, leaflet, and shinyloadtest. Dr. Schloerke received his PhD in Statistics from Purdue University under the direction of Dr. Ryan Hafen and Dr. William Cleveland, specializing in Large Data Visualization.

image: thumbnail.jpg

Transcript

This transcript was generated automatically and may contain errors.

Okay, everyone, we are just chugging right along here. I'm going to thank everybody every time I come out, so just get used to it. So thank you guys so much for sticking around. I think we're seven hours into day one of the 2023 Shiny Conference, and we have two speakers left.

So this next speaker is Barret Schloerke, who in my world goes without needing an introduction, but for anybody who doesn't know who Barret is, he is a software engineer and Shiny developer at RStudio. He currently develops and maintains many R packages in the Shiny ecosystem at RStudio, including Shiny, reactlog, plumber, learnr, leaflet, shinyloadtest.

And you really can't encapsulate how much of an impact Barret has had on Shiny. I'll let him speak to that, but a little bit of a background on Dr. Schloerke is that he received his PhD in statistics from Purdue University, specializing in large data visualization. And today Barret is going to be speaking about lessons learned from testing 2500 plus Shiny apps every day. So Barret, it's such a pleasure to have you once again, and I'll leave the floor to you.

Awesome. Thank you so much for the introduction, Ian. It's always wonderful to see you and present here at the Shiny Conference done by Appsilon. It's such a wonderful conference.

So as Ian said, today is actually going to be a little bit different talk for me. Normally I'm doing technical talks deep into the weeds, and today is going to be a little bit more of a story time. And I think it's kind of fun because I'm going to unveil the curtain behind the great development of Shiny over time. And it's kind of fun to see where we've come from and actually where we're at today.

Background on testing

So just a little bit of background, testing, never heard of them. If this kind of rings a bell for you, I recommend two chapters to check out. There's one in the R Packages book. It's been updated recently. It's now chapter 14, and it's testing basics, and there's a couple of follow-up chapters as well. And then also check out chapter 21 in Mastering Shiny, it's the testing chapter there. Both of those are wonderfully written and give a good explanation of the motivation for testing, why we need tests, and how they can apply to Shiny as well, even if it's for an R package.

And then last year, I presented at ShinyConf, and if you want a refresher on shinytest2 and testthat, which I recommend highly if you have an app or package that has Shiny applications, please watch that presentation at the link there. At the end, I will include a link for my slides, and it has hyperlinks, PDFs, you know, HTML, you can get all the things from there. So don't worry about trying to screenshot or grab things right away.

Also throughout this presentation, do not be discouraged by the amount of testing that we are doing, or the approach that we are doing to testing. The testing that Shiny does is pretty exhausting, both mentally and also in like computing cycles as well. I think it's a bit overkill for virtually everything else. But as a Shiny developer, I'm trying to make sure that all of you have smooth user experiences, even when we are doing updates that are breaking things. And we want to make sure that everything works across packages, because local development may not be the same as across package development.

The early days: manual testing

Yeah, so use shinytest2, you should be good, and that should cover most of it.

Okay, so yes, as Marlene said, yeah, tell me a story, Uncle Barret. Well, all right. Back in 2018, I joined the Shiny team, and that was a fun year. And, you know, did a little bit of work on reactlog, did a little bit of work on leaflet, and then, okay, now we're ready to release Shiny. And I was like, where's the test suite?

And Joe looked at me and said, we have 150 applications, and what we're going to do is everyone on the team is going to stop work for two weeks, and we're going to manually test all 150 applications in as many places as we can do this. And if we find any bugs, what's going to happen is maybe in, like, second day of week two, if you find a bug that we think is critical, which most bugs are, we're going to restart this testing process and keep doing it until we can release. And so this is kind of like a motivation as to why Shiny didn't release all the time. It's because testing took, like, a solid three weeks, maybe four weeks. Sometimes it took six because we just kept finding more and more that would pop up. So it's very, very expensive.

And when we're testing, I know I was guilty of this. Like, if you're given a paper to-do list of, like, install these packages and test these applications, what about, like, if I have another package that's not the latest CRAN version? What if I have, you know, something else? And, like, I know I installed, like, not the bleeding edge, but, like, back two commits that maybe contained the bug, and then I was testing things. And so I wasted two hours because I realized I was behind and I needed to come back to the latest version. And so there's no guarantee that everyone had the best testing environments, and it was really, really expensive for the company. And it was just like, oh, man, so much pain. I hope no one has to do this. I highly recommend shinytest2 to try to at least get out of manual testing.

Formalizing the process in 2020

So a couple years go by, starting to, you know, get a little grumblings and fed up. And in 2020, we started to formalize, you know, what's going on. And so we split the repo into two pieces. One was shinycoreci-apps, where we just kind of hosted all the apps in one spot, and we could adjust them so that they're not necessarily examples, but they're testing apps. So they're going to be boring, they're not going to be flashy, unless that's the goal. And we can start trimming them, chopping them, and adjusting them without having to, you know, worry about shiny-examples changing its original behavior or intent. And then we're going to put the package logic inside rstudio/shinycoreci.

So this was good, because shinycoreci made consistent install methods. But to avoid GitHub tokens and other things between the team members, and on locations where you don't have your tokens, we would still test with a zip file of shinycoreci-apps. And zip files are not up to date, because you would find a bug in the test, and then you would update the test, but now I've got to redistribute the zip files. So we're closer, like, I believe you have the right Shinyverse installed, but I don't know if you have the right testing apps available to you. And so there's just a lot of hiccups there.

And also with this approach, when we had our snapshots, well, they weren't snapshots then, but we'll consider them snapshots. And we would do our expectations. It was a really, really laborious and manual process. In shinycoreci-apps alone, there's over 8,000 commits, and those were always done by a human. To put that into perspective, that's a lot.

So it was really intense, but it was good, because we're starting to get closer and closer to this more automation and getting things done in an automatic way. The bad part was that there wasn't automation there. And so the across-repo bugs would still take six months to find, or we wouldn't find them until we release, which was about every six months.

GitHub Actions and automation

But about this time, though, something came around called GitHub Actions, and GitHub Actions is a continuous integration platform that can automate your workflows, now with world-class CI/CD. That's their tagline. And they also said to build, test, and deploy your code right from GitHub. And I think this is huge, because it's not going to be Travis CI or CircleCI, which, while they worked, were somewhere else and not integrated with your PR and your code and everything else. And so it was really, really intriguing when this came around.

They had some growing pains, but it turned out in their V2 or what we use today to be pretty stable and really intriguing. The better part is that the pricing is free for public repos. It's not free for private, but everything we do within the Shiny team is public. And so, sweet, it's going to be free for us.

There's a repo called r-lib/actions, and it's a collection of GitHub Actions for the R language. And it's currently maintained by Gábor and was originally created by Jim Hester. And it has many actions for you to use, kind of like Lego blocks. You can use them or not use them, depending on your needs. And you can do things like setting up Pandoc, setting up R, setting up R dependencies for your package, and then also checking your R package. And these actions, you can, as I said earlier, you can add in and expand on them, you can adjust them, but also you can do it in a way where you don't need to know what operating system you're on. If you've ever done testing with some platform, you usually have a very, very specific codebase for Ubuntu. You have a very specific codebase for Mac, and you have a different codebase completely for Windows. And here you just say, hey, set up Pandoc. Thank you. Set up R for me. Thank you. And that is really, really powerful that you can just put those in and all that work is hidden underneath.

And the repo also contains many example workflows. If you're checking your R package, check out the standard workflow. That standard workflow is good for a solid 90% of packages. You don't need to do too much because you don't have too many different things. But if you find yourself to be a core package or an underlying package that needs to work on everything, you can look at the full example to test on all the different platforms that will be used. I would argue, though, if you are just testing your Shiny application, ubuntu-latest is wonderful. By using ubuntu-latest, most likely that's how your Shiny app is hosted. So that's how you should test it. I know developers may have a different machine like a Mac, so maybe you do Mac and Ubuntu, but it's only two flavors rather than like 15 different combinations.
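For an R package, the standard workflow mentioned here can be copied into a repository from R itself. This is a minimal sketch, assuming the usethis package is installed and the working directory is a package project tracked with Git. The wrapper name add_check_workflow is made up for illustration and is not something from the talk.

```r
# Hypothetical helper: copies one of the r-lib/actions example workflows
# into .github/workflows/ of the current package project.
add_check_workflow <- function(full = FALSE) {
  # "check-standard" suits most packages; "check-full" covers more
  # operating system and R version combinations.
  workflow <- if (full) "check-full" else "check-standard"
  usethis::use_github_action(workflow)
}

# Typical use, run once from the package root:
# add_check_workflow()
```

The resulting YAML file is an ordinary text file in the repository, so the operating systems and R versions in its matrix can be trimmed afterwards, for example down to Ubuntu only for a single Shiny app.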

Testing Shiny in 2023

So this brings me now to testing Shiny in 2023. And oh, by the way, GitHub has this ability to create your own Octocat. So this is now the shinycoreci Octocat, and I think it's a lot of fun. Got a little shiny blue face and testing red with hopefully green check marks in the eyes.

So the repo now is still shinycoreci. We got rid of the apps repo and all the apps got merged into the main repo. That way we can just install shinycoreci, and there's infrastructure already around there from pak or remotes to install from GitHub, and it'll tell you if you're on the latest version or not. So it just makes sense having everything all in one spot. And since it's on GitHub, we can now leverage GitHub Actions and the r-lib/actions repo. This allows us to have consistent install methods and consistent deploy methods, because it's on GitHub Actions, if we're deploying off to shinyapps.io or Connect or someplace. And so now this gives me full trust in every testing environment, because I already know I can't trust me. So I don't believe I can trust you, just by extension. And now that we have these consistent methods, I make it really difficult for you to be able to alter that testing environment.

Since we have all this, we now have expanded on those apps and now we're testing 170 applications, at least. We do it on five different R versions because, similar to the tidyverse, we want to support the five stable R versions. So we have release, oldrel-1, 2, 3, and 4. So kind of like today, this would be R version 4.2, all the way back to R version 3.5. We do this on three different operating systems because as a user, you will be using Shiny on all three operating systems, even though, like, as a standard package developer, I would say just Ubuntu is good enough. So if we take these apps, R versions, and operating systems, we have over 2,500 combinations in total. And these combinations are actually executed every work night. So we have five nights a week. And so this allows us to expose bugs within 24 hours of merging a PR. We'll pull in the latest main branches from all the Shinyverse, I think it's like 20 different repos, and see if anything interacts.
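The count of combinations follows directly from the three dimensions. As a small sketch in base R, with placeholder app names (the real app names and the way shinycoreci builds its matrix are not shown in the talk):

```r
# Placeholder app names; the real suite has at least 170 apps.
apps <- sprintf("%03d-app", 1:170)
r_versions <- c("release", "oldrel-1", "oldrel-2", "oldrel-3", "oldrel-4")
operating_systems <- c("macOS", "Windows", "Ubuntu")

# One row per (app, R version, operating system) combination.
test_matrix <- expand.grid(
  app = apps,
  r_version = r_versions,
  os = operating_systems,
  stringsAsFactors = FALSE
)

nrow(test_matrix)
#> [1] 2550
```

So 170 apps on 5 R versions and 3 operating systems gives 2,550 combinations, which matches the "over 2,500" figure.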

Because I like statuses, I've actually put a lot of custom work into recording the results, and you can actually see them here on shinycoreci's results page. And this is actually a screenshot of a clean day, where all the apps that we tested on all the different combinations did not fail, none of them, zero failures. I was very, very excited.

That doesn't happen often, because, if you're looking at, you know, modeling and things like that, if there's just a small chance of a false positive, and you have like 1,000 of these, then most likely one of them will fail. So having all green lights was wonderful for this one day.
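To put a number on that intuition, here is a short sketch. It assumes the checks fail independently and uses a made-up false-positive rate of 1 in 1,000 per check. Neither assumption comes from the talk, and real CI failures are often correlated.

```r
# Chance that at least one of n independent checks fails spuriously,
# given a per-check false-positive rate p.
prob_any_false_failure <- function(p, n) {
  1 - (1 - p)^n
}

# Assumed rate of 1 in 1,000; 2,550 is 170 apps x 5 R versions x 3 OSes.
prob_any_false_failure(p = 0.001, n = 2550)
```

Under those assumptions the result is roughly 0.92, so a fully green night would be expected less than one time in ten even if nothing were actually broken.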

Yeah, so this is actually today's results. So just to cherry pick, I know Maya is like, oh, you know, nothing's like always perfect. Well, here's a wonderful example, actually, in that the last two days, I haven't been able to actually install on Linux. So there's some issues going on there. We have tables of all of the apps and their statuses, and the status reports over the past 10 days. And so this little matrix here will tell us that this column is Macintosh, this is Windows, this is Linux or Ubuntu. And then the different rows are the different R versions. There's actually a lot of issues right now with R 3.5, because a lot of people are depending on R 3.6 or higher. So we're currently not testing there.

Testing costs, just so you're aware, if it's 45 minutes per testing run, 15 different combinations, five days a week, 52 weeks a year, that's roughly 3,000 CI hours a year for fees and pricing. Open source, it's free, which is awesome. If you're doing it in private repos, if shinycoreci was to be purely private, it'd be about $3,000 a month. So not crazy, not terrible, but it is expensive. But 75% of that cost is for macOS. So if you actually just did Ubuntu testing, it'd be like $300 a month. And I think that is very reasonable for the amount of hours that is being executed.
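The CI-hours figure can be checked with the round numbers quoted. This sketch assumes the 15 combinations are the 5 R versions times the 3 operating systems, which the talk implies but does not state outright.

```r
minutes_per_run <- 45
combinations <- 15      # assumed: 5 R versions x 3 operating systems
nights_per_week <- 5
weeks_per_year <- 52

ci_hours_per_year <-
  minutes_per_run / 60 * combinations * nights_per_week * weeks_per_year
ci_hours_per_year
#> [1] 2925
```

That is 2,925 hours with these inputs, in the neighborhood of the 3,000 hours quoted. Runs that take a little longer than 45 minutes would push it past that mark.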

And release time now, because bugs are being found when they come in, is less than two days with four developers. And this is because we need to go around to different integrations like Workbench, shinyapps.io, Shiny Server Open Source or Pro, which aren't there anymore, Workbench, Connect, IDE. So we have to manually go do those. We can't get around that. And I think two days for four developers is not bad. But it does add a cost of weekly maintenance. But the confidence is through the roof when we release, because we know the bugs have been fixed as they've come in.

Lessons learned

So lessons learned. Number one, minimize the number of screenshot expectations. Like in The Office, GitHub will tell you, hey, I need you to find the difference between these two images. They look the same, but they're not. So I'm actually going to play this game with you. I will give you five seconds. Spot the difference.

Did you find the difference? This is this little white space right here. That little white space causes a diff to look like this. So these images are different, and they are truly different. But what happens a lot is extra little things. These two images, in a much more favorable comparison, can you spot the difference?

Just like in The Office, Intel said there's seven differences. Differences are actually highlighted in red. And these are not really differences. They're more mistakes from the slider library or issues from there. So screenshots are hard. Try to minimize them, because little things like this will come up.

Lesson two, a failure may not be your fault. And similar to the slider one, so yeah, I know it's not my fault. But it's still my problem. And this is because the red dots will show up everywhere. We'll have failure messages everywhere. Get constant emails from GitHub Actions that, hey, your testing failed. But this is because things like GitHub Actions routinely updates their CI machines. And slow machines make client-side timing difficult, like if you have CSS animations with moving bars or modals or things like that. And then there's also lots of trouble with Ubuntu updating their system fonts.

I know I've talked about this with shinytest2, but let's see if you can spot the difference on this one. I'm actually going to just switch slides and have the images overlaid. All right, so here's baseline and new, baseline and new, baseline and new. There's not much of a difference, but you can actually see that the baseline has like a boldish font in Alabama, and then the new has like a thinner font. So the font weight was reduced, and that was a system change. Like, can't fix it.

Test failure, test opportunity. Nah, that's test failure. So a recommendation is to actually use threshold. This will allow for minor differences when comparing screenshots within shinytest2. So in this code here, in expect_screenshot() with shinytest2, just say threshold is like two or something less than five. If it was zero, then we would say the images have to be exactly the same. In the case of the modal dialog, we are doing this because rounded corners were being different because they were rounding to the left or to the right sometimes, and so we just wanted to make sure that they were not throwing failures when comparing the images. Please check out the docs in shinytest2 for compare_screenshot_threshold(). This will help you see what values you need to use or even what methods you can use to expose how different those images are.
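The code on the slide is not in the transcript, so the following is only a sketch of what a thresholded screenshot expectation might look like. The helper name, the app directory argument, and the show_modal button id are hypothetical. The threshold value of 2 is the one mentioned in the talk.

```r
# Hypothetical helper; call it inside a testthat test_that() block so the
# screenshot expectation has a snapshot context to record into.
expect_modal_screenshot <- function(app_dir) {
  app <- shinytest2::AppDriver$new(app_dir, name = "modal")
  on.exit(app$stop(), add = TRUE)

  app$click("show_modal")  # hypothetical actionButton id that opens the modal
  app$wait_for_idle()      # let the app settle before taking the picture

  # A small positive threshold tolerates minor pixel differences, such as
  # rounded corners that render slightly differently between runs.
  app$expect_screenshot(threshold = 2)
}
```

Keeping the threshold small matters: a large value would also hide real visual regressions, which is why the talk suggests staying below about five and checking the shinytest2 documentation for how to pick a value.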

Lesson three, test values that you can control. Yeah, Kaylee has totally said this in the movie. So test those values that you can control. So if there are dependency changes, you know, they will produce new output values like in Plotly. So Plotly will change, you know, its internals, and that's not your fault. And so really what you should be doing is possibly testing the dataset that's going into Plotly and assuming the plot will work. So use shiny::exportTestValues() to expose those dataset values from your server side. They won't be exposed anywhere else except in testing mode, so you're safe to expose possibly dangerous things.

And then also, you can do snapshot excluding on your outputs, or you can pre-process your input or output, and I'll show you a quick demo of pre-processing your snapshot output. And the snapshot is the JSON values that are saved to disk when you call expect_values() within shinytest2.
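As an illustration of testing the data rather than the plot, here is a sketch of a server function that exports the dataset feeding a Plotly output. The input id n, the output id my_plot, and the use of the cars dataset are assumptions made for the example, not the speaker's app.

```r
server <- function(input, output, session) {
  # Dataset that feeds the plot; input$n is a hypothetical numeric input.
  plot_data <- shiny::reactive({
    head(cars, input$n)
  })

  output$my_plot <- plotly::renderPlotly({
    plotly::plot_ly(
      plot_data(),
      x = ~speed, y = ~dist,
      type = "scatter", mode = "markers"
    )
  })

  # Exposed only when the app is running in test mode.
  shiny::exportTestValues(plot_data = plot_data())
}

# In a shinytest2 test, snapshot the exported data instead of the plot:
# app$expect_values(export = "plot_data")
```

With this setup, an update to Plotly's internal representation leaves the snapshot untouched, while a change to the filtering logic still shows up as a test failure.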

So running out of time, so that's why I'm kind of rushing here. So in a Plotly example from shinytest2 internals testing, we actually, this is adjusted for the demonstration, but what we do is normally you'd say output my plot, and you'd have renderPlotly, and you do your plot. Great. But we can then pipe this into shiny::snapshotPreprocessOutput(), and what it'll do is it will provide that JSON object. So we're going to parse that JSON. We're only going to pull out the X data, the first data, and only the X and Y columns, and hopefully, we can get back the cars1 and cars2 for our X and Y data. And this way, it allows Plotly to do whatever it wants internally, and we're not testing against it. So it can make changes freely without breaking our tests.
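The slide code is not part of the transcript, so this is a reconstruction under stated assumptions: that the value handed to the preprocessing function is the widget's JSON string, and that the first trace sits at x$data[[1]] in that payload. The function name keep_first_trace_xy and the output id my_plot are hypothetical.

```r
# Keep only the x and y values of the first trace from the widget's JSON.
# Assumes `value` is a JSON string shaped like {"x": {"data": [ ... ]}}.
keep_first_trace_xy <- function(value) {
  parsed <- jsonlite::parse_json(value)
  parsed$x$data[[1]][c("x", "y")]
}

server <- function(input, output, session) {
  # Wrap the render function so snapshots record the reduced value only.
  output$my_plot <- shiny::snapshotPreprocessOutput(
    plotly::renderPlotly({
      plotly::plot_ly(
        cars,
        x = ~speed, y = ~dist,
        type = "scatter", mode = "markers"
      )
    }),
    keep_first_trace_xy
  )
}
```

The preprocessing only affects what is written to the test snapshot. The browser still receives the full Plotly payload, so the app itself behaves the same.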

And finally, lesson four, routine maintenance is maintainable. I know this is a little bit of, like, tautology, but if you don't do routine maintenance, you might make a PR so big that GitHub won't let you merge it because you can't view the website. It finally rendered about 15 minutes later. It was a lot of fun to get that in.

So lessons learned. Minimize your screenshots, the count of them. Just because it failed doesn't mean it's your fault, but it's still your problem. And test values that you can control and perform routine test maintenance to keep on top of it.

Q&A

All right, Barret, well done, as always. I especially love that Brad Pitt from Troy meme that's had me giggling for a second here. So we're going to be slim on time for questions, but the first one I wanted to ask comes from Eric Hans. What are your thoughts on other services that offer similar capabilities, like GitHub, sorry, GitLab CI? Well, I'm just trying to see how much of this is, like, GitHub Actions specific, and could this be generalized to other services?

I'm not fully familiar with GitHub or GitLab CI. It is very similar. I know Mr. Novartis uses it thoroughly. I have run into issues with promote on it, but I can't reproduce it, can't debug it, so it's really frustrating. But that also happens with GitHub Actions. So if it's integrated in your system and it's less friction for you, by all means, use it. If you're automating things, that's the goal here. Less manual work, more automation.

Okay, makes sense. And we also got a comment from Paul Ruki. I can see that shinycoreci is testing logistics. Where are your actual tests? They are actually within each app. So if you're on the repo, it's inst/apps, like, let's say, 001-hello, and then there's another testing file structure within that app itself. That's actually where I'm going with package helping later, or package enhancements for shinytest2 later. Those tests may move into your package structure, but for the most part, shinycoreci, let's just pretend it's custom and not what I will recommend for users going forward.

Okay, great answer. All right, Barret, I wish we had more time for questions because your input is always incredibly valuable. So I'll just go ahead and end and say, you know, thank you once again for joining us at this 2023 Shiny Conference. And for everybody in the audience, we have one more speaker, it's going to be Peter Solymos. And we'll be back in just one minute with that conversation. Thanks, Peter. Thank you so much.