How to Use {pointblank} to Understand, Validate, and Document your Data

Workshop recorded as part of the R/Pharma Workshop Series (October 27, 2022)

Instructor: Rich Iannone (Posit)

Resources mentioned in the workshop:

- https://github.com/rich-iannone/pointblank-workshop
- https://github.com/kmasiello/pointblank_demo

Transcript

This transcript was generated automatically and may contain errors.

That's the right one I just gave you in the Zoom chat. Then once you go there, you should be able to access it with this information here. You also can just go to slido.com and input the information that I just gave in the Zoom chat.

Okay, I don't want to steal any more time, I'm going to pass it over to my colleague Rich. Really excited to have him here giving the workshop. Thank you, Rich, for all that you do for the open source community, and with that, it's all yours.

Okay, thanks so much, Phil, and thanks everybody for attending. I'm going to share my screen right away so I can get right into it.

Great, so the first thing you should see besides my Zoom and my calendar is this web page. Basically, all the exercises are here on this repo, pointblank-workshop, and the link to the cloud project is here. Follow along if you want with the cloud project, it's the same stuff that's in here. This repo will always exist, the cloud project will always exist, which is great. So there's a life outside this workshop for these materials.

You can either follow along in the cloud by executing the different parts of the RMDs, or you can just watch. It's totally up to you. There's no exercises. There's a ton of material, though, so we need to get started. It's a three-hour workshop. I made... I mean, this is the first time it's being delivered, so I'm not sure if it's too much material or too little material. I think it may be, hopefully, right in the middle, but we're about to see.

Introduction to data validation

Okay, so we have a number of modules I'm going to go through, and basically, they are these RMD files right here. First, we're going to have an introduction into data validation. So I'm going to skip right to that RMD, which is right here.

Okay, so we're going to start with data validation, and that's data validation of tables. The whole package just deals with tables, not lists or vectors. So the scope is very much like data tables.

So common workflow that we start off with is involving an agent. So what's an agent? Well, it's kind of like the main data collection and reporting object. So you define that, you give it a table, you declare a number of validation steps, and we do that using validation functions, and we'll learn about how to do that. And then we interrogate the data. So that's where, essentially, we are actually applying the rules that we've set here in the middle part, right here. So we're going to see whether our validations, you know, what they yield in terms of like test results.

Okay, so we always start with `create_agent()`. That's how we define how the data can be reached and give it some basic rules. We end with `interrogate()`. So the major construction is right here: you create the agent object, follow it with a number of validation functions, and then close with `interrogate()`. And we can use pipes all the way through, which is nice.
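The three-part construction described here can be sketched as follows. This is a minimal sketch rather than the workshop's exact code: the label and the single validation step are illustrative assumptions, and it relies on the `small_table` dataset that ships with pointblank.

```r
library(pointblank)

# 1. Create the agent and point it at a table
# 2. Declare one or more validation steps
# 3. Interrogate: run the declared steps against the data
agent <-
  create_agent(
    tbl = small_table,
    tbl_name = "small_table",
    label = "Workflow sketch"  # illustrative label, not from the workshop
  ) |>
  col_vals_gte(columns = vars(d), value = 0) |>
  interrogate()

# In an interactive session, printing `agent` shows the report in the Viewer
```

The native pipe `|>` is used here only to keep the sketch free of extra dependencies; the magrittr pipe works the same way.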

So in the actual package, we have a dataset called `small_table`. It's a really, really small table. I'm going to show that to you right now. It's essentially this. It's great because it has columns of different types and it has some missing values. It's a great table to experiment with when using the functions from the package.

So we have that, and we're going to use it in the next example right here. We're going to make an object called `agent_1`, starting off with `create_agent()`. The table argument is the first one, and we give it `small_table`. This is like a little name for the report. So basically, what happens in pointblank is you get a report in your viewer. You can publish this report, or we can just use it as it is, but we can feed it different little things like the name of the table, like a friendly name, and the label for the validation. So this is quite nice to have.

I'm going to run everything in my console. The reason is, I don't want the report to appear here, I want it to appear in this giant viewer area. So I'm just going to mainly copy and paste many times. So I can just type agent one, print it, and the printing actually happens in the viewer. So we see right here, we have, well, kind of like a whole lot of nothing. We have like a title, we have, you know, our label that we put in, it knows what table we have. But it says no interrogation performed. It's because we didn't do that yet. So at least it gives you something. So this is like an empty table.

Validation functions

So, validation functions. That's kind of like the main event when it comes to validation in pointblank. We have a ton of these functions. A lot of them begin with `col_`, but some begin with something else. So how would we read these? Basically, this function, `col_vals_gte()`, says we want column values greater than or equal to some value. And we're focusing on column `d`. So what it'll do is go into each and every cell within that column and check that the values are at or above zero.

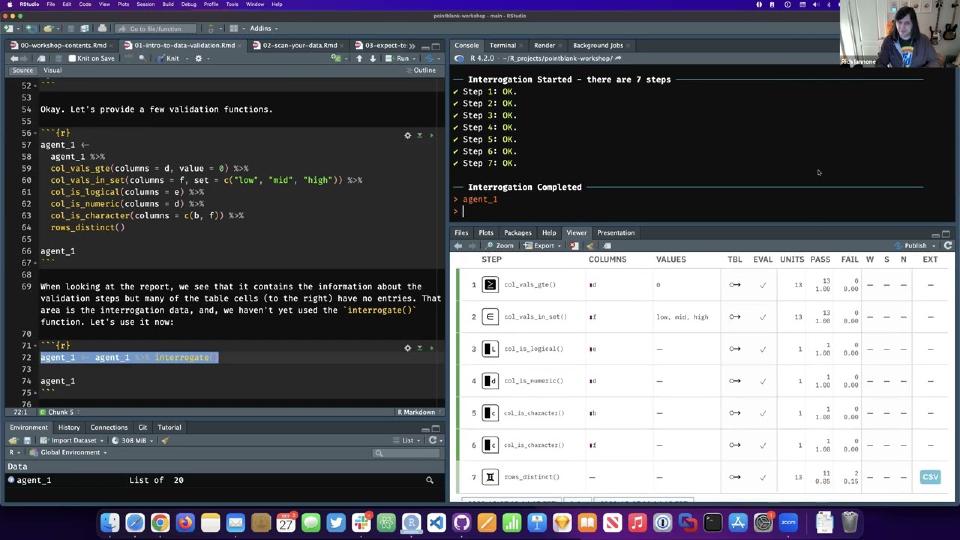

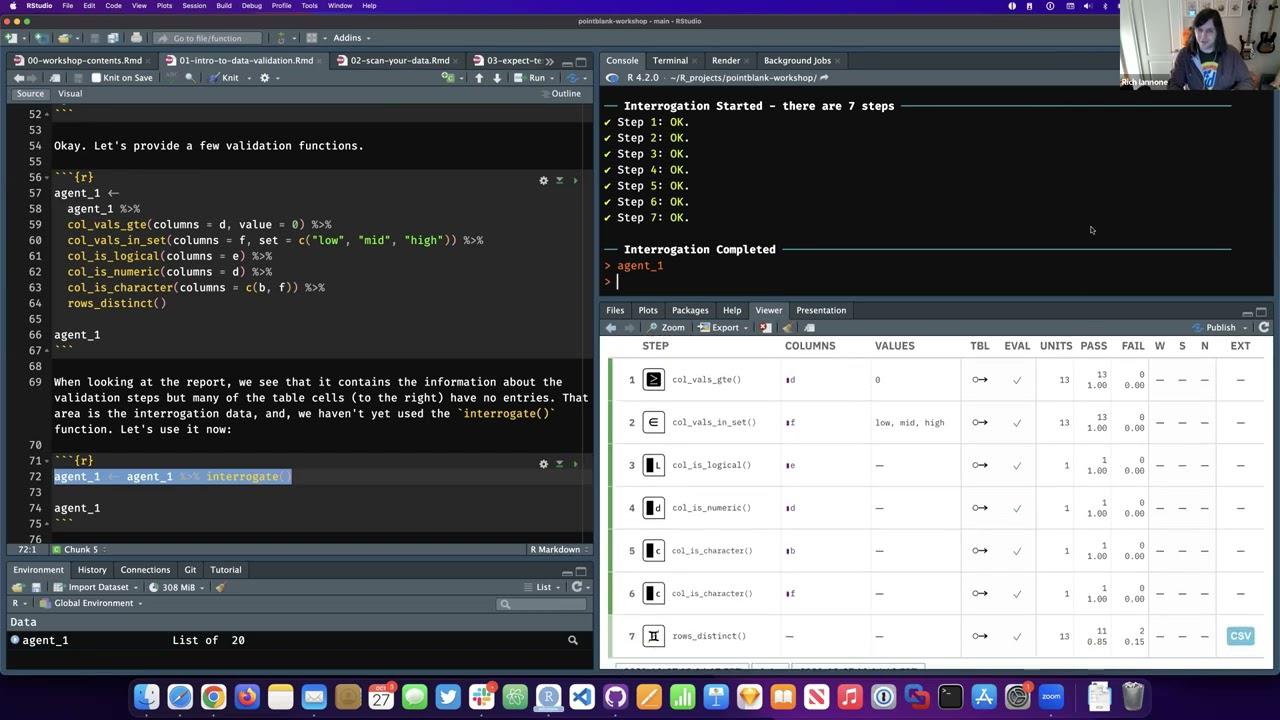

This is a different one. This is `col_vals_in_set()`. What it's saying right here is that in column `f`, we expect the values to be in this set, this predefined set. There's a bunch of `col_is_*()` functions. We'll get into these a little bit later, but it's good to explain them right away. They check the column type. So we expect that column `e` is logical, column `d` is numeric, and `b` and `f` are character. And we have another function here. It's also a validation function: `rows_distinct()`. So we're just checking for duplicate rows. If there are any duplicate rows, that's going to be a failure. Not an entire failure, but a failure of test units. More on that in a sec.

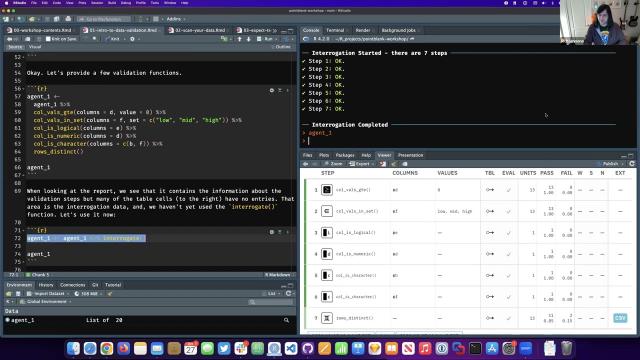

So let's actually run this. I'm going to run it right here. And then agent one will go here into the console so we can see it printed in the viewer. Great. Okay. Now we're starting to see things. More than before. We have basically a test plan right here. We have our functions coming in. We have the columns that we're using. So it kind of mirrors what we have here on the left we put in. But we have nothing on the right. This is all empty. It's, you know, it's because we didn't actually interrogate the table yet. You know, we're one step away from that. So we're building up the plan. And our next step is just to interrogate.

So it's basically this function, interrogate. And then great. It shows you some stuff on the console, which is good. Kind of like a preview of what's to come. And then agent one again. Great. Now we see things. We see that we have stuff on the right and we have, you know, all sorts of data here from the interrogation. I'm going to get into how to read this table, make it a little bit bigger so you can see more of it.
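Putting the plan and the interrogation together, the first agent might look like the sketch below. The step arguments follow what is described in the talk; the label text and the exact set values are assumptions.

```r
library(pointblank)

agent_1 <-
  create_agent(
    tbl = small_table,
    tbl_name = "small_table",
    label = "Workshop agent No. 1"  # assumed label
  ) |>
  col_vals_gte(columns = vars(d), value = 0) |>
  col_vals_in_set(columns = vars(f), set = c("low", "mid", "high")) |>
  col_is_logical(columns = vars(e)) |>
  col_is_numeric(columns = vars(d)) |>
  col_is_character(columns = vars(b, f)) |>  # expands to one step per column
  rows_distinct() |>
  interrogate()

# Printing `agent_1` in an interactive session renders the report in the Viewer
```

Note that a validation function given several columns produces one step per column, so this plan yields seven steps in the report.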

Reading the validation report

Okay. So there's quite a few things here. I've got it all written down here so you have that for reference later, but I'll just go through it pretty quickly. We have the step. Essentially, this is a step index, and we have the function that was used as the basis for this validation step. So each row is a validation step. The columns and the values: this is common to most of these functions. You see `rows_distinct()` doesn't have that, because it's checking across rows instead of columns. But essentially, this mirrors the arguments you put in.

This right here. So there's a feature in pointblank where you can modify the table in a step. So let's say you just want to filter it a bit or add a column, a computed column, and then validate on that. You can do that. This just shows that there were no modifications made. We didn't use that feature. Eval just means that everything was good in terms of the interrogation, the evaluation of the table. Nothing unexpected happened. Good to see. You want to know that things worked before you validate things. So this is very important to have.

Units. This is a big thing. Units are these atomic units of tests. So this one has 13. That means there were 13 cells checked in total. All 13 individual checks within this step passed, so the pass fraction is one. And none failed, so the fail fraction is zero. The only time we have failures, it seems, is in `rows_distinct()`, because we have duplicate rows. So we see that right here. We have some failures.

What this also does is show you this EXT column. That means extract. It gives you a CSV of the failing rows. So you can just click that, get the CSV file, and then see which rows are duplicates. This is good for people not using R. If you just want to show somebody this table, and they're somebody who's not using pointblank, they can at least get to the root cause of what's causing failures.

So that's pretty much it. These things right here, W, S, and N, are different states. They're indicators. We haven't set any of these yet. We will. But these are thresholds that signify different states. So this W is warn. This is stop. This is notify. And if we set them and we get a number of failures which breaks the threshold, we see that's filled in and we get some indicator of that. And that's a good way of seeing whether things are important. You can act on those things. It's very flexible in that way.

Action levels and thresholds

So let's actually make this useful by setting threshold levels. Basically, we want to use the `action_levels()` function to do this. Within that, there are three arguments, and you can use any number of them: one of them, all three of them, it's totally up to you. And these are fractions right here. We can also use absolute numbers, like 1 to act at the first failure. But here we're just using fractions. So basically, they're compared with this number right here, which is, again, under the fail column. So we're going to warn when 15% of test units are failing per step; we're going to stop, not really stop, it'll just indicate in the S column, when 25% of test units fail; and notify at 35%.

Okay. So this is pretty benign because it doesn't actually do any of these things. You could set an action for when each of these states is entered with another argument, but we're not going to do that because it's a bit too complicated for this workshop. We're going to do this at least. So we're going to make that object from `action_levels()`. We can actually print it and see the results. It gives you an indication of what you did right there. So: warn failure threshold of 0.15 of all test units.

So this is a default for every single step, and you could, in a given step, change that, but we're just going to run with the default for all test units like this. Okay. So agent two is going to be very similar to the first one. We're going to have a few more of these validation functions within, like this regex one, for instance. But the key thing that's different here is in `actions` inside `create_agent()`: we're going to introduce that action levels object, which is right here.

So let's actually run this, and then I will copy this over here and we'll see the new report in the viewer. Okay. So, quite a bit different. We have more stuff happening. Basically, we have these action levels right here. These are failure thresholds as fractional values. So we see right here in step two, we have a little bit of yellow here on the left, which signals a warning, and we see that this is filled in right here because we have more than 0.15 of units failing. So we get the CSV as before. This happens when there are any test failures, but we see these when a threshold has been broken through.
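A sketch of default thresholds applied to every step is shown below. The fractions come from the talk; the label and the regex pattern are hypothetical stand-ins for whatever the workshop materials use.

```r
library(pointblank)

# Thresholds as fractions of failing test units per step
al <- action_levels(warn_at = 0.15, stop_at = 0.25, notify_at = 0.35)

agent_2 <-
  create_agent(
    tbl = small_table,
    tbl_name = "small_table",
    label = "Workshop agent No. 2",  # assumed label
    actions = al                     # default for every step
  ) |>
  col_vals_gte(columns = vars(d), value = 0) |>
  # Hypothetical pattern, chosen only to illustrate a step that can fail
  col_vals_regex(columns = vars(b), regex = "^[0-9]-[a-w]{3}-[2-9]{3}$") |>
  rows_distinct() |>
  interrogate()
```

Printing `al` on its own echoes the thresholds back, which is a quick way to confirm what was set before attaching it to an agent.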

`col_vals_regex()` really failed a lot; more than half of its test units failed. So we get all three of them being filled in. We see it's active because we have these open circles, which means that it could enter these states, but the filled ones mean it did. So that's what we have here. So this is a good report. And we have these indicators on the left, which are colors, which mirror what we have here. So if you have a green, like a solid green like this, that means everything passed flawlessly. If you have a lighter green, not everything passed, but we didn't enter any of these conditions like W, S, or N.

So that's basically how you read the report and continue down.

So like I said before, this is a default right here, these action level thresholds. But in any step there's actually an `actions` argument, and you can set a different action levels value for that. So for this one right here, `col_vals_between()`, we're saying column `d` should have values between zero and 4,000, but here we're specifying its own action levels value. And here we're using absolute values. I'm saying warn at one failure, stop at three, and notify at five.

Okay. So let's actually run this. Great. So it's doing this little thing in the console. I'm going to show the report.

Hey, Rich, you got a question you want me to ask those as they come up or do you want to just wait till the end? Please do. Yeah, that'd be, that'd be great. Actually.

Someone asked, is it possible to change the report title if you want to share it with colleagues? You absolutely can. Yeah. So I'm going to do that right now because it's a good thing to do. So it's basically `get_agent_report()`, and there's an argument there called `title`. By default it's set to the default title, but you just give it any title you want, like "my title", and just run that. And it appears there. And there are a few other little keywords that do nice things, like maybe the table name becomes the title, but anything is Markdown here too. So that's a nicety. I'll just show that, since it's not really obvious.

There we go. So that's bolded now. So yeah, definitely you can do that. You can customize with `get_agent_report()`. By default, just printing out the agent itself, you just get the defaults, but it's highly customizable with this function.

Okay. So continuing on, we have this now and we see that `col_vals_between()`, yeah, it's between zero and 4,000. This changed. So basically right here, there's one test failure, but it signaled a warn because we set `warn_at = 1` here. So that's different from the rest. We don't really get an indication of it, but we just kind of know from our code, I suppose. So we can customize per step or just have defaults that run through all the steps.
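Changing the title might look like the sketch below. The agent here is a small stand-in, and the title strings are illustrative; `":tbl_name:"` is one of the keywords documented for the `title` argument.

```r
library(pointblank)

agent <-
  create_agent(tbl = small_table, tbl_name = "small_table") |>
  col_vals_gte(columns = vars(d), value = 0) |>
  interrogate()

# Any text works as a title, and Markdown is rendered (bold here)
report <- get_agent_report(agent, title = "**My Title**")

# Keyword form: use the table name as the title
report_tbl_name <- get_agent_report(agent, title = ":tbl_name:")
```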
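A per-step override could be sketched like this. The thresholds and the zero to 4,000 range are from the talk; the rest of the agent is a simplified assumption.

```r
library(pointblank)

agent_3 <-
  create_agent(
    tbl = small_table,
    tbl_name = "small_table",
    actions = action_levels(warn_at = 0.15, stop_at = 0.25, notify_at = 0.35)
  ) |>
  # This step replaces the defaults with absolute counts of failing units
  col_vals_between(
    columns = vars(d), left = 0, right = 4000,
    actions = action_levels(warn_at = 1, stop_at = 3, notify_at = 5)
  ) |>
  # This step keeps the agent-level fractional defaults
  col_vals_gte(columns = vars(d), value = 0) |>
  interrogate()

x <- get_agent_x_list(agent_3)
x$n_failed  # failing test units per step
x$warn      # whether each step entered the warn state
```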

Overview of all validation functions

So I'm going to skip past and I'm going to give you a brief look. I can't describe all of these validation functions. There are certainly a lot. There's 36 of them. Suffice to say that they do have really detailed help articles. If I go to any one of these, like `col_vals_between()`, for instance, oops, I did it wrong. There we go. So they have very long help articles, and they're not lumped together with lots of functions in one help article. Each one has its own help article. And the good thing about it is that it does have examples, which are fully worked out. And there are actually images as well, showing you what to expect. So these are totally runnable. I try to make the examples in a way that's a mixture of prose and the example itself, and it shows an image. So if you land here, you kind of have something to go by.

Okay. So there's lots of these basically. And I hope it covers most of the common checking scenarios. If you don't, if you find one that doesn't quite cover it, there's actually one called specially, which allows you to just use any function you want and sort of like go manual as it were, but it still appears in the report, which is kind of a nice common ground or middle ground. So there's lots of them, but they can be described as sort of like groups. You can see there's some commonalities in the names. So I'll go through that pretty quickly right now.

So the validation functions that begin with `col_vals_`, right here. What they do, and this is what you've seen, is check individual cells within one or more columns. And here's a nice little tidbit that you may not know, because you're just learning this right now. You don't have to use an agent. I mean, with an agent you get the report and everything, but you can use the validation functions directly on the data. And if you do that, it acts like a sort of validation filter. The data will just pass through unchanged if the validation passes, but it will error, like truly error, if it doesn't pass. So let me show you that.

So a small table as before, I'll show it to you in the console again. So we have a reference here, make this a little bit bigger. There we go. So call vals between focusing on column A, which is right here, the integer column, we expect that values are between zero and 10. And they really are. I could just take a look at it here. So you run this, you just get the data.

Okay. So if you choose something that's not correct, like between five and 10, there are definitely values less than five. So let's run this. You get an error. And you get an explanation for the error too. You see here it says exceedance of failed test units where values in `a` should have been between five and 10. That's what you wrote down here. But really you got 10 failures in this whole thing, which is quite a few. So it's greater than the threshold of one. So automatically the first failure will cause a stop. You can set that with a threshold; it's like a simple version of action levels. And you can actually use `actions` here if you wanted to and set it yourself. I won't do that for the sake of time, but it can be set here.

Okay. So another one is... Questions real quick, if you want to tackle them. Awesome. First question is, is it possible to build a new validation function? It is. Yeah, it is. It's not easy. You might want to take what you have in terms of the available validation functions. I guess these are considered as primitives. But also you can do it... There are a few ways. It's not so easy to describe quickly, but you can use something like `col_vals_expr()`: name your function as the thing you want it to be called, use this internally, and then provide the expression there. That's one way of doing it. Another way is using `specially()`. That also takes a function, but it does it in a different way. It's looser, more forgiving. It does less checking of the existence of a table, for instance. And you can wrap that in your own function and then set a few things. So you can definitely do it. And I think one of the answered issues in the pointblank repo has some examples of this. Unfortunately, I can't really dig those out right now, but it's been asked before and it seems to be totally possible.
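Used directly on data, a validation function behaves like a filter that either returns the table or stops. A minimal sketch, with `tryCatch()` added only so the failing case does not halt a script:

```r
library(pointblank)

# All values in `a` lie between 0 and 10, so the table passes through
passed <- small_table |>
  col_vals_between(columns = vars(a), left = 0, right = 10)

# Several values in `a` are below 5, so this raises an error;
# the message is captured here instead of stopping execution
failure_message <- tryCatch(
  small_table |>
    col_vals_between(columns = vars(a), left = 5, right = 10),
  error = function(e) conditionMessage(e)
)
```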

Making a whole brand new one that ties into pointblank and uses all its features, that's not so easy because it's really sort of like intertwined with all sorts of reporting things. But you can definitely use it by wrapping one of these or one or more of these functions to make a sort of like a special one.
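One way to read the wrapping idea is sketched below. The function name `col_vals_a_below_d()` is hypothetical and not part of pointblank; it simply fixes an expression and forwards everything else to `col_vals_expr()`.

```r
library(pointblank)

# Hypothetical wrapper: a named check built on top of col_vals_expr()
col_vals_a_below_d <- function(x, ...) {
  col_vals_expr(x, expr = ~ a < d, ...)
}

# Applied directly to data: passes the table through when all rows satisfy it
checked <- small_table |> col_vals_a_below_d()
```

This keeps the check reusable under a descriptive name, though the step would still be reported as `col_vals_expr()` rather than under the wrapper's name.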

Awesome. There's another one here. As I think there are no factors in the example, any comments on how the package works with factors? Yeah. It doesn't do a great job with factors because that's a bit of a special thing. I mean, you can use these special functions, like `specially()`, which we're not going to get into, and also `col_vals_expr()`, where you can pass your own expression and you expect to get a vector of true and false. You can definitely use that. But for the other ones, where you're checking things like ranges, I'm not quite sure, actually, how that would work. But if you do use it and it doesn't work, file an issue, because that part hasn't really been addressed as well as it probably could be.

Awesome. One more. It says that we may get to this later, but is it possible to check for something like specific proportions adding up to 100%? Yes. Yeah. Yeah. To do that, I would recommend making like a metric, like using basically creating a different column. You can do that in any of these functions right here. Like say, for instance, okay, maybe we can just do that right now. We can rattle that off.

So we have `create_agent()`. And then we pass in `small_table`. Yeah. And then we want to check that something equals something, like a proportion. So let's try that. With `small_table`, we want to use `col_vals_equal()`, for instance. And we don't have a column like that where we can actually just add it up and get 100. But let's pretend a bit just to see the concept here. So let's find out what this does here. So `col_vals_equal()`. And then we will do `columns`. We'll make a new column. So we'll call it `x`. And we'll do `preconditions`. And I believe we can do, maybe we'll call it `x` just because it's a new name.

Okay. Rich, there's quite a few questions that are coming in the Zoom chat. Do you want to take a look at those? Do you want me to read them to you or keep going and come back to them? Maybe keep going and come back to them.

So we can do this. Okay. So we can do something like we can mutate. Just get rid of that extra tilde. Great. And then in this case, we're using mutate. And we'll say y. Well, we won't actually do the thing that was asked, but we'll do something similar. We'll say like y will equal a plus 10. Okay. And then we want to see that values are maybe greater than 10. So let's do this. Value 10. Okay. So we have to interrogate.

So what we're doing here is we're actually making a new column, y. And you could do a summary and try to get all the values in a column and make a table which has one row and maybe that one column, and then check if it equals something. In this case, we're doing something totally different, but the concept is the same. You're taking this preconditions argument, you're mutating the table, you're making this new column, and you're checking that column at the same time. So it's a bit, it's two parts that go together. But once you get that idea that you can like sort of like at any step, you can like change the table and then check that change table. That's kind of nice. Because like you don't have to have a whole bunch of functions that check every little tiny thing. You can make the metric and then check that new metric, even in just like one step. You just got to be confident that the change you make is correct. And I hope that somewhat answers the question in a roundabout way.
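The mutate-then-check idea might be sketched as follows. The column name `y` and the `a + 10` computation follow the live demo; the function form of `preconditions` is used here in place of the formula form (`~ . %>% dplyr::mutate(...)`) purely to avoid needing the magrittr pipe.

```r
library(pointblank)

agent <-
  create_agent(tbl = small_table) |>
  col_vals_gt(
    columns = vars(y),   # `y` exists only after the precondition runs
    value = 10,
    preconditions = function(x) dplyr::mutate(x, y = a + 10)
  ) |>
  interrogate()
```

The same pattern would apply to an aggregate check: summarize inside `preconditions` down to a one-row table, then validate the resulting column.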

Column type and row checks

But I'm going to carry on and move on to these `col_is_*()` functions. Basically what they check, and we've already seen them, is that a column is of a certain type. So `col_is_character()` checks for a character column, and you know the rest. So that's what that does. There are two `rows_` functions, `rows_distinct()` and `rows_complete()`. They check entire rows. We've already seen `rows_distinct()`; it just checks for duplicate rows. If there are any, that's a failing test unit, or there could be as many test units failing as there are duplicate rows. `rows_complete()` just checks every single row and looks for any NA values. So if there's any NA value, then it's basically a failing row and a failing test unit.

Okay. So let's check these. We know we have duplicate rows in this table. I put one in on purpose. It's basically rows nine and 10. So if you actually run this, we get an error automatically. If you just take the head of the small table, which avoids the duplicate rows, it works fine. We just get the data out. Because if you apply these validation functions directly on the data, again, it just passes the data through.

Okay. `rows_complete()`. We know this fails because we have an NA somewhere in there. It tells you that right here. If you just take a few of the columns, you could, in the `columns` argument of `rows_complete()`, constrain the check to just a few columns, which is kind of neat. The same thing is possible for `rows_distinct()`. So if you just want to check that one or two ID values are unique, you can do that with this function, or with that argument, I should say. Great. So that passes the whole table through. We just checked `date_time`, `date`, `a`, and `b`, because there are no NAs there. And it works.

Okay. There's a number of match functions. These are a little bit complicated, or at least some of them are a bit complicated. I will show you two examples here, `row_count_match()` and `col_count_match()`. We're just checking that the count of rows for the entire table meets an expectation. So we know the small table has 13 rows. If you run this, it passes fine. And another cool thing is that in `count`, we can just pass in another reference table. We're just matching the count of columns in this case with that of another table. So it just so happens that `small_table` has the same number of columns as `palmerpenguins::penguins`. If we run this, it passes the data through because it's true.
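The row-level checks described in the last few paragraphs can be sketched like this, again capturing errors with `tryCatch()` so the failing cases do not stop a script:

```r
library(pointblank)

# Fails: small_table contains a duplicated row
distinct_error <- tryCatch(
  small_table |> rows_distinct(),
  error = function(e) conditionMessage(e)
)

# Passes: the first six rows contain no duplicates
head_ok <- head(small_table) |> rows_distinct()

# Fails: at least one row contains an NA
complete_error <- tryCatch(
  small_table |> rows_complete(),
  error = function(e) conditionMessage(e)
)

# Passes: the check is constrained to columns without NA values
subset_ok <- small_table |>
  rows_complete(columns = vars(date_time, date, a, b))
```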
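A sketch of the two count checks follows. The comparison against `palmerpenguins::penguins` is guarded, since that package may not be installed; the literal column count of 8 is an equivalent check for `small_table`.

```r
library(pointblank)

# Row count against a literal expectation
rows_ok <- small_table |> row_count_match(count = 13)

# Column count against a literal expectation
cols_ok <- small_table |> col_count_match(count = 8)

# Column count against a reference table, if palmerpenguins is available
if (requireNamespace("palmerpenguins", quietly = TRUE)) {
  cols_ref_ok <- small_table |>
    col_count_match(count = palmerpenguins::penguins)
}
```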

Okay. So that's my super quick look at all the validation functions. Each one, each one of them has a help file with examples that work and are pretty instructive. It's really hard to like, you know, get at them. Each one has a different interface too, depending on what they are, they may have different arguments, but they share a lot of common arguments.

Getting data extracts from failing rows

Okay. So, getting data extracts from the failing rows. We've seen before that, going back a bit, we have these CSV buttons here. These are all fine and well, but we have R here, so we can just get them through R and actually get the data frames, and we can do that with `get_data_extracts()`, that function right there. So let's use `get_data_extracts()` on agent three and see what it does. And this is a case where I want to expand the console a bit. So we get a list of data frames, or tables really. What we see here is the name, two and three; these are the step indices that had failures. Okay. So we don't see one, because it had no failures and we don't have any extracts.

So yeah, so we get like one extract per validation step that had failures. Sometimes it's just one thing, sometimes it's a number of rows. So this is very useful when you want to find out where the error is for a certain step. That's great. And if you know the step, you could isolate that. You can just say like, get the extracts, same agent, but step nine. Okay. So let's run that. It's kind of cool. We just get the table out that way. The only key thing is you have to know what, what step is what, but if you know it, then it's kind of useful to use this.
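Pulling extracts in R might look like the sketch below. The two-step agent is a small stand-in for the workshop's agent three, so the step indices differ from those mentioned in the talk.

```r
library(pointblank)

agent <-
  create_agent(tbl = small_table) |>
  col_vals_gte(columns = vars(d), value = 0) |>                # step 1: no failures
  col_vals_between(columns = vars(d), left = 0, right = 4000) |>  # step 2: has failures
  interrogate()

# A named list of tables, one per step that had failing rows
extracts <- get_data_extracts(agent)
names(extracts)

# A single table, for one known step index
step_2_extract <- get_data_extracts(agent, i = 2)
```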

Okay. So basically what we're doing in this phase of the module is we've got an agent and we're doing stuff with it. We have the report. That's great. But we want to do things with the data that's in the report; there's actually tons of data underlying the report. So one more thing we could do is get something called sundered data back. I definitely used the thesaurus for this one. So basically, what this function does is split your input data frame, or whatever table, into two parts: the pass data piece, basically rows with no failing test units, and the fail data piece.

So if a row has failing test units in any step, that row is automatically listed as a fail row. And if there's no test on it at all, it'll still be a pass. So basically, the differentiation is: if the row had failures, then it's part of that fail piece, and otherwise it's part of the pass one.

So it's really hard to describe and much easier to show. Let's actually do that. So here's a new agent we're making; it's agent four, pretty simple. We only have two validation steps inside.

Column values greater than a thousand for column D, column values between, oh, this is kind of cool, two different columns. So we can do a cool thing like for like the value, we can actually reference a column and say like, okay, so the volumes, the values in column C should be between the values in column A and column D. And you can do, you know, literal values, you can mix and match, you can have a literal value here, a column value, you know, a column reference here or, or vice versa.

But let's just run agent four, this validation. We're doing all three parts: create the agent, add the validation steps, and then interrogate. So we see this; this is great. I'm just going to type in `agent_4` and we get the default report. Looks pretty good. We didn't set any of these action levels, which is totally fine. We're not really looking at that, but yeah, we see we get some failures in both steps.
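The two-step agent described above can be sketched as follows. Column references are given with `vars()` in `left` and `right`; the use of `na_pass = TRUE` is an assumption about how missing values in `c` are handled.

```r
library(pointblank)

agent_4 <-
  create_agent(tbl = small_table) |>
  # Literal comparison value
  col_vals_gt(columns = vars(d), value = 1000) |>
  # Bounds taken row by row from two other columns
  col_vals_between(
    columns = vars(c),
    left = vars(a), right = vars(d),
    na_pass = TRUE
  ) |>
  interrogate()
```

A literal bound and a column-reference bound can be mixed in the same step, for example `left = 0, right = vars(d)`.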

Using get_sundered_data

So now we're going to do a thing where we take this agent and use the `get_sundered_data()` function on it. What it does, by default, is give you the rows that pass, the good piece. So these are basically, from those two tests, the only rows of the table that didn't have a failure.

You can change that around. Like I said, that's just a default. You can just get the, you can get the fail piece as well by saying type equals fail. So these rows should add up, right? These sets of rows, they're also exclusive. These rows should combine to make the original data set. So that's a promise from the package.

You can do different things too. You can also have a combined view. What that does is give you another column; it's called `.pb_combined`. And it's essentially a flag column. It tells you whether the row passed or failed, which is kind of cool. Sometimes you just need to have the whole data frame and this column right here.

And the nice thing is right away, you don't have to accept what this is, like in terms of the values, you can set different things. You can set a pass fail vector. And so in this case, now pass will be true and fail will be false and the column will be changed to logical, which is kind of cool. And also, you know, it's pretty flexible. You can choose integers as well. As long as you have two values and the first one is pass, the second one is fail. Then you got this right here.

And you can switch these values around. So that's what `get_sundered_data()` does. Basically, it allows you to split the data in case you want to use the data. You do some tests, but you just want to get the data that didn't fail any of those tests. There are probably some situations where that's pretty acceptable.
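The three views of sundered data might be requested as in this sketch, which rebuilds a two-step agent so that it runs on its own:

```r
library(pointblank)

agent <-
  create_agent(tbl = small_table) |>
  col_vals_gt(columns = vars(d), value = 1000) |>
  col_vals_between(
    columns = vars(c), left = vars(a), right = vars(d), na_pass = TRUE
  ) |>
  interrogate()

pass_piece <- get_sundered_data(agent, type = "pass")  # the default
fail_piece <- get_sundered_data(agent, type = "fail")

# Whole table plus a `.pb_combined` flag column
combined <- get_sundered_data(agent, type = "combined")

# Same, but with logical flags: first value marks pass, second marks fail
flagged <- get_sundered_data(
  agent, type = "combined", pass_fail = c(TRUE, FALSE)
)
```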

Using get_agent_x_list

So we have another function called get agent X list. Again, it uses an agent object, just like those past two functions did. But this is kind of like when you just want to get at the information, like that sort of gold mine of like interrogation info and like, you know, like do your own little investigation independent of this table, which is useful, but sometimes you just want the data.

So let's do that. We'll make an X list by just using the get agent X list function like so, I'll run that. Great. And I will actually copy this into the console here. And if you do that, you get a printout like in the console of what's in the X list. It gives you, it sort of echoes back, you know, it's small table. You're basically looking at all steps. That's a default. You can look at individual steps too.

That's one of the arguments of this function, get_agent_x_list, but we're looking at things holistically. So this is kind of cool. It gives you all the components of the list. So I can do dollar sign and look at, for instance... okay, autocomplete would start here, but I won't do that. So basically that's the number of test units that failed. You can look at that.

You can look at warn, basically. So this is kind of cool. You can see if there were any warnings at all just by wrapping it with any. TRUE, there were.
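A small sketch of working with the x-list programmatically. The agent, its single step, and the warn threshold are assumptions made for illustration; n_failed and warn are components of the x-list.

```r
library(pointblank)

# Illustrative agent with a warn threshold so `warn` is populated
agent <-
  create_agent(
    tbl = small_table,
    actions = action_levels(warn_at = 0.1)
  ) %>%
  col_vals_gt(columns = vars(d), value = 1000) %>%
  col_vals_not_null(columns = vars(c)) %>%
  interrogate()

# x-list across all steps (the default)
x <- get_agent_x_list(agent)

# Number of failing test units, one value per validation step
x$n_failed

# Did any step enter the warn state?
any_warnings <- any(x$warn, na.rm = TRUE)
```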

Email notifications and report customization

So let me show you how that email looks. I'm just going to run this separately. This is not set up; you'd have to use blastula, another great package by the way. But this will create the message body for blastula, and then you can take it from there by putting in your mail credentials and sending it to whomever you want. So let me just show you what that email looks like. Okay, agent three is obviously very big. Okay.

Here we go. So it'll do this stuff for you automatically. It'll just generate the simple text. You can change all this stuff; this is all very customizable. But it'll include a pretty stripped-down version of the table, just with the essentials: units, pass, fail, the states, and stuff like that. So this is a message body. Basically it just makes a blastula object and then you can send it off. This is customizable, like I said. I'm not going to get into it, but you can just expose what failed and not the stuff that didn't fail. So there are options for all that.
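A hedged sketch of that email step. It assumes the blastula package is installed; the agent is illustrative, and the addresses and credentials file in the commented-out sending call are placeholders, not values from the workshop.

```r
library(pointblank)

# Illustrative agent (not the `agent_3` used in the workshop)
agent <-
  create_agent(
    tbl = small_table,
    actions = action_levels(warn_at = 0.1)
  ) %>%
  col_vals_gt(columns = vars(d), value = 1000) %>%
  interrogate()

# Build the email message object (requires blastula)
email <- email_create(agent)

# Sending is left commented out; every value below is a placeholder
# blastula::smtp_send(
#   email,
#   to = "recipient@example.com",
#   from = "sender@example.com",
#   subject = "pointblank validation report",
#   credentials = blastula::creds_file("email_creds")
# )
```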

Okay, so I'm going to get into more customizing, but actually showing it. So, the title of the agent report. I showed this earlier; I'm going to show it to you again. Basically, this is changing the title. We can use Markdown, so we have the double stars on each side of the word to put it in bold, and we can do other Markdown things like backticks and underscores. So that's nice.

We can also customize the report to focus on things that failed. Sometimes you just want the bad news. So we're going to arrange by severity. That's one of the keywords; it's all in the get_agent_report help document. This is not excluding the steps that didn't fail (of course, you can do that), but it's putting the ones which did fail at the top, in order of severity. So the ones that have stopped are first, then the warning ones are the second group, and then the rest. So that's great.

And right here, keep fail states: this is where we exclude things which are not failing. Okay, so let's run this. We're still arranging by severity, but we're basically excluding all the green rows. Sometimes you just want this, just to get down to it.

Oh, another cool thing. Since this is a package that can be used all over the world, we have a lang argument here. So if you do get_agent_report with lang set to de and just run that, the report text is, in this case, in German, but there's a bunch of other languages too. I think there are 11 languages included. It should have little explanations as well; focus on each of the things right here and you can see them. This is great because sometimes your stakeholders don't know English, and this is meant to be shared. So that's a kind of cool thing.
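The report options mentioned here can be sketched as follows. The agent, its steps, and the title text are assumptions for illustration; title, arrange_by, keep, and lang are arguments of get_agent_report.

```r
library(pointblank)

# Illustrative agent with thresholds so some steps have a fail state
agent <-
  create_agent(
    tbl = small_table,
    actions = action_levels(warn_at = 0.1, stop_at = 0.4)
  ) %>%
  col_vals_gt(columns = vars(d), value = 1000) %>%
  col_vals_not_null(columns = vars(c)) %>%
  col_exists(columns = vars(date)) %>%
  interrogate()

# Markdown title; failing steps sorted to the top; passing steps dropped
report_bad_news <- get_agent_report(
  agent,
  title = "Validation of **small_table**",
  arrange_by = "severity",
  keep = "fail_states"
)

# Same agent, report text rendered in German
report_de <- get_agent_report(agent, lang = "de")
```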

Module 1 summary

Okay, so to summarize. I think the timing is pretty good for this first module. I thought it'd be too long. Questions, of course, I will handle, but this is not bad; this is good pacing, I think. So, to summarize: for data validation in pointblank, you need an agent, you need a bunch of validation functions, and an interrogation. It's kind of like a sandwich, as it were, of validation functions. And what the agent tries to do is create a report that's informative and easily explainable. At least once you get the basics, this is pretty explainable, I think.

We can set data quality thresholds with the action_levels function. With that, there are default DQ (data quality) thresholds, and we can also set step-specific thresholds. Okay, there are 36 validation functions having a similar interface and many common arguments, and they can be used with an agent or directly on the data. And remember, if you use them directly on the data, that's a totally different workflow. You're probably just running it in a notebook and you want to fail if one of these validation steps fails. So that's the difference there. The agent gives you the report; there's no failing while it runs.

We can get data extracts, and these data extracts pertain to failing test units. These are mainly for cells in a row that fail, and also things like duplicate rows; it'll show those as well. So that's extracts. You can use get_data_extracts to get those. You can also get splits of the original data set. It's kind of like a different type of extract, but it's basically two tables. You can choose one or the other, or get a unified table. It's basically just deciding which rows have failing test units, and that gives you one part of the split. The other part is rows that are either not tested or passing.

Okay, get_agent_x_list. That gives you essentially a list. If you print it, you get an indication at the console of what components are in the list. You can basically access everything that's in this report through that list, so you can go at it more programmatically. Email is supported through the blastula package. email_create is one way of doing it. It gives you the message body, and you just pass it to blastula. So long as you know that other package, connecting to it isn't so bad. And again, the report can be modified in lots of different ways, all of them with get_agent_report. Okay, so that's the end of the first module.

Q&A

I will take any more questions you have, if anyone's available to ask them to me.

Yep, Rich, I will get going with the questions remaining. All right, I think one of them, just starting from the earlier one, is: do we have a check that can check something like specific portions adding up to 100?

Oh yeah, I didn't answer that, sorry. Basically, you can deal with that using preconditions. It's an argument that's available in all validation functions. Basically, how it works is you create a new column, or you can make a summary of the table. The table can be totally reshaped and have different columns; it's totally fine. It's very forgiving, as long as you reference the column that's in the new table up here in columns. So my recommendation, for tests which create some new data or need to calculate something, is to use preconditions for that.
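A minimal sketch of the preconditions idea applied to the question asked (portions adding up to 100). The shares table and its column names are hypothetical; the summary is computed just in time, and the validation step references the new total column.

```r
library(pointblank)

# Hypothetical table: percentage shares within each group
shares <- data.frame(
  group = c("a", "a", "b", "b"),
  pct = c(60, 40, 70, 30),
  stringsAsFactors = FALSE
)

agent <-
  create_agent(tbl = shares) %>%
  col_vals_equal(
    columns = vars(total),   # column that only exists after preconditions
    value = 100,
    preconditions = ~ . %>%
      dplyr::group_by(group) %>%
      dplyr::summarize(total = sum(pct))
  ) %>%
  interrogate()

# TRUE when every test unit (here, every group total) passed
sums_ok <- all_passed(agent)
```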

Thank you. Next one: is it possible to compare validation errors with each other from one analysis to the next?

I will get into that later. That is a report where you have maybe the same target table and the same steps, or maybe slightly different versions of the steps, and you're comparing across different runs, or maybe different time periods where you're doing the same check. That's totally possible. That's a different type of report, using a multiagent. I will get into that a little bit later in the workshop, but it's possible.

Great. All right, can the validation include a combination of several columns? Like, if column A isn't missing, then column B isn't?

Yes, there's a function for that. I'll go to the help. I won't really show an example because there are examples in there. It's called conjointly: perform multiple rowwise validations for joint validity. Basically, you want to check that things are true across a row. This has to be true and this has to be true: pass. This is true, this is false: fail. Any one failure will trigger a fail. And if it's something more complex, we can definitely use col_vals_expr, right here in the help document: do column data agree with a predicate expression? I have some examples down here of that, and you can do more complex things, since it takes an expression, and you can definitely involve more columns in that. It's hard to explain, but you can use case_when, for example, and do multiple case-by-case things. So you can definitely get into more complex stuff which involves multiple columns, where they all have to work out together.
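Both approaches from this answer can be sketched briefly. The first agent uses small_table with two illustrative conditions joined by conjointly; the second uses a hypothetical two-column table to express "if a isn't missing, then b isn't missing" with col_vals_expr.

```r
library(pointblank)

# Joint validity: both conditions must hold in each row to pass
agent_joint <-
  create_agent(tbl = small_table) %>%
  conjointly(
    ~ col_vals_not_null(., columns = vars(a)),
    ~ col_vals_gt(., columns = vars(d), value = 100)
  ) %>%
  interrogate()

# Hypothetical table for the multi-column rule
tbl <- data.frame(
  a = c(1, NA, 3),
  b = c("x", NA, "z"),
  stringsAsFactors = FALSE
)

# A row passes when `a` is missing, or when `b` is present
agent_expr <-
  create_agent(tbl = tbl) %>%
  col_vals_expr(expr = ~ is.na(a) | !is.na(b)) %>%
  interrogate()

joint_ok <- all_passed(agent_joint)
expr_ok <- all_passed(agent_expr)
```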

Great. I think, coming off your question related to extraction, one thing is: how does the tool work with big data?

Okay, yeah. So maybe you have a giant data table, like millions and millions of rows. The extracts have a limit. I didn't state that, but there's a default limit of 5,000 rows. If we go to interrogate, I'll show you where that lives. Right here: sample_limit. So that's what that means. By default we have extract_failed set to TRUE. You can shut that off if you want to. You can change the way the extracts are gathered as well, but we do have a limit. So it'll actually pull, or collect, those values from the database, and it won't kill your connection by extracting millions of rows. I think this is sufficient. You can change this, obviously, but a limit is a good thing.
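A short sketch of the extract controls on interrogate. The agent and step are illustrative; extract_failed, get_first_n, sample_n, sample_frac, and sample_limit are arguments of interrogate, with sample_limit defaulting to 5000.

```r
library(pointblank)

agent <-
  create_agent(tbl = small_table) %>%
  col_vals_gt(columns = vars(d), value = 1000) %>%
  interrogate(
    extract_failed = TRUE,  # the default; set to FALSE to skip extracts
    get_first_n = 3         # keep only the first 3 failing rows per step
  )

# Alternatives to `get_first_n` (shown as comments, not run here):
#   interrogate(agent, sample_n = 100)
#   interrogate(agent, sample_frac = 0.1, sample_limit = 5000)

# Extract for step 1
extract_1 <- get_data_extracts(agent, i = 1)
```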

I think this kind of answers a similar question: can a sampling percentage be set?

Yeah, there's a frac, sample_frac. That's pretty much a percentage. So you can set that to 0.1 or whatever.

And I think another one is: can you actually name the data extract in each step?

Oh, I see. Instead of just a number. No, not really, not right now. It's not so easy because you don't really know what steps you're getting; there's no great unique name. Well, okay, hang on, because in each validation step you could set a label for it, but the label is not like an ID. We do have an ID here as well, but it's tough to do in that list. But if you find that you'd rather have the extracts provided by step IDs, which definitely are collected, and they're made for you if you don't actually set one yourself, I would say file an issue, and then we can have a switch in get_data_extracts to name them either by step number or by step ID. I think that would be good. But yeah, currently we can't really do that.

Great. I'm trying to read a few more that just popped up from the Zoom. Okay, can the test name be relabeled to show a string description instead of the function call?

Yes. Well, sort of. There's a hybrid approach there. We can insert a label right below; this shifts up, but the label will appear, and it'll take as much as two lines, I think. So definitely you can do that. Do I have an example here? Not really. Can I cook one up fast? Maybe not, but let me show you where that lives anyway. So, col_vals_between: you would use it right here, label. I'm not going to do it, but this is a descriptive label. There's also another thing called briefs, which you can make as long as you want. It automatically generates one for you; that's what you're actually seeing here: expect that column date_time is of type POSIXct. You can write a really long one here if you wanted to, but it automatically generates one for you. So there are two places: one is a label that'll appear right here, and one is a longer text, which is by default automatically generated, that you can programmatically extract later.
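A sketch of the two places mentioned in this answer. The step, the label text, and the brief text are made up for illustration; label and brief are arguments of the validation functions, and the briefs can be pulled back out through the x-list.

```r
library(pointblank)

agent <-
  create_agent(tbl = small_table) %>%
  col_vals_between(
    columns = vars(c),
    left = 0, right = 10,
    na_pass = TRUE,
    label = "c is within range",                         # short label in the report
    brief = "Values in column c should be from 0 to 10"  # replaces the auto-generated brief
  ) %>%
  interrogate()

# Briefs (custom or auto-generated) are available programmatically
briefs <- get_agent_x_list(agent)$briefs
```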

All right, two more; I think we're getting to the end. How would you select columns from a data frame to include in the data extract reports after validation?

Yeah, I think you can't really do that. By default it will just include the entire row with all the columns. I believe get_data_extracts does not do that. But what you can do, when you use get_data_extracts, is use an lapply and just select certain columns. You have to be a little bit careful, but you can apply it through each of the list components and just do a sub-selection or a subset. Not very satisfying, but that's one way of getting around that.
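The lapply workaround could look like the sketch below. The agent, its steps, and the chosen columns are assumptions; the only care needed is that every extract actually contains the columns being selected.

```r
library(pointblank)

agent <-
  create_agent(tbl = small_table) %>%
  col_vals_gt(columns = vars(d), value = 1000) %>%
  col_vals_not_null(columns = vars(c)) %>%
  interrogate()

# Named list of data frames, one per step that has failing rows
extracts <- get_data_extracts(agent)

# Keep only a few columns in each extract
keep_cols <- c("date", "c", "d")
slim_extracts <- lapply(
  extracts,
  function(df) df[, keep_cols, drop = FALSE]
)
```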

All right, final one: can I parallelize the validation using more than one core?

I believe you can... oh, the validation itself? Oh, no. That's something that I should probably be doing, because I think that's not possible right now. The steps are independent of each other, which is great, but I can't see any real need for splitting it up and then recombining it at the end in a parallelized fashion. Really, if speed is a big thing, and it might be, especially with huge amounts of data, then I would maybe file an issue and I can look into some parallelization. There are some things, maybe not this, which should be parallelized in the package. But these are generally pretty fast. With a database, it might be a bit slower, but we're not doing anything very complex when we do queries on a database. They're pretty simple operations.

Thanks, Rich. I think that's the end of the questions that I can find at the moment.

Great, good. Should we have a short break, maybe a five-minute break, just to decompress for a few minutes? Or, the hosts, your call.

Okay, let's do it.

Let's do it. So, 1:05 Eastern time. No, let's do 1:06 Eastern time. We'll recommence with Scan your data, the next module.

Scan your data

Okay, I think we can resume and go into the next module. The next one is called Scan your data. It's going to focus on two functions. The first one is what I'm running right now; it's called scan_data. So what is that? Well, it makes a table scan. So what is that? Basically, you've got a new data set and you want to understand what's in it, and scan_data can give you that rundown of what's in there. I know in R there's a ton of packages that do this stuff. This is just one more that does the same thing, but I'm hoping that this is pretty good and it fits your needs. It pairs nicely with data validation because you have to understand your data, get the lay of the land as it were, before you really get down to it and create validation functions.

So I'm using, from the palmerpenguins package, the penguins_raw data set, which contains more data than just the penguins data set. What it shows me right here at the very beginning is a sectioned report, all in HTML. You have sections here we can go through. We can see when we ran the report, and you get some environment information here. You can see how many columns and rows we have, the NAs, and the column types. It's a brief summary of the table.

And then this is really cool: you can toggle these details and see, for every column, what's going on. We even have little ggplots being made. So, sample number; this is not going to be that exciting. But for some things, for instance numerical values, we have some stats. Here we go: culmen length in millimeters. Toggle details, and we've got some serious stats here in these different tabs, which is kind of neat. So we can really understand how the data is arranged and what it looks like generally, but we can also find some errors if things really stick out. You may see a maximum that is not right. Of course, in this data we don't have that, but who knows what you'll find, and that can be the basis for creating validation functions in your validation checks.

So I'm just going to skip through this a bit more. Essentially, it knows about different types of columns and it gives you a different display based on those. We also have this interactions table. It's like a nice sort of correlation plot, and you can mix and match character values and factors with numerical values, and it does its thing: it makes density plots and things like that. So, kind of neat. Correlations: it's good to have those as well. You can see really quickly what correlates with what, what doesn't, and what anti-correlates.

Missing values: this is a little plot, and it shows which columns have lots of missing values. This is the fraction of missing values in each sector, so each one of these little squares is a bundle of rows, or a bundle of cells really, because they're split up. And you can see here that, yeah, Comments: not too many comments, so that's mostly empty, 84% empty. But you can see it right there. It's kind of nice.

And here you can see we're getting a lot of missing values right near the end. So, interesting. And see here, that blue: no missing values at all, not even one. So that's what this shows. Sample just gives you a little table with the first five rows and the last five rows, just so you can see the table and have some context, in case you forgot. So that's scan_data. It's kind of cool, and you can customize the report. You don't have to have all the sections here. The default value of sections is basically this little string that's glommed together. Each of these letters represents a different part. You can just change that, even change the order, just by the way you present the letters, and the report is arranged that way. So you can have as little or as much as you want, in a different order.

It's totally fine. So this is kind of cool. safetyData: we have this dataset right here. I'm just going to look at it quickly. A lot of columns, a lot of variables. Okay, so let's actually run this. We just want the sections to be the overview, sample second, and then variables last. I'm going to run that. It's kind of nice because it gives you a little rundown of what's happening. This is probably a good candidate for parallelizing because the variables section is a bit slow, and that's totally on me to make faster. But, again, it does a lot of stuff.

There are a lot of ggplots, a lot of sections, a lot of HTML being generated. If it weren't for htmltools, this probably wouldn't be as easily made, but we're using that to generate the report, a sort of bespoke report as it were. Okay, so it's taking a while, so we'll just continue with this. While we wait, I'll just describe that: with scan_data you can present the results in different languages. I had a lot of time on my hands, so I was able to translate a ton of stuff and support all these different languages. Basically all the little labels that we have here, like Sample, will be translated to those languages. So, kind of cool. Okay, so that one took nearly a minute. Hopefully it doesn't take very long to show; that's a viewer thing.
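A minimal sketch of choosing and ordering sections. It uses small_table rather than the workshop's data so that it runs quickly; the section letters follow the default string "OVICMS" (overview, variables, interactions, correlations, missing values, sample).

```r
library(pointblank)

# Overview first, then sample, then variables, as in the demo
tbl_scan <- scan_data(
  tbl = small_table,
  sections = "OSV"
)

# A `lang` argument is also available, e.g. lang = "de" for German labels
```

Printing tbl_scan in an interactive session shows the HTML report in the viewer.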

But we can keep going. Oh no, here we go. Okay, so safetyData, adam_adae. Great, so we have the same report. There's Sample right here, so it can handle a pretty wide table; that's totally fine. But the thing I want to show you is... oh, look at this. If we have labels in a data set, it shows the label, which is kind of cool. That's not too common in most data sets, but I think every single ADaM data set here has that. So it's a nice thing that we have that in there. It supports that.

That's the only other thing I'm going to show you. Character string lengths: it's kind of neat that we can see this, but I won't go too much into that because I believe we covered that before. Okay, great. I'll make one more table scan just to show you something you can do with this. Well, really, there are lots of things you can do with this. This Publish button is here again, so we can publish this to lots of places: RPubs, if you want to go that way, or we can publish it to Posit Connect. This is all very publishable. We can remove this top navigation bar; that can be totally removed. I think I did that earlier, right here: navbar = FALSE. So you can customize it that way. I've even done things like putting this in an iframe and including it somewhere else. That's totally possible. So it's pretty flexible. It's based on Bootstrap, and it's useful mostly for interactive things, but it can also be shared.

Okay, so let me show you this table scan. I saved it to an object right here; you can totally do that. This one is based on the dplyr storms dataset, so same sort of thing. But the thing I'm going to show you is that you can export this to an HTML file. So, export_report: it handles all sorts of different objects in pointblank, the agent report and other types of reports you haven't seen yet. You just give it the thing. It can be the table scan, it can be the agent, it could be the report from the agent that you get from get_agent_report. It accepts a lot. Then you give it a file name and it makes a complete HTML file for you. So I have that in the files here: table scan storms. I'm just going to scroll a little bit. You click this, you can open it in a browser, and there you go. It's very shareable. It's basically its own document. Great. Okay, so that's all I'm going to say about scan_data. It's a cool function.
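A hedged sketch of the export step. The table, the reduced section list, the file name, and the use of a temporary directory are all choices made for illustration; navbar is an argument of scan_data, and export_report takes the object, a filename, and an optional path.

```r
library(pointblank)

# Table scan without the top navigation bar (small table, few sections)
tbl_scan <- scan_data(
  tbl = small_table,
  sections = "OS",
  navbar = FALSE
)

# Write a standalone HTML file; name and location are illustrative
out_dir <- tempdir()
export_report(
  tbl_scan,
  filename = "tbl_scan-small_table.html",
  path = out_dir,
  quiet = TRUE
)
```

The same export_report call accepts an agent or an agent report in place of the table scan.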

I'm trying to make that even better. I'm always going to iterate on that and make it more useful, and hopefully I'll fix the speed issue, because for now I recommend getting some tea when you run scan_data, or whatever.

Draft validation

Okay, so here's a different type of scan, but it dovetails with what we did before, which is validation. We have this function called draft_validation. So what does that do? Well, what I often do when I don't know what something does is look at the help. Okay, nice: draft a starter pointblank validation .R/.Rmd file with a data table. So this is kind of a magical thing. You basically give it a table. There's not much to it. It has some of the same arguments as create_agent because it just passes them through. But what it'll do is create a new file with a bunch of suggested validation steps, and it promises to work the first time. So let's take a look at that. With dplyr storms, this is the dataset. Yeah, so that's the display in the console. It's got all sorts of things: month, day, hour, latitude, longitude, other values. Great.

So let's just pass that right into draft_validation. Okay, here's a new concept. When you pass a table into tbl, you can start with a tilde. So what does that do? Basically, that's instructions on fetching the table. You may have something much more complicated where you actually have to do a few steps, like read_csv with readr, and maybe the data changes as you're getting it. So what this will do is, every time it does an interrogation, it'll refetch the table using this. So the table won't be included right away when you run create_agent; it'll be fetched when you interrogate. So, kind of interesting, kind of subtle. It's basically just lazily grabbing the table, just in time. If you include that tilde, that's what it does. I'm including it here because when it creates the agent, it's going to pass that through. I'm going to run this; in fact, I already did, and it's in the files. Basically it makes a new file, storms-validation, and it gives it a .R extension as well. Okay, so let's take a look at that. Storms validation, right here; I'll open that up.

So I swear this is what it did. Okay, so basically it makes this. It provides comments here. These are the briefs that it auto-generates for you, and it just puts them inline. You can choose to not have that; there's an option for it in draft_validation. If you look here, add_comments: you can set that to FALSE. But by default it just puts those inline. It makes all this, and it runs. It's kind of neat. So let's actually run it. There we go: agent. Brilliant. Okay, now we're going to actually run the agent itself to get that report.
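A sketch of the draft_validation call described above. Writing to a temporary directory, the overwrite and quiet settings, and the exact tbl_name string are choices made for this example; the leading tilde makes the table a just-in-time fetch.

```r
library(pointblank)

# Drafts a validation plan file named "storms-validation.R"
out_dir <- tempdir()

draft_validation(
  tbl = ~ dplyr::storms,        # tilde: table is fetched lazily
  tbl_name = "dplyr::storms",
  filename = "storms-validation",
  path = out_dir,
  add_comments = TRUE,          # the default; FALSE omits the inline briefs
  overwrite = TRUE,
  quiet = TRUE
)

draft_file <- file.path(out_dir, "storms-validation.R")
```

Opening draft_file shows the suggested create_agent call and validation steps, which can then be edited by hand.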

Nice. And as you can see, everything ran perfectly with no failures, because it's taking the data as it is and assumes it's a good extract of data. You can totally change all these things. Like, this may not be right; these bounds may need to be a bit larger. These are definitely tweakable. So it gives you a start. You don't have to type all this stuff in manually. You can just start with this and delete parts; you have a sort of template as well.

And a really cool thing with this is that it understands certain types of columns. It'll look into things: it knows that long is longitude, so it knows what the bounds are even if there's no -180 or 180 in the data set. It just kind of knows this is longitude, and if there's lat, it knows about latitude as well. There's a bunch of things it does. It's called column profiling. Basically, it goes by the column name, it does another cursory check inside the data, and if it all looks like what it expects, it'll set the validation values to be more or less standardized, correct values.

And we have another function here we haven't seen yet, col_schema_match: expect that column schemas match. What we're saying here is that these columns exist, they are of these types, and they're in this order right here. So basically this is a column schema. It's kind of a pain to write out, so it's nice that draft_validation does this for you. If your columns change order, or if their names change, or if their types change, this will detect that. Basically, it'll fail if it's not this. So it captures the schema of the table. Super neat. It's guaranteed to work. It pops in an action_levels value for you, a little statement here, and it does the same sort of thing here: it passes the table into this part. Okay, I know this is a lot. Any questions about this at all?
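The column schema check can be sketched on a small hypothetical table. The table and its two columns are made up for illustration; col_schema and col_schema_match are the pointblank functions involved, and by default the check is sensitive to column names, types, and order.

```r
library(pointblank)

# Hypothetical table
tbl <- data.frame(
  a = 1:3,
  b = c("x", "y", "z"),
  stringsAsFactors = FALSE
)

# Expected schema: names, types, and order
schema <- col_schema(a = "integer", b = "character")

agent_ok <-
  create_agent(tbl = tbl) %>%
  col_schema_match(schema = schema) %>%
  interrogate()

# Same data with the columns reordered: the schema no longer matches
agent_reordered <-
  create_agent(tbl = tbl[, c("b", "a")]) %>%
  col_schema_match(schema = schema) %>%
  interrogate()

schema_ok <- all_passed(agent_ok)
reordered_ok <- all_passed(agent_reordered)
```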

Q&A: scan data and competing packages

Rich, I think there's one question. It's about scan_data: can you customize the information shown in the details?

Okay, in the details. Which details? Sort of, I mean, not really. You can't really do too much here. Like I said, you can only change a few aspects of this, because this is more or less just a template and it gives you what it gives. But you can change the order of things and get rid of at least the top bar. Oh, I see what you mean, maybe these details. Currently you cannot, but I would love to have it so that you can programmatically insert different statements for a certain type, like a custom type that you might have, and just give you the machinery, the way in, to set different tabs. Currently it's not available, but that could be opened up as a custom thing later on. Thank you; I never got that request before, although I thought of it. I would suggest you put it in an issue in the repo, and I will definitely pencil that in for future work, because that's a good one. It's kind of an obvious one, actually.

It's kind of an interesting question too: what would you consider as a competing package for the analysis that's being performed here?

Sorry, what's the question again?

Okay, sorry. What are the competing packages for this type of amazing analysis?

Oh, do you mean this type of scan?

Yeah, I guess so.

I guess there are quite a few. skimr is one big one. I believe there's a summary, a table summary kind of package as well. It's hard to come up with names when it comes right down to it. There are at least a half dozen to maybe a dozen of them. I bet you the most popular one at the moment is skimr, and, I don't know what it is, I think it's called... I don't know. There are a few of them.

Yeah, a common one is naniar, and one is visdat.

Yes, naniar is one. It does a lot of this, but I think it's getting into the other stuff too. I don't have the missing data report up here, but that's also quite similar. It does a much better job with that because it specializes in that. Basically, what I'm trying to do is make this general, with a lot of breadth, as it were. And yeah, there are quite a few of them, but I think skimr is probably the most popular of these types.

I think one last one just popped up: what's the suggested data size limit for a scan?

It's a good question. What's the upper limit? I think what makes it slow would be more columns, because for each column it's creating one of these sections, and just lots of them, essentially. So, yeah, that's pretty much it. Yeah, I wouldn't go too high.

Summary and moving on

Great, thanks. That's all the questions I found for now.

Okay, great, thanks. Okay, so I'll continue on. First, I'll summarize. There's not much to summarize in this little section, but I'll do it anyway. So, I said here it's a great idea to examine data you're unfamiliar with using scan_data, and an even better idea is using draft_validation, because we can skip a lot of frustration, especially initially. Maybe always use draft_validation just to get those tables within the validation framework right away without having to research every single thing. Like I said before, it's a great jumping-off point. You can always tweak things. It's guaranteed to work initially because it's just based on the data, and you may discover lots of things you probably wouldn't think of. It's pretty comprehensive. It checks columns for existence, through the schema. It checks for types. It checks for duplicate rows, and if there are duplicates, it won't add that step. But it tries to populate the validation plan with a lot of steps, which is good. So, yeah, I recommend these. There are lots of little customizations you can do. I think it's a great way to start off. I probably should have led with that, but I wanted to start with some theory first, I suppose.

Expect and test functions

Okay, so I'm going to close this up. I see some stuff in the chat, but maybe nothing. Oh, yeah, okay, there we go. Not too much in terms of questions, so I'll move on to the third module here. We're making great time here, which is kind of good, so a little worried about that. Okay, so functions, functions, functions. This package has lots. We actually have another set of functions which mirror exactly all those validation functions you've seen, except they include expect_ at the beginning or test_ at the beginning. So, indeed, if you look at any one of these function documents, I kind of lied before, because each one of these help articles does include quite a few functions, but they're of the same type. So, there's col_vals_between(), which is the validation function. Use that with an agent or directly on the data. Okay, and there's more. There's also expect_col_vals_between(), and there's test_col_vals_between(). They all have the same ending. They're all the same type. The way they're implemented is just a bit different. What you get out of them is different.

So, I imagine everybody here's heard of testthat. It's basically a testing framework for R packages. You test functions. This is kind of like that. It fits within that framework. The expect_ functions are expectation functions that can work inside unit tests. So, I'll show you that in a second. These test_ functions, they're a bit different. All they do is give you TRUE or FALSE afterwards. Okay, so you can use them individually. They're great for programming. Say you want to have conditionals: you can use one or a bunch of these in your conditional statement, and you should expect logicals to be the return value of each of these. Okay, so that's the differentiation. That's why you have these different cousins of this function here. Each one of these has an associated expect_ and test_ function. So, there's 36, 36, 36 of all these functions that are in the validation part of the package. Okay, so let's try using this. Let's use expectation functions.
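As a rough sketch of the three variants just described, here is the same check on column c of small_table written each way. The column and bounds are chosen only for illustration, and vars() is used for column selection (recent pointblank releases also accept a bare column name).

```r
library(pointblank)

# Validation function used directly on the data: the table is passed
# through if the check succeeds (by default it stops with an error otherwise)
tbl_out <- col_vals_between(small_table, vars(c), 0, 10, na_pass = TRUE)

# Expectation function: intended for use inside testthat tests
expect_col_vals_between(small_table, vars(c), 0, 10, na_pass = TRUE)

# Test function: returns a single logical value
is_ok <- test_col_vals_between(small_table, vars(c), 0, 10, na_pass = TRUE)
is_ok
```

The arguments are the same in all three forms; what differs is what comes back, which is why the test_ form is the convenient one inside an if statement.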

As I said, testthat has a collection of functions that begin with expect_. We copy that, essentially. We fit within that framework. It's not really copying, I guess. So, we can put these in a test file inside tests. That's usually how it works: tests/testthat. Okay. But we can also run them independently of being inside that test framework. We can just demonstrate it. Okay, so I'll show you column c of small_table. It contains these values right here. Okay, so we're going to test that column. Our expectation with this column of small_table is that values are between 0 and 10, and NA values are permitted. We have a few NA values. If we don't explicitly permit that, then we'll have failing test units. So, there's a new argument here, na_pass. This is also available in the standard validation function. If you set that to TRUE, that means NAs are passing automatically. We're accepting those. Okay. I didn't put the argument names here, but these are left and right, so the bounds. Okay. So, let's actually run this.

We get nothing. That's expected. When an expect_ function works, you get nothing. Basically, nothing's returned. Totally fine. Within a test framework, it knows that it passed if nothing's returned. Okay. This one's going to error. I know that ahead of time. That's how I labeled this chunk, so this whole thing runs. So, between 0 and 7 this time, na_pass = TRUE, just like before, but we reduced the range, and we know we have an 8 in there and some 9s. So, it's not going to work. What you get is this. It's the same message you've seen before with just col_vals_between(), but a little bit different inside of testthat. So, that's a little behind-the-scenes stuff, but basically this is how you can check that this works in general.
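The passing and failing expectations described here can be reproduced with something like the following. This is a sketch rather than the exact workshop chunk; tryCatch() is added only so the failure message is captured instead of halting the script.

```r
library(pointblank)

# Values in column `c`, including the NAs mentioned above
small_table$c

# Passes quietly: every non-NA value is within 0 to 10 and NAs are allowed
expect_col_vals_between(small_table, vars(c), 0, 10, na_pass = TRUE)

# Narrower range: the 8s and 9s in `c` now fail, so this signals an error
failure <- tryCatch(
  expect_col_vals_between(small_table, vars(c), 0, 7, na_pass = TRUE),
  error = function(e) conditionMessage(e)
)
failure
```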

Okay. So, as I said, there's 36 expect_ functions. It's a lot, so it's overwhelming. We can do a kind of cool thing. We learned how to use draft_validation() before to create a validation plan. It's kind of nice. There's also another function, which is similar in a way. It's called write_testthat_file(). What it does is create a test .R file using the agent from the draft validation file. I sort of combined two different things here. So, let me show you how that works. I created the files ahead of time, so you can see that. If you run this, you get the file, game revenue validation, because I set that file name. Basically, this is another dataset in pointblank, game_revenue. It's kind of a bigger one. It's got 2,000 rows. Not that big, but it's bigger than small_table, that's for sure. So, we've got this. We made that file, game revenue validation. Let's take a look at that. Sorry, one sec. Okay. Here we are. Great. So, this is using draft_validation(). It does its thing. It creates this giant thing here.

So, what I've added at the bottom (I know this runs perfectly, and it creates an agent right here and assigns it): I've added another line, write_testthat_file(), and I've given the agent to that. I'm naming the file that this is going to create game revenue. I'm just going to put it in the working directory. By default, the path is going to be the testthat path, if it finds it. So, it does some stuff to try to find where it should go. But here, we're putting it in the working directory. If we run this, I have the file. It's called test game revenue, because it knows how to name it to fit within the testthat framework. They all begin with test-. So, I'm going to open up that file. Okay. Great. It says at the top, generated by pointblank. It libraries in pointblank. It creates that table, because you need that for all the tests. It does that in a separate statement at the top. And then the individual steps are each encased in their own test_that() call. So, we now see expect_col_is_character(). Before, it was just col_is_character() and then all these values. Now, we have expect_. So, it's kind of cool. And these functions that begin with expect_ and test_ have their own special threshold argument. It's kind of like a simplified version of actions, because we don't really need the full thing there. We just need some way to say that this passes or doesn't pass based on a single threshold. So, we have that there. We can have either this or some decimal value. But by default, it always chooses 1. Okay. This can totally be changed, because you're just getting a file out of this. But it's kind of cool. So, now, it's basically this, but in this form.
And so, if you were to, say, for instance, create an R package and you had some data you had to integrate as part of it, you can run tests on that data. Say the data has to change quite a bit. You're changing the data once in a while. This is nice to have. You just run this again and see if the data fits those expectations. And maybe you change these things if the data evolves to something else. But at least you have something to know that this important data that you're using does fit expectations and it can be used within this framework. So, kind of cool. Kind of cool. You can do this.
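A minimal sketch of that workflow on a smaller table is shown below. It assumes an agent built with a table-prep formula (the ~) so the generated file can load the table itself; the two validation steps, the name "small_table", and the temporary output directory are illustrative choices, not the workshop's game revenue setup.

```r
library(pointblank)

# Agent with a table-prep formula so the test file can re-create the table
agent <- create_agent(tbl = ~ pointblank::small_table) %>%
  col_is_character(vars(b)) %>%
  col_vals_between(vars(c), 0, 10, na_pass = TRUE)

# Hypothetical output location (the workshop used the working directory)
out_dir <- file.path(tempdir(), "pb-testthat-demo")
dir.create(out_dir, showWarnings = FALSE)

write_testthat_file(
  agent = agent,
  name = "small_table",   # the written file gets a "test-" prefix
  path = out_dir,
  overwrite = TRUE
)

test_files <- list.files(out_dir, pattern = "^test-small_table")
test_files

# Print the generated expect_*() calls
cat(readLines(file.path(out_dir, test_files[1])), sep = "\n")
```

Because the result is an ordinary R file, thresholds or individual expectations can be edited by hand afterwards.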

Okay. So, I'm gonna go back. I'm gonna close these down. Great. And those are, again, in the repo. They're in the cloud project. Those files will always be there for reference. Okay. So, oh, I could have run that. Maybe I will run that right now just so I can see it, because we don't often see that. Okay. So, it's gonna be test game revenue. The button is here. So, you can actually run it outside of the framework. I'm just gonna run it. Okay. Testing file using testthat. It all passes. Done. Okay. So, if this file were part of a larger number of tests that were in that directory, it would be just one row. But we're just doing an individual file. So, pretty cool. It works.
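Running a single test file from code, rather than with the editor button, might look like this. The file written here is a small hand-made stand-in for a generated one (its name and contents are hypothetical), and testthat::test_file() does the running.

```r
library(testthat)

# Hand-written stand-in for a generated test file (hypothetical content)
test_path <- file.path(tempdir(), "test-small_table_demo.R")
writeLines(c(
  "library(pointblank)",
  "test_that(\"values in `c` are between 0 and 10\", {",
  "  expect_col_vals_between(small_table, vars(c), 0, 10, na_pass = TRUE)",
  "})"
), test_path)

# Run just this one file, outside of a package's test suite
results <- as.data.frame(test_file(test_path))
results[, c("test", "nb", "failed")]
```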

Okay. Great. So, that's all the expect_ functions and write_testthat_file(). Any questions? I don't think there are any in the chat.

One quick one before we go too far. I think there's a question about part two. I'm not sure which part two. But you mentioned the column names can have a label. How is this done?

Ah, yes, yes, yes. Okay. Great. Perfect. I'm not sure how it's done. I think it's through the labelling package, if I remember correctly. It allows you to have... oops, sorry. I'm trying to see how I can do this. Console. Perfect. If you look at this, it's not obvious at all to me where it is. I think if you use attributes(), they appear. And this is one of those things that I never get right. I'm pretty sure I have it wrong. Here we go. Names. No, that's not it. Maybe you have to go to an individual column. I mean, I know they're in there. How they get in there is a different story. I'm never quite sure how to access them. Maybe you have to do a column name. Oh, good. There you go. You have to go to individual columns. That's where the attribute is. So, label: Study Identifier. You can do that yourself if you know how to set attributes. I think it's just attr(), and then set it. Or you can use a package. I believe it's called labelling. Or labelled. labelled is the one, I think. Yeah, this will be the one. Wish it would give me a description, but that's not to be. But, yeah, if you use labelled, I think it's easier to set those labels. Otherwise, what pointblank does is look for the label attribute in each of the columns. That's all it looks for. If it's not there, it's not going to show anything. If it is there, which is a pretty rare case, it does show it. But this is the key to it right there.
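For reference, setting and reading a column label with base R attributes can be sketched as follows. The data frame and column name are made up for illustration; the labelled package offers helpers such as var_label() for the same job.

```r
# Hypothetical table and column name, for illustration only
study <- data.frame(STUDYID = c("S01", "S01", "S02"))

# Set a "label" attribute on one column
attr(study$STUDYID, "label") <- "Study Identifier"

# Labels live on the individual columns, not on the data frame itself
attributes(study)$label          # NULL
attr(study$STUDYID, "label")     # "Study Identifier"
```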

Test functions and conditional logic

Thank you. I think that's all. Yeah, thanks. Okay, great. Okay. So, we're back to pointblank test_ functions. Another variant of these validation functions. So, yeah, a collection of test_ functions. 36 of them, again. They're used to give us a single TRUE or FALSE. Okay. That's kind of nice to know. So, say we want a script to error if there are any NA values in the date_time column of the small_table dataset. We can write something like this. So, if I run this, will it error? No, it didn't. Okay. Because it's not true. But we wrote a little message in case that were true. The negative case, it's a little crazy. But essentially, you always want to have the negation in front, and then the stop condition inside. Okay. But in this next case, this one does have an error. Okay.
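The pattern described here (negation in front, stop() inside) might be written like this. It is a sketch that assumes the check in question is test_col_vals_not_null() on date_time; the error message is made up.

```r
library(pointblank)

# TRUE when the column has no NA values
no_missing <- test_col_vals_not_null(small_table, vars(date_time))

# Negate the test so that stop() is reached only when the check fails
if (!no_missing) {
  stop("There are `NA` values in the `date_time` column.")
}
```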

So, in this case, we're using test_col_vals_increasing(), or the test that the column values are greater than 1 in column a. Either one of these. You don't really know going in. You just know that one of these is going to be true, and it will enter this state right here. So, I'm going to run it. Yeah. I mean, which one is it? I don't really know. So, let's see if it's this one. Is this true? That's FALSE. So, it's got to be this one here. I'm going to take this. Run it. There's your TRUE. Okay. So, the cool thing about this is you can change your statement to be pretty complex. You can change the operators that are used. You can basically program with these, because you're just expected to get a TRUE or FALSE, and you can do what you want with it. So, it's pretty nice when you're programming. Sometimes you'll need it. Sometimes you won't. We saw with the email case that it could be useful, but we were really using it differently there. At any rate, this could be useful at certain points.

So, just to sum up, we can validate tabular data in a testthat workflow with the expect_ functions. I did mention that cool function right here, write_testthat_file(). It makes it really easy to take an agent and make a testthat file from it. Basically, you iterate through making an agent and running the validation. Looks good. We just capture that in a file and then put it in the right place for testing. The test_ functions, that collection is useful for developing conditional logic in programming contexts. I mean, there are probably other workflows or use cases, but this is the obvious one for me.
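Combining two test_ results in one condition can be sketched as below. The exact statement used in the workshop is not shown in the transcript, so the `||` combination and the message are assumptions; tryCatch() only captures the error so the rest of a script could continue.

```r
library(pointblank)

# Column `a` is not strictly increasing...
increasing <- test_col_vals_increasing(small_table, vars(a))
# ...but all of its values are greater than 1
above_one <- test_col_vals_gt(small_table, vars(a), 1)

result <- tryCatch(
  {
    if (increasing || above_one) {
      stop("One of the two conditions on column `a` is TRUE.")
    }
    "no error"
  },
  error = function(e) conditionMessage(e)
)
result
```

Since each call returns a plain logical, any of R's logical operators can be used to build more involved conditions.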

Break and Q&A

All right. We are at maybe halfway through the workshop, and we're at module 3. I think we can take another break. Breaks are good. I'm gonna say we have three more modules. This is actually perfect timing, as it were. And I know these ones are a little bit smaller and a bit more relaxing than the first two, which were maybe a bit heavy. Again, any questions about the first parts or in general? Anything that was confusing? Now's a great time. Or take a break first, and we can have questions after the break. How about we take a six-minute break? So, we're at 15 minutes before the hour, and we'll begin again. I'll try to catch all the questions from Slido or the Zoom. Thank you. Thanks. Yeah, because I'm not paying attention to those. But thank you. Appreciate that.

Okay. We are probably back now. I'm trying to think. Sorry. Can I trouble somebody with Slido updates? I really should have gotten that Slido. That was totally my fault for being... Yep. Just one more question from Slido. With scan_data(), can you define and use a grouping variable, like reporting analysis by gender? No, unfortunately, you can't do that at all. It just takes columns holistically, and you can't split them. Not strangely, but incidentally, you can split by categories or labels inside a standard pointblank validation. It's an argument we didn't see, but it's called segments. You segment your data, and there's a special way of defining that. It basically splits the validation across those segments. But unfortunately, for scan_data(), that doesn't happen.
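As a hedged illustration of the segments argument mentioned in that answer, the sketch below splits one check by the levels of column f in small_table. The column choices and the threshold of 100 are arbitrary.

```r
library(pointblank)

# One col_vals_gt() check, expanded into one step per level of column `f`
agent <- create_agent(tbl = small_table) %>%
  col_vals_gt(vars(d), 100, segments = vars(f)) %>%
  interrogate()

# Number of validation steps created, and whether all of them passed
n_steps <- nrow(agent$validation_set)
n_steps
all_passed(agent)
```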

One more. Does pointblank play well with RStudio Connect deployments, like a living pointblank report? It does. Katie Masiello at RStudio has been doing a lot on that front, creating demos. I believe if you go to the repo pointblank_demo (I will just try to find that on GitHub), it has some examples of deployments, and they're successful deployments as well. They generate daily reports. Let me just see if I can find that pointblank_demo. Okay, here we go. Hopefully, this gets at it. Here we go.