Marly Gotti | Risk Assessment Tools: R Validation Hub Initiatives | Posit

From rstudio::global(2021) Pharma X-Sessions, sponsored by ProCogia: we will present some of the resources and tools the R Validation Hub has been working on to aid the biopharmaceutical industry in the process of using R in a regulatory setting. In the talk, you will learn about the {riskmetric} R package, which measures the risk of using R packages, and you will also see a demo of the Risk Assessment Shiny application, which is an advanced user interface for {riskmetric}. About Marly Gotti: Marly Gotti is a Senior Data Scientist at Biogen and a former RStudio intern. She is also an executive committee member of the R Validation Hub, where she advocates for the use of R within a biopharmaceutical regulatory setting. Learn more about the rstudio::global(2021) X-Sessions: https://blog.rstudio.com/2021/01/11/x-sessions-at-rstudio-global/

image: thumbnail.jpg

Transcript

This transcript was generated automatically and may contain errors.

I'd like to start by thanking Daniela and Samantha and Phil for organizing this session. I am very excited to talk to you about the R Validation Hub and the work that we have been doing to aid you in the process of using R in a regulatory setting and of course let you know how you can contribute.

So this is a quick overview of my talk. I'm going to start by telling you what the R Validation Hub is. I'll go quickly through the white paper, which talks about an approach to assess the accuracy of R packages within a validated infrastructure. Following this white paper and using this philosophy, I'm going to introduce you to two of the tools that we have been working on: an R package called riskmetric and the Risk Assessment Shiny application. At the end, I'll provide you with further resources where you can obtain more information and learn how you can be part of the R Validation Hub.

And of course a disclaimer: the views, thoughts and opinions expressed in this presentation belong solely to me and not necessarily to my employers, organizations or committees.

About the speaker

So a little bit about me. Oh, excuse me, this is a very out of date picture, it looks more like this now. So I am a pure mathematician and a data scientist. I worked as an intern for RStudio with Max Kuhn in the tidymodels team. I enjoy anything R. I have taken the certifications for Shiny and Tidyverse, and I'm still waiting for Greg to put out more trainings so I can have fun taking them.

I currently work at Biogen in an awesome team led by Bob Ingle, and I'm also part of the executive committee of the R Validation Hub, which now has eight members. The chair of the R Validation Hub is Andy Nicholls from GSK. We have members from Merck, Biogen, Genentech, RStudio and Phastar. The R Validation Hub was formed in 2018 and is an R Consortium working group.

We have around 100 members from multiple organizations across the pharmaceutical sector. As you can see in the word cloud on the right, our members include Johnson & Johnson, the FDA, Amgen and Eli Lilly.

The R Validation Hub and white paper

Now the R Validation Hub is a cross-industry initiative to enable the use of R by the pharmaceutical industry in a regulatory setting, where the output may be used in submissions to regulatory agencies. To support our mission, we wrote a white paper titled A Risk-Based Approach for Assessing R Package Accuracy Within a Validated Infrastructure. This paper was written by Andy Nicholls from GSK, Paulo Bargo from Janssen and John Sims from Pfizer, and the aim of this paper is to propose a possible risk-based approach for assessing R package accuracy within a validated infrastructure.

This white paper addresses concerns raised by statisticians, programmers, quality assurance teams and others within the pharmaceutical industry about the use of R and selected R packages as a primary tool for statistical analysis in regulatory submission work.

Now the definition of validation that we use in the white paper is based on the FDA definition of validation, which you can read more about in the linked article. There, validation can be separated into three main components: accuracy, reproducibility and traceability.

In the white paper, we focus on accuracy, that is, on the qualitative assessment of correctness or freedom from error. And in the white paper, we propose a risk-based approach to establish the accuracy of contributed packages. So we make a distinction between core and contributed R packages.

Core packages, we say, are those packages that are part of the official R distribution as formally released by the R Foundation. This software is commonly referred to as base R plus recommended packages. Now these packages are considered to be low risk, and we make this determination based on how the R Foundation develops the R language.

You can read more about how the R Foundation approaches developing the R language, their testing approach and how they release versions in the linked document. By reading this document, you may agree with us that the R Core team controls R like any of the companies that produce the commercial software that you may buy in this space. So the core R packages are very well controlled and can be considered to be low risk.

Now, as the complement, we have those packages that are contributed, that are made by the community, which are about 17,000 packages now on CRAN. The risk of these packages really depends on various factors, like how well is the package developed? Is it well tested? The package may have varying software development life cycles that you should be aware of. And you can also check how much exposure the package has had within the user community.

Assessing contributed packages

And in the white paper, we propose, as I mentioned, an approach to assess the accuracy of the contributed packages, as the core packages are considered to be low risk. So once someone in your company wants to use one of these contributed packages, the question is, what steps can you take to start to assess the risk of those packages? The white paper can aid you in taking the first steps to start assessing the risk of using an R package.

So once you have decided that you want to use a package, the next step that we propose is to assess the package based on four criteria: purpose, maintenance good practice, community usage, and testing.

And so when talking about purpose, it is assumed that any package that we're talking about is intended to be used within a GxP workflow. So packages directly manipulating data, creating graphics, or running a statistical model that forms part of the submission, we're referring to those. In terms of risk, any package should be weighted equally. But we do recognize that statistical modeling packages deserve special attention. And we know that there is an innate difficulty in identifying errors in those modeling packages versus those that, say, manipulate data.

So for instance, if you would like to test the arrange function of dplyr, it is relatively easy to do. You can use a small data set to test this function and check that the corresponding column has been sorted correctly. On the other hand, statistical models are harder to test. A statistician can roughly check that a model is correct, but not that it is correct to the decimal places.
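As a minimal sketch of that kind of check, assuming dplyr is installed (the data set and column names here are made up for illustration), you could sort a three-row data frame with arrange and assert the result:

```r
library(dplyr)

# Small, hypothetical data set whose correct ordering is known in advance.
patients <- data.frame(
  id  = c("c", "a", "b"),
  age = c(52, 34, 47),
  stringsAsFactors = FALSE
)

# Sort by age in ascending order (the default for arrange()).
sorted <- arrange(patients, age)

# Check that the column is sorted and that whole rows moved together.
stopifnot(
  identical(sorted$age, c(34, 47, 52)),
  identical(sorted$id, c("a", "b", "c"))
)
```

The expected ordering is written out by hand, which is exactly what is hard to do for the output of a statistical model.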

Now in terms of maintenance good practice, it is good to address questions like: how is the documentation of the package? Is the source code public on GitHub or any other medium? And we do know that exposing a package to the community helps reduce the risk, as even if the package has no tests but it's popular, you know that it has been widely used by the community. And lastly, testing is a crucial part of the SDLC and another maintenance best practice metric.

In general, the white paper proposes this workflow, which you are encouraged to read more about on our website, which I'm going to provide a link to at the end of the presentation.

A misconception about validation

Now before showing you the application that we have been working on, I want to point out a misconception when talking about open source software like R. It's important to note that no statistical software can be purchased as pre-validated software in this regulatory context. What happens is that validation is up to the user to implement. So for instance, SAS is not pre-validated software. However, given the dominance of some software in the industry, many companies have collected evidence over a long period of time that details the testing and validation of functions, procedures, etc.

And so when you use the software, rather than reinventing the wheel and duplicating testing which has already been performed in the past by others, we make the decision to use that software based on its history of extensive previous use, as we decide to do with SAS procedures, for instance. So following this philosophy, you can see R packages as having received validation through a variety of sources: validation by the original authors of the package, by users of the package in academia, by users in a wide variety of industries like Google, Amazon, Microsoft, by companies such as Mango Solutions, and even by the FDA themselves, who have been using R internally now for some time.

So in the same way that we can trust a longstanding SAS procedure, we can start thinking about R packages in the same way.

The riskmetric R package

So if we believe this to be the case, then we need to look at which packages we can add to our safe list. To do that, we have been working on the riskmetric R package, which follows the philosophy in the white paper and tries to answer questions like: who are the authors? How many times has the package been downloaded? Is the package well tested? What is the test coverage of the package? Is the source code public?

And so riskmetric, as I mentioned, is an R package which measures the risk of using R packages. This risk is calculated based on a number of metrics that are meant to evaluate development best practices, code documentation, development sustainability, and community engagement. Now it's important to know that the risk portrayed by riskmetric does not represent the risk of damaging the system in which the package is installed, or the risk that internal statistical functions are incorrect. Although indirectly, the package does speak to whether the statistical functions are correct by looking at the testing, the community usage, the bug reports and so on.

So if you apply the riskmetric R package to ggplot2, you will obtain a low risk. The risk ranges from zero to one, zero being the lowest risk and one being the highest risk that you can get. With ggplot2, you obtain 0.2, so that is a low risk. And if you use a new package like rules from tidymodels, which is a new package created this year, if I'm not mistaken, you will get a high risk. And of course, getting a high risk will point you to directions in which you have to look further into the package. Like, do you have to create tests? Do you have to provide more documentation? But in general, this doesn't mean that you can't use the package, but rather that you probably have to write more tests.
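To make the zero-to-one scale concrete, here is a small base R sketch. It assumes, purely for illustration, that each metric is scored between 0 and 1 with higher meaning better practice, and that the overall risk is one minus a weighted mean of those scores. The metric names, values, and equal weights are hypothetical, and this is not presented as the exact formula riskmetric uses.

```r
# Hypothetical per-metric scores in [0, 1]; higher means better practice.
metric_scores <- c(
  has_vignettes = 1,
  has_news      = 1,
  bugs_status   = 0.8,
  downloads_1yr = 0.9
)

# Equal weights are an assumption of this sketch.
weights <- rep(1, length(metric_scores))

# Risk is taken here as one minus the weighted mean score,
# so 0 is the lowest risk and 1 is the highest.
risk <- 1 - sum(metric_scores * weights) / sum(weights)
risk
```

Under this assumption, a package with few downloads, no vignettes, or many open bugs drifts toward one, which is the kind of signal that points you to where more tests or documentation are needed.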

And so let's take a look at the main functions inside riskmetric. As I mentioned, the package is on GitHub in the link provided. Feel free to contact us to contribute. We're always looking for people to help build this package.

The package is on GitHub, as I mentioned, and not on CRAN yet. So once you install the package using devtools and load it, you can follow our workflow, which starts by finding a source for package information using the package reference function, pkg_ref. It will look for packages that are locally installed, on CRAN, or on Git.

Once you have applied the package reference function, you can move on to assess. What that will do is look at the different metrics and find information for them, metrics such as whether the package has vignettes, whether the news is current, and so on. And lastly, you can apply the package score function, which will score the package on those metrics based on the assessment criteria.
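The three steps above can be sketched as a single pipeline. This is a hedged sketch rather than the exact code from the slides: it assumes riskmetric and dplyr are installed and that the function names are pkg_ref(), pkg_assess() and pkg_score() as exported by riskmetric. Metric lookups may also need internet access, and the as_tibble() step may be optional depending on the riskmetric version.

```r
# Assumes riskmetric and dplyr are installed. At the time of the talk the
# package was installed from GitHub, e.g. with
# devtools::install_github("pharmaR/riskmetric")
library(dplyr)
library(riskmetric)

scores <- pkg_ref(c("ggplot2", "rules")) %>%  # find a source for each package
  as_tibble() %>%                             # one row per package
  pkg_assess() %>%                            # gather information for each metric
  pkg_score()                                 # score the metrics and the overall risk

scores
```

Each row of the result describes one package and each column holds one piece of information about it, including the overall risk.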

So then the results will be assembled in a data set that contains the overall risk and the other metrics. As you can see below, you will see the output of the metrics. To improve how it looks, let's use kableExtra. And there you can see the different metrics. Each column is some new information about the package, and on the rows we have particular packages, so we have ggplot2 and rules. You can see the version that we are assessing, the risk as portrayed by riskmetric, and the downloads in one year score, which measures the number of downloads in the last year. So you can see how much ggplot2 has been downloaded versus rules, and so on.

The Risk Assessment Shiny application

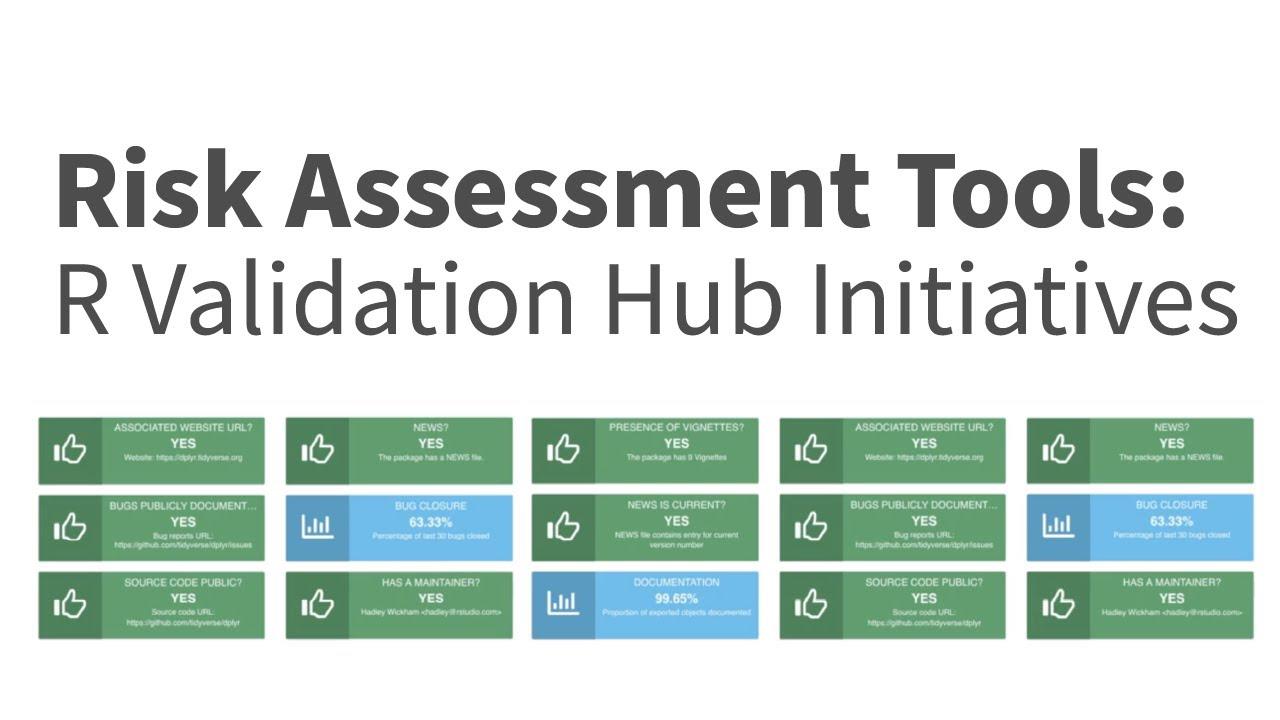

Now, as you can see, reading the metrics on this table, although we have used kableExtra, is maybe hard to understand. And you cannot interact with the risk and with the packages. So to provide a better user interface to deal with the risk, we have been working on a Shiny application called the Risk Assessment application, which provides you a framework where you can interact with the risk, provide comments, and create reports.

And in general, you can take advantage of using Shiny, meaning that you don't need to code. You don't need to explicitly call those functions in riskmetric. And you have an interactive way to measure risk.

So I'm going to do something risky. I'm going to try to show you what the application looks like and give a quick live demo. The application is on GitHub, and feel free to reach out to us to contribute. We have meetings weekly and you can join us. The link to do that is on our website.

So let me try to switch to the other window and see how that goes.

So once you load the application, this is what it looks like. You can provide a user ID and a role. When you click proceed to application, you can upload a list of packages as a CSV file. The structure of the CSV file is as you can see here on the screen, and you can download this template and use it for the application. For the sake of time, I have uploaded three packages.

So if we take a look at dplyr, for instance, you will see the same metrics that we saw on the table portrayed here, but you will have more information about what the metrics mean. And instead of seeing 0.9, you will see a percentage, as you would expect. So it will tell you: does it have vignettes? Is the news current? How many exported objects are there? It will tell you the percentage of bug closure. Does it have a maintainer?

You can also look at the number of downloads in the last six months or one year, and you can see that dplyr is widely popular. You can provide comments on the metrics. And once you provide comments and are happy with how the metrics or the risk of the package are portrayed, you can provide an overall risk, low, maybe high, depending on your assessment of the package. After you do that, you can download a report containing all the metrics, the number of downloads and your comments on the package.

So the application has other functionalities that you can play around with. And again, the application is on GitHub, so feel free to do so.

So that's the application. Again, feel free to go to GitHub and play around with the application, and provide feedback or suggest new features.

Further resources and acknowledgements

And so to find out more about these tools, you can go to the pharmaR website, where you can see the blog posts, presentations and the white paper. Also, feel free to join our mailing list. And you can send a message to the email provided on the slide to contribute. Also, if you want to learn more detail about the white paper and these tools, we did a workshop last year at R/Pharma. There is a link on the slides, which will be shared later, where you have the video of the workshop and the testing exercises that we did during the workshop.

And I'd like to end by thanking the R Validation Hub and the contributors of the riskmetric package, Doug, Jilan, Eric, Eli, and I'm pretty sure I'm missing some contributors. And the contributors to the Risk Assessment application, especially Robert, Aaron and Maya, who have been working with me on the application. And I'd like to thank RStudio and ProCogia for organizing this pharma session, and all of you for coming to the session. Thank you.