Lou Bajuk & Sean Lopp | RStudio: A Single Home for R & Python | RStudio

Many Data Science teams today are bilingual, leveraging both R and Python in their work. While both languages are tremendously powerful, teams frequently struggle to use them together. We’ve heard from our customers how even experienced data scientists familiar with both languages often struggle to combine them without painful context switching and manual translations. Data Science leaders and business stakeholders find it difficult to make key data science content easily discoverable and available for decision-making, and IT Admins and DevOps engineers grapple with how to efficiently support these teams.

In this webinar, you will learn how RStudio helps Data Science teams tackle all these challenges, and make the Love Story between R and Python a happier one:

- Easily combine R and Python in a single Data Science project
- Leverage a single infrastructure to launch and manage Jupyter Notebooks and JupyterLab environments, as well as the RStudio IDE
- Organize and share Jupyter Notebooks alongside your work in R and your mixed R and Python projects

This webinar will show examples of all these capabilities, and discuss the benefits of leveraging R and Python.

About Lou: Lou is a passionate advocate for data science software, and has had many years of experience in a variety of leadership roles in large and small software companies, including product marketing, product management, engineering and customer success. In his spare time, his interests include books, cycling, science advocacy, great food and theater.

About Sean: Sean has a degree in mathematics and statistics and worked as an analyst at the National Renewable Energy Lab before making the switch to customer success at RStudio. In his spare time he skis and mountain bikes and is a proud Colorado native.

image: thumbnail.jpg

Transcript

This transcript was generated automatically and may contain errors.

Thank you all for taking some time out of your day. We're going to start by looking at an R Markdown document and how we can use R and Python together inside of that document with some special new magic that's available in the latest versions of RStudio.

We'll also talk about how once we've finished that document, it can be published and shared in a reproducible way so that others can view it. And perhaps you might introduce some lightweight automation. We'll look briefly at how a similar outcome can be achieved with Jupyter notebooks using shared infrastructure.

And then we'll talk about how the model we're creating in this document can actually be put into place so that decisions can be made based on that model. And we'll look at how you can maybe do that with an interactive app or through something like a RESTful API. And then finally, we'll talk about how those APIs and applications can also be developed using some of the new options inside of the RStudio tool set.

Setting up the environment

So we'll go ahead and jump in. And to set a little bit of context, I am using RStudio on a server. And so that means I'm going to access RStudio through a web browser. And there's a couple of really nice benefits to doing this. The first is that I have more resources available to me than I would on my laptop.

And specifically, if I go in to start a new session, you'll see we're taking advantage of a tool called Kubernetes that allows me to kind of on-demand specify what resources I want for this work. Another benefit of using a server is that it means everyone on my team, Lou and myself, are speaking from a common playbook. And in this case, we're explicitly defining our environment through a Docker image where our session is going to run.

But even if you're not using Kubernetes or Docker, by having your work on a server, everyone has a common home, which helps make collaboration a lot easier. And then finally, because we're on a server, we're a lot closer to our data, which means our work can scale in an easier fashion.

Now, in a moment, I'll be jumping into the RStudio IDE. But while I'm here, I just want to point out that one of the things we've done in the last year for multilingual teams is added the ability for other editing tools to be accessed from this same interface. So it's almost like a workbench, where you can pick up the right tool from that workbench regardless of what language your current project demands.

And that means for multilingual data science teams, everyone has all those benefits I just described of a common playbook, regardless of what editor they're using. And for IT, it's really nice to have this common front door where these different environments are going to be accessed.

The visual editor and R Markdown

So we'll go ahead and jump into RStudio. And at this point, I want to point out that everything you're going to see inside of the RStudio IDE, even though I'm using RStudio Server, is going to be available in any flavor of the RStudio IDE. So whether you're using the open source desktop, or RStudio Server open source, or the professional product like I am, you'll have access to the interoperability that we're about to go through.

So to set the stage just a little bit more, I'm doing my work here inside of an R Markdown document. If you haven't seen R Markdown before, it's basically a format where I can combine prose, so what I'm thinking, along with my code, and then the output of my code. But what's new in the latest version of RStudio is something that we're calling the visual editor.

And what that means is that even though I'm editing an R Markdown document, on the fly, I'm getting a live rendering of what this document looks like. So that means I can do things a little bit easier than I used to. So for example, if I needed to put in a table, I could insert a table really quickly. I can add Markdown to that table, and it will be rendered on demand. I can even do things like insert citations or emojis.

And so I can use all these different tools inside of the visual editor, and whatever I'm doing is going to show up as kind of a real-time preview. And the reason why I think this is so important is that regardless of what language you're working from, ultimately, a data scientist's key job is to communicate. And so being able to write effectively in a tool that supports really rich technical communication is vital for any type of data scientist.

And I've found that this visual editor makes things that used to be challenging for that type of technical communication really easy. One of my favorites is I can go in here and actually insert an image. I don't have to worry about figuring out where that image lives on disk, and I can even resize it interactively.

So we hope that regardless of what language, this visual editor is going to give you a head start as you're writing down what you're thinking and documenting your process. And it really combines kind of the best worlds of a Jupyter notebook and the RStudio IDE and R Markdown. And if you haven't seen this before, inside the latest version of RStudio, all you need to do is click this button in the upper right-hand corner. And what that button does is flip us back and forth between the plain text source code that's still available here, perfect for version control, and that pre-rendered view of the document.

The bike share data science exercise

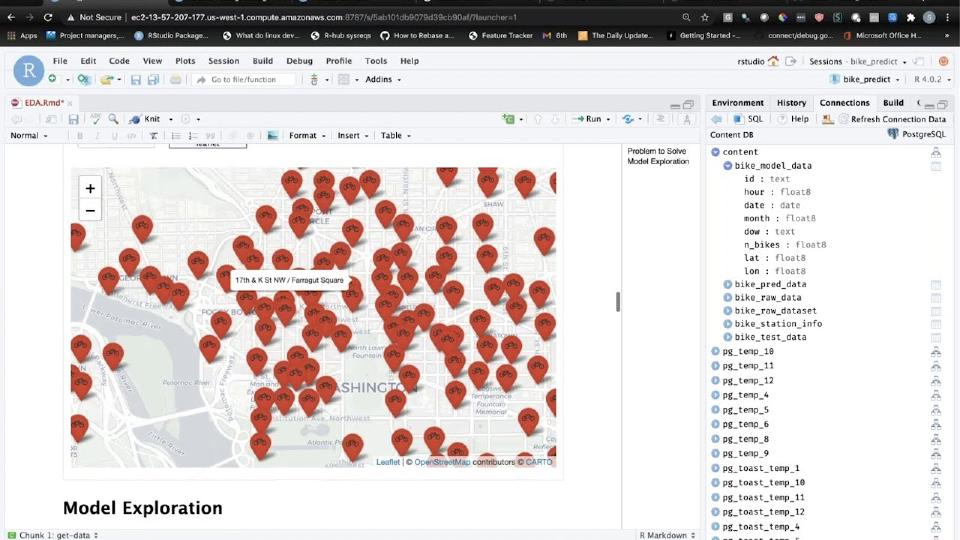

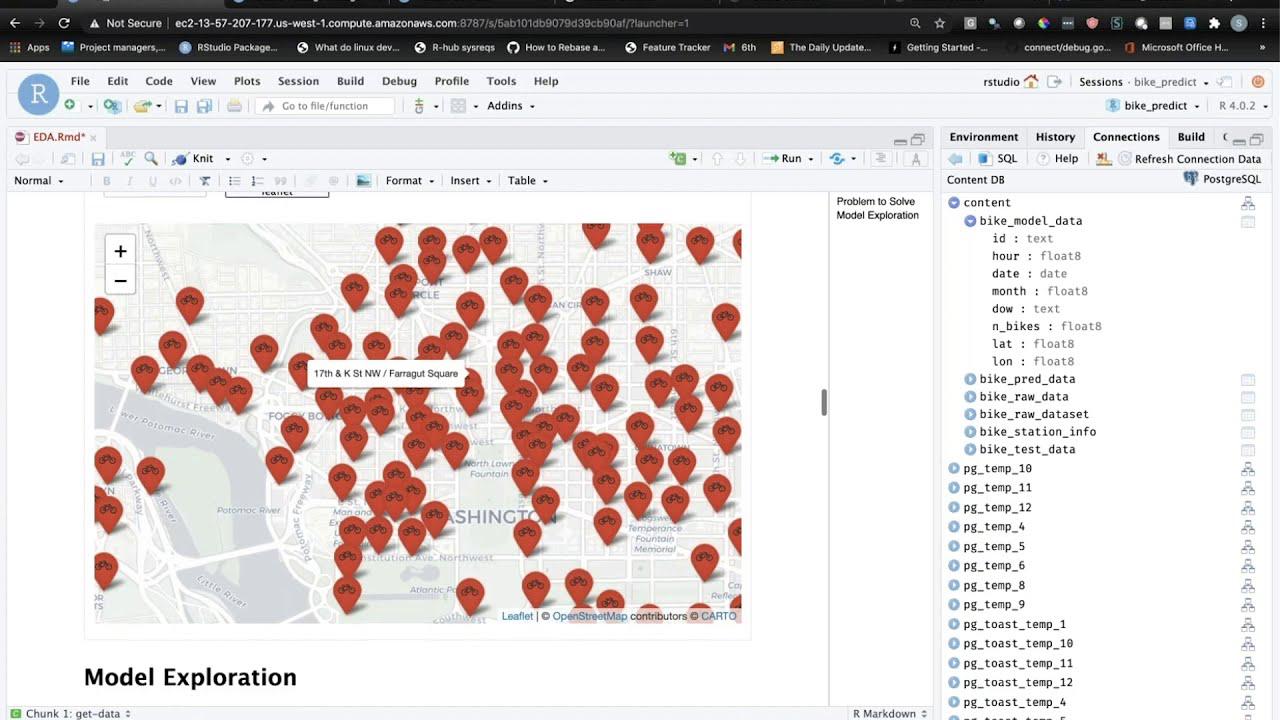

All right, so that's where we're doing our work, is inside of this visual editor. What are we going to do? Well, we have kind of a made-up data science exercise. And so like most data science exercises, we're going to start by pulling in some data. And this data is coming from a database. And again, something that we're excited about, regardless of what language you use, is the ability within RStudio to seamlessly manage connections to databases.

And so here I have a connection to a database called content. There's a number of different tables inside of it. And without switching to a different SQL editor, I can really easily preview the schema of this database table and even preview some of the records if I want. So I have this database. And I'm starting out by writing some R code that's going to operate within the database to do some basic transforms.

And so if you haven't seen this before, using dplyr, you can write R code, then that R code actually gets executed as SQL. And so that's what's going on here. So we're creating some data that we're then going to do an analysis on.
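The talk does this with dplyr in R, and the generated SQL is not shown. As a rough illustration of the same idea, that the transform runs inside the database and only the summarized rows come back, here is a minimal Python sketch using the standard library's `sqlite3`. The table name `bike_raw`, its columns, and the sample rows are assumptions made up for this example, not the schema used in the webinar.

```python
import sqlite3

# Hypothetical schema: one row per station per observation time.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE bike_raw (station_id INTEGER, ts TEXT, n_bikes INTEGER)"
)
conn.executemany(
    "INSERT INTO bike_raw VALUES (?, ?, ?)",
    [
        (1, "2020-01-01 08:00", 5),
        (1, "2020-01-01 08:30", 3),
        (1, "2020-01-01 09:00", 7),
        (2, "2020-01-01 08:00", 10),
    ],
)

# The aggregation is executed by the database engine; only the
# summarized rows are transferred back to the Python session.
query = """
    SELECT station_id,
           substr(ts, 1, 13) AS hour,
           AVG(n_bikes)      AS avg_bikes
    FROM bike_raw
    GROUP BY station_id, hour
    ORDER BY station_id, hour
"""
hourly = conn.execute(query).fetchall()
conn.close()

for row in hourly:
    print(row)
```

With a real database the connection would point at the server rather than an in-memory file, but the division of labor is the same: the query describes the transform, and the database does the work.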

So let's take a quick look at that data. Essentially, we have spatiotemporal data. And so we're looking at bike share data from the DC area. So those bike shares, if you've ever been walking around the city and you want to rent a bike, you can usually find a station with bikes lined up, and then you pay some money to be able to ride the bike around. And so the data that we have is how many bikes were available at any given station at any point in time.

So we have kind of this time series of bikes that are available. Then we also have kind of spatial component of where those bikes were located. So it's a pretty cool data set. And what we want to try to do is forecast into the future for a given station, how many bikes might be available. Say I have to commute home at 5 p.m., is there going to be a bike there that I can take?

Building the model in R

So we'll get started by doing that in R. And the first thing that we're going to do is create a test and training data set. And because we're working with time series data, it's really important that we don't accidentally use the future to predict the past. And so in R, we've created a test and training data set where the training data set sequentially occurs before the test data set.
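The split described above is done in R in the talk. As a minimal sketch of the same rule in Python, assuming each record carries a timestamp under a hypothetical `ts` key, the function below sorts by time and cuts once, so every training row occurs before every test row. The 80/20 fraction and the sample data are assumptions for illustration.

```python
from datetime import datetime, timedelta


def chronological_split(rows, train_fraction=0.8):
    """Split time-stamped rows so every training row precedes every test row."""
    ordered = sorted(rows, key=lambda row: row["ts"])
    cut = int(len(ordered) * train_fraction)
    return ordered[:cut], ordered[cut:]


# Made-up hourly observations, deliberately supplied out of order.
start = datetime(2020, 1, 1)
rows = [
    {"ts": start + timedelta(hours=h), "n_bikes": (h * 3) % 11}
    for h in range(10)
]
rows.reverse()

train, test = chronological_split(rows)
print(len(train), len(test))
print(train[-1]["ts"], "<", test[0]["ts"])
```

A random shuffle before splitting would break this guarantee, which is exactly the "using the future to predict the past" problem the speaker warns about.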

And now that we have these two things, we can go ahead and build our model. And I'm using a new R package or an ecosystem of R packages called tidymodels. There's a lot of great resources online if you want to dive into modeling in R. But essentially what this set of packages is allowing us to do is pre-process our data. And so we have here kind of latitude and longitude. That's the spatial element. We have the date and time. That's the temporal element.

But we've used some pre-processing tricks to create a factor for the day of week and also add some information about whether a given day was a holiday. So you have a nice, rich data set to do modeling on. And then the first thing that we might do is create a model in R. And so to do that, again, within this tidymodels ecosystem, I'm essentially creating a workflow that's going to take our model pre-processing along with this gradient-boosted engine and fit a simple regression model.
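The pre-processing in the talk is done with R packages. The sketch below shows the same kind of derived features, a day-of-week label and a holiday flag computed from a timestamp, in plain Python. The `HOLIDAYS` set and the function name `add_calendar_features` are hypothetical and exist only for this illustration; a real project would take holidays from a maintained calendar.

```python
from datetime import date, datetime

# Hypothetical holiday list, for illustration only.
HOLIDAYS = {date(2020, 1, 1), date(2020, 7, 4)}

DAY_NAMES = [
    "Monday", "Tuesday", "Wednesday", "Thursday",
    "Friday", "Saturday", "Sunday",
]


def add_calendar_features(ts):
    """Derive categorical and boolean features from a timestamp."""
    return {
        "day_of_week": DAY_NAMES[ts.weekday()],  # Monday is 0
        "hour": ts.hour,
        "is_holiday": ts.date() in HOLIDAYS,
    }


features = add_calendar_features(datetime(2020, 7, 4, 17, 0))
print(features)
```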

So we can go ahead and do that. And if we look at the results here, we have a predicted number of bikes along with the actual number of bikes, kind of what we'd expect. And we can evaluate how our prediction did. So one thing that we can do for that quick evaluation is plot the prediction versus the reality. And if our model was really good, all of our data points would fall along this y equals x line where predictions match reality. You can see we have quite a bit of variance from that line.

We could look at the R-squared value of our model and see that it's not very good. That's why I'm paid to give webinars and not models. But this hopefully gives you a sense of kind of what your workflow might start to look like on R. We can even, because this is a tree-based model, look at feature importance. And so you can see that our model is emphasizing the location of our bike share station and not putting as much weight on some of those factors that we created.
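For readers who want the R-squared check spelled out, here is a minimal Python sketch of the usual definition, one minus the ratio of residual to total sum of squares. The numbers are made up. Predictions that sit exactly on the y = x line score 1, always predicting the mean scores 0, and predictions worse than the mean go negative.

```python
def r_squared(actual, predicted):
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    mean_actual = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    ss_tot = sum((a - mean_actual) ** 2 for a in actual)
    return 1.0 - ss_res / ss_tot


actual = [2.0, 4.0, 6.0, 8.0]

perfect = r_squared(actual, actual)                 # every point on y = x
baseline = r_squared(actual, [5.0] * len(actual))   # always predict the mean
worse = r_squared(actual, [8.0, 6.0, 4.0, 2.0])     # worse than the mean

print(perfect, baseline, worse)
```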

Combining R and Python in a notebook

So we have this iffy model in R. What do we want to do next? Well, we could try a lot of different things to improve our model. One thing we might want to do is try something from the Python ecosystem. And so that's where kind of the heart of this webinar begins, which is how are we going to interoperate Python and R inside of this same context?

Well, the first thing that we'll do is create a Python code chunk. And inside of that Python code chunk, one of the things that we're going to want to do right off the bat is import some packages. So I'll go ahead and add the code to bring in these packages. And we can do a quick sanity check here to run the code chunk. And it appears that we have these packages loaded inside of Python.

Now, I can already see in the chat that many of you are asking, how is Python managed? Where are these packages coming from? Those are really great questions. So in the latest version of RStudio, you can use a project or global option to specify what Python interpreters should be used in the context where you're mixing R and Python together. And so for this project, I've created a virtual environment. And I've selected and told RStudio to use that virtual environment.

You can see that RStudio is actually aware of kind of the multitude of Python installs that are available on this server. That's one of those benefits, again, of working on a server is that if Lou and I were collaborating together, we'd have this common understanding of what Python installations are available. So that's where the Python engine is coming from.

The Python packages themselves are coming from a tool called RStudio Package Manager. So in our case, our server is not online. We have some sensitive data that lives in our environment. So we're not able to go reach out to the internet. So instead, Package Manager acts as this intermediary where we can install Python packages from a specifically governed mirror of PyPI.

And so that's what you're looking at here. I can search for packages. I can see information about those packages, how to install them, what they depend on. But something that's really special about this mirror is that it has a safety net that allows me to time travel as well. And so if I ever got into a situation where my Python environment wasn't working, I could go backwards or forwards in time to reinstall packages from a specific point in the past. So that's where these packages are coming from.

Let's go ahead and start writing some code. And this is where I think things get really magical. Because within this Python code chunk inside of my R Markdown document, I actually have access to everything that I've done up until this point in R. And you can actually see the IDE here is helping me autocomplete some of the objects that are attributes of this special R object that are available.

And so what does that mean? Well, I'm going to cheat a little bit and grab some code that I've written ahead of time. We'll just put this inside of our code chunk, and then I can walk you through it. So the first thing I'm doing, because we're going to fit this model in Python, is load some training and test data. But you can see that I'm not starting from scratch. I'm using this magic R object to actually pull in the training and test data that I already created.

And then I can use that as a jumping off point for fitting my model. And so we're going to do a little bit of preprocessing with pandas, and then we're going to use some functions from scikit-learn to fit another type of model. And one of the things that's really interesting, and why we might jump into Python here, is that scikit-learn has native support for time series cross-validation. So remember I said we can't use the future to predict the past? Well, scikit-learn knows how to handle that, even in the case where you're doing a whole bunch of cross-validation fitting. And so that's what we're doing here to fit an SVR model.
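The speaker does not name the scikit-learn utility used here; it is presumably `TimeSeriesSplit`. The sketch below is not scikit-learn's code. It is a standard-library illustration of the expanding-window idea behind time series cross-validation: each fold trains on everything before a cut point and tests on the block right after it, so no fold ever trains on later observations. The function name `time_series_splits` is hypothetical.

```python
def time_series_splits(n_samples, n_splits):
    """Yield (train_indices, test_indices) with an expanding training window."""
    test_size = n_samples // (n_splits + 1)
    if test_size == 0:
        raise ValueError("not enough samples for the requested number of splits")
    first_test_start = n_samples - n_splits * test_size
    for test_start in range(first_test_start, n_samples, test_size):
        train = list(range(test_start))
        test = list(range(test_start, test_start + test_size))
        yield train, test


folds = list(time_series_splits(n_samples=6, n_splits=3))
for train, test in folds:
    print("train:", train, "test:", test)
```

Averaging a score such as R-squared over these folds gives the kind of cross-validated number shown in the demo.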

I can go ahead and run this Python code chunk, and we can take a look at our results. All right, so trainResults.mean, that's the mean of our cross-validation R-squared value. And you can see, it's really bad. Okay, so I'm not a great Python model fitter. But hopefully you get the idea that we can really seamlessly reuse things back and forth.

And you might have noticed a couple of other things that the IDE is doing to help me with this Python development. So one of the things that I briefly mentioned is that autocomplete. And so say we wanted to use a different model from scikit-learn. You can see the IDE is providing me with all the different options that are available, all the attributes and methods of these objects. And the help for those methods, the arguments that they take, are also all going to be available in the IDE with the rich autocompletion that you'd expect.

The other thing that I'll point out is if we look at our environment pane, when we switch to the Python context, we actually got a Python environment explorer as well. And so these objects that I'm creating inside of Python, I can actually see them and explore them inside of the IDE. And I could even preview them as well. So this becomes a really rich way to interactively do your work and debug problems.

And you can see that even things like the filter capability inside of the IDE, as well as sort, work for these Python objects. I can also switch at any time back and forth between R and Python because the two ecosystems are coexisting together inside of this notebook.

Speaking of which, there's one last trick that I want to show you. So I've fit this Python model, and I have some Python predictions. What if I then wanted to go and do some further analysis? Well, you saw how I can reuse R objects in the Python context. But I can do the same thing inside of R. So inside of R, there's this magic object called py that gives me access to everything that I've created so far in the Python space.

And so I can go ahead and use that object to do something like this. If I grab some code from my cheat sheet, place it in this chunk, I'm essentially taking the Python model predictions and plotting them with ggplot2. And you can see a little bit here why our model fit is so bad. It's because we're capturing almost none of the variance that is going on inside of our data.

Publishing and sharing work

All right. So we've fit this model that combines R and Python together inside of a notebook. What do we do now? Well, at RStudio, we're big believers that you should share your data science work early and often. This is the best way for people with domain knowledge or stakeholders to validate that you're on the right path.

And so one way to do that that's really powerful is through publishing. And within RStudio, we can publish. You see this blue icon here. And we can publish to a number of places. But within a team enterprise setting, our recommendation is to publish to RStudio Connect. And so I'll go ahead and do that. You can see the IDE identifies my dependencies. And what happens when I click publish is that a reproducible unit is created here that contains not only my code, but also things like the version of R, the version of Python, the different packages that I need to reliably reproduce this document.

And so I'll go and show you the end result here on RStudio Connect, which is that same rendered notebook. So we have kind of all the information that I have been working up to this point, but in the context where I can easily share it with others. So I can specify who should be able to see this. And because it's that reproducible unit, at any point, I can reliably refresh this document to regenerate the results. Or I could do something like set up a schedule. So my data might change over time, and I might want this notebook to re-render on a regular basis.

Now, you might be thinking, that's great if you're an RStudio user, which probably most of you are. But what about my colleagues on the Python side? Well, the same option is available for sharing your work early and often if you're coming from Python. And so I want to quickly show you what that looks like.

In the RStudio IDE, we clicked that blue Publish button. And inside of Jupyter Notebooks, you can click that blue Publish button as well. But I want to show you a slightly different way that you can quickly and easily share your content. And that's to import content from a system like Git. So what I have here is a Git repository. That Git repository contains all of the example code we've been talking about today. And it's a public repository. So you can go ahead and play around with this as well if you'd like.

What I'm going to do is take this Git repository and tell RStudio Connect to import that content. And so I have different branches that I can choose between. And then this repository has a number of different directories. I'll go ahead and import the Jupyter Notebook. I'll give it a title here. And what happens when we import this content is the same thing that we saw in interactive publishing. The environment this content depends upon is recreated. So I have a reproducible unit of work around my notebook.

And if I go and open up that notebook, I can see the results. So I can share this even with a non-technical user who might be intimidated by the standard Jupyter Notebook interface. Here they just have a really clean HTML document that they can read. And a data scientist can specify who should be able to see this content, but also do those same things I was talking about for scheduling or re-rendering the content on demand.

Putting models into production

So we have our notebooks. We've shared them early and often. That's great. We've gotten this domain feedback. Maybe we iterate a couple of times and really improve that model. What do we do then? How do we ensure that the model is actually being used to generate decisions? That's kind of the key task of a data science team.

Well, there's two ways that we think about this at RStudio. One is to influence how decision makers are making decisions through your model. And a great way to do that is through interactive applications. So many of you might be familiar with tools like Shiny, which allow you to do that in R. But we've worked hard in the last year to ensure that multilingual teams have that same capability. So what you're looking at here is a Dash application, which is an interactive application written in Python.

And what this application is going to allow us to do is help stakeholders understand our model. So they can come in here and click through different stations and see the forecast and the location of the station. So it's a pretty simple app, but hopefully it kind of gets your wheels turning that even if you're a Python data scientist or you work with Python data scientists, they have the same ability to impact decisions by creating interactive content. And we see examples of that with Dash, with Streamlit, with Bokeh, with a wide variety of interactive Python frameworks that are supported through the RStudio stack.

So that's how you might go about influencing a person with your model. But what if you need to use your model to make a whole bunch of automated decisions? Or if you need to influence not a person, but a service? One way that's common to solve that problem is by creating an API.

And again, there's options for doing this in R and Python. So on the R side, we have tools like Plumber. On the Python side, we have tools like Flask. And both of these can be shared just as easily through RStudio Connect. And so I'll just quickly show you what that API might entail. Again, we have all of the same controls. And so we can look at the logs of this Plumber API. We could do things like scale. If we know we're going to have thousands of requests to this API at the same time, we can specify how we want the system to handle those requests.

But at the end of the day, the idea is pretty simple. It's that anyone can come in and place a parameter of your model. In this case, we're specifying the station that we want to make a prediction for and the time horizon that we want to make a prediction for. And then those inputs are passed to our model, and the results are returned. So here we have the results of our forecast. But as you can see, that passing of inputs and outputs is done in a way that machines can understand. So here we have the output in JSON. And just above that, we have the request that our kind of interactive exploration of this API would generate. So other systems or services or software engineers can take advantage of your model at scale.
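The API code itself is not shown in the webinar. As a framework-free sketch of the request and response shape described above (a station and a time horizon in, a JSON forecast out), the Python below uses only the standard library. The `forecast` function is a hypothetical stand-in for a fitted model, and the field names `station`, `horizon`, and `predicted_bikes` are assumptions; a Plumber or Flask API would wrap similar logic behind an HTTP route.

```python
import json


def forecast(station_id, horizon_hours):
    """Hypothetical stand-in for a fitted model: a flat forecast of 5 bikes."""
    return [5 for _ in range(horizon_hours)]


def handle_request(body):
    """Parse JSON inputs, call the model, and return a JSON response string."""
    params = json.loads(body)
    station = int(params["station"])
    horizon = int(params["horizon"])
    response = {
        "station": station,
        "horizon": horizon,
        "predicted_bikes": forecast(station, horizon),
    }
    return json.dumps(response)


reply = handle_request('{"station": 42, "horizon": 3}')
print(reply)
```

Because both sides speak JSON, the caller can be another service, a scheduled job, or an app written in a different language.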

Writing apps and APIs in the RStudio workbench

All right. So we've covered quite a bit of ground. We've talked about how to make these models through notebooks. We've talked about the different ways that you can enhance those models and put them into production. I didn't actually show you the code for either that Dash application or this API. So that's the last thing that I wanted to do is talk a little bit about how you can get started writing this type of code.

And so if I go back to the RStudio IDE, if you're an R user, my recommendation is to just click New File, and you'll see a bunch of options, two of which are Shiny web applications and Plumber APIs. So those are going to get you started with creating either web apps or APIs.

If you're a Python user inside of the RStudio stack, we looked at Jupyter notebooks. But if you want to do this type of coding for APIs or applications, you're probably going to need a little bit more robust editor. And you can use either JupyterLab or VS Code for that purpose. If I open up Visual Studio Code, the kind of last thing I want to show you here is just how easy that deployment of an API or an application is.

So inside of VS Code, again, I'm working off of that shared common Python environment. So it's easy for me to collaborate and automatically get the same Python environment as all my colleagues. I have my code here for a Dash application. And then all I need to do to deploy is use a utility that we've created called rsconnect. So this is just a Python package that you can install really wherever you're writing Python code. And it has commands to help you then take that Python code and wrap it up in that reproducible context.

So for example, I'll do rsconnect deploy dash to a server called dev. And it's going to run through and identify all the dependencies of this application and then give me the link to the deployed app. And if we follow that link, you'll see the exact same bike share application we were looking at before.

So to recap, we covered quite a bit of ground. We created that document using R and Python and some really cool RStudio magic. That document was shared in a reproducible way. We talked about how Jupyter notebook users can do the same type of early and often sharing. And then how we can use that model to impact decisions either through apps or APIs. And finally, how you might go about writing those things using some of the new features in the RStudio workbench. With that, I will hand things over to Lou, who's going to kind of bring us home. And then we'll have the Q&A.

Summary and Q&A

Thank you, Sean. That was great. So while Sean was talking, I was taking a look at a lot of the questions coming in via Slido. A number of questions there have been upvoted, and Sean covered a lot of that material in his demo after some of those questions came in. But we'll get to as many of those as we can.

So Sean showed off a number of different things here. For the data scientist, he showed how you can use these two languages closely together without a lot of overhead. So that data scientists can use each language for their own strengths. Also illustrated some of the different IDEs that can be used, allowing data scientists to use their preferred IDE. Again, making that easy. And we now support in addition to the RStudio IDE, of course, Jupyter and VS Code.

Visual editing of R Markdown is a great advance. Again, making the user experience, the developer experience for data scientists much easier. And to answer one of the questions in the Q&A, that visual markdown is available in the open source version of the RStudio IDE.

So a number of different ways that data scientists can use R and Python to deliver these wow results to the rest of the organization. For the DevOps and IT teams, using these centralized environments makes it easy to support these common tools for both R and Python. And to operationalize both languages without doubling the work.

And by making both of these languages easy to use together, it helps data science leaders really optimize their team for the people, not for an arbitrary choice of a single language. It helps them better enable collaboration within the team and with their stakeholders, and access wider talent pools to hire new data scientists into their team.

And finally, for the business stakeholders in the organization, ultimately they don't care about what the underlying language is. They just want to have reproducible, accurate, understandable data science insights that they can use to help make better decisions. So by sharing this data science work through platforms like Connect, they can access these up-to-date interactive analyses and dashboards, or get the information directly in their email. So they can get the answers they need in order to make better decisions.

All these capabilities are supported by the RStudio team set of products, which together combine to provide a single home for R and Python data science teams. Again, RStudio Server Pro is the centralized environment allowing data scientists to use R or Python to analyze data and create these data products. RStudio Connect is a platform to publish the results to make them available to business users and other stakeholders using R or Python based data science products. And RStudio Package Manager to manage open source packages for both R and Python.

One of the questions that I saw in the Q&A was a question of expressing pain around how difficult it is to manage packages in the Python ecosystem. And we've recently added support for managing packages from PyPI to RStudio Package Manager to help address that exact pain point. And I'll ask Sean to comment on that in the Q&A section.

RStudio is, of course, used by millions of people every week through our open source software: things like the IDE, the Tidyverse, and Shiny, critical open source applications that we create as part of our open source mission. We're also used by thousands of active commercial software customers, including over half of the Fortune 100 and many well-known brands such as the ones we see here.

We also have been really gratified to hear from our customers via trustradius.com. So if you are an RStudio user, we encourage you to go to TrustRadius, check out the RStudio profile, read some of the reviews that people have left there, and add your own review. We read every single one of these and try to respond, and these are certainly one of the ways that we hear from our users.

We've gotten great feedback from our customers on combining R and Python in a single platform and how it helps them collaborate within their team. It allows these teams, as the second reviewer says, to make use of their preferred language for data analysis, so that they can create and publish products via RStudio Connect using both R and Python to share with their internal clients and stakeholders, as the third reviewer shows here.

Now we've talked a lot along the way about our pro products but I want to emphasize that our core mission is to engage and support the R and Python community and we do that in a number of different ways. The most important of course is creating the open source software that our users use every week but there are a number of different ways we do it. We support the RStudio community site allowing R users and Python users to gather and ask each other questions and get answers to those questions. That's a great resource.

We do our annual conference. This year it was a virtual conference under current circumstances, but that was just a couple of weeks ago and we were very gratified by the engagement there. Check out our blog; there's a link there to all the videos from RStudio Global, with many different speakers from all around the world. Those are all free to watch.

Our education team is focused on supporting R education. We do a lot of train-the-trainer work, providing training materials, etc. So if you're interested in being a certified RStudio R trainer, check out our education page.

We're also supporters of the R Consortium, a multi-vendor group to support and advance the infrastructure around the R language, as well as a platinum sponsor of NumFOCUS, which provides tremendous support for the Python ecosystem among other projects. And then finally, Ursa Labs. We've been a supporter of Ursa Labs from the beginning. Ursa Labs is devoted to developing cross-language capabilities, such as using the Apache Arrow project to provide access to those capabilities from both R and Python.

And it's important to emphasize how our open source and pro products tie together. Of course, it's our core mission as I said to contribute open source software to the community. And we spend over half of our engineering resources creating this free and open source software.

As the data science community adopts open source software, this drives adoption within larger enterprises and commercial customers. These commercial customers in turn buy our pro products, which are focused on helping scale out and operationalize open source data science. Those purchases provide RStudio the funds to sustain our ongoing open source work. We call this idea the virtuous cycle: we support our mission to deliver free and open source software to the community by selling the pro software to the enterprise companies that need those features.

So if you'd like some more information, we have a number of different resources. Again, these slides and the recording will be sent out within a few days after the webinar. rstudio.com/python is your one central portal to get to a lot of this information.

We also did a blog post recently recapping all the Python-related features we added in both our open source and pro products over the last year, so I encourage you to take a look at this. If you'd like to set up a time to talk to us one on one, you can use this URL here, rstd.io/r_and_python, to learn more, set up a meeting, and get some answers.

Now, going through the Q&A, the most popular question was: as an R user, how can I learn Python? What would you recommend to experienced R users? I did a quick poll of our education team, and a couple of books floated to the top: Python for data analysis or Python data science. Both of these books were recommended by our education team as being more data first as opposed to programming first.

And I would add to that, Lou: as part of this webinar, afterwards we'll be sharing information on the RStudio Community page. The rstd.io/rpyqa link, the link that is currently bringing you to Slido with all the questions, will be redirected to that community thread. We'd actually love for all of you to give input to answer that question as well, because there's a lot of diversity in how people learn.

We know the people in these communities come from lots of diverse backgrounds, so if you have something that's worked really well, I would recommend replying to that community thread. We'd love to kind of open source the answer to that question beyond us at RStudio.

Yeah, absolutely. I think one of the most common questions was: what parts of that demo are available on the open source side, and what parts are part of the professional products? So apologies if I didn't do quite enough signposting there to delineate.

Essentially everything that you saw in the RStudio IDE is open source: the visual editor for R Markdown documents, the ability to combine R and Python inside of an R Markdown document, selecting what Python interpreter to use, the Python objects in the environment pane, even the Python REPL. Those are all going to be in the open source desktop IDE, regardless of what version you use.
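As a small illustration of the interpreter selection mentioned here, the following sketch could be run in a Python chunk or in the Python REPL to confirm which interpreter is actually active. It uses only the Python standard library; the function name `describe_interpreter` is hypothetical and is not part of RStudio or reticulate.

```python
import platform
import sys


def describe_interpreter():
    """Return basic facts about the Python interpreter running this code."""
    return {
        "executable": sys.executable,          # path to the interpreter binary
        "version": platform.python_version(),  # version string of this interpreter
        "prefix": sys.prefix,                  # root of the active environment
    }


if __name__ == "__main__":
    # Print one line per field so the active interpreter is easy to spot.
    for key, value in describe_interpreter().items():
        print(f"{key}: {value}")
```

If the reported executable is not the environment you expected, that is a hint to revisit the interpreter selection before running the rest of the document.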

And in fact, I would encourage folks to look at the reticulate website, which talks a bit more about some of the options that I didn't dig into for combining R and Python in that open source way. One of the questions asked: if I just have a Python script, can I use that in RStudio? The answer is absolutely. That's something that I didn't show, but it is available in the open source IDE. So I'd encourage folks to go there.
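To make the standalone-script case concrete, here is a minimal sketch of a plain Python file of the kind that could be opened and run in the IDE. The file name `summary_stats.py` and the function `summarize` are hypothetical, chosen only for illustration. As an assumption about workflow rather than something shown in the demo, a script like this could also be loaded from R with reticulate's `source_python()`, which makes its functions callable from R.

```python
# summary_stats.py (hypothetical file name, for illustration only)
from statistics import mean, median


def summarize(values):
    """Return the count, mean, and median of a non-empty list of numbers."""
    if not values:
        raise ValueError("values must not be empty")
    return {
        "n": len(values),
        "mean": mean(values),
        "median": median(values),
    }


if __name__ == "__main__":
    # Runs when the file is executed as a script, not when it is imported.
    print(summarize([2, 4, 4, 10]))
```

Keeping the logic inside a function, rather than at the top level of the script, is what makes it reusable from another language or another script.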