Simon Couch | tidymodels/stacks: A Grammar for Stacked Ensemble Modeling | RStudio

Full title: tidymodels/stacks, Or, In Preparation for Pesto: A Grammar for Stacked Ensemble Modeling

Through a community survey conducted over the summer, the RStudio tidymodels team learned that users felt the #1 priority for future development in the tidymodels package ecosystem should be ensembling, a statistical modeling technique involving the synthesis of multiple learning algorithms to improve predictive performance. This December, we were delighted to announce the initial release of stacks, a package for tidymodels-aligned ensembling. A particularly statistically-involved pesto recipe will help us get a sense for how the package works and how it advances the tidymodels package ecosystem as a whole.

About Simon: Simon Couch is an R developer and statistics student at Reed College, where he is entering the final semester of his undergraduate degree. He co-authors and maintains R packages including broom, infer, and stacks, leads trainings and workshops as an RStudio-certified tidyverse trainer, and researches in algorithmic data privacy. He interned on the RStudio tidymodels team in summer 2020, and is currently applying to doctoral programs in statistics.


Transcript

This transcript was generated automatically and may contain errors.

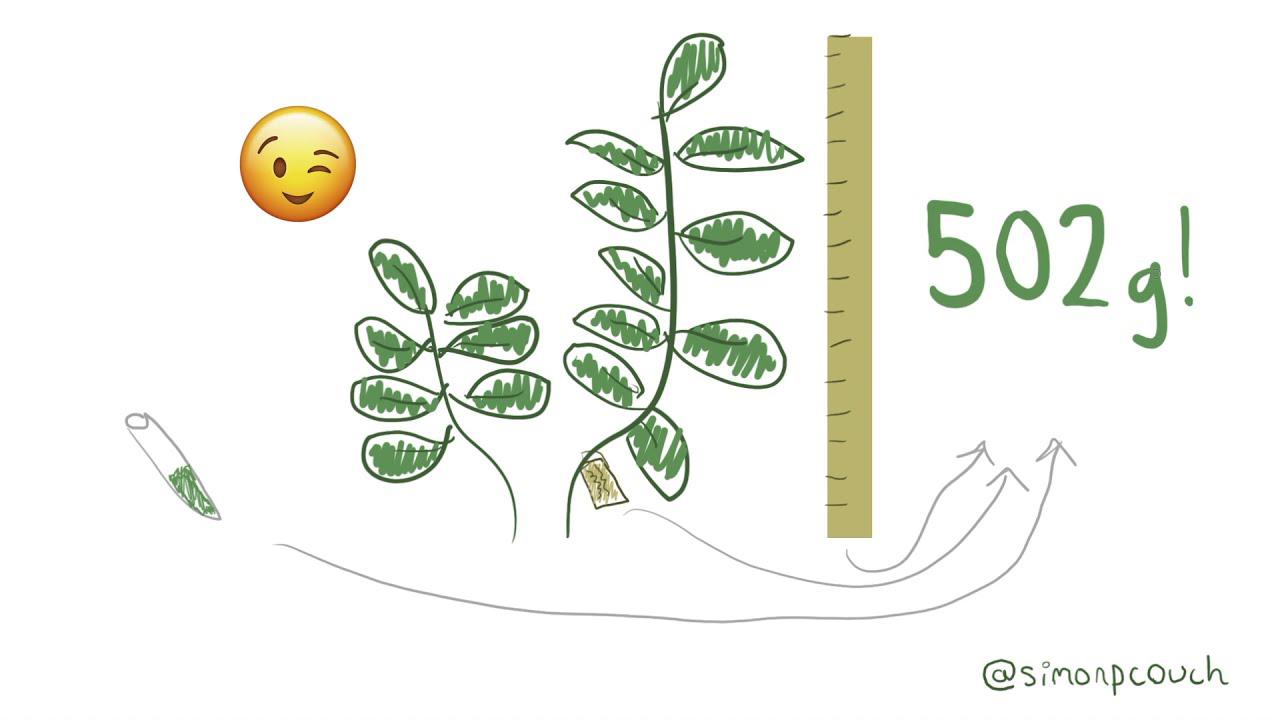

My quarantine fad of choice this last summer was gardening. Among other things, I was working with some basil, and a friend of mine sent me a recipe for pesto. Turns out that they're one of those good-at-cooking types, so instead of talking tablespoons or cups, this recipe was all in grams. Seeing that I don't own a scale, I realized that I had stumbled upon a totally real-world, definitely not contrived need for machine learning. I know how tall the plants are, what species they are, and some other yada yada about the concentration of some sort of chemical, and I need to estimate my basil mass. Thankfully, the previous tenant of 10 years had been collecting measurements on this same plot, so I have some solid data to work with.

So what's our strategy? Maybe there's no need for anything fancy. We could start out with a linear regression. Or maybe I'm the kind of feller that uses KNN in the regression setting. We could run with that. Or maybe I'm feeling trendy and decide that my best move is a neural network. Or... Okay, hear me out. What if I could figure out some way to combine those outputs? I want to come up with some sort of model that generates predictions that are informed by each of these constituent models. This is the premise behind ensembling.

Ensembling is a machine learning technique that has been shown to increase predictive performance in a variety of settings. In a community survey conducted over the summer by the tidymodels team, we learned that users felt that the number one priority for future development in this ecosystem should be ensembling. This December, we are delighted to announce the initial release of stacks, a package for tidymodels-aligned model ensembling.


How stacks works

When you're coding up an ensemble with stacks, most of the code you'll end up writing isn't specific to stacks at all. I'll skip over the code that generates these models, but the gist is that I've created three model specifications here using functionality from the tidymodels core: packages like parsnip, workflows, recipes, and tune. The linear regression spec here just defines one model definition. There aren't any hyperparameters to tune. For this KNN model spec, though, I'm tuning over several possible numbers of neighbors, so this spec actually defines four model definitions. Finally, for this neural network model, I'm tuning over six combinations of numbers of hidden layers and dropout rates, so this spec defines six model definitions.
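The talk skips the model-generating code, so here is a rough sketch of what the tuned KNN piece might look like. It is not the speaker's code. The basil data isn't available, so it assumes the `tree_frogs` data shipped with stacks as a stand-in, and the four neighbor values are made up for illustration. It also assumes the kknn package is installed. `control_stack_grid()` is the stacks helper that asks tune to save the predictions and workflow that stacking needs later.

```r
library(tidymodels)
library(stacks)

# Stand-in data (an assumption): the talk's basil measurements are not
# available, so this uses the tree_frogs data that ships with stacks.
data("tree_frogs", package = "stacks")
frogs <- tree_frogs |>
  filter(!is.na(latency)) |>
  select(-c(clutch, hatched))

# Resamples used to tune the model and, later, to build the data stack.
set.seed(1)
folds <- vfold_cv(frogs, v = 5)

# Preprocessing: dummy-code factors, drop constant columns,
# impute any missing numeric values, and normalize.
knn_rec <- recipe(latency ~ ., data = frogs) |>
  step_dummy(all_nominal_predictors()) |>
  step_zv(all_predictors()) |>
  step_impute_mean(all_numeric_predictors()) |>
  step_normalize(all_numeric_predictors())

# One model specification; neighbors is marked for tuning.
knn_spec <- nearest_neighbor(mode = "regression", neighbors = tune()) |>
  set_engine("kknn")

knn_wflow <- workflow() |>
  add_recipe(knn_rec) |>
  add_model(knn_spec)

# Four hypothetical neighbor values, so one spec yields four model definitions.
knn_res <- tune_grid(
  knn_wflow,
  resamples = folds,
  grid = tibble(neighbors = c(3L, 5L, 7L, 9L)),
  control = control_stack_grid()
)

# Count the distinct model definitions that were evaluated.
n_defs <- n_distinct(collect_metrics(knn_res)$.config)
n_defs
```

The linear regression and neural network pieces would follow the same pattern, with `fit_resamples()` and `control_stack_resamples()` for a spec that has nothing to tune.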

In stacks, each of these eleven model definitions is called a candidate member, or candidate for short. These candidates are the building blocks for an ensemble. The first step to putting together a model stack is the stacks initialization function, stacks(), which works kind of like the ggplot() function from ggplot2. It lays the foundation, called a data stack, for the model to be built on top of.

Now, the first core verb in stacks is add_candidates. It takes in a set of candidates and adds them to the data stack. We can call the function as many times as we need to add all of the candidates. Under the hood, add_candidates collects predictions on a subset of the training data from each candidate and appends them as columns to the data stack. The second core verb is blend_predictions, which fits a regularized linear model on the data stack, predicting the true outcome using the predictions from each of the candidate members. We call the coefficients of this linear model stacking coefficients. A stacking coefficient of zero means that the predictions for the relevant candidate will not ultimately influence the model stack's predictions. Candidate members with non-zero stacking coefficients earn the title of members, and we can fit them on the whole training set using the fit_members function.
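Put together, the verbs described here might look something like the sketch below. It is an illustration, not the code from the talk. It assumes the example tuning results bundled with stacks (`reg_res_lr`, `reg_res_svm`, `reg_res_sp`: a linear regression, a support vector machine, and a spline model) in place of the talk's linear regression, KNN, and neural network. It also assumes R 4.1 or later for the native pipe and that the engine packages behind those models (such as kernlab) are installed.

```r
library(stacks)

# Assumption: reg_res_lr, reg_res_svm, and reg_res_sp are example results
# shipped with stacks, standing in for the talk's three model types.
data_st <- stacks() |>
  add_candidates(reg_res_lr) |>
  add_candidates(reg_res_svm) |>
  add_candidates(reg_res_sp)

# The data stack: the true outcome plus one column of
# held-out predictions per candidate member.
data_st

# Blending tunes a penalty over resamples of the data stack,
# so set a seed for reproducibility.
set.seed(1)
model_st <- data_st |>
  blend_predictions() |>  # estimate the stacking coefficients
  fit_members()           # refit the members that kept non-zero coefficients

# Printing the model stack lists the retained members and their weights.
model_st

# The fitted model stack predicts on new data like any other model.
preds <- predict(model_st, tree_frogs_reg_test)
head(preds)
```

Splitting the work into a data stack and a model stack mirrors the narration: adding candidates only gathers predictions, and no ensemble exists until the blending step.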

And that's it. The output of fit_members is ready to predict on new data. Fingers crossed that I'll nail this pesto recipe.