Tidy Data and tidyr -- Pt 2 Intro to Data Wrangling with R and the Tidyverse

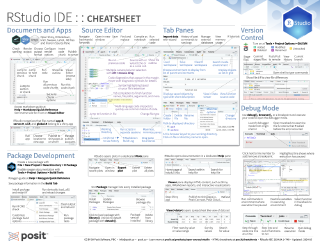

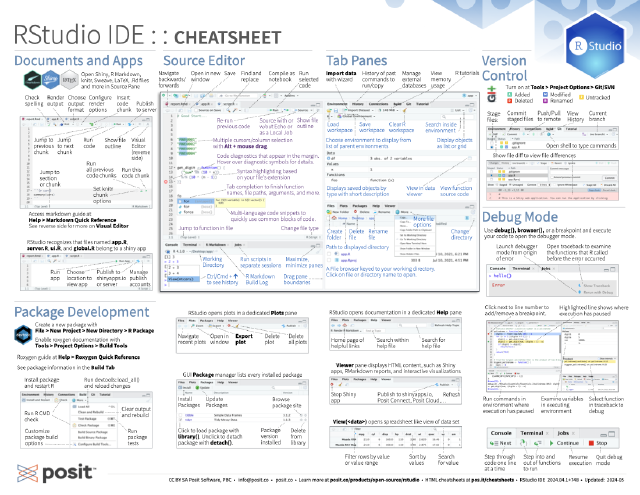

Data wrangling is too often the most time-consuming part of data science and applied statistics. Two tidyverse packages, tidyr and dplyr, help make data manipulation tasks easier. Keep your code clean and clear and reduce the cognitive load required for common but often complex data science tasks. http://tidyr.tidyverse.org/reference/ - http://tidyr.tidyverse.org/reference/gather - http://tidyr.tidyverse.org/reference/spread - http://tidyr.tidyverse.org/reference/unite - http://tidyr.tidyverse.org/reference/separate ---------------- Pt. 1: What is data wrangling? Intro, Motivation, Outline, Setup https://youtu.be/jOd65mR1zfw - /01:44 Intro and what’s covered Ground Rules - /02:40 What’s a tibble - /04:50 Use View - /05:25 The Pipe operator: - /07:20 What do I mean by data wrangling? Pt. 2: Tidy Data and tidyr https://youtu.be/1ELALQlO-yM - 00:48 Goal 1 Making your data suitable for R - 01:40 `tidyr` “Tidy” Data introduced and motivated - 08:10 `tidyr::gather` - 12:30 `tidyr::spread` - 15:23 `tidyr::unite` - 15:23 `tidyr::separate` Pt. 3: Data manipulation tools: `dplyr` https://youtu.be/Zc_ufg4uW4U - 00.40 setup - /02:00 `dplyr::select` - /03:40 `dplyr::filter` - /05:05 `dplyr::mutate` - /07:05 `dplyr::summarise` - /08:30 `dplyr::arrange` - /09:55 Combining these tools with the pipe (Setup for the Grammar of Data Manipulation) - /11:45 `dplyr::group_by` - /15:00 `dplyr::group_by` Pt. 4: Working with Two Datasets: Binds, Set Operations, and Joins https://youtu.be/AuBgYDCg1Cg Combining two datasets together - /00.42 `dplyr::bind_cols` - /01:27 `dplyr::bind_rows` - /01:42 Set operations `dplyr::union`, `dplyr::intersect`, `dplyr::set_diff` - /02:15 joining data `dplyr::left_join`, `dplyr::inner_join`, `dplyr::right_join`, `dplyr::full_join`, ______________________________________________________________ Cheatsheets: https://www.rstudio.com/resources/cheatsheets/ Documentation: `tidyr` docs: tidyr.tidyverse.org/reference/ - `tidyr` vignette: https://cran.r-project.org/web/packages/tidyr/vignettes/tidy-data.html `dplyr` docs: http://dplyr.tidyverse.org/reference/ - `dplyr` one-table vignette: https://cran.r-project.org/web/packages/dplyr/vignettes/dplyr.html - `dplyr` two-table (join operations) vignette: https://cran.r-project.org/web/packages/dplyr/vignettes/two-table.html ______________________________________________________________

image: thumbnail.jpg

Transcript#

This transcript was generated automatically and may contain errors.

Hi, I'm Garrett Grohlman, a professional educator at RStudio and the author of two books on R. I've put together these videos to teach you some of the main ideas of doing data wrangling with R and the Tidyverse. If you're not familiar with the Tidyverse, it's a collection of R packages that are designed to help you do data science.

In this series of short videos, we will walk through some of the most useful tools for wrangling your data with R. Also, keep in mind that you can skip around these videos to the tool that you want by clicking the jump to links in the video description.

What is tidy data?

Normally when you wrangle your data, you have two goals. First, you might need to make your data set suitable to a particular piece of software. For example, we're going to need to make our data suitable to R because that's what we're using. And then second, you can actually reveal information by changing the format and the structure of your data.

Let's start with this first goal, making our data suitable for R. So your data sets can come in many different formats, but there's one that R definitely prefers more than the others. And I have some toy data sets in this package, which I built on GitHub. You can download it if you want to use these data sets. It's completely not necessary. I'll be using these and you can just sit back and watch. But should you want to install this package, first install the DevTools package, which is on Crayon, and then use the install GitHub command in DevTools to install this from the link rstudio-edawr.

Inside this data frame, inside this package, I have some data frames that are all fairly small, but demonstrate the sort of layout and formatting issues that you might encounter with data in R.

For example, here are three data frames from the package. One's some data about hurricanes in the Atlantic Ocean. One's some cases of a disease, in this case TB, in different countries in different years. And one's some data about pollution. And we could go through these three data frames and try to analyze what they contain. In particular, we could look at what variables are in each of the data frames.

So in the storms data frame, it's pretty straightforward. We have a variable that talks about the names of each of our storms. A variable that has something to do with the wind. It's actually the maximum wind speed recorded for each storm. A variable that records the pressure of each storm and the date on which these measurements were made. So there's four variables in that data frame.

If we turn our attention to the cases data frame, we'll see that we have a variable that has some sort of country code. So we see France, Deutschland, and the United States there. We have a variable that lists different years. 2011, 2012, 2013. And we have a variable that's left over that's something like a frequency or a count. Or you might just call it N. And that's here.

And if you compare cases to storms, you can see already that we have the difference between the two data frames. They store the values of their variables in different ways. In storms, each variable is put in its own column. In cases, one variable's in a field of cells, one's across the column headers, and one's in its own column.

When we get to pollution, we see a different format still. We have a variable that's different city names. And then it looks like we have a variable that describes particle size and a variable that describes some sort of amount. But I think you can also argue, and this is what I would argue, that we actually have a variable which is the amount of large particles that each of these cities has in its air and a second variable which is the amount of small particles that each city has in its air. And if you wanted to compute some number for each city, like the ratio of large to small particles, then you'd have to treat these things as separate variables to do math with them.

So we could look at this data frame as having three variables that are structured in a different way. So this is what I mean by data sets come in many different formats.

But if you looked at how you'd pull the values of these different variables out of these data frames, you'll see that one of these formats is much nicer with R code than others. In the storms data frame, you pull out each variable using the same syntax. The name of the data frame, dollar sign, the name of the column that you want. But if you want to pull out the values of, say, the count variable from cases, you'd actually have to extract a bunch of cells and paste them together in some way. Or the names of the columns would come together.

When you move to pollution, you have to do something similar. And this is bad because when you write your code, you want to be able to make it as generalizable and as automated as possible. And if you have to stop and look at the data set that you're working with, and then think about how to extract the values of the variable you want within that data set, well, then it's going to be really hard to write a piece of code that will work with other data sets as well. And the chance for making an error will be much greater.

So just due to the way R works, its syntax structure and everything, it's much easier to work with your data if each variable is in its own column. But there's other advantages as well.

If we look at the storms data frame and consider making some sort of new variable, in this case the ratio of pressure to wind, meteorologically I don't think that's a meaningful variable, but this is similar to things you do with your own data. You'd use some math like this in R, and what that code would do is it would first extract the pressure variable storms, and then it would extract the wind variable, and then divide the two. And the way R operates is it's going to do element-wise operations. So instead of doing some sort of linear algebra thing here, it's just going to pair up the first value of each vector, the second value, third value, and so on. And within those elements it will do the task of division.

And so we get a vector that has, at the end, just as many values as the original vectors. Now this is great if you put your data in such a way that each variable is in its own column, and then each row contains a single observation. Because what element-wise operations will do is it will make sure that the values of one observation are only paired with other values from the same observation. Which as a data scientist is just what you want.

We wouldn't want to divide the pressure of Alberto by the wind speed of Alex. That's really not a meaning for the quantity. So we want to make sure we keep the pressure of Alberto with the wind of Alberto, and the pressure of Alex with the wind of Alex, and so on. Well, the structure of the storms data frame makes that happen automatically. It's taking advantage of R's element-wise operations.

So that's sort of a roundabout way to say that there's one structure that just works well with the natural quirks of R, and if you put your data into that structure before you begin to analyze your data, you'll have a much quicker, more efficient experience when you work with that data. And I'm going to call that data structure tidy data.

And I'm going to call that data structure tidy data.

This is a term invented by or popularized by Hadley, the author of tidyr and dplyr. And what it amounts to is you take your data, and you make sure that each variable is saved in its own column, which we saw storms did. Make sure each observation is saved in its own row. I'm not going to go into what an observation is here, but it's both obvious and puzzling at the same time. And then finally, you want to put basically all the data that you plan to work with in the same data table before you start working with it. Otherwise, you'll be recombining your data every time you make some sort of adjustment to it.

So if you make your data tidy, with variables and columns, observations and rows, and the type of data in its own table, it will be easy to access your variables, and you'll automatically preserve observations as you do things to your data. So that's sort of the goal. tidyr is the means to get there. The package that picks up where reshape2 left off, if you are a user of reshape or reshape2, and tidyr has two main functions, gather and spread, that do similar things as cast and melt if you're coming from the reshape world. And then there's several other functions we'll look at in there. But these are the main ones, and let's take a peek at them.

The gather function

So this is the cases data frame. This is how the variables were distributed. What I'd like you to do, and just for 30 seconds, because I can't really check in with you guys or communicate with you, imagine how this data frame would look like if you restructured it to be similar to storms, if it was tidy and had the variables country, year, and end.

I think if you tried to scribble this out on a sheet of paper or something, it would make it much easier to follow what gather does, which we'll look at in a second.

Alright, so hopefully you had a chance to imagine what this data would look like in a different format. And this is what I propose this dataset would look like in a tidy format. We want to keep all of the information in the dataset, and we want to keep the variables that are there and the values that are there. We just want to restructure them so the variables each appear in their own column.

And we know that the columns we want to end up with are something like country, year, and end. And we can also look at the old dataset to determine what values need to go in which rows of the new dataset. We need to have some combination of France, the year 2011, and the end value for that combination needs to be 7,000. We also need a combination of Germany in 2011 with its own value. And basically we need to port over all the values in this field of cells into our new data frame. And when we're done, our data frame will look like this. So this is a tidy version of the case's data frame. Now it's very easy to pull out each of these variables by selecting the column name.

And we can do this sort of thing with the gather function. And before we do it, we'll want to develop one piece of vocabulary.

So if you look at the output, you see it basically took the right-hand side of this data frame and turned it into two columns. The first column is a key column. These are the names that these cells had in the previous data frame, the column names. And we'll call the second column a value column. So these are actual values that appeared in that field of cells. And the idea of a key-value pair is actually pretty common. So we're going to use gather to take cells and turn it into just two columns, a key column and a value column.

This is how the function works. This function comes with the tidyr library. And you first give it the name of the data frame that you want to clean up. And then you give it the name that you want to use for the key column that results. And the name that you'll use for the value column. So we happen to be moving column names that were years into its own key column. And cell values that were n for frequency into their own column. But, you know, gather and tidyr can't know what those things actually stand for. So here we get to tell them what to name the new columns.

And then finally we just tell gather which of the columns we want it to use when it builds those final two columns. You may remember in the cases data frame, the first column was already tidy. The countries were already in their own column. So we don't include one in this index here. We leave one be. We just include two through four. Those were the columns that were not tidy. When you do this, your data will go from here to there.

So at this point, if you downloaded the EDAR package, you can load it. And you'll see that, you know, the storms data sets in there. The cases data sets in there. Actually, all the data sets on that cheat sheet are in there. And all the data sets will go over today. And so if I took the code here, I can run an R and verify. That gather comes from tidy.

That's a tidyr library. Now you can see that we're going from our rectangular data frame, you know, our table, to our tidy data frame here.

The spread function

Okay, so this is our pollutions data set. And it has three variables distributed like this. We can make this data set tidy with a column called city, a column called large, and a column called small. Before we do, try to picture in your mind what that would look like. Which will help you understand the goal we're working towards in the next few slides.

So the cases data frame look like rectangular data. That's the sort of data that you'll find very often when you only have a few variables and you want to display them in a format that fits in a paper pretty well. I don't know what to call this sort of data, but it exists quite often as well. And if you can switch this sort of data and rectangular data, you pretty much have full control over the format of your data frame.

So here's the pollution data set as it is. And we want to make it a data frame that has these columns in it. And just like before, it's only a matter of recreating the combinations that appear in the original data frame in our new data frame. And when we do that, this is what the result looks like. See, it's tidy. It's more rectangular than the previous data frame. There's fewer rows. We've removed some redundancy in here. Basically, we've made something that looks more like the cases data frame from before.

When we did this operation, we again were working, you could think of it as working with a key column and a value column. The function that does this operation is called spread. And what it does is it looks at the original data set and you give it a column to use as a key column and a column to use as a value column. And it'll use those columns to recreate a field of cells. But the way you call spread is, the idea would be that the field of cells you recreate will be a tidy field of cells.

The syntax for spread is, you give it the data frame you want to reshape. You give it the name of the column to use as the key column in the original data. And the name of the column to use as the value column in the original data. Here, that's size and amount. And then it makes a transition for you. You could think of spread as taking data that's in a key value column format and putting it into a field of cells format or a rectangular table format. Whatever vocabulary you like. And you could think of gather as doing the opposite.

And by going back and forth between gather and spread, you could actually move values into column names, out of column names, variables into a rectangular format, a tidy format. And since you don't have to apply spread or apply gather to the same columns each time, just by iterating between these two functions, you can take the same data, preserve all the combinations in the data, and make the layout very, very different than it was to begin with.

And since you don't have to apply spread or apply gather to the same columns each time, just by iterating between these two functions, you can take the same data, preserve all the combinations in the data, and make the layout very, very different than it was to begin with.

Separate and unite

So the tidyr package also has two other functions that I think are useful, but not always going to be that useful. If you looked at the STORMS dataset, and you're very persnickety, you might notice that it actually has three more variables in there. You could extract a year variable from the date, a month variable, and a day variable. And those variables are all combined into one column here, the date column.

What separate does is it takes a single column and it splits it up into multiple columns, based on some sort of separating character. So this is really easy with dates, because dates normally have some sort of separator between year, month, and day. And if we ran this code above here, we'd get a new data frame where the date column has been split into a year, month, and day, three columns.

If I save that as STORMS2, then I could use UNITE, which does the inverse. It takes several columns and puts them together in the same column, adding a separator between the values of the previous columns. So that's a great way to recreate some dates, although in this case those dates would be character strings. You need to reparse them if you want them to behave as dates in R, but that's another story.

So just to recap about the tidyr package, it primarily works with the layout of your data and reshapes the layout. You can use the gather function and the spread function to change the shapes back and forth. They're complementary, and then you can use separate and unite to split up and unite columns. They're also complementary. This package is designed basically to get data into that tidy format, which is the most efficient way to work with it in R.

Thanks for checking out our data wrangling video series. This is just one video in a larger set of resources on data wrangling, so here are links to all of our other videos in the series. And again, have a look at the video description for shortcuts to other parts of the series, as well as other resources we think might be helpful, like cheat sheets and further documentation. We hope you find all of these resources useful, and if you do, as always, we would appreciate a thumbs up or a share. Both go a long way to helping this content reach people who could benefit from it.