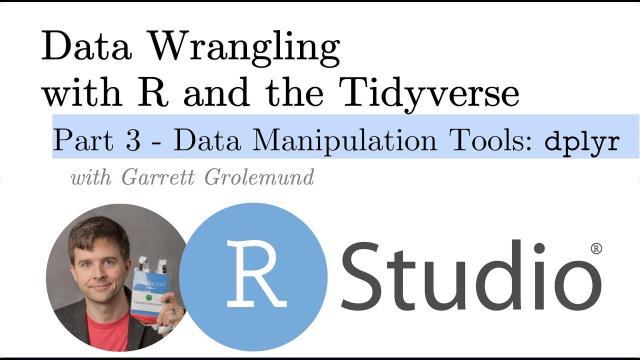

Data Manipulation Tools: dplyr -- Pt 3 Intro to the Grammar of Data Manipulation with R

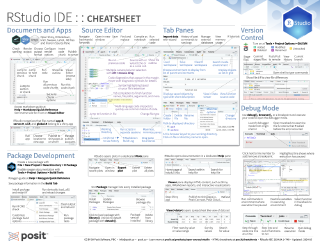

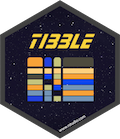

Data wrangling is too often the most time-consuming part of data science and applied statistics. Two tidyverse packages, tidyr and dplyr, help make data manipulation tasks easier. Keep your code clean and clear and reduce the cognitive load required for common but often complex data science tasks. `dplyr` docs: dplyr.tidyverse.org/reference/ - http://dplyr.tidyverse.org/reference/union.html - http://dplyr.tidyverse.org/reference/intersect.html - http://dplyr.tidyverse.org/reference/set_diff.htm ---------------- Pt. 1: What is data wrangling? Intro, Motivation, Outline, Setup https://youtu.be/jOd65mR1zfw - /01:44 Intro and what’s covered Ground Rules - /02:40 What’s a tibble - /04:50 Use View - /05:25 The Pipe operator: - /07:20 What do I mean by data wrangling? Pt. 2: Tidy Data and tidyr https://youtu.be/1ELALQlO-yM - /00:48 Goal 1 Making your data suitable for R - /01:40 `tidyr` “Tidy” Data introduced and motivated - /08:10 `tidyr::gather` - /12:30 `tidyr::spread` - /15:23 `tidyr::unite` - /15:23 `tidyr::separate` Pt. 3: Data manipulation tools: `dplyr` https://youtu.be/Zc_ufg4uW4U - 00.40 setup - 02:00 `dplyr::select` - 03:40 `dplyr::filter` - 05:05 `dplyr::mutate` - 07:05 `dplyr::summarise` - 08:30 `dplyr::arrange` - 09:55 Combining these tools with the pipe (Setup for the Grammar of Data Manipulation) - 11:45 `dplyr::group_by` Pt. 4: Working with Two Datasets: Binds, Set Operations, and Joins https://youtu.be/AuBgYDCg1Cg Combining two datasets together - /00.42 `dplyr::bind_cols` - /01:27 `dplyr::bind_rows` - /01:42 Set operations `dplyr::union`, `dplyr::intersect`, `dplyr::set_diff` - /02:15 joining data `dplyr::left_join`, `dplyr::inner_join`, `dplyr::right_join`, `dplyr::full_join`, ______________________________________________________________ Cheatsheets: https://www.rstudio.com/resources/cheatsheets/ Documentation: `tidyr` docs: tidyr.tidyverse.org/reference/ - `tidyr` vignette: https://cran.r-project.org/web/packages/tidyr/vignettes/tidy-data.html `dplyr` docs: http://dplyr.tidyverse.org/reference/ - `dplyr` one-table vignette: https://cran.r-project.org/web/packages/dplyr/vignettes/dplyr.html - `dplyr` two-table (join operations) vignette: https://cran.r-project.org/web/packages/dplyr/vignettes/two-table.html ______________________________________________________________

image: thumbnail.jpg

Transcript#

This transcript was generated automatically and may contain errors.

Hi, I'm Garrett Grohlman, a professional educator at RStudio and the author of two books on R. I've put together these videos to teach you some of the main ideas of doing data wrangling with R and the tidyverse. If you're not familiar with the tidyverse, it's a collection of R packages that are designed to help you do data science.

In this series of short videos, we will walk through some of the most useful tools for wrangling your data with R. Also, keep in mind that you can skip around these videos to the tool that you want by clicking the jump to links in the video description.

Let's turn our attention now to the dplyr package, which has a different design. Datasets contain more information than they display, and dplyr is designed to help you sort of access that information. So, you'll need to run library dplyr before you use the functions in this package, and you'll also need to install the package, of course, if you want to use it. The main functions that we'll use for manipulating data are listed here in their help pages, but we'll just go through them one at a time.

Also, I don't think I'll have a chance to open up this dataset, but I was planning maybe using this for some examples. The NYC flights 13 package just contains datasets related to flights that went in and out of New York City, and it's a great place to look at things. It's real data, and it's somewhat interesting, especially if you fly into New York City.

Extracting information with select and filter

So when it comes to accessing information in your data frame, it can help to just extract information that's already there to avoid the sort of overwhelming amounts of distraction that the other variables provide. So you can extract variables, you can extract observations, you can derive new variables, and you can change the unit of analysis that the dataset displays, and I'll talk about that when we get there.

dplyr provides four functions, each dedicated to doing one of these things, and let's take a look at them, starting with select. So, select is very simple. It's a way for you to just pull out variables or columns from a data frame. So if I only wanted the storm column and the pressure column from the storm's data frame, then I could select from storms the storm and pressure column. And you could list as many column names or as few column names as you want there, and it'll bring back everything you list. So that's easy enough to do in R, and you might ask, why would you use select to do it? Well, one thing that select does is it provides more ways to specify which columns you want. So you'll know that if you use brackets and integer subsetting, you put a negative sign in front of an integer and that stands in R, for giving you everything except this integer. With select, you can put a negative sign in front of a name, and you'll get back everything except that name.

So here we did everything except the storm column. You could also use the colon notation to get a range of variables. So this would say, giving back the win variable, the date variable, and any variable that appears in between them. So that'd do the same as the previous command. And then there's other functions that you can use inside of select, come with dplyr, that make it even easier. So here's a list of those functions. And you can see they allow you to pull out columns whose names have a certain string in them or a certain regular expression or begin with a certain word or end with a certain word or is in a group of names and so on. So if you have a very large data set, selecting variables out of it might be an important task, and these functions might make it very easy for you to sort of search through the variable namespace in an automated fashion.

Filter is analogous to select, except instead of selecting columns, it brings back rows, or if your data's tidy, these would be observations. And the way filter works is you give it a logical test. So here I'm giving it the test where wind is greater than or equal to 50. What filter will do is it will apply that test to each row in your data frame and give you back just the rows that pass the test. You can combine tests by putting a comma in between tests, and what filter will do is it will combine them as if there was an and statement between them. So these are only the rows where the wind is greater than 50 and the name, the storm name, is in Alberto, Alex, and Allison. If you want to do an or condition, we'll just use the Boolean operator for or and r to combine two tests into a single test. That's the pipe operator. So filter works best if you know how to use logical tests in R, and here's a quick list of the logical tests that exist in R. The table on the right are Boolean operators. These combine two or more logical tests into a single logical test, so you'll get a single true or false, and the ones on the left actually do logical operations. You'll notice at the bottom that is.na and !is.na are in there, which means filter is a great way to winnow out rows that have na values if you combine it with is.na or !is.na.

Deriving new variables with mutate

The mutate function takes your data frame and it returns a copy of the data frame with new variables added to it. Normally these will be variables that you derive from the variables that exist in your data. So before we had sort of the bogus example of calculating a ratio variable from the wind and pressure variable in storms, the information in that ratio variable was in the data to begin with, it just wasn't on display, but you could logically derive it from the data that's there, and this is how you do it with mutate. You run mutate on the data frame you want to add to or change, then you give it the name of the new column you want to make, and you set that equal to some expression that will make the values of the new column. You can make more than one column at the same time, and you can even use a column to make a new column in the same command. Make sure that the first column appears before the columns that rely on it. So here inverse relies on ratio, and since ratio appears first, inverse will be able to find ratio and use it to calculate inverse. This command, mutate, doesn't actually affect your original data frame, it returns a data frame that has the new variables added to it. So if you want to save that data frame and use those variables later, you'll need to assign it to an object.

There are a lot of functions that are useful to use with mutate to make new variables. This is a short list of them. This list, by the way, also appears on that cheat sheet you can download from the RStudio website. And each of these functions has something in common. They all take a vector and return a vector of the same length. So if you want to add a column onto your data frame, that column's going to have to have values for each row in the data frame. When you use these functions, you'll get a set of values that will fit right up with your data frame. You can call these window functions, because they pass the whole vector through a window and the vector changes, but each element is still represented at the end.

Summarizing data

So the last of those four functions we listed was summarize. Summarize takes a data frame and it calculates summary statistics from it. You get back a new data frame, and usually that new data frame is much, much smaller. For example, here I'm summarizing the pollution data frame by calculating the median value of the amount. I only need one value to show the median, so my new data frame only has one row. I'm also calculating the variance. Summarize works similar to mutate. You give it the name of the new columns you want in your new summary data frame, and then you set those names equal to the R code that will build those values. Like mutate, there's functions that seem to work best with summarize, things like the standard statistical estimators, min, mean, variance, standard deviation, median. All these functions are similar to each other in a part way. Unlike the window functions used with mutate, these functions actually all take a vector or a group of numbers and return a single number. So you could think of them as summarizing functions or summary functions. When you use summarize, you'll want to use these summarizing functions to build your summary statistics.

Arranging rows

There's one more function in dplyr that doesn't really add to your data or subtract from it, but you can consider it like a fifth horseman of dplyr, and that's a range. What a range will do is it will rearrange the order of the rows in your data frame based on the value of one of the columns. So here I rearranged the rows in storms based on the value of wind. And if we color-coded this data set, you could see that, it's easier to see, that whereas before the rows were not ordered by wind value, now they go from the row with the smallest wind value to the row with the highest wind value. If you want to reorder them in a different way, in descending order, you can use this helper function DESC, it stands for descend, and just wrap that around the variable whose values you want to be ordered from highest to lowest. So now the highest wind is at the top of the data frame. If you give a range one variable, it will range based on that variable and ties will just occur in the order they appear in the data frame. But if you give it a second or third variable, a range will use that variable as a tiebreaker variable within rows that have the same values of preceding variables. So you can see here that by adding date as a tiebreaker, the order of Alex and Arthur changes, even though Alex and Arthur both had a wind speed of 45, the date of Arthur comes before the date of Alex.

Using the pipe operator

So I mentioned that pipe operator earlier, and this is where it really starts to come into play. You could select data from your data frame, you know, in the regular R way, or you could do the same thing with the pipe operator. So now I'm just piping storms into the select command. Same with filter, same with all these functions. Each of these functions takes the name of the data frame as their first argument, which makes this really convenient. And then if you use the piping syntax, it's very simple to pipe the results after one expression into a second expression. So if you wanted to first filter the rows to extract all the rows for wind speed 50, and then take those results and select two variables from it, you could just add a second pipe operator in the select command at the end of your code. If you then wanted to mutate that data frame or summarize it, well then you could just add a third pipe operator in the next line, and so on and so on. So it becomes very easy to build up this sort of record of what you're doing to transform your data, and it's very easy to add to that record. And then if you read through what we're doing here, I think this way of reading code is actually very transparent. Take storms, then filter it to just the rows for wind speed 50, then select from it storm and pressure. Just read the piping operator as then, and I think it makes your code very legible.

Just read the piping operator as then, and I think it makes your code very legible.

So you should be able to guess what something like this would do. You start with storms, then you mutate it to create a ratio variable, and then you select from just the storm variable and the ratio variable, what would your output look like, and something like this. If you don't like typing percent greater than percent, there's a keyboard shortcut for that operator, it's command shift M on Mac and control shift M on Windows.

Unit of analysis and group by

But let's turn our attention to unit of analysis, and I apologize, I realize I'm not answering people's questions, and actually when I'm playing the slideshow I can't see your questions, but I will get to some of yours at the end. So this is where I think those five functions I mentioned earlier really become interesting. This is our pollution data set. It describes pollution particle amounts in three different cities, and we could calculate the mean amounts, the sum of the amounts, and the number of rows in this data frame and put them in the summary data set. If we look at that summary data set on the side there, well, let's look at pollution first. You'll notice it's a tidy data set, it has variables, it has observations. If you look at the summary data set, it also has variables, in this case three variables, and it does have an observation, just one observation. But each of these things, the mean, the sum, and the end, each of those values describes the same thing, and that should raise the question, what is it that those values describe? And if you think of a mean of 42 or a sum of 252, or even an end of six rows, it becomes pretty clear that what they're describing is this entire data set. It's almost like we've made measurements on a data set, we've made an observation on the data set. And all a data set is, is a grouping of observations or a grouping of rows. So if we make observations on an entire data set, well then we could also make observations on subsets of a data set. We could make an observation on six rows, why not make an observation on two rows, or three observations on two rows. And if we did that, then we could have means, sums, and ends that are keyed into, in this case, different cities, you know, the mean amount for New York, the mean amount for London, the mean amount for Beijing. And you can do this sort of thing, this group by operation, by combining the group by function, which comes from dplyr, with the summarize function that comes from dplyr.

Here's how it works. If you run group by on the data frame, and then you give group by the name of a column or variable, it will add some metadata to the data frame that tells R, or tells dplyr, what the different groups of rows are in the data frame. In this case, it will group the data into three groups, one group for each unique value of the city variable. Now at this point, it doesn't do anything. If you just ran this, that metadata will stick around with your data frame. And dplyr might use it if you run a dplyr command on that group data frame, but in essence, you know, it just shows up basically here. When you look at the table format, it'll tell you if the data's grouped and what's grouped by. In this case, it's grouped by the city variable. The magic happens when you combine a group data frame with summarize, or mutate or filter or whatnot. What dplyr will do is it'll apply summarize in a group by fashion, or mutate in a group by fashion, and so on, where it makes sense. So here, if I first group by city and then summarize to calculate a mean, sum, and end variable, dplyr's gonna split the data into groups conceptually. It's gonna apply the summary to each of the groups separately and put those summaries back together into a final data frame. This will be my result. This is how you can get group statistics or group-wise summaries from your data. You'll notice that the three variables I calculated with summarize, mean, sum, and end are in the final data set, but to those, dplyr has added the city variable. And that variable came from the grouping criteria, and it's necessary for dplyr to add each variable that's involved in the grouping process so we know what the values of mean, sum, and end refer to.

So if we put this process together, this is what it looks like. We take our data, we group it, and we get group-wise summaries. In this case, I'm only taking the mean. The row of the summary that applies the small is just the mean of the small group in this data set. Here I'm grouping by size, before I was grouping by city. The rows don't have to be near each other in a group. Everything will just be grouped together based on common values, wherever those values appear in the data set. Once you have grouped data, you can remove the grouping information with ungroup. That can be reassuring, because sometimes I want metadata just floating around. But also, if you were to summarize a grouped data set, there'll be some ungrouping that occurs as well.

So this data set doesn't actually exist. There is a TB data set in the EDAWR package, but it's much more complicated than this one. This is a simplified version. But you can imagine, you could take this data set that has different countries, different years, different genders, number of cases of TB, although there's more than one case, 99 in Afghanistan. Anyways, we could group it. In this case, we could add more than one variable to the grouping criteria. And what group-by will do is it'll create a separate group for each combination of those variables. So for example, Afghanistan in 1999 will be one group, and Afghanistan in 2000 will be a separate group, and so on. And then we can run summarize on that. And what we'll get is our summary. But when you run summarize on grouped data, summarize will strip off one variable from the grouping criteria. So now that it's made the summary, it's going to strip off the right most variable. In this case, that's year. And what you'll end up with is a data set still grouped, but it's only grouped by country. It's no longer grouped by country and year. Now why do that? Well, if this output here on the right was still grouped by country and year, each row would be a separate group. And at that point, the grouping criteria is not really that helpful. But by stripping off just the last grouping criteria, this will be grouped by country, and we can immediately run summarize on it again, and this time get summary statistics based on the different countries. And now let's go peel off that variable country, and the data is no longer grouped after we've summarized it a few times. This only applies to the output that we get from this command. That's no longer grouped. None of what we're doing is going to affect the actual TB data set. That's just going to exist as it always has in memory.

So this process of grouping by many variables and then summarizing, peeling off one level of grouping criteria as you go is a way to change the unit of analysis that your data looks at. For example, we can use the process to make these four data sets here. They all come from the original TB data, but one of them is really describing combinations of, in this case on the left, country by year by sex, and then we change that to just country by year, and then we change that to just country, and then we change that to the entire data set. And different types of information can become apparent at different levels of grouping here and summarization.

So this process of grouping by many variables and then summarizing, peeling off one level of grouping criteria as you go is a way to change the unit of analysis that your data looks at.

So I know it's been a lot. This is definitely going to be a whirlwind tour, but we're near the end. You can extract variables and observations with Select and Filter. You can rearrange your data with Arrange. You can make new variables with Mutate, and you can make new observations on new units of analysis by combining Group By with Summarize.

Thanks for checking out our data wrangling video series. This is just one video in a larger set of resources on data wrangling, so here are links to all of our other videos in the series. And again, have a look at the video description for shortcuts to other parts of the series, as well as other resources we think might be helpful, like cheat sheets and further documentation. We hope you find all of these resources useful, and if you do, as always, we would appreciate a thumbs up or a share. Both go a long way to helping this content reach people who could benefit from it.