Introduction to Recipes (R Package) | RStudio Webinar - 2017

This is a recording of an RStudio webinar. You can subscribe to receive invitations to future webinars at https://www.rstudio.com/resources/webinars/ . We try to host a couple each month with the goal of furthering the R community's understanding of R and RStudio's capabilities. We are always interested in receiving feedback, so please don't hesitate to comment or reach out with a personal message.

image: thumbnail.jpg

Transcript

This transcript was generated automatically and may contain errors.

Alright, so thanks for coming this morning, or this afternoon, or wherever you're at. So we're going to talk about a new R package called recipes, and its purpose is mostly about creating design matrices and datasets and pre-processing them with various techniques that we'll go into more.

So just to maybe get started, let's talk about a simple linear model that we might use. There's a dataset in caret, so if you were to load caret and load the dataset Sacramento, what you'd find is a dataset where there are data for houses that were sold in Sacramento within a five-day period. So there are things like the sale price of the house, the square footage, number of beds and baths, there's like longitude and latitude and that kind of thing. There's also the type of house, which oddly only has three levels.

But that's all in that dataset, and let's assume that you wanted to build a model to predict the price of a house. So you might think about using lm to do that, and to create this model, you use the lm function, and lm takes a formula. And so we'll be talking about formulas a little bit, and you know, just as a demonstration formula, this is not probably how I would model these data, but you might take the log of the price because it's a very skewed variable, and that's your outcome, and so it's on the left-hand side of the tilde there.

And then on the right-hand side, we have basically a symbolic representation of what our model is going to be. And basically this says, you know, we're going to model log of price as a function of the type of house and its square footage, and that data is in this data frame called Sacramento. And then with lm and formula methods, you can add a little bit here that's a subset command. So this would filter the Sacramento data to look only for where there are three or more bedrooms, if that's the data you want to model on.
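The call described here can be sketched roughly as follows. This is a minimal illustration, not the code from the slides: to keep it self-contained it uses a small made-up data frame called `houses` (a hypothetical stand-in for the Sacramento data, with column names chosen for the example).

```r
# Made-up toy data standing in for the Sacramento housing data
houses <- data.frame(
  price = c(120000, 250000, 310000, 95000, 180000, 420000, 150000, 275000),
  type  = factor(c("Condo", "Residential", "Residential", "Condo",
                   "Multi_Family", "Residential", "Multi_Family", "Residential")),
  sqft  = c(850, 1600, 2100, 700, 1400, 2800, 1200, 1750),
  beds  = c(3, 3, 4, 1, 3, 5, 3, 3)
)

# Outcome (logged inline) on the left of the tilde, predictors on the right;
# subset keeps only the houses with three or more bedrooms
fit <- lm(log(price) ~ type + sqft, data = houses, subset = beds > 2)
coef(fit)
```

The `subset` argument is evaluated inside the data frame, so `beds` does not need to be a predictor in the model.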

And so basically the purpose of the code chunk, if you take it step-by-step, is, you know, the first thing it would do would be to subset the dataset that you're going to model using that command. And then it creates what's called a design matrix, which is, if you think about linear regression, we usually think of, like, you know, Y is equal to X beta plus epsilon, and the X part of that is the predictors, and that's what the old-school people like me would call a design matrix.

So the second thing that that function does is to create a design matrix. Now there's two predictors. There's type and square footage, but you end up getting three model terms. The type variable is a factor, and that factor has three levels. And so when it creates dummy variables for that, it will actually make two columns of that three-level factor that are zero and one for the different types of houses, and then you have the third term for square footage.

So for your design matrix, even though you have two predictors, the non-intercept parts of that design matrix are those three columns. And then the third thing it does would be to log transform the outcome variable, which is price, and then it actually gets around to fitting the linear regression model using lm.fit and some other stuff that we don't normally mess with. But anyway, the first two steps are really the part that creates the design matrix.
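To see the "two predictors, three model terms" point concretely, one option is to call `model.matrix()` directly. The sketch below again uses a small made-up `houses` data frame (hypothetical, not the Sacramento data).

```r
# Made-up toy data with a three-level factor and one numeric predictor
houses <- data.frame(
  price = c(120000, 250000, 310000, 95000, 180000, 420000, 150000, 275000),
  type  = factor(c("Condo", "Residential", "Residential", "Condo",
                   "Multi_Family", "Residential", "Multi_Family", "Residential")),
  sqft  = c(850, 1600, 2100, 700, 1400, 2800, 1200, 1750)
)

# Build the design matrix that lm would construct internally
X <- model.matrix(log(price) ~ type + sqft, data = houses)
colnames(X)
# "(Intercept)" "typeMulti_Family" "typeResidential" "sqft"
```

The three-level factor becomes two 0/1 columns (the first level, Condo, is the reference), and square footage is the third non-intercept column.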

Limitations of the formula method

And again, there aren't really going to be formulas in here, but we usually represent that as X. So I wrote for RStudio a couple of blog posts on the formula method. And so just summarizing, and I have a link to those in the slides, and you can find those. But basically, the great thing about the model formula is it's very expressive. So you can say what your predictor is, what your outcome is.

You can have these inline functions, like logging price will ensure that that happens in the... You don't have to pre-log your data. You can have interaction terms and nesting and all sorts of great things, so they're very expressive. And if you're doing something that's pretty simple, like the housing model I was showing, it's extremely efficient and nice to use.

Under the hood, and this is what the blog post goes into, it actually does some pretty elegant functional programming, which surprises me. I've been looking at it for years, but that's been around for quite a long time in the original S language. So you don't really think of R as a functional programming language, but a core part of that, the model.matrix and lm and all that, is pretty nicely done functionally.

And again, I kind of already mentioned this, you can embed functions in the formula like I did with price here, and the cool thing about that is if we were to log, let's say, square footage, not only can we do that inline, and it would log that variable for the data that you're fitting the model on, when you go to use the predict function on a new data set, again, you don't have to pre-log square footage. You would just give it the column of square footage, and since it's recorded that transformation in the formula, it can actually apply that to whatever you're interested in.
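A small sketch of that behavior, assuming a made-up `houses` data frame and a hypothetical new house: the new data carries raw square footage, and `predict()` reapplies the `log()` recorded in the formula.

```r
# Made-up toy data (not the Sacramento data)
houses <- data.frame(
  price = c(120000, 250000, 310000, 95000, 180000, 420000, 150000, 275000),
  type  = factor(c("Condo", "Residential", "Residential", "Condo",
                   "Multi_Family", "Residential", "Multi_Family", "Residential")),
  sqft  = c(850, 1600, 2100, 700, 1400, 2800, 1200, 1750)
)

# log() is applied inline to both the outcome and a predictor
fit <- lm(log(price) ~ type + log(sqft), data = houses)

# The new house is given on the original scale; no pre-logging needed
new_house <- data.frame(
  type = factor("Condo", levels = levels(houses$type)),
  sqft = 1000
)
pred_log_price <- predict(fit, newdata = new_house)
exp(pred_log_price)  # back-transform to the price scale
```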

There's some limitations to what the function or the formula framework can do, and as I'll talk about in the next few slides, there's some inefficiencies that can occur there. It's mostly, I think, because it's a really amazingly well-designed system, but it was designed where I think nobody envisioned having maybe hundreds of variables in their model, and the sort of large-scale modeling that people can do today, and so I think it sort of suffers a little bit in those instances.

So just some examples of that. Let's say you want to do something a little more complicated with your features. Let's say you have some missing data that you need to impute, and then you're putting that data into a model like a support vector machine or partial least squares or something where you need to center and scale those values, and so you can envision maybe doing something with a formula where you have an outcome, a tilde, and then since you have these inline functions, you might think that, well, I want to impute my variable first based on k-nearest neighbors, and then since I need to center and scale, I can center them and take the output of that and scale it, and that seems fine, but that's problematic on a lot of levels in terms of how the formula infrastructure works.

And one of those being that if you need to impute a bunch of variables, let's say with k-nearest neighbors, you would need to store the original data set, and since the functional programming bit of lm deals with each variable separately, you'd end up storing the same data set many, many times, once for every variable you want to impute. So not only is it problematic just from a programming standpoint, even if this code would work, it's kind of a bad idea because you end up having the same data set included many times.

So this is not a terribly obscure thing to do, but it does limit what you can do in the formula method. Another thing is there are a lot of modeling functions, such as lm, and rpart is a good example, where you don't have a non-formula interface to the model, so you're constrained to always specify your model using a formula, and the problem with that is you can't really recycle things. So if you've already done all the work to generate the design matrix for lm, there's no way to take that and recycle it into rpart.

Another thing that I won't get into too much, but you can find it in the blog post that's linked down here, is that the way the formula method information is stored is pretty inefficient, and it's especially inefficient when you have a large number of variables. So we show in that blog post, based on using rpart or randomForest, that if you have a lot of predictors, like in the thousands, you can spend maybe half the time of that modeling function just creating the design matrix. So it does have some weaknesses for particularly wide datasets.

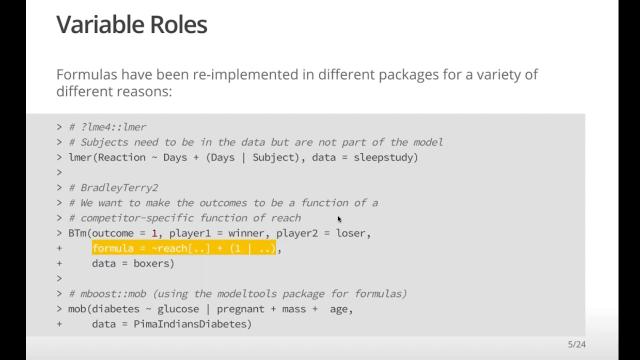

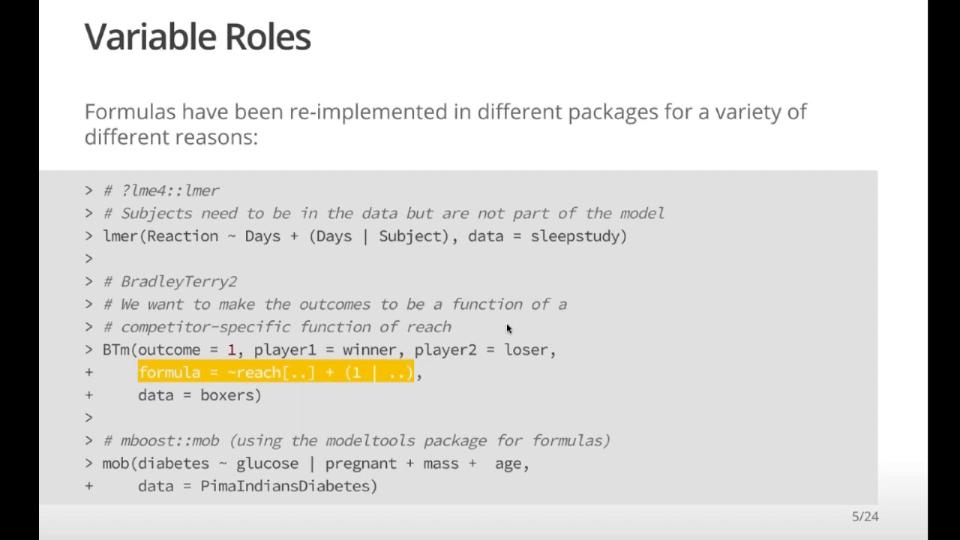

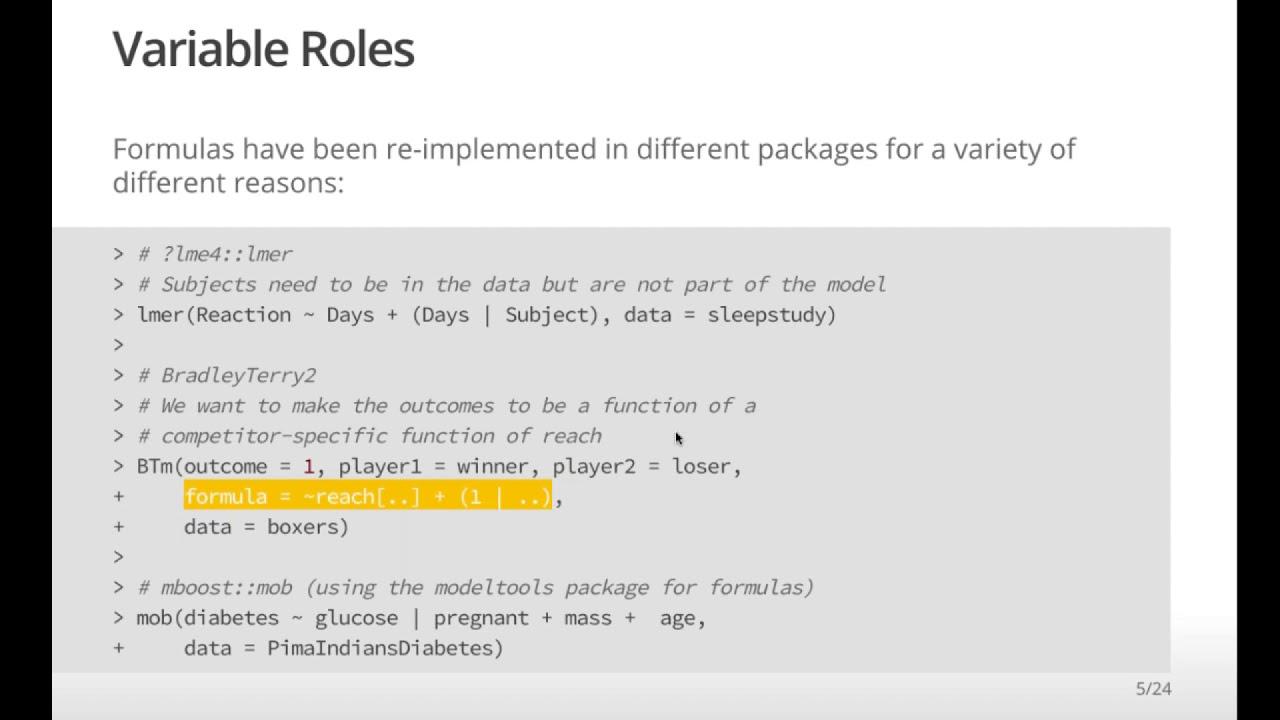

And then another more fundamental aspect of formulas is there's really a limited set of roles that variables in your dataset can take. And again, as we do things that are more complicated beyond linear regression, that becomes a big sort of more philosophical limiting factor of what this formula technology can do.

So as an example of that, let's say you're fitting like a hierarchical model, whether it's using Stan or lme or whatnot, you have variables in your dataset that are going to be included as outcomes and predictors, but then you have data that define the hierarchy. So if you take this example from the lme4 documentation, you have a subject effect. So in the sleep study data, you're modeling this outcome called Reaction as a function of Days.

And I think this model is a random coefficient model that would give you a model with random effects, probably for the intercept and days. And those random effects are connected to the subject specification. And so subject is not a predictor, but it's something you need to carry along, with a role as your ID variable for the methodology.

And so that's the reason that this formula looks different than the others, because that particular package in a way had to sort of rewrite the formula method to make that work because that's what they needed. Another example is something called the BradleyTerry2 package, and that's kind of an interesting package that can model competitions. So like if you had data on boxing, you might be modeling whether one person can win over another and you might have a dozen people.

And so the thing is, you might want to model that as a function of let's say reach. And so in the Bradley-Terry model, it's basically like a souped up logistic regression, but in order to have that formula method work in this instance, they sort of had to invent a new way of doing formulas simply because the current methodology doesn't let you really specify things.

And then another example is there's a partitioning method called mob, and you can imagine it's sort of like a recursive partitioning model like rpart, but it fits linear models in the terminal nodes. And so when you fit this model, you want to specify which variables are used for splitting to make the tree, and then which variables are used in the model in the terminal nodes. And so they wrote a whole new package called modeltools that will allow you to have sort of multiple formulas.

And so again, with the vertical bar here, this is another situation where people needed different roles for these predictors. You have predictors that you want to use for splitting and predictors that you want to use for modeling, and that sort of is not really going to happen in the current standard formula method.

So if you think about maybe other roles, the ones I could think of, you have the traditional predictors and outcomes. Sometimes you have stratification variables, like if you're doing a clinical trial and you want to stratify over sites, that's a separate variable again where you're not necessarily doing direct computations on it, but you need it. For a lot of models, when we want to capture the performance of the model, sometimes that involves other data. So if we're predicting whether someone's going to repay a loan or not, we don't necessarily care about the accuracy of that model. We care about the expected loss that comes from that, and if you're going to compute the expected profit, you're going to need something like the loan amount.

So again, it's another variable that you need to carry along, but it's not really a predictor or an outcome. So you can't really do much with the standard formula for that. There's a variety of other things like faceting or random effects, like I mentioned. Now case weights and offsets are kind of special cases, and sometimes error terms, because there have been some exceptions written to the formula method where you could have like an offset variable and declare that in the formula method.

Those are kind of hard-coded things that are in the base package, and they're not easily extensible to have one for stratification. Well, there is a strata function in the survival package, but my point is if you had an arbitrary role that you're creating, you can't really do that with the current set of formulas.

Introducing the Recipes package

So to me, philosophically, this is the larger problem. You know, all the programming aspects of formulas that we don't like so much, like the inefficiency sometimes can be programmed out, but this is something I think is really baked into the system. So we came up with this idea of something called a recipe, and there's a big food analogy that's going to happen here.

And so basically the idea of a recipe is you can think of a recipe really as a sequence of steps, right? So you're going to make a lasagna, it tells you what the ingredients are, and it says, you know, assemble it like this and then bake the thing. But if you think about the linear model we had earlier, you know, a sequence of steps might be saying, hey, you know, price is our outcome. That's maybe step one, to declare that. And then we might declare the predictors to be type and square footage.

And then another thing we did there in LM was to say, well, we should log transform price. And then the fourth thing we did, we could say that that sequence of steps is, you know, let's convert that type variable into dummy variables. And that kind of defines the procedure that the LM formula method, you know, the formula method with LM actually did when we first showed that code.

And so the thing is a recipe, at least as I've stated here, is really what we're intending to do. It's not actually doing it necessarily. So one of the issues with, you know, model.matrix and all that is the specification of what you want to do is coupled directly with doing it. So that's one of the reasons you can't really recycle things very easily between methodologies.

So you know, what recipes does is basically says, well, let's specify what we want to do. And then we won't actually, we'll delay the execution of that doing until we, you know, say, well, these are the data we want to do it with. So we kind of separate the planning of what we want to do from actually making it happen.

I'll show you, there's a link in the slides. But if you want to install it, it's not on CRAN yet. But there's a GitHub page for it here. And it's got, you know, a lot of examples. And as you can imagine, if you've seen my other packages, there's a boatload of documentation. So right now, if you want to use it, you basically install or load the devtools package, and then use install_github with my GitHub ID and recipes. And that's currently the way you get it, but it really should be on CRAN pretty soon.

How recipes work: specify, prepare, bake

So once you load that, just for convenience, I also load dplyr so we can get the pipe operator and some other things. And so if we wanted to reproduce maybe what lm was doing before, we start off, and I'll show you a different way to create the initial recipe. But if we only have predictors and outcomes, if you don't have any special roles that we want to apply to our data, we can start with basically a symbolic representation of what should be in the model by saying price is, you know, a function of type and square footage.

Now, the data that you use here doesn't have to be the original data. What we do in this particular step is record what variables are in that data frame and what their attributes are, like are they factors or numbers and so on. So this doesn't have to be a large data set if you have a very large data set. With the recipe, basically, you can start adding steps to it. You don't have to use pipes, but I'll do that for convenience here.

So if we want to take the original recipe and add a step that says, hey, log transform this variable, we could do this little bit and that basically modifies the recipe to include a step saying to log that variable called price. And then another thing we can do, to add on to that, is to convert type to dummy variables. And so at that point, what we've done is we've come up with a specification of what the roles are using this formula method like we used before. And then we've described what kind of preprocessing or actions we want to happen for those variables by just adding these steps to it.

And so it's fairly reminiscent, if you've ever used caret, it's sort of in some ways like the preProcess part of caret where you can decide what kind of preprocessing you want with your variables. But again, all this does at this point is it says, well, what is the specification of what I want to do? And all we've said at this point was price is the outcome, type and square footage are predictors, we're going to log price and create dummy variables for type. It doesn't actually do anything but record those actions.
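As a rough sketch of this specification stage, assuming the recipes package is installed and using a made-up `houses` data frame in place of the Sacramento data:

```r
library(recipes)  # also makes the pipe operator available

# Made-up toy data (hypothetical stand-in for the Sacramento data)
houses <- data.frame(
  price = c(120000, 250000, 310000, 95000, 180000, 420000, 150000, 275000),
  type  = factor(c("Condo", "Residential", "Residential", "Condo",
                   "Multi_Family", "Residential", "Multi_Family", "Residential")),
  sqft  = c(850, 1600, 2100, 700, 1400, 2800, 1200, 1750)
)

# Declare roles with a formula, then queue up the preprocessing steps.
# Nothing is computed yet; the recipe only records what should happen.
rec <- recipe(price ~ type + sqft, data = houses) %>%
  step_log(price) %>%
  step_dummy(type)

summary(rec)  # one row per variable with its type and role
```

At this point both steps are untrained: the recipe knows that `type` should become dummy variables but has not yet looked up its levels.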

And then in this whole food analogy, we want to take this recipe and prepare it. What prepare does is basically, you can think of it as training or learning or fitting the preprocessing steps. So, for example, we have dummy variables here for type. So when we do this, we're just saying we want to make dummy variables. When we run prepare, what it actually does is interrogate the data that we've given it and say, OK, what are the levels of type and how exactly am I going to make those dummy variables? So once you start prepare, that's when you're actually doing computations and storing information about the specifics of those actions.

Now, one little thing that I've done here that you wouldn't necessarily have to do is I'm saying retain = TRUE. And what that says is take the data set that I've given it, and when you estimate these actions that you're going to do for the recipe, keep the modified version of that training set around, keep it in this object. And that'll stop us from having to redo computations over and over again. So that's a nice little feature.

And you'll see that I'll mention that in a few minutes when I'm showing how things are sort of cached when we do this. So, again, we've specified what the actions are. We've sort of estimated things that we need to estimate. And then when you do that, it tells you which steps. There's a verbose option. But right now it tells you what steps are being executed as they're executed.

And then when we actually want to get the design matrix to put into lm, the analogy here is we're going to bake that recipe. So we take the object that we created that has all the information we need from the data. And then the new data option here says, well, you know, apply this recipe to this data set. So if you're in the machine learning or the predictive modeling world, you might have a training and a test set. And so you can apply these operations that you estimate from your training set to the test set or to any new samples that you get.

It's kind of like a predict or apply method where you're basically projecting all the preprocessing methods on whatever data set you're interested in. And that will give you your design matrix.
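The specify, prepare, bake sequence might look like the sketch below. One caveat: the talk predates the CRAN release and says "prepare"; in the released recipes package the function is `prep()` and the argument to `bake()` is `new_data`, which is what this sketch assumes. The `houses` data frame is made up for the example.

```r
library(recipes)

# Made-up toy data (hypothetical stand-in for the Sacramento data)
houses <- data.frame(
  price = c(120000, 250000, 310000, 95000, 180000, 420000, 150000, 275000),
  type  = factor(c("Condo", "Residential", "Residential", "Condo",
                   "Multi_Family", "Residential", "Multi_Family", "Residential")),
  sqft  = c(850, 1600, 2100, 700, 1400, 2800, 1200, 1750)
)

# 1. Specify: record roles and steps
rec <- recipe(price ~ type + sqft, data = houses) %>%
  step_log(price) %>%
  step_dummy(type)

# 2. Prepare: estimate what each step needs (here, the levels of type),
#    keeping the processed training set inside the object
rec_trained <- prep(rec, training = houses, retain = TRUE)

# 3. Bake: apply the trained steps to a data set (here the training data,
#    but it could be a test set or new samples)
design <- bake(rec_trained, new_data = houses)
names(design)
```

The baked result holds the logged price, square footage, and two dummy columns for the three-level `type` factor.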

So that's the basic idea behind recipes: you specify what you want, you estimate the things that you need to estimate, and then you apply it at will to whatever data sets you want, which could be the original data set like it was here. So the rest of it is sort of talking about the features and some of the things that you can do.

Variable selectors and roles

Now, one of the things you may have noticed here is, you know, when I created this step for price, you know, I'm not quoting any variables here. And if you're a person who's used dplyr, you can see some similarity here where there's a bunch of dplyr-like things happening. Like we can pipe in information and sort of update objects. And you can see I'm not having to quote variables when I do this.

But the interesting thing about recipes is, unlike dplyr, we might want to specify what variables are used in different steps based on more than their name. So right now, and as I'll show you in a minute, you can specify any number of variables in these steps by using their name or the standard dplyr things like matches or starts_with. So you can use these sorts of functions to declare what variables are used in the steps.

But sometimes we might want to do, let's say, dummy variables and do it on all the variables that I have in the data set that are not numeric, that let's say are factor variables. And so we also have these selectors that you can use that are things like all_numeric. So if we want to have a step, we can say, well, do that for all the numeric predictors that are in my data set or do it on all the predictors or all the outcomes. And you can add and subtract these like you would with dplyr.

So, for example, if you want to do PCA, you can't really do that on factor variables. So you might want to specify do it on all the predictors that are numeric, and then you can do that sort of like you have here with the sequence of selectors. We can always just type the names as comma-delimited unquoted variable names. But if you want to be a little more concise about what you're doing, you can do that.
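A short sketch of selecting variables by type and role rather than by name, using the released package's `prep()`/`bake()` names and a made-up `houses` data frame. Selectors are combined and subtracted with a leading minus, as described.

```r
library(recipes)

# Made-up toy data (hypothetical stand-in for the Sacramento data)
houses <- data.frame(
  price = c(120000, 250000, 310000, 95000, 180000, 420000, 150000, 275000),
  type  = factor(c("Condo", "Residential", "Residential", "Condo",
                   "Multi_Family", "Residential", "Multi_Family", "Residential")),
  sqft  = c(850, 1600, 2100, 700, 1400, 2800, 1200, 1750),
  beds  = c(3, 3, 4, 1, 3, 5, 3, 3)
)

rec <- recipe(price ~ type + sqft + beds, data = houses) %>%
  # every numeric column except the outcome
  step_center(all_numeric(), -all_outcomes()) %>%
  step_scale(all_numeric(), -all_outcomes()) %>%
  # every factor-like column except the outcome
  step_dummy(all_nominal(), -all_outcomes())

out <- bake(prep(rec, training = houses), new_data = houses)
colMeans(out[, c("sqft", "beds")])  # approximately zero after centering
```

Because the outcome is excluded by role, `price` passes through untouched even though it is numeric.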

Now, one interesting aspect of a recipe is you might be doing or you might be saying let's do some operations on variables that don't necessarily exist yet. So, for example, if you're going to discretize predictors or, as you'll see in a little bit, do like PCA feature extraction, you might do PCA and say, give me the number of PCA variables that capture 95 percent of the variability. And that might be four of them or it might be a hundred of them, depending on the data set and the correlation structure and all that.

So what's interesting about recipes is you might be wanting to do operations on variables that actually don't even exist yet, and you don't know how many of them there are. So, you know, you can do things like, you know, matches, you know, PC one through nine, which would capture, you know, the first whatever principal components you have there and then make sure that you also capture the numeric predictors. But maybe your outcome is numeric. You might want to get rid of that one. So you have a really, really rich set of ways to specify variables in these recipes.

Caching and reusing recipe steps

All right, so one cool thing about recipes is you can start with a recipe like the current recipe we have, where the only thing it does is really log the outcome and create dummy variables. But again, let's say you want to center and scale your data and then maybe apply, and it wouldn't really make sense for this particular example, but let's say you want to apply PCA signal extraction. Well, once you have your recipe, whether it's been trained or not, you can just keep adding steps to it and make that a different recipe.

So in a way you can sort of cache where you've gotten so far, save it as another name and add other steps to it. And I'll show an example of this towards the end with some dimension reduction routines. So if we start off with this recipe that we've already done some computations on and we add some more steps, when we go to prepare this recipe, it doesn't need to redo the ones that have already been executed.

So, you know, when we go to prepare the recipe with our training set, step_center is going to need to calculate all the means of the numeric predictors and step_scale will calculate the standard deviations of all the numeric predictors. And then it calculates the loadings and things like that for PCA. Those are the things that prepare will do. It won't necessarily redo the things that you've already done in the recipe.

So when I go to run prepare on this new recipe now, I didn't say new data equal, or I'm sorry, training data equal to Sacramento. And the reason I didn't have to do that is this retain = TRUE. If I don't retain the original data set, then I would need to add that here. But the value of having that sort of retain is when you go to do this new set of processing, you can see when it gives you the log of what it's doing, it puts pre-trained here because it's telling you I don't need to redo those computations because nothing's changed, and then it does all the estimation it needs to do for the remaining steps.
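The reuse pattern described here could be sketched as follows, with the released package's `prep()` in place of "prepare" and a made-up `houses` data frame. This assumes the installed version of recipes still allows adding steps to a recipe that was prepared with `retain = TRUE`; the exact log text may differ between versions.

```r
library(recipes)

# Made-up toy data (hypothetical stand-in for the Sacramento data)
houses <- data.frame(
  price = c(120000, 250000, 310000, 95000, 180000, 420000, 150000, 275000),
  type  = factor(c("Condo", "Residential", "Residential", "Condo",
                   "Multi_Family", "Residential", "Multi_Family", "Residential")),
  sqft  = c(850, 1600, 2100, 700, 1400, 2800, 1200, 1750)
)

# First pass: log the outcome and make dummy variables, keeping the
# processed training set inside the object
rec_trained <- recipe(price ~ type + sqft, data = houses) %>%
  step_log(price) %>%
  step_dummy(type) %>%
  prep(training = houses, retain = TRUE)

# Second pass: add more steps to the already-trained recipe
rec_more <- rec_trained %>%
  step_center(all_predictors()) %>%
  step_scale(all_predictors())

# No training set is supplied; the retained data is used, and only the
# new steps are estimated (earlier ones are reported as pre-trained)
rec_more_trained <- prep(rec_more, verbose = TRUE)
processed <- bake(rec_more_trained, new_data = NULL)  # retained, processed data
```

Saving `rec_trained` under its own name is what makes it possible to branch into several alternative recipes without repeating the early steps.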

So the cool thing about this is, let's say you want to try a bunch of different things. You create a bunch of different, let's say, design matrices to see what works for your model or your data. And let's say, again, you have some expensive imputation step that you need to do before you start doing these things because you have missing data. Well, you can do that imputation step, which might be sort of computationally taxing, but you don't have to redo it every time you create a different design matrix. You can just sort of recycle those computations. And so that saves you a lot of time potentially when you're creating these things.

Available steps

So, you know, this is kind of like the shock and awe slide. Here are the things that I've gotten into recipes so far. You have a lot of basic things like logits and roots and logs and polynomials and all that stuff. Again, anything I'm missing here, whether it's obscure or not, please let me know. There are a bunch of things we can do with encodings now, so we can do, of course, dummy variables, which is sort of a standard thing.

You know, one thing you could do, like in the Sacramento data set, is there's a predictor for city. So you might want to include the actual city as a different dummy variable in the model. But the problem is, you know, even though we have a thousand or so data points, some of these cities only have two or three instances in the data. So what you'd like to be able to do is to dynamically say, well, you know, give me dummy variables. But, you know, if the frequency of any group in that factor is very small, let's say like 1 percent or 5 percent or whatever your tolerance is, can you change the factor levels and dump those into an other level?
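In the released recipes package, pooling of infrequent levels is done by `step_other()`. The sketch below uses a made-up `sales` data frame with hypothetical city counts and an arbitrary 10 percent threshold.

```r
library(recipes)

# Made-up data: two common cities and two that appear only once
sales <- data.frame(
  city = factor(c(rep("SACRAMENTO", 12), rep("ELK_GROVE", 6), "GALT", "WILTON"))
)

# Levels whose training-set frequency is below the threshold are pooled
rec <- recipe(~ city, data = sales) %>%
  step_other(city, threshold = 0.10)

pooled <- bake(prep(rec, training = sales), new_data = sales)
table(pooled$city)
```

A `step_dummy()` call could follow `step_other()` so that the rare cities share a single indicator column.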

One other cool thing I had for an example or a data set I've been working on is let's say you have a predictor that is a date field. You know, it's a date class in R, and you're probably not going to analyze it as a date. You want to analyze whether the month has an effect or the day of the week has an effect. And so we have a step that basically can do all the encodings for dates. If you want to take that single column of your data and make a bunch of features out of that for different ways to represent dates, well, you can do that. It can also give you indicators, if you want, for holidays. So, you know, if you're modeling something that is dependent on whether that date is a holiday or not, you might want to have a predictor in your model for that.
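A minimal sketch of the date encodings using `step_date()` from the released package, on a made-up `sales` data frame with a hypothetical `sold` column. Holiday indicators are handled by a separate step, `step_holiday()`, which is left out here because it needs an additional package.

```r
library(recipes)

# Made-up sale dates
sales <- data.frame(
  sold = as.Date(c("2017-07-03", "2017-07-04", "2017-12-25"))
)

# Derive day-of-week and month features from the single date column
rec <- recipe(~ sold, data = sales) %>%
  step_date(sold, features = c("dow", "month"))

out <- bake(prep(rec, training = sales), new_data = sales)
names(out)
```

The new columns are named after the original column plus the feature, so here they are `sold_dow` and `sold_month`.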

Similar to caret, we have a bunch of filters for, you know, highly correlated predictors and things like that. Imputation right now: k-nearest neighbors, bagged trees, simple mean and mode imputation. Simple normalization and transformation type things. Again, these are very similar to what's in caret. And again, if there's anything missing here, please let me know.

There's a great package called dimRed, D-I-M-R-E-D, and I kind of capitalized on that, where it has a nice interface to a bunch of dimension reduction operations. And so, you know, right now it's really easy for you to do a step that's PCA or kernel PCA. So you might have a ton of variables that you just want to do some plotting with, and you might need to log some things and maybe do some transformations. And so what I'll show you in a minute is you can try a bunch of different dimension reduction techniques without redoing a bunch of computations prior to that.

Non-formula recipe specification

So, you know, you might have said, well, Max, you know, the recipe you had before started off with a formula. I thought we were trying to get away from the formula method, and that would be right. The value of using the formula in the initial recipe is if you only have an outcome, or outcomes, and predictors, you know, that's the most syntactically concise way of doing it, because the situation is not that complicated in terms of roles. Let's say you do have something more complicated. Another way of assembling the recipe is not using a formula.

So you can say, you know, I want to have a recipe and the original data set is here. And then what you can do is you can take all the variables that are in that data set and they'll be available, and then you can start defining their roles, so you can add a role for price being the outcome. And these are not hard-coded. There's not like a pre-specified list that you have to choose from. So if you want to come up with some new type of role for whatever modeling method you're doing, this is basically like free text. You can put in whatever you want.

Now, we do use outcome and predictor a lot in the package. So if those are the traditional roles these things are playing, stick to outcome and stick to predictor. But let's say you want to have the zip code as a stratification variable. So you might want to fit this linear model separately for every zip code. And so you can have that column in your data set come along for the ride and not be converted into dummy variables. And then when you extract that using the bake function, you know what its role is and you know what you can get.
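One way this could look in the released package, where roles on a formula-free recipe are assigned with `update_role()` (the talk's pre-release code may have used a different function name). The `houses` data frame, its `zip` column, and the role name "strata" are all made up for the example.

```r
library(recipes)

# Made-up toy data with a zip code that should only come along for the ride
houses <- data.frame(
  price = c(120000, 250000, 310000, 95000, 180000, 420000, 150000, 275000),
  type  = factor(c("Condo", "Residential", "Residential", "Condo",
                   "Multi_Family", "Residential", "Multi_Family", "Residential")),
  sqft  = c(850, 1600, 2100, 700, 1400, 2800, 1200, 1750),
  zip   = factor(c("z95815", "z95815", "z95823", "z95823",
                   "z95815", "z95823", "z95815", "z95823"))
)

# Start without a formula, then assign roles; role names are free text
rec <- recipe(houses) %>%
  update_role(price, new_role = "outcome") %>%
  update_role(type, sqft, new_role = "predictor") %>%
  update_role(zip, new_role = "strata") %>%
  step_dummy(type)

trained <- prep(rec, training = houses)

# Pull columns back out by role
strata_col <- bake(trained, new_data = houses, has_role("strata"))
predictors <- bake(trained, new_data = houses, all_predictors())
```

Because `step_dummy()` was pointed only at `type`, the zip code is returned as the original factor rather than as indicator columns.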

So as an example, if you wanted to have a little wrapper around linear regression, you know, the underlying function that does the linear model fit is called lm.fit and takes an x argument, which is your design matrix, and it takes a y argument that is your outcome vector. You could have that function be parameterized in terms of a recipe and some data. And then inside that function, you could actually do the prepare bit because the recipe already has the steps all pre-specified, so you don't need to specify them here.

And then when you call lm.fit, you can just get the design matrix by doing bake and then add dplyr selectors for what variables you want to be returned from that call. So if I just want the predictors, my design matrix would basically be this. And then if I only want the outcome, I can do the same thing, you know, use this bake command, but only get the outcome back. And so, you know, again, if you had stratification variables, you could say, you know, the role is strata or whatever you want, to select these things by roles.
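A sketch of such a wrapper, under the assumption that the recipe leaves only numeric predictors. The function name `fit_lm_recipe` and the `houses` data are hypothetical, and `prep()`/`bake()` are the released package's names for the prepare and bake stages.

```r
library(recipes)

# Hypothetical wrapper: fit a linear model from a recipe and a data set
fit_lm_recipe <- function(rec, data) {
  trained <- prep(rec, training = data)
  # Predictors only, as a numeric matrix
  x <- as.matrix(bake(trained, new_data = data, all_predictors()))
  # Outcome only, as a plain vector
  y <- bake(trained, new_data = data, all_outcomes())[[1]]
  # lm.fit does not add an intercept on its own
  lm.fit(x = cbind(intercept = 1, x), y = y)
}

# Made-up toy data (hypothetical stand-in for the Sacramento data)
houses <- data.frame(
  price = c(120000, 250000, 310000, 95000, 180000, 420000, 150000, 275000),
  type  = factor(c("Condo", "Residential", "Residential", "Condo",
                   "Multi_Family", "Residential", "Multi_Family", "Residential")),
  sqft  = c(850, 1600, 2100, 700, 1400, 2800, 1200, 1750)
)

rec <- recipe(price ~ type + sqft, data = houses) %>%
  step_log(price) %>%
  step_dummy(type)

fit <- fit_lm_recipe(rec, houses)
fit$coefficients
```

The fit should agree with `lm(log(price) ~ type + sqft, data = houses)`, since the recipe reproduces the same design matrix and outcome.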

One other nice thing about this is that the steps are all sort of independent of each other. You know, this isn't baked in quite yet. Sorry, I should use another food analogy, but this isn't really written yet. But the cool thing is, if we have some preprocessing method that's available, let's say in scikit-learn, and we have scikit-learn installed on a computer, you know, it wouldn't be hard to write a step that would then connect R to Python, pass the data to Python, have it do the preprocessing there, return all the information it needs to do that technique on new data, and have that come along.

So, you know, R is sort of our default compute engine here. But we can certainly, since these things are sort of like modularized, we can certainly do that with, you know, Weka or TensorFlow or what have you. As long as that stuff is installed when you're in a place where you're using R and you have code to make that connection, we can start writing steps for other packages basically. So that's kind of a cool thing. Not available yet, but it's there, it will be there eventually.

Dimension reduction example

So here's an example in our book, we took some data, some image segmentation data where people took a bunch of images of cells and sometimes the imaging software did a good job of saying where the cell boundary is and other times it didn't. So that's called segmentation of a cell. And so we have a data set where we have a couple thousand rows, we have a couple thousand images and cells, and they've been already classified as being well segmented or poorly segmented.

And they wanted to have a model that basically predicted that based on a bunch of imaging derived qualities of those cells, like how big they are, how they're shaped and so on. And so basically we want to build a model that takes the imaging data and then makes a prediction on whether that cell was well segmented or poorly segmented. Because if we really think strongly it was poorly segmented, we probably want to filter those cells out of our subsequent analysis.

So we can build a model to do that. I'm not really going to do the model building here. What I want to show is that there are 58 imaging predictors in this data set and many of them are highly, highly correlated. So if we want to look at our data and say, OK, are there any outliers here? Are there any weird features in the data? We might use some dimension reduction techniques to figure out how we can project those 58 dimensions down to only a few dimensions and still capture what's going on in that data set.

So I'll use some steps based on dimension reduction to highlight that. So if you hadn't loaded dplyr or caret, you would load those. The data is in the segmentationData object, and in segmentationData the original authors pre-specified what data they used for training and what data they used for testing. So I'm going to use a little piping here and filter to make this training version of the data set.

I'll keep only the rows where Case is equal to Train, and then I'll get rid of two columns in that data set: the data set identifier and the actual number of the cell, like its individual cell ID. And then I do the same thing for the test set, so I have a data set that only has what the original authors designated as test. There's about a thousand cells in each one of these data sets.

So what I'll do is I'll estimate any statistics I need from the training set, like the PCA loadings and things like that, and then apply them to the test set. And then that's what I'll do the graphics on.
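The split described here can be sketched as follows. The object names seg_train and seg_test are assumptions; the column names Case and Cell are the ones in caret's segmentationData.

```r
library(caret)   # provides segmentationData
library(dplyr)

data(segmentationData)

# Keep the rows the original authors used for training, then drop
# the data set identifier (Case) and the cell ID (Cell)
seg_train <- segmentationData %>%
  filter(Case == "Train") %>%
  select(-Case, -Cell)

# Same thing for the rows designated as test
seg_test <- segmentationData %>%
  filter(Case == "Test") %>%
  select(-Case, -Cell)

dim(seg_train)
dim(seg_test)
```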

So that's the data set we'll use. So we'll start off with just a simple recipe. The class variable is a factor that says whether something is well segmented or poorly segmented. And I'll say, you know, everything else in the data set besides that class variable is a potential predictor. So that's the basic recipe where I've declared the roles for everything. And then some of these predictors are extremely skewed. And so that can mess up some of the things like PCA that use variance as an objective function in how they do their computations.

Now, some of these predictors are negative and highly skewed. And so there's this thing called the Yeo-Johnson transformation, which is sort of like the Box-Cox transformation. And it can have the effect of un-skewing your data. And we use this one instead of Box-Cox because Box-Cox requires your data to be positive. It can't be zero; it has to be strictly positive. And some of our data is not like that.

So what we're saying is, for all the predictors in our data set, we're going to estimate and apply this Yeo-Johnson transformation to make them a little less skewed. And then for some of these dimension reduction techniques, we're going to want to center and scale our predictors. So, again, we've only said what we want to do here. We haven't done anything yet. There's three basic steps so far that we've sort of declared. And then when I prepare that recipe, I've just kept the same name. We're going to estimate these things from the training set.
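A sketch of that basic recipe, assuming a current release of recipes, where the function the talk calls prepare() is named prep(). The object names are assumptions, and the data setup is repeated so the block runs on its own.

```r
library(caret)     # provides segmentationData
library(dplyr)
library(recipes)

data(segmentationData)
seg_train <- segmentationData %>%
  filter(Case == "Train") %>%
  select(-Case, -Cell)

# Class is the outcome; everything else is a potential predictor
seg_rec <- recipe(Class ~ ., data = seg_train) %>%
  step_YeoJohnson(all_predictors()) %>%  # reduce skewness
  step_center(all_predictors()) %>%      # subtract training means
  step_scale(all_predictors())           # divide by training sds

# Nothing has been computed yet; prep() estimates the transformation
# parameters, means and standard deviations from the training set
seg_rec_trained <- prep(seg_rec, training = seg_train)
seg_rec_trained
```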

So let's say I want to do principal component analysis. Well, what I can do is I can take this sort of pre-trained recipe and add a step for principal component analysis. And so what this says is, hey, do PCA on all the predictors in the data set. Now, they're all numeric, so I don't need to specify their type. And what this other option says is, you know, give me the number of PCA components that will capture 90 percent of the variability in the predictors. And so, you know, I don't know right now how many components that is, but it will give me enough to capture that information to make sure I have a pretty good representation of my original data.

So, again, what I've done here is I've just added a specification as to what I want, but I haven't actually done PCA yet. So there's a summary function for recipes. And when you run the summary function on it, what it tells you is what the available variables are, and, you know, at this point in the recipe, what their type is, are they numbers or are they factors and things like that. It tells you their role and whether they are variables that have been derived or the original variables. And so you can see right now I have 59 variables. So there's 58 predictors and an outcome, class.

And that's what it is, because I haven't done PCA yet. I haven't transformed this into principal components because I haven't prepared it yet. And so then let's say we do that preparation. We don't have to re-estimate the centering and scaling of these things again. But for this fourth step, when we run the prepare function on it, it computes all the loadings that we need to do PCA. And then if I do the summary method on that, now it's telling me the things that are available in the recipe are these variables called PCA1, PCA2, because I've actually prepared the recipe.

So now that we have the components, we can bake them and get their actual values for the test set. And then if you just do a simple ggplot, you can see, you know, at least using PCA, what kind of separation do I have in the data? I don't really see any outliers here per se. And there's, you know, a fair amount of overlap in the data. So, you know, that gives you a sense of how difficult a modeling problem this is. It doesn't mean it's impossible, but at least from a linear classifier standpoint, you can get an idea that there's some separation, but not complete separation.
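The PCA walk-through can be sketched as below, assuming a current release of recipes (prep() instead of prepare()). Unlike the talk, which adds the PCA step to an already prepared recipe, this sketch prepares the whole recipe in one go to keep the example simple. The component columns are picked by position rather than by name, since the exact names depend on the package version and the number of components.

```r
library(caret)     # provides segmentationData
library(dplyr)
library(recipes)
library(ggplot2)

data(segmentationData)
seg_train <- segmentationData %>%
  filter(Case == "Train") %>% select(-Case, -Cell)
seg_test <- segmentationData %>%
  filter(Case == "Test") %>% select(-Case, -Cell)

pca_rec <- recipe(Class ~ ., data = seg_train) %>%
  step_YeoJohnson(all_predictors()) %>%
  step_center(all_predictors()) %>%
  step_scale(all_predictors()) %>%
  # Keep enough components to capture 90% of the variability
  step_pca(all_predictors(), threshold = 0.9)

summary(pca_rec)      # before prep: 58 predictors plus Class

pca_trained <- prep(pca_rec, training = seg_train)
summary(pca_trained)  # after prep: the components plus Class

# Apply the training-set estimates to the test set
pca_test <- bake(pca_trained, new_data = seg_test)

# Plot the first two components, colored by class
pc_cols <- setdiff(names(pca_test), "Class")[1:2]
ggplot(pca_test, aes(x = .data[[pc_cols[1]]],
                     y = .data[[pc_cols[2]]],
                     color = Class)) +
  geom_point(alpha = 0.5) +
  labs(x = pc_cols[1], y = pc_cols[2])
```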

But you might think, well, you know, there might be some other sort of feature engineering type things I can do. And one of the things is, if you've ever heard of support vector machines, what you can do is you can, let's say, project your original predictors into another higher dimensional space that might be a nonlinear transformation. So you can sort of apply these nonlinear transformations of your data in this thing called kernel principal component analysis. We'll do that transformation and then do PCA on top of the expanded dimensionality of your predictors. And the kernlab package has a way to do that. We have a step for doing that.

So, you know, our PCA recipe utilized that basic recipe that just did the centering and scaling and transformation. And so instead of doing the regular PCA, now we can start with that original recipe and create another one that does our kernel principal component analysis. We have to tell it what kernel to use and some of the parameters, which I've done here. And when we prepare that, you know, this has nothing to do with the PCA analysis we did previously, but it estimates everything it needs for the kernel PCA. We bake that to get the values for the test set. And again, we can just ggplot that away. Here you see slightly different distributions, but I think there's a little bit more separation between the classes by doing this kernel transformation beforehand.
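A rough sketch of the kernel PCA variant. The kernel and parameter values used in the talk are not in the transcript, so the radial basis function kernel, the sigma value and the two components below are arbitrary assumptions. The sketch also assumes a current release of recipes, which provides step_kpca_rbf() and prep(), and that the kernlab package is installed.

```r
library(caret)     # provides segmentationData
library(dplyr)
library(recipes)   # step_kpca_rbf() needs kernlab installed

data(segmentationData)
seg_train <- segmentationData %>%
  filter(Case == "Train") %>% select(-Case, -Cell)
seg_test <- segmentationData %>%
  filter(Case == "Test") %>% select(-Case, -Cell)

kpca_rec <- recipe(Class ~ ., data = seg_train) %>%
  step_YeoJohnson(all_predictors()) %>%
  step_center(all_predictors()) %>%
  step_scale(all_predictors()) %>%
  # Kernel PCA with an RBF kernel; sigma = 0.05 is an arbitrary choice
  step_kpca_rbf(all_predictors(), num_comp = 2, sigma = 0.05)

kpca_trained <- prep(kpca_rec, training = seg_train)

# Component values for the test set, plus the Class column
kpca_test <- bake(kpca_trained, new_data = seg_test)
head(kpca_test)
```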

So, you know, this might be a good sort of feature engineering step to do. You know, you can do like an isomap transformation, things like that. There are many other dimension reduction techniques you can do. A really simple one you can also try: since we know the classes, we can compute sort of the multidimensional class centroids for each class. So we can take a 58 dimensional space. We can find the center of the distribution for the well segmented cells and the center of the distribution for the poorly segmented cells.

And then this step, step_classdist, will compute that. And then when you want to get new predictors, it will actually compute the distance of every individual data point to the centroid of each of the original classes. So it's almost like doing a linear discriminant analysis. But it's sort of a pre-processing step rather than a model.

And so you can add a step for that. Again, use all the predictors. Since you need to know the classes to do the centroids, you need to tell it what class variable to use. And that's this variable here. And these are distances, which tend to be skewed. So let's just log transform those predictors that come out of this classdist step. And so, again, we prepare that. We don't have to redo computations and then we bake them.

The data that comes out of that is we have the original class. You can see the matches selector here. It says, give me all the variables in the computed recipe whose names contain class, whether it's uppercase C or lowercase C. And I have the original class variable. And then the distances to the poorly segmented class centroid and the well segmented class centroid. And it turns out when you do that, I think you get the most separation between these data. You do see some outliers that are induced here. You might want to look into those data points.
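The class-centroid distances can be sketched like this, assuming a current release of recipes. In current releases step_classdist() has a log argument that defaults to TRUE, so this sketch relies on that instead of adding the separate log step described in the talk. The object names are assumptions.

```r
library(caret)     # provides segmentationData
library(dplyr)
library(recipes)

data(segmentationData)
seg_train <- segmentationData %>%
  filter(Case == "Train") %>% select(-Case, -Cell)
seg_test <- segmentationData %>%
  filter(Case == "Test") %>% select(-Case, -Cell)

dist_rec <- recipe(Class ~ ., data = seg_train) %>%
  step_YeoJohnson(all_predictors()) %>%
  step_center(all_predictors()) %>%
  step_scale(all_predictors()) %>%
  # Distance of each cell to each class centroid (logged by default)
  step_classdist(all_predictors(), class = "Class")

dist_trained <- prep(dist_rec, training = seg_train)

# Keep only the columns whose names contain "Class" or "class":
# the original outcome plus one distance column per class
dist_test <- bake(dist_trained, new_data = seg_test,
                  matches("[Cc]lass"))
names(dist_test)
```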

But, you know, what we did here is we did some different feature engineering steps utilizing this basic recipe and just kind of layered them on without having to redo a bunch of computations. So, you know, you can do the same thing with models, where you might then put these design matrices into various models to see what does better or worse for the model that you're trying to use. And hopefully this has illustrated how you can just recycle all these computations, and the sort of simplicity of doing that.

Next steps and integration with caret

So that's the example. Just to give you an idea of the next steps: we have another package that Lionel is working on called tidyselect, and recipes depends on that for some of the selectors. So as soon as that's on CRAN, which should be soon, we'll get recipes on CRAN.

Another thing that comes in handy is, you know, recipes right now, since it's not linked directly to any modeling methods, it's kind of like having one shoe in a way, where, you know, you can get pretty far with it. You can get your design matrices and things like that. But it would be nice to have that integrated into a bunch of models. And at least the first step of doing that is having a couple of methods in caret to do that.

So, you know, if you want to specify your model with some preprocessing, you would use some syntax like this, where you either use the formula method or give it the X and Y data sets and then say which preprocessing you want to use, like, you know, PCA or centering and scaling. What I'm doing right now is creating a new interface, in addition to the ones that are already there, where you can say, I have this recipe, and here's the data you're going to use for training. You don't need to declare what the preprocessing is because it's built into that recipe. So you can start specifying your models in train, at least, which wraps about 200 different types of models, and, you know, use that to both say what the predictors and outcomes are, but also use the big, expanded set of preprocessing techniques that are here compared to what is in caret so far.
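The recipe interface described here did ship in later caret releases, where train() accepts an unprepared recipe plus the training data. The sketch below assumes such a release. The choice of logistic regression (method = "glm"), 5-fold cross-validation and the seed are arbitrary assumptions for illustration.

```r
library(caret)     # provides train() and segmentationData
library(dplyr)
library(recipes)

data(segmentationData)
seg_train <- segmentationData %>%
  filter(Case == "Train") %>% select(-Case, -Cell)
seg_test <- segmentationData %>%
  filter(Case == "Test") %>% select(-Case, -Cell)

# An unprepared recipe: train() estimates the steps itself,
# separately within each resample
seg_rec <- recipe(Class ~ ., data = seg_train) %>%
  step_YeoJohnson(all_predictors()) %>%
  step_center(all_predictors()) %>%
  step_scale(all_predictors()) %>%
  step_pca(all_predictors(), threshold = 0.9)

set.seed(1)
fit <- train(seg_rec, data = seg_train, method = "glm",
             trControl = trainControl(method = "cv", number = 5))
fit

# The recipe is applied to new data before predicting
pred <- predict(fit, newdata = seg_test)
table(pred, seg_test$Class)
```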

And then after that, you know, there's more new modeling packages coming. So this will all integrate with those modeling packages. So that's what's next on board. And thanks for making it this far.

Q&A

OK, let's see, start with the flagged ones. All right, so, is it possible to store and return the results later? Now, if you mean the results in terms of the intermediate steps, so like when we did PCA, we had to compute the loadings. Yeah, sure. I mean, that's basically what it does: when you do the prepare, it computes the loadings in there. You know, they're cached. I mean, they're basically saved to that object so you can extract them. You can use them on future data points and whatnot.

And now when you say, can we store the results, if you're talking about the design matrix? Yeah, I mean, you could use bake on that and save that as an object. So if I'm understanding you, in both of those cases, yes, you can save and return them. That's one of the main things that we do.

Let's see. In the training set, what happens if the variable being turned into a dummy variable is not included? You have to declare that you want to make dummy variables out of factors. So, for example, if I didn't use that step_dummy for the housing type, then when you bake that recipe, it's not going to be modified. So, you know, the formula method, well, 99 percent of the time the formula method will automatically convert factor variables to dummy variables in most of the modeling functions. There are a lot of exceptions, like randomForest, rpart, and a lot of tree-based methods that don't do that, and they don't really tell you they don't do that. But right now, if you want to get dummy variables, you have to use that step_dummy step to make sure that that happens.
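A small sketch of the difference, using a made-up houses data frame and assuming a current release of recipes (prep() rather than the talk's prepare()).

```r
library(recipes)

# Hypothetical toy data
houses <- data.frame(
  price = c(250000, 310000, 180000, 420000, 275000, 199000),
  sqft  = c(1400, 1850, 950, 2600, 1600, 1100),
  type  = factor(c("Residential", "Condo", "Condo",
                   "Residential", "Multi_Family", "Residential"))
)

# Without step_dummy(): type comes back as a factor, untouched
no_dummy <- recipe(price ~ ., data = houses) %>%
  prep(training = houses) %>%
  bake(new_data = houses)
is.factor(no_dummy$type)

# With step_dummy(): type is replaced by indicator columns
with_dummy <- recipe(price ~ ., data = houses) %>%
  step_dummy(type) %>%
  prep(training = houses) %>%
  bake(new_data = houses)
names(with_dummy)
```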

Are there any steps in recipes to select the test/training split? And what about cross-validation? So, no. And I've thought about that quite a bit. The thing is, any steps that you do have to be operations that you would apply to any data set. And so if you think about splitting data, you're going to differentially do things to each data set.

So one thing I thought about including is there's a bunch of subsampling methodologies for when you have class imbalances, like downsampling. And I thought, oh, that would make a great step. I should put that in here. But you don't necessarily want to always downsample. So you might want to downsample your training set. But when you go to make predictions on your test set, you don't want to downsample that. So I've kind of left splitting steps out of there, and things that would filter rows. For the most part, I've done that.

Now, one thing that we're talking about doing, and we're just talking about this right now, I haven't implemented anything, is being able to include dplyr-type filters in steps. So you might, you know, pipe in a step to do centering and scaling. But then you want to, let's say, filter on some variable that's there. So you can imagine adding a filter, which is in dplyr, to a recipe. And there are some of those dplyr operations that I don't see any reason why they wouldn't work here. So there might be ways of doing that in the future.

But I haven't coded them up. Just realize anything you do in a step is something that you're going to always do to whatever data you're applying it to. So there might be some things that you don't want to include in a step.

In terms of cross-validation, funny you should mention that. I mean, I'm like 80 percent done with another R package that I haven't made public yet that will have a bunch of standardized infrastructure for resampling, whether it's cross-validation or time series resampling and things like that. So, you know, it's very much like what modelr does, with maybe some expanded ways of doing things. So in that vignette, and I shouldn't talk about things I haven't released yet, there are examples of how to include recipes in this cross-validation scheme. So it's not out there yet, but you probably won't have to wait too long to see that.

Are there vignettes? Yes. If you go to the recipes repo, and again, there'll be a link in the webinar notes. But if you just go to GitHub and Google recipes, well, Hadley has a set of recipes, like food, actual recipes. You'll probably find that first. But under my topepo GitHub account, if you go to that recipes directory or repo, there's a GitHub IO link there that has I think there's three v