Importing Data Into R | RStudio Webinar - 2016

This is a recording of an RStudio webinar. You can subscribe to receive invitations to future webinars at https://www.rstudio.com/resources/web... . We try to host a couple each month with the goal of furthering the R community's understanding of R and RStudio's capabilities. We are always interested in receiving feedback, so please don't hesitate to comment or reach out with a personal message.

image: thumbnail.jpg

Transcript

This transcript was generated automatically and may contain errors.

We're going to be talking about importing data into R. And with that, these are the topics that I want to cover. I want to start with a brief overview of why data is important and importing it into R. And then we're going to, you know, take a look into different types of data that are interesting to import.

We're going to start with tabular data. This is pretty much the file that you would be used to as importing a CSV, you know, text, flat text files, Excel files, SAS, SPSS. We're going to take a look at hierarchical data, which, you know, is pretty common on the web with things like HTML, JSON, and XML. Afterwards, we're going to jump to relational data, as in relational databases. And finally, I want to give a tease on how to import data from distributed systems. You know, the more common term here is big data, so we're going to do a quick demo for each of these categories. And afterwards, we're going to have a session of questions.

Overview of the data science workflow

All right, well, let's get started with the overview. This is a diagram that shows what a typical data science project looks like. It came from the R for Data Science book, which is currently on pre-sale, I believe, but all the content is available online. And the way that I want to read this diagram is that data importing is pretty important. You really can't have data science if you don't have data. And this is your very first step before you actually clean up the data and start understanding it and before you communicate. So definitely relevant.

And the caveat is that there are so many sources that we can import data from that this webinar is only going to touch the surface of a lot of different concepts. There are going to be more webinars that you can attend and more resources that we can point you to in order to import data into R. But we're going to cover the main ones.

Importing tabular data

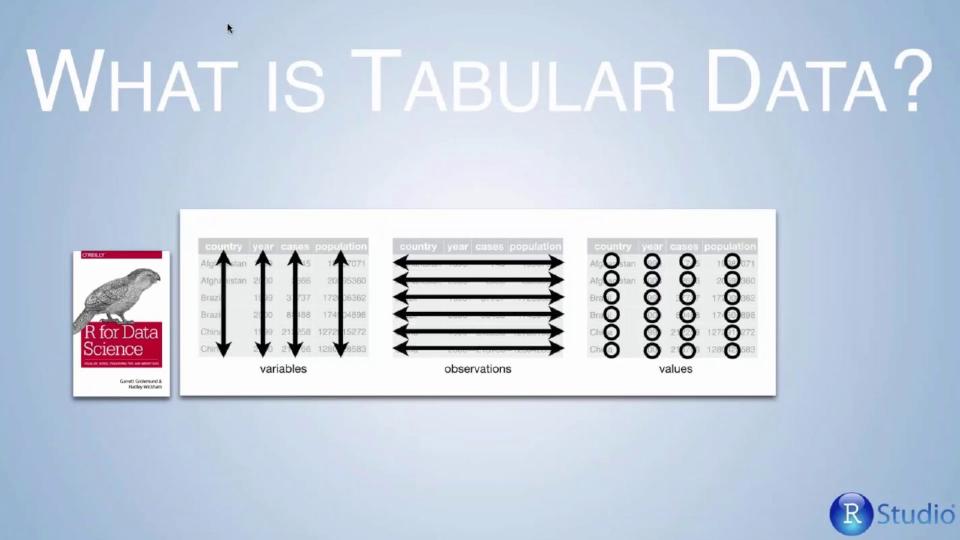

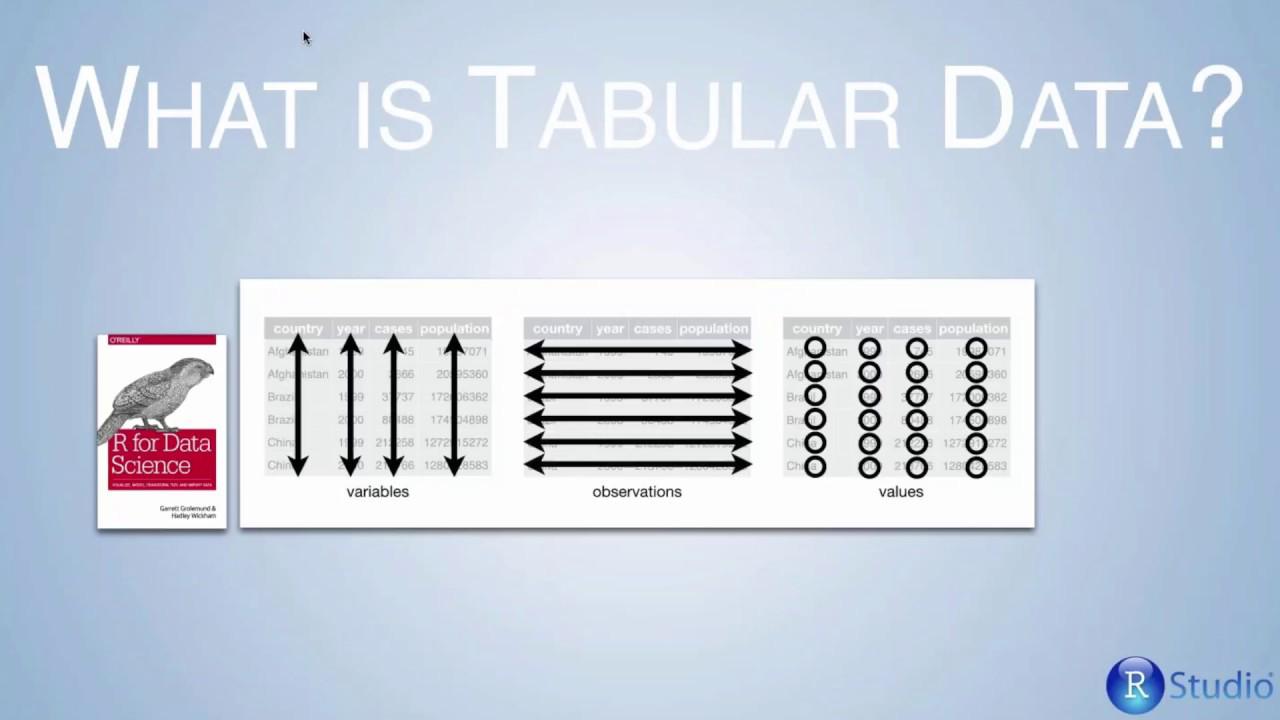

So with that, let's start with importing tabular data. And this might be obvious to a lot of you, but it's good to take a step back and just think about what is tabular data. A lot of times we think that we have tabular data, but we might not. And the structure is very simple. We have a set of variables, right? Each variable is represented in the column and each row represents an observation. Obviously, each cell contains values and we want these values per column to be homogeneous, right? We want them to have the same type.

You know, we want to have integers in each column, if that's an integer column, et cetera. So still, it's obvious, but it's good that while you're looking into importing data, you have this notion of what a good tabular data source looks like. And if it doesn't look like this, you might be looking at a different type of data and you might need to use different tools.

So we're going to be covering a couple of the basic file types that you might be interested in importing. And for that, I'm going to switch to RStudio to run a couple demos.

And we have RStudio and we're going to start by importing a CSV file. The package that we recommend using for importing CSVs and flat files in general is readr. So you can run this command. In the simplest case, you only need a path to your file and then you would be able to view the contents. This file is about two megabytes and we can load it all and get a sense of what it looks like.
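As a rough sketch of that simplest case, the snippet below writes a tiny stand-in CSV to a temporary file and reads it back with `read_csv()`. The file contents and the `applications` name are made up for illustration; in the webinar the path points at one of the provided resource files.

```r
library(readr)

# Hypothetical stand-in for the webinar's CSV file
csv_path <- tempfile(fileext = ".csv")
writeLines(c("id,name",
             "1,alpha",
             "2,beta"), csv_path)

# In the simplest case, the only argument is the path to the file;
# readr guesses the column types from the data
applications <- read_csv(csv_path)

# Inspect the first rows (View(applications) opens the RStudio viewer)
head(applications)
```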

We have a couple other options to import tabular data. For Excel files, we recommend the readxl package. And again, they function in a very similar way. So we can run that with a single parameter, which is just the path to the file, and get the results. And we have a couple other ones here which come from statistical software; I'm just executing the three of them at the same time.

So the simple use cases are very easy to get started with. There's mostly just a path to the file, and each package will try to do inference on the columns and data that is being imported. However, the feature that I want to show is a new improvement that we have in RStudio to make our life easier while importing these data sources. And the reason why this is interesting is that it's not always as easy as just importing a file. Sometimes we want to change column names, sometimes we want to change some of the settings that each package provides to us.

So here, if you were to click in RStudio on the Import Dataset command, you're going to get a new menu. Now there is a caveat here. This is a pretty new feature, so it's not in the latest release of RStudio. You would have to download the preview version of RStudio. Now if you're watching this webinar later on, it's most likely going to be the case that it's part of the latest version of RStudio. But for now, the easiest thing to do if you're interested in using this feature is to download the preview by searching for RStudio preview in your favorite search engine and downloading that.

So you know that you have this feature if you have a menu that looks like this. And to import a CSV, all you need to do is click on import CSV. And what you would get is a text box with the file name and file path that you want to use to import data. This file path supports local sources, meaning that they live on your local computer. But you can also point this to a URL and import data directly from the web.

So let's do this. And as you can see here, we have some of the sources from this tutorial that you're going to be able to access. And we can pick a CSV file. So when we open the CSV file, we're actually not importing it into R yet. What we're doing for you is we're giving you a preview of how the data looks like. So you can see here that you have 50 entries. The file is actually longer, but we're giving you a preview of how this looks like. And you have a lot of options to configure how this data should be imported.

So for instance, a lot of data sources don't come with the first row as the names of the columns. So you can toggle that really fast and create an extra row for the headers. And what is also good to see is that we're generating a preview of the code that you would get to import this data. So here the parameter that we're changing is column names. So you can also use this dialog as a great way to just learn more about readr and the parameters that it supports.

If you were importing a differently delimited file, say a tab-delimited file or one delimited by white space, you can also change those settings here. One of the interesting things that is very easy to do in this dialog is to choose columns or change their data type. So for instance, at times you would see that readr is pretty intelligent about choosing the column type that it's importing. So in this case, it thinks that this ID is an integer, which is the case. So that's a good data type to use to import this data.

But if you look at the date on this priority date column, you'll see that it's being imported by default as character, and that might not be what you want. So very easily you can say change to a date. You can specify the formatting string to parse this and accept the change. And as you can see, it has already taken place, and this column is going to be imported as a date column.

You can also preview the code the same way. We're basically creating a data import statement in readr that specifies the priority date column as a date with a specific format. The other good feature that I personally use a lot is that you can select columns to skip or to only import. So sometimes we have a very large CSV file, and we're really only interested in three or four columns. So you can also use readr functionality to select only the columns that you want to import.
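The code that the dialog generates for this kind of change might look roughly like the sketch below. The column names (`id`, `priority_date`, `applicant`, `notes`), the sample rows, and the date format are assumptions made up for illustration, not the actual columns of the webinar file.

```r
library(readr)

# Hypothetical stand-in file; the date is not in ISO format,
# so readr would read it as character by default
csv_path <- tempfile(fileext = ".csv")
writeLines(c("id,priority_date,applicant,notes",
             "1,01/15/2016,alpha,x",
             "2,02/20/2016,beta,y"), csv_path)

# cols_only() imports just the listed columns and skips the rest;
# col_date() parses the column using the given format string
applications <- read_csv(
  csv_path,
  col_types = cols_only(
    id            = col_integer(),
    priority_date = col_date(format = "%m/%d/%Y"),
    applicant     = col_character()
  )
)

str(applications)
```

Using `cols_only()` rather than `cols()` is one way to keep only a few columns of a wide file; the `notes` column here is never materialized in the result.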

So for this case, we're looking at government data. There's different application names each with a date, and this might be all the information that I want. So once you have a good preview of how the data looks like, you can import that into R very easily. So this is how it looks like at this point. This is a data table that you can use to further do analysis on R.

In a similar way, we can import data directly from Excel. You want to select XLSX files. The functionality is similar but a bit different. One of the most used features in this dialog while importing data from Excel is to select the sheet on the source spreadsheet that you're interested in. So in this case, we only have one, but if you were to have multiple ones, you would be able to select the specific sheet that is interesting to you. And in a similar way, you can change some of the data types and skip some of the columns that might not be very interesting for your data analysis.
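Outside the dialog, sheet selection can be sketched with readxl as follows. This uses the example workbook that ships with the readxl package rather than the webinar's spreadsheet, so the sheet names here are those of the bundled example.

```r
library(readxl)

# Example workbook bundled with readxl (not the webinar's file)
xlsx_path <- readxl_example("datasets.xlsx")

# List the sheets available in the workbook
sheets <- excel_sheets(xlsx_path)
print(sheets)

# Import one specific sheet by name
mtcars_xl <- read_excel(xlsx_path, sheet = "mtcars")
head(mtcars_xl)
```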

Let's take a quick look at one more code path to get your data imported. In this case, we'll try to import a SAS file. Here we have some data set. Again, one of the things that you could also do would be to change the data frame name. So maybe here we can say this data. And you would see that this updates in real time and you get a preview of what that import is going to look like. And again, you just need to click import and you would get a data frame with that functionality baked into the read_sas command.

So this is pretty much it. I really encourage everyone just to take a quick look and play with the different parameters. It's a great way to learn about the community packages through the IDE.

So again, this feature is only available on RStudio Preview for now. So if you're interested in playing with this, you need to download RStudio Preview. And the main entry point is through the environment tab under import data set. You can select different sources and then you would get this dialog to do a preview, in-place preview of your data before importing. There's definitely, again, we're covering just the surface on how to import tabular data. There's more resources that Bill pointed to and there's definitely more upcoming webinars if you're also interested in further topics.

Hierarchical data

So with that, we're going to take a look at another class of data which doesn't fit well with tabular data. And this is called hierarchical data. So just a quick review of what hierarchical data is. If you look at Wikipedia, it would say something like this: a data model in which the data is organized into a tree-like structure. In this particular representation that we have on this slide, you can look at it as an inverted tree, right? There's a model and that model starts with a root which has this label called pavement improvements.

So whenever you see branches, nested branching, in a data set, it's probably not tabular data. It's probably hierarchical data, and you need to use a different set of tools to import that data. Now the interesting thing about hierarchical data is that it's really everywhere.

So one of the common file types is what we call XML, which stands for Extensible Markup Language. It looks like the top left block that we have on this slide. And, you know, in this case, we only have one level. It's a simple node from someone to someone. But we could have nested levels where, you know, like the structure is more complicated. Interestingly, there is a very strong relationship between XML and HTML. And HTML is basically everywhere, right?

So whenever you look at a web page and you look at the source, you're going to see that it follows this tree-like structure, where the document is defined as an HTML document and then there's a head that describes things like the title of the page and also a body where it describes the actual content. And in a similar way, sometimes when you're importing data not necessarily from a web page but from a web service or from a web API, the type of data that you would usually get is called JSON, which stands for JavaScript Object Notation. We have a sample on the bottom left of this slide. And it can also be nested.

And it's very common, you know, if you were to import data from Yelp or some of the web services that are out there, that you would get a nested structure that describes the request that you're performing. Importing this as a tabular data structure wouldn't fit very well here.

This should be on the hierarchical data.r file on the resources that we're providing on this webinar. And we're going to start by importing data as JSON, right? So this one has multiple levels. So once the data is imported, we're going to take the first element of the first branch, and then we're going to take the first element of the next branch on that element. So I'm going to run that. And what you can see is that we're getting a nested list of data.
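The transcript does not name the JSON package used in the demo, so the sketch below assumes jsonlite, and the JSON content is made up for illustration. It shows the same idea: import the document as a nested list, take the first element of the first branch, then the first element of the next branch.

```r
library(jsonlite)

# Hypothetical nested JSON, standing in for the webinar's file
json_text <- '{
  "businesses": [
    {"name": "Cafe A", "location": {"city": "Seattle"}},
    {"name": "Cafe B", "location": {"city": "Portland"}}
  ]
}'

# simplifyVector = FALSE keeps the result as plain nested lists
parsed <- fromJSON(json_text, simplifyVector = FALSE)

# First branch of the document, then its first element
first_branch <- parsed[[1]]
first_item   <- first_branch[[1]]

# The element is itself a nested list
str(first_item)
```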

You can do something very similar by importing XML data. In this case, the way that you access each node from a branch would be with XML children. So let's import the data first. Now it's in this node. And when you want to access a specific element in this nested structure, you would access it by going to the first child in this case, and then getting the children of that particular node.
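A minimal sketch of that navigation, assuming the xml2 package (the transcript does not say which XML package the demo used) and a made-up document:

```r
library(xml2)

# Hypothetical XML document with one level of nesting below the root
doc <- read_xml("<notes><note><to>Tove</to><from>Jani</from></note></notes>")

# Go to the first child of the root...
first_child <- xml_children(doc)[[1]]

# ...and then get the children of that particular node
fields <- xml_children(first_child)

xml_name(fields)
xml_text(fields)
```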

Now a more interesting use case is what happens when, you know, you want to import data from the web. And we just mentioned that HTML is another type of hierarchical data. And so let's look at the more interesting example here. So here we're looking at a Wikipedia page, List of Countries and Dependencies by Population. And obviously, you know, this is all text. But there is a table that looks very interesting.

And in this case, Wikipedia doesn't have a download data feature or anything. But this might be something that you want to import directly into R. And there's going to be an upcoming webinar on just how to do web scraping. But I want to give you a quick overview of how you would do this, right? So you have a URL. So you can copy that URL. And you would want to use, in this case, a different package called rvest.

And in a similar way to how you read XML or JSON, you would call read_html. And what this would do is read the entire web page as HTML. So now it's parsed. It's in a hierarchical format. Still not very usable at this point because it's basically the whole web page. What you could do is look at a very specific element. If you were to look here at the source of this page, you would see that there is a table element on this page. And you could address that specific element by using what is called an XPath to the element.

So let's run that. And that's going to give you that particular element. And then finally, you can use one function from the rvest package, which is very useful, which is saying, well, load this element as a table from an HTML table. So let's execute this statement. And what you can see is that it starts with China, the biggest population, June 22nd, which is the first row that you would get on your web page.
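The three steps (read the page, address the table by XPath, convert it to a data frame) can be sketched as below. To keep the example self-contained, it parses a small inline HTML string instead of the live Wikipedia URL; the table contents are a toy stand-in, and `html_element()` assumes rvest 1.0 or later (older versions call it `html_node()`).

```r
library(rvest)

# Toy page standing in for the Wikipedia article; in practice you
# would pass the page URL to read_html() instead
page <- read_html("
  <html><body>
    <p>Some text before the table.</p>
    <table>
      <tr><th>Rank</th><th>Country</th></tr>
      <tr><td>1</td><td>China</td></tr>
      <tr><td>2</td><td>India</td></tr>
    </table>
  </body></html>")

# Address the table element with an XPath expression
table_node <- html_element(page, xpath = "//table")

# Convert the HTML table into a data frame
countries <- html_table(table_node)
countries
```

On a real page with several tables, the XPath usually needs to be more specific, for example by position or by a class attribute.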

And again, there's going to be an upcoming webinar for this. This is just scratching the surface of what you can do by scraping data from the web. But it's really interesting and certainly very, very helpful.

Relational data and databases

So we looked at a couple of these examples with XML, rvest and JSON. And now we're going to look at importing relational data. And again, I wanted to spend a little bit of time just understanding the difference between hierarchical data and relational data. So in hierarchical data, as we talked about, we're always talking about branching. In relational data, what we're talking about is relationships between data.

In Wikipedia, you could find a quote that reads that data is represented in terms of tuples, grouped into relations. And a tuple is nothing more than a set of columns that are related with each other. But then we can also have relationships between them.

So, for instance, one of the things that wouldn't be supported in a hierarchical structure would be when your company has a list of customers. That relationship could be hierarchical. But the fact that customers could also have relationships with their favorite brands, which are companies, is kind of like a loop that goes from customers to companies and from companies to customers, and that you would model with a relational data structure.

So this is what it looks like. And the best representation of this is a database. Right. I'm sure a lot of you would be familiar with databases. And the interesting thing to mention here is that there's obviously a lot of database software out there. The table on the right shows some of the different providers of database software. And there's definitely a lot of them.

So what you really want to do, since this can get chaotic pretty fast, is use a package that can wrap the complexity of connecting to each of the different data sources with something that is usually called a driver, which is basically a translator between what you want to do with your database and the actual information in the database. And for that, a great package to work with is called DBI. DBI would allow you to connect to multiple data sources. And we're going to see a couple examples of these.

And one of the things that you want to do is obviously first install the DBI package, and then you would have to find which is the correct backend. And by backend, I just mean the database that you need to connect to. There are definitely different backends that you could use. Some of them are what we call generic and some of them are specialized. So for instance, in this case, if you were to connect to Postgres, you would have to run a statement that looks like this, dbConnect. And then you specify the Postgres driver.

The best thing to do is to use the driver that is specific to the database that you're connecting to, mostly because this is usually a performance gain. Usually most of the features are implemented in the specific backend that you're connecting to. If for some reason you don't find a driver for your specific database, what you can do is use one of the generic ones like RODBC and RJDBC. Those are standard connections to databases that usually need a little bit more configuration, which we're not doing in this tutorial. But they would allow you to connect in a generic way to each of the databases.

Another example, for a very widely used database, is the driver for MySQL. And as you can see, it's basically the same statement that you would execute for connecting to Postgres that you would use to connect to MySQL. And that's the point of this package. The DBI package is trying to abstract away the complexities of dealing with multiple backends into a single package with very few configuration steps.

So for our example during this webinar, what we're going to do is connect to SQLite. SQLite is also database software, and it makes it very easy to connect in local settings like the one I'm using. In fact, in this case, I'm pointing this database to be stored in the database.sqlite file that you could see in the webinar resources. So here you can see there is a database SQLite file. And all you have to do is connect to it and it's going to create a database connection to that particular data set.

The first step that you probably want to do while connecting to a database is just to list the tables and to understand what types of tables you have in this database. In this case, it's a very simple database. It has only one table that I created myself. And then in order to retrieve data from a database, you would want to say dbGetQuery and execute a query. And this is a SQL query. There's a lot of references on the web on how to craft queries. There's also packages that we recommend using, like dplyr, that will remove the need to write specific SQL queries.

But today we're talking about importing data, so we're not going to touch on other more advanced ways of transforming and manipulating data. But once you have your data locally, you can obviously look at the result sets. You can do the same type of processing that you would do with any other data frame in the same way that we imported hierarchical data and in the same way that we imported tabular data.

Finally, one last step, but no less important, is to disconnect from the database. It's just a polite thing to do. Otherwise, the data source might keep resources running and it could degrade performance. So it's just a single line of code to disconnect from the database.
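The whole connect, list, query, disconnect cycle can be sketched as follows. This assumes the RSQLite backend and uses an in-memory database populated with the built-in `mtcars` data as a stand-in; the webinar connects to its own database.sqlite file instead, and the table and query here are made up for illustration.

```r
library(DBI)

# Connect through the SQLite driver; ":memory:" is a throwaway
# in-memory database (an assumption, in place of database.sqlite)
con <- dbConnect(RSQLite::SQLite(), ":memory:")

# Create one table so there is something to query
dbWriteTable(con, "mtcars", mtcars)

# First step: see which tables exist
tables <- dbListTables(con)
print(tables)

# Retrieve data with a SQL query; the result is a regular data frame
result <- dbGetQuery(con, "SELECT mpg, cyl FROM mtcars WHERE cyl = 4")
head(result)

# Last step: release the connection
dbDisconnect(con)
```

Switching to another backend mostly means changing the first argument of `dbConnect()` (and supplying host and credentials); the rest of the calls stay the same.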

If you were to use, again, one of these commands, it obviously wouldn't work, because while dealing with databases, the first step that you want to do is connect. So again, a quick recap: we use the DBI package. And in order to connect to the particular database of your interest, you want to use a driver. There are different types of drivers, many more than these three that I'm presenting here. And then you would want to manipulate and extract data using SQL statements or higher-level packages like dplyr that would allow you to do this without even having to craft your own SQL statements.

Importing data from distributed systems

And the last topic that we want to cover is importing data from distributed sources. And what does this mean? So we talked about tabular. We talked about hierarchical and relational. And this is a little bit of a similar concept, but the scope is different, right? So in a lot of cases, when dealing with CSV files, you can have the CSV files on your local machine because they just fit into memory and that's all good. And it's usually okay to just import a CSV file directly from your local machine.

Sometimes as the data grows, data gets ported into a relational model. And you can import this data with tools like DBI, as we saw in the demo. But there are also cases where the data just doesn't fit in, or is not advised to be put into, a relational database model. So in these cases, we're talking about what is also known as big data, where the data just doesn't fit into a single machine or even a few machines. With databases, it's not uncommon to have a replicated database with three or four machines. But sometimes data doesn't even fit on those systems. And you need several hundreds or several thousands or even tens of thousands of nodes.

By node, I mean what we're representing on the top of the slide. By nodes, I mean just computers. So when data doesn't fit on a single computer or even a few computers, we need to introduce the concept of distributed data. And basically what it means is that we need to split the data into multiple different pieces in order to be able to deal with it, right?

So if you have a really, really large file that doesn't fit on one machine, but only fits on many machines, you have to distribute that file across all those different machines and somehow work with it in R. So that's a bit of what I want to talk about with this last conceptual data source. You have a lot of data. It's distributed data. And it's just not possible to deal with all the data on your machine running a single hard drive with a few processors.

So how does that look like? So there's two approaches to tackle this problem of having too much data that doesn't fit into one machine that you can't really import into your R session. So the first concept is sampling. And this is a statistical concept. And the idea is that, you know, you might not be able to bring all the Twitter data into your laptop. That's just not feasible because it's just too much. But you can definitely take a subset of that data.

So you can say, well, I know that I can't take all the information from all these countries. But I can take a few rows from each country and process that in a subsampled way on the local machine. So that's one way. And that's what we're going to explore today in the demo during this webinar. The second option is to say, well, I know that the data doesn't fit in this particular machine. So instead of running my code with a subset of data on this current machine, what I'm going to do is run my R code in a distributed fashion across all the nodes and do the processing outside of this current machine. And that definitely deserves a webinar by itself.

But today I want to focus on sampling. So two options. One is to reduce the amount of data that you bring down. And the other option is to do the processing of data outside of your current laptop and do it on each node.
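The "few rows from each group" idea can be illustrated on a small local data frame. This is only a stand-in: it assumes dplyr 1.0 or later, uses the built-in `iris` data with `Species` playing the role of country, and samples after the data is already in R. With truly distributed data, the equivalent sampling step would run on the remote system before anything is brought down.

```r
library(dplyr)

# Fix the seed so the sample is reproducible
set.seed(42)

# Take five rows from each group instead of the full data set
sampled <- iris %>%
  group_by(Species) %>%
  slice_sample(n = 5) %>%
  ungroup()

# Three groups times five rows each
nrow(sampled)
```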

So let's look at how it looks to sample data for a specific data source. How would we bring data into R? And, again, I'm not talking here about very specific technologies because there's just so many. I've listed some on the right side of the slide. We have Spark, Hadoop, Cassandra, Mesos. There's just a lot of technology. So that definitely deserves its own webinar by itself. But I want to at least expose ourselves during this webinar on how we would tackle this problem.

So we're looking at the sampling approach. And what I'm going to do in this case is in some way unrelated to R, since it's going to be dependent on each system that has distributed data. But the approach would look a little bit like this. And we don't need to go into details. I just want to present what this would look like. The idea here is that we're getting data from Spark, and we're using what is called a Spark shell. And the approach that I want to present is pretty simple.

So what you would do is you would find where your data is. Usually the data is in systems like Amazon S3 or HDFS. In this case, for this demo, it's just a local file. And then you would load that file using the distributed system. And we're still working not against R but against a distributed system. In this case, it's Spark. Then you can do a couple things like just getting the number of rows. And here the interesting thing is that we are only looking at 5,000 rows. But in a real application scenario, this could be billions or, you know, like even hundreds or, you know, it would be a much bigger data set that is just not feasible to bring down into your own machine.

So what we're going to do in this case is take a sample of the data. A lot of distributed systems provide functionality, one way or another, to let you extract a subset of the data. So that's exactly what we're doing. And then in this case, what we want to do is just save the file locally. In Spark, this concept is called collecting the data. What we're going to do in this case is save it. And as you can see, a new file appeared here, which is the sampled subset of the data.

And again, we didn't look at a lot of the details of how to do this, mostly because it's a little bit out of the scope of how you would import data. But my bigger point here is that, you know, if you were faced with this problem, what you would want to do is either ask someone or yourself, try to sample down the data for this approach, for the sampling approach. So here you can see that is a CSV file. And we can import this CSV file using the same tools that we just learned about today.

So let's browse for this file that just got imported today. It's called sampled with the same name. We're going to open it. And as you can see, you know, we have the data that we would expect, but it's sampled to something that is manageable on our specific laptop, right? So here, if I import this one, and I were to say, let's look at the number of rows from this data set, you would see that it only contains a subset of the data that we imported.

Q&A

So I guess we were back to importing CSVs at the end of this webinar. I might be a little bit ahead of time. But the good news is that we will have more time for questions then.

Okay. So the first one, is this new import database feature only available in the premium version? No. The answer is no. Like, actually, I should have mentioned this, but all this demo was performed in the open source version of the product. So the DBI package that I presented is an existing package. You can download it from CRAN. It's open source as well, so you can just go ahead and install it.

Are there version limitations for importing SAS or SPSS? Let's see. Over the years, the architecture of both file formats has changed. So my answer here is no, to my knowledge. It's my belief that there shouldn't be any limitations. I don't know how far back we go in importing the files, but of all the files that I've tried, they all have worked for me. But I'll be happy to follow up and find out for you if there are any particular restrictions that I'm not aware of.

Does DataImport provide means of importing fixed-width data? No delimiters, fields start at a given character, et cetera? Yes. The answer is yes, and I'm assuming that I'm still presenting, correct, Bill? Yes, you are. Yeah. Okay, perfect. So, yes, the answer is yes. There are tools for importing fixed-width columns. Now, we don't have that particular feature under DataImport at the moment. You know, it's only delimited. But if you were to look at the readr package, the function that you want to take a look at is read_fwf, which stands for fixed-width file. It comes with a few different parameters that you would have to specify through the actual import function. And that would accomplish what you are trying to do in this case, which is importing fixed-width data.
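A minimal sketch of `read_fwf()` on a made-up fixed-width file, where the widths, column names, and rows are all assumptions for illustration:

```r
library(readr)

# Hypothetical fixed-width file: 3 characters of id,
# 6 characters of name, 2 characters of age, no delimiters
fwf_path <- tempfile(fileext = ".txt")
writeLines(c("001Alice 34",
             "002Bob   27"), fwf_path)

# fwf_widths() describes where each field starts and ends;
# col_types "cci" means character, character, integer
people <- read_fwf(
  fwf_path,
  col_positions = fwf_widths(c(3, 6, 2),
                             col_names = c("id", "name", "age")),
  col_types = "cci"
)

people
```

Reading `id` as character keeps the leading zeros; padding whitespace around `name` is trimmed by default.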

What about reading zip files? Oh, this is such a great question. Yeah, so my recommended approach would be to extract the zip files yourself. To me, it just makes the most sense. There are actually packages, which I don't remember off the top of my head, to extract it if you want to extract the file directly from R. There are, however, a couple of ways that some of the packages, I believe readr, would actually take care of extracting the file itself. But it's not something that I would personally recommend. In general, it's usually not about just reading the zip file, but the zip file contains multiple files, et cetera. So my recommendation would be to rather take a look at what is inside first, use a different tool either from R or outside R to extract that zip file, and then import the data.

When will the new version, not preview, of RStudio with data import be released? Yes, so I guess my answer would be hopefully soon. I don't know, Bill, if you have more information there, but I don't think we've released anything particular. I'm really excited to, but like it's pretty decent. So I guess what I can say about this question is that, you know, like in RStudio we try to do a very good job on keeping our preview release versions as stable as possible. So I wouldn't be afraid of trying the preview version. Simply, you know, once in a while we might react, you know, to issues that we find in the preview version, but, you know, I would just encourage you to use the preview version.

Javier, I can add to that that if you subscribe to our blog or watch our Twitter feed, you'll see a whole bunch of announcements coming out when we release the stable or the full version. I like that, I like that. Perfect, yeah, so subscribe to the blog. That would be the best way to find news about new versions of RStudio.

What would be the best way to import a SAS data set into R other than converting it to CSV and reading the CSV file in R? Yeah, so I definitely went very fast on all the concepts here, but let's just redo the SAS case because you shouldn't need to convert to CSV, even though that's certainly an option. You can use SAS to export a CSV and then import it into R. What we recommend instead is to use the haven package, which is what we demoed, and you can import by reading directly from the SAS file. In a similar way, you can go directly to the dialog and look for a SAS file that is of interest to you without having to jump through the hoop of exporting a CSV and then converting back while you're reading it in R.

So here what we're doing is we have a SAS file. I believe this is the iris data set, so it might look familiar to those of you who are already doing analysis on this data set in R. Yeah, basically what you would do is you would go ahead and import it directly. In this case, we're not converting to CSV. I know these dialogs look pretty similar, but actually what we're doing here is we're calling the haven package with a particular SAS file, and we're just going to import it, and it's not converting to CSV or reading from CSV at all. It's just reading the file directly using the haven package.
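In code, the direct import looks roughly like the following. This sketch assumes the iris example file that ships with the haven package; in your own work you would pass the path to your own .sas7bdat file instead.

```r
library(haven)

# Example SAS file bundled with haven (assumed here for illustration).
sas_path <- system.file("examples", "iris.sas7bdat", package = "haven")

# Read the SAS data set directly; no CSV export step is involved.
iris_sas <- read_sas(sas_path)

head(iris_sas)
```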

Does the RStudio preview import interface work with the new feather format? Ah, my goodness. Yes, I mean, no, but I would love it to. So yeah, the feather format is just a great file format. We didn't have a chance to talk about feather today. For those of you that are listening, feather is a new file format that is helping people interoperate between Python and R, and it's also designed for fast access. So it's a very fast file format. Loading and saving data is very, very fast. I think it's just a great suggestion. Currently, no, there's no integration with the feather format, but you can install the feather package, and definitely search online for R feather.
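Outside the import dialog, a minimal round trip with the feather package might look like this. The use of the built-in iris data frame and a temporary file is just for illustration.

```r
library(feather)

# Write a data frame to a feather file in a temporary location.
path <- tempfile(fileext = ".feather")
write_feather(iris, path)

# Read it back; the same file could also be read from Python.
iris_back <- read_feather(path)

dim(iris_back)
```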

Will there be an ecosystem created by RStudio involving Spark, Cassandra, HBase with a dplyr-like syntax? My goodness, some of these questions are tricky. Next week, we have useR!, and we're planning to make announcements around new features and interesting things happening at RStudio. So definitely, as Bill was mentioning, the blog is a great resource to keep track of these. But currently, we don't have any particulars that we're ready to share. Obviously, distributed data and big data are interesting, so we'd like to help the community connect to these data sources as well.

Let's see, is there a way to import cubes into R? And by cubes, my guess is the question is talking about business intelligence cubes, BI cubes and things like that. I actually don't know the answer to this question. My guess is that the answer is yes, because the R community is so big and thriving so much that there must be something out there. We can do a quick search. It depends, because there's no single file format for cubes, right? Cubes are specific to each vendor, right? So Analysis Services from Microsoft, for instance, would require different tools to import data directly, while some other source from Oracle, like Business Intelligence Suite, would require some other package.

Another question: how about the meta information usually present in other packages' files, like labels, notes, et cetera, in Stata? Will R be able to deal with meta information anytime soon? So, yes, as I mentioned, what we're importing at the moment with the haven package is tabular data. So, as this question is suggesting, meta information would not be part of that tabular data, just because metadata doesn't map onto a table as nicely as you would want. There's really no great way of enhancing a tabular data format with metadata. I'm not aware of whether the haven package specifically provides a way of reading metadata. Again, feel free to shoot me an email about this. The good news is that usually the valuable information is not in the metadata, but it would certainly be interesting to be able to access metadata as well.
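As a follow-up to that answer, haven does carry some metadata along as R attributes, at least for variable labels. The sketch below is an assumption-laden illustration rather than something shown in the webinar: it writes a tiny made-up Stata file with one labelled column and reads it back.

```r
library(haven)

# Made-up data frame with a variable label attached as an attribute.
df <- data.frame(age = c(31, 45))
attr(df$age, "label") <- "Age in years"

# Round trip through a Stata .dta file in a temporary location.
path <- tempfile(fileext = ".dta")
write_dta(df, path)
back <- read_dta(path)

# The variable label is kept as the "label" attribute of the column.
attr(back$age, "label")
```

Notes and other file-level metadata are a separate matter; this only illustrates per-variable labels.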