Extending Spark Using Sparklyr | RStudio Webinar - 2017

This is a recording of an RStudio webinar. You can subscribe to receive invitations to future webinars at https://www.rstudio.com/resources/webinars/. We try to host a couple each month with the goal of furthering the R community's understanding of R and RStudio's capabilities. We are always interested in receiving feedback, so please don't hesitate to comment or reach out with a personal message.

image: thumbnail.jpg

Transcript

This transcript was generated automatically and may contain errors.

All right. Thank you, everyone, for attending this webinar, and for the kind intro. This is going to be our agenda today. As you can see, we're going to start slow by getting a view of how to use extensions from sparklyr, and we're going to work our way up to understanding how to build extensions for Spark using sparklyr. If you're a new user, you should still be able to get a lot of value out of this webinar, and if you're an advanced user, I'm hoping that this gives you the extra information you need to get your packages and extensions going.

So, with that, let's start with the overview. First of all, I want to give a quick reference to our first webinar. Edgar, who also works at RStudio, presented it. If you haven't gone through the first webinar, I highly encourage you to take a quick look at it. It will walk you through understanding what sparklyr is, what Spark is, and what you can do with these two systems together.

So definitely take a look at that, but for those of you who are starting cold or are watching this webinar first, what I want to highlight from that first webinar is that Spark is a cluster computing engine. By itself it does not provide storage, but you can use several storage providers through Apache Spark, wherever your big data may live. And as a quick intro to sparklyr: sparklyr is an R package. You use sparklyr to interface with Apache Spark from R. Think about sparklyr as any other package that lives in CRAN, which is the repository for R packages, and you can make use of sparklyr to interoperate with Spark.

So that's a really high-level overview of what sparklyr is and what Apache Spark is. Each of them has different functionality. For instance, when you look at Spark, well, we mentioned that it's a cluster computing engine. It also has a SQL interface. It supports in-memory processing, an extensible API, machine learning, graph processing, and streaming. And when you look at sparklyr, sparklyr allows you to make use of all this functionality.

And there's some functionality that is enabled by default in sparklyr. For instance, you can make use of the dplyr backend, machine learning functionality, extensions, and, with the sparklyr 0.6 release, distributed computations in R. However, there's this question of, well, what do I do if, for some reason, I need more functionality in Spark or in sparklyr than what is available by default in the package or in Spark? And that's what this webinar is about. We're going to start by using functionality that others have already built as extensions to sparklyr. And then we're going to learn how to extend the functionality that both Spark and sparklyr provide.

The extension development lifecycle

You can think of this as the life cycle of developing an extension to sparklyr. The first thing that you want to do is take a look at the extensions that are already available for sparklyr. So, for instance, on the top right, we have an example where we're loading the rsparkling library, which allows you to connect to H2O. One way of using extensions in sparklyr is simply by using other packages that provide support for sparklyr extensions.

It's very easy to use. We're going to go into that in detail. But it's good to know that this is the first step: start by looking for existing extensions when you need functionality that is not core to sparklyr. The next step is when you find yourself in a situation where you don't find an extension already available for sparklyr. What you can do then is use R code to extend the functionality in sparklyr, or to use additional functionality from Spark or from a Spark extension, directly from R.

We're going to cover this in detail, but it's good to know that there's a way for you to use additional functionality using only R code. And for those of you that are doing more advanced explorations and need more advanced features, the next step would be to actually use full functionality of Scala code. Scala is the programming language that was used to implement Apache Spark. So, by combining both R and Scala code, you definitely have access to 100% of the functionality available on the Spark ecosystem.

So, it's a pretty powerful tool, but it also usually requires additional understanding of Spark, its APIs, and things like that. This step, using Scala code, is somewhat optional. You can actually move from using R code directly to creating R packages without really needing Scala code. But it's worth mentioning that once you have a set of functionality that is worth sharing, either with your colleagues in your organization or broadly with the R community, what you want to do is consider creating an R package. So, in the same way that you would consider creating an R package for functionality that goes into CRAN, or that you would distribute to your colleagues as a zip file or in a local CRAN repo, you can do the same with sparklyr and Spark R packages.

Using existing sparklyr extensions

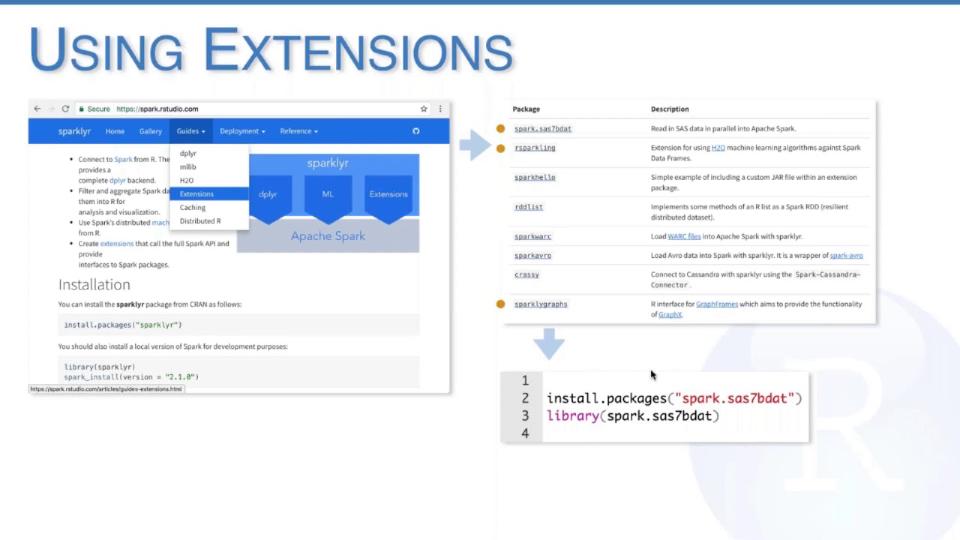

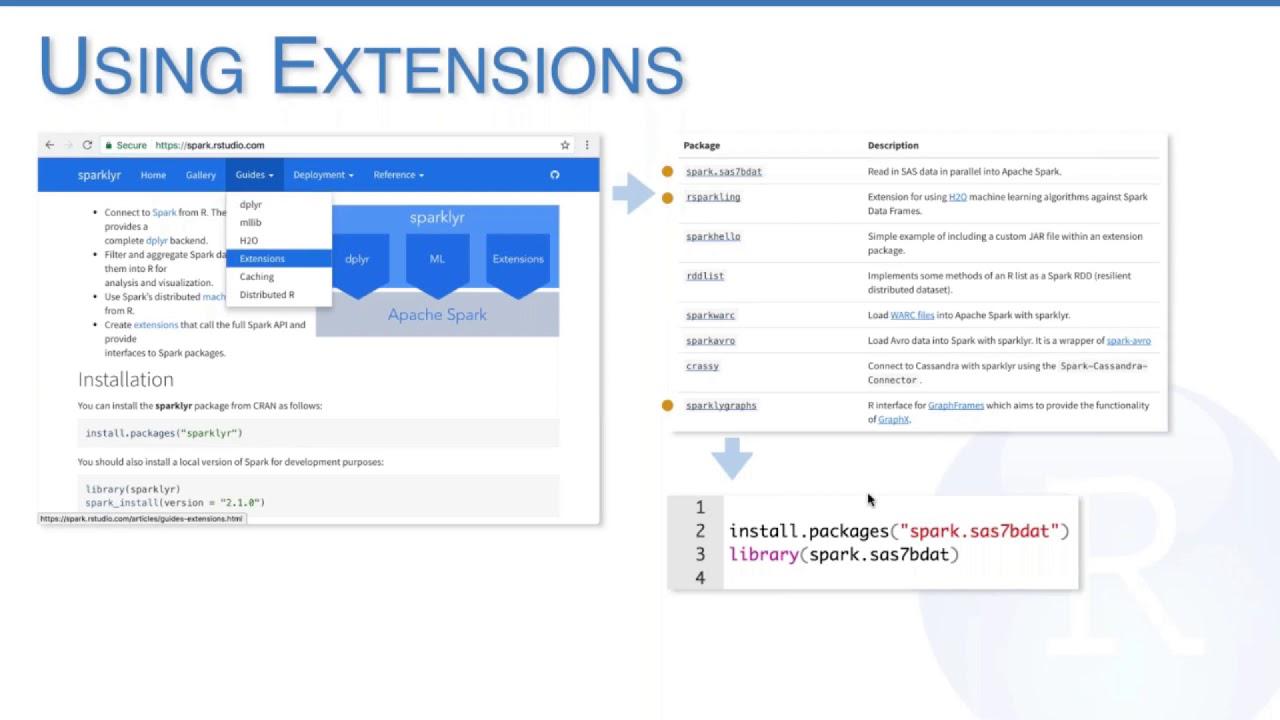

All right. So, this covers a brief overview of what we're going to talk about during the webinar. We're going to get started with how to use extensions with sparklyr. The first step to consider when building an extension is to go to spark.rstudio.com, under Extensions. You should be able to find a list of extensions that have been developed by the community around sparklyr. We try to keep this list as updated as possible.

But definitely let us know if for some reason you find an extension that is not listed here. In general, you want to take a quick look at what the extensions provide and consider whether this is an extension that is worth using on your end. For this particular webinar, we're going to take a look at three of the extensions that are already available.

We're going to take a look at spark.sas7bdat, which is an extension to read SAS data in parallel into Apache Spark. We're also going to look at the rsparkling extension, which was developed by H2O and provides machine learning algorithms on Apache Spark using their cluster engine. And we're going to take a look at sparklygraphs, which is a pretty recent addition to the sparklyr ecosystem and provides support for GraphFrames. GraphFrames is an interesting project because it enables the use of GraphX within Spark data frames.

So all of them are pretty interesting. We're going to switch to RStudio and we're going to see them working in a second. All right. So you should be seeing a notebook. By the way, I'm going to make this notebook available. It's going to be posted with the webinar materials, so you should be able to try these yourself. In general, what you want to do is basically just download the file and then open it in RStudio once you have the notebook.

So taking a look at the three extensions that we're covering today, to save us some time, what we're going to do for this demo is load the three extensions at the same time. And this is something that you might need in some instances, right? Sometimes you might want to work with only one extension, but it's also often the case that you want to work with many of them. So you can do that by first installing them and then loading the libraries. The first two are already on CRAN. sparklygraphs will probably be on CRAN pretty soon.

So once they're installed, what you want to do is load the libraries, load sparklyr, and then connect to Spark in the same way that you usually would. What is happening in the background is that when sparklyr is establishing the connection, it's asking each of the libraries what dependencies they need in order to function properly on Spark, and it's loading them into your cluster. This means that this particular approach requires internet connectivity.

That's good to know. In case you don't have it, there are guides that we have that can help you set up a cluster without internet connectivity. The first extension that we're going to take a look at is the SAS extension. And what we're doing here is, well, first of all, this is the first time that we execute a query on sparklyr, so it's still initializing some of the Spark components this first time. But then we're reading a SAS file into Apache Spark. Again, if we were to re-execute this, it would be pretty fast.

And yeah, honestly, it's pretty straightforward, and it's basically what you would expect. In sparklyr, we have several functions that follow the same pattern to read from different data sources: CSV, JSON, JDBC, Parquet, et cetera. As you can see, `spark_read_sas` does not come from sparklyr, but rather from a community package, which is great. So it's pretty straightforward. sparklyr and the community that developed this package already do the heavy lifting to get you up and running here.
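A minimal sketch of the steps just described: attach the extension before connecting, connect, then read a SAS file. It assumes a local Spark installation and that the spark.sas7bdat package ships its `iris.sas7bdat` sample under `extdata`; the table name `sas_example` is a placeholder.

```r
library(sparklyr)
library(spark.sas7bdat)   # attach extensions BEFORE connecting

# While connecting, sparklyr asks each attached extension for its Spark
# dependencies, which is why this step needs internet access.
sc <- spark_connect(master = "local")

# Sample file assumed to ship with the package; replace with your own path.
sas_path <- system.file("extdata", "iris.sas7bdat", package = "spark.sas7bdat")

# Same pattern as the spark_read_*() functions in sparklyr itself.
iris_sas <- spark_read_sas(sc, path = sas_path, table = "sas_example")
iris_sas
```

To use several extensions at once, attach all of them with `library()` before the `spark_connect()` call.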

And in a similar way, the next example that we're going to try out is using H2O through rsparkling, which is the package that provides this functionality in sparklyr. To get us started, we're going to load dplyr and copy some data. You can see the data has loaded and we can take a quick peek. It's just the cars table that we're all familiar with.

And the interesting thing here is, even though we haven't written much R code ourselves, a lot of things have happened on the backend in Spark. By running `h2o_flow` for this particular extension, what we're going to launch is H2O's cluster interface. What's happening under the covers is that H2O is initializing, and then very briefly we're going to get access to H2O's interface. This application is running on Apache Spark and provides a lot of functionality that would probably require a different webinar just to explain.

But for those of you that are interested in using H2O over Spark, it's a great way to get started. I'm not going to get into many details on how to use H2O, but it's good to know that the extension exists, and we're going to run a very simple use case. So in this case, what we want to do is convert the Spark data frame that we have into something that H2O understands. So we're going to run `as_h2o_frame`, followed by a simple GLM model.

So let's just run these two together. And again, it finished executing. And then what we can do here is take a look at the results of this GLM model, which ran not over MLlib, but rather over H2O's computing platform running on Spark. This is the output of our model. It probably matches closely what you would expect from GLM in R and in Spark, but it comes with a different set of parameters and variables that, depending on your use case, might make it worth considering H2O versus other technologies.
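A hedged sketch of this H2O step, assuming the `as_h2o_frame()` function exposed by rsparkling releases from around the time of this webinar (later releases may differ) and `h2o.glm()` from the h2o package. The choice of `mtcars` and of the `wt`, `cyl`, and `mpg` columns is an assumption, and any Sparkling Water version setup is omitted.

```r
library(sparklyr)
library(rsparkling)   # attach before connecting so its dependencies load
library(h2o)
library(dplyr)

sc <- spark_connect(master = "local")

# Copy a small data set into Spark (assumed here to be mtcars).
mtcars_tbl <- copy_to(sc, mtcars, "mtcars", overwrite = TRUE)

# Convert the Spark data frame into an H2O frame.
mtcars_h2o <- as_h2o_frame(sc, mtcars_tbl)

# Fit a GLM on H2O's platform running on Spark, not on Spark MLlib.
fit <- h2o.glm(
  x = c("wt", "cyl"),
  y = "mpg",
  training_frame = mtcars_h2o
)
fit
```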

The next extension that we're going to cover, again briefly, is the sparklygraphs extension, which allows us to make use of GraphX functionality. We're going to start by, again, copying a dataset. For those of you that are curious about this dataset, it's maybe not as well known as the two previous ones, but it's already graph-like by itself. It's a dataset that contains friendships among high school boys, dating from 1957 and 1958.

But it's one of these datasets that have been used as samples in other graph packages in the R community. So we've loaded that, and something that we need to know about this extension is that you need to provide two data frames. One contains the vertices and the other one contains the edges. This dataset by itself contains the data mixed together. So actually we can take a quick look just to see what it looks like. Basically it comes with an identifier for each person and their friendship relationships over the period of two years. So we're going to split this into a table of vertices and a table of edges. And once we have them in this format, we can run PageRank.

If you want to take a closer look at what functionality is supported in each of the packages, there are multiple ways of doing it. One that I personally like is simply typing the package name and then looking at the functions with autocomplete. For this webinar, we're only going to look at the PageRank algorithm, but there are a few others that you might be interested in. And the same goes for H2O: there's a lot of functionality that is worth exploring.

So let's just run PageRank and validate that we're getting this functionality out of the package. And yeah, that covers our three extensions. If you want to take a look at the full list, what I would recommend is going to spark.rstudio.com. You should be able to find them under Extensions. And if you're developing one and it happens not to be there, let us know.
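A sketch of the vertices-and-edges split and the PageRank call. It assumes the `graphframes` R package and its `gf_graphframe()` and `gf_pagerank()` functions (the package loaded in the webinar may expose different names), and that the friendship data is `ggraph::highschool` with `from` and `to` columns.

```r
library(sparklyr)
library(graphframes)   # assumed package name for the GraphFrames extension
library(dplyr)

sc <- spark_connect(master = "local")

# Friendship data: one row per friendship (assumed columns: from, to, year).
highschool_tbl <- copy_to(sc, ggraph::highschool, "highschool", overwrite = TRUE)

# Vertices: every person that appears on either side of a friendship.
# union() removes duplicates.
vertices_tbl <- union(
  highschool_tbl %>% transmute(id = from),
  highschool_tbl %>% transmute(id = to)
)

# Edges: GraphFrames expects the columns to be named src and dst.
edges_tbl <- highschool_tbl %>% transmute(src = from, dst = to)

# Build the graph and run PageRank for a fixed number of iterations.
ranks <- gf_graphframe(vertices_tbl, edges_tbl) %>%
  gf_pagerank(max_iter = 10L)
ranks
```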

Extending sparklyr with R code

We covered three of the extensions. If you really want to get your hands on this notebook, you can go to RPubs, my username, and then sparklyrextensionswebinar2017. But we're also going to post these contents on our webinar page. So, on to the next step in the life cycle that we discussed at the very beginning: what happens when you don't find the functionality you need as a sparklyr extension?

The first website that I would recommend taking a look at is spark-packages.org. If you take a look at this website, you're going to see that it is a repository of packages that have been developed by the Spark community, completely unrelated to sparklyr, but that have been made available to enhance Spark functionality. You can see that there are different categories. For instance, there is a data sources category that basically allows Spark to connect to multiple other storage providers and technologies.

Data sources happen to be one of the easiest extensions to develop within sparklyr, and we're going to take a look at this on the right side. So the code that we have on the right side is doing something a little bit more advanced than what we saw on the previous topic. We're loading sparklyr, and then we're creating a configuration object by calling `spark_config`. And then we're specifying a configuration parameter called `sparklyr.defaultPackages`. As you can see, the default packages setting is pointing to the Spark Cassandra connector, which happens to be an extension listed on Spark Packages. So basically, by using lines four to seven of this code snippet, and including the correct dependency, you can load any of the Spark packages available from the Spark community.

For this particular extension, you can make use of a generic function that we implemented in sparklyr 0.6 called `spark_read_source`. What that enables you to do is use any data source extension directly from R code. So this is a very, very easy way to extend Spark functionality in terms of connecting to multiple data sources, if that's the functionality that you're missing in your day-to-day analysis.
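A sketch of the configuration pattern described above. The connector coordinates and version, the `read_cassandra_table()` helper, and the keyspace and table arguments are illustrative assumptions; actually reading data requires a reachable Cassandra instance.

```r
library(sparklyr)

# Point the default packages at a Spark package. The version string is an
# assumption and has to match your Spark and Scala versions.
config <- spark_config()
config$sparklyr.defaultPackages <-
  "datastax:spark-cassandra-connector:2.0.0-RC1-s_2.11"

# Hypothetical helper: connect with the extra package loaded, then read one
# table through the generic data source reader.
read_cassandra_table <- function(keyspace, table, master = "local") {
  sc <- spark_connect(master = master, config = config)
  spark_read_source(
    sc,
    name = table,
    source = "org.apache.spark.sql.cassandra",
    options = list(keyspace = keyspace, table = table)
  )
}
```

With a Cassandra instance available, a call such as `read_cassandra_table("dev", "emp")` would return a Spark table reference that can be used like any other.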

Now, what happens when you want to do more advanced things that might not be covered by a generic sparklyr function? The example that I want to go over today, mostly as an educational example, is what it would take to get started with Spark Streaming if we had nothing but the functionality that we currently support in sparklyr.

So let's take a look at these. Well, first of all, there's a well-documented site on the Spark website for performing streaming programming in Spark. That's a great place to get us started. So I'm going to open that website and just walk you through what a day-to-day in developing extensions usually looks like on the Spark ecosystem. So regardless of the topic, usually what you find yourself looking at is a post based on most likely Scala code in which someone has made available new functionality. So in this case, we're taking a look at Spark streaming. And you can see that in this case, we have a section with Scala code. And the Scala code, it's doing a couple of interesting things. It's, for instance, creating a streaming context out of the current configuration and is giving it a duration.

So once you have a good sense of how this is being developed in Scala, you can start thinking in terms of how to develop this in R. And that's what we're going to do for this second part of our demonstration. We're back in RStudio, and we're taking a look at our notebook. You should be able to see here that I've basically copy-pasted that fragment of Scala code that we saw in the Spark Streaming documentation.

I'll make this a little bit bigger for those of you that would appreciate it. What you can see here is that we have Scala code, but what we really want to do is call R code to perform that functionality. And in this case, we're going to try to get just the bare-bones basics of Spark Streaming working with the functionality that sparklyr provides.

There are three functions that you should be aware of while working with R code, and this is functionality in sparklyr. The first one is called `invoke`. This function allows you to call methods on a Scala object. There's one function called `invoke_static`, and this one helps you call static methods in Scala. And there's also `invoke_new`, which helps you create new objects in Scala.
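The three functions can be tried against plain JVM classes before touching any Spark API. This is a small sketch assuming a local Spark installation; the classes used are from the Java standard library.

```r
library(sparklyr)

sc <- spark_connect(master = "local")

# invoke_static(): call a static method, here java.lang.Math.hypot(3, 4).
hyp <- invoke_static(sc, "java.lang.Math", "hypot", 3, 4)

# invoke_new(): call a constructor; the result is a reference to a JVM object.
big <- invoke_new(sc, "java.math.BigInteger", "1000000000")

# invoke(): call an instance method on that object.
val <- invoke(big, "longValue")

spark_disconnect(sc)
```

Simple return values such as numbers and strings come back as ordinary R values, while anything else stays in the JVM and is handed back as an object reference.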

So, these three functions are the core building blocks that you would use while running Scala code from R. I'm going to delete them, since I already have some code ready for you here. What you can see is that in order to match this statement in Scala, what we want to do is call `invoke_new`, mostly because we're creating a new streaming context object. So, we're going to do that. `invoke_new` requires the Spark connection that you're already familiar with, and then it takes a class name, a fully qualified class name. So, what you want to do here is make sure that, even though in this case `StreamingContext` is the name of the class, you use the fully qualified version of it. The Scala code imported the namespace, so it could use the short name, but from R you want the full one.

So, in this case, we're going to use the fully qualified name for this class. We're going to pass the Spark context. And then there's another object that this constructor requires, which is the duration, that is, the batch interval for the streaming to happen. In their sample, they're setting it to one second. It's actually easier to demonstrate this if we set it to something longer, like 10 seconds. And what we want to do in order to create this object is call `invoke_new` for the `Duration` class and pass 10 seconds, or 10,000 milliseconds. So, let's run that one as a start.
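A sketch of this step, assuming a local Spark installation with the streaming classes on the classpath. The class names are the fully qualified names from the Spark Streaming Scala API; `spark_context()` is sparklyr's accessor for the underlying Spark context.

```r
library(sparklyr)

sc <- spark_connect(master = "local")

# Batch interval of 10 seconds, expressed in milliseconds.
batch <- invoke_new(sc, "org.apache.spark.streaming.Duration", 10000L)

# Equivalent of `new StreamingContext(sparkContext, duration)` in Scala.
ssc <- invoke_new(
  sc,
  "org.apache.spark.streaming.StreamingContext",
  spark_context(sc),
  batch
)
```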

Nothing interesting so far. But well, I mean, it's interesting that we do have a streaming context, right? So, the next step that you would see in their documentation is that they create a socket stream. When doing streaming programming in Spark, one of the basic demonstrations is opening a socket, which is just a connection back into Spark where we can send text directly into Spark rather than making Spark fetch it. So, we're going to do that.

As you can see here, in their documentation they're calling a method on the streaming context object that we created. So, in order to do that, what we're going to use is the `invoke` function on the streaming context. So, same thing. We're calling `invoke` on the streaming context, and then we're naming the method, which is `socketTextStream`. And we're going to pass two parameters. One is localhost, which is where this socket is going to be expecting the connections to be coming from. And then the port number. That's about it.

One thing to consider here is that invoke methods do require all parameters to be explicitly passed. And you'll see if you were to look at the definition of this method, which I'm going to provide at the end of this webinar, you would see that it actually takes one more parameter with a default value. So, we're explicitly creating one object, which is the storage level, and we're setting it to memory and disk. Okay. This has run. The next step is a simplified version of what you would see on the post. What we're going to do in this particular example is we just want to get the count of words that are coming into our Spark stream and printing the count. Very simple example. Just going to run it. Hopefully this syntax is starting to look familiar.

In this case, we're using the magrittr pipe, which is used both in dplyr and in the magrittr package itself. You don't need to use the magrittr pipe if you don't like it. You can also take the return value and pass it to the next call. So, that's totally up to you. In general, we like this syntax a lot, even while using sparklyr invoke commands.

So, last but not least, what we're going to do is we are going to start the stream. And we're going to take a quick look at what's going on on the Spark UI. So, as you can see here on the Spark UI, we have a streaming job running. And you'll see the progress as we send things into this job. Also, once you create the Spark streaming context, you get additional functionality from Spark, et cetera. But just focusing on kind of like this webinar, which is about extending sparklyr, let's just do a quick run of how this would look.
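The socket stream, count, print, and start steps can be sketched as one helper. The function name `start_count_stream()` and the port `9999` are hypothetical (the port is borrowed from the Spark Streaming guide); `ssc` is a streaming context created with `invoke_new()`.

```r
library(sparklyr)

# Hypothetical helper: wire a socket text stream to a per-batch count and
# start the streaming context.
start_count_stream <- function(sc, ssc, host = "localhost", port = 9999L) {
  # Defaults are not applied through invoke(), so the storage level that
  # Scala would fill in has to be passed explicitly.
  storage <- invoke_static(
    sc, "org.apache.spark.storage.StorageLevel", "MEMORY_AND_DISK"
  )

  ssc %>%
    invoke("socketTextStream", host, port, storage) %>%
    invoke("count") %>%   # number of lines received in each batch
    invoke("print")       # print each batch's count to the Spark log

  invoke(ssc, "start")
  invisible(ssc)
}
```

In the Spark Streaming guide, the other end of the socket is a netcat server started with `nc -lk 9999`; lines typed there show up as counts in the Spark log once the context has started.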

And for this, I'm going to use the terminal feature, which is fully available in RStudio 1.1, currently in preview. So, think about this tab as just a terminal that you would use outside RStudio. It's a great feature. I really love it. So, what we're doing here is connecting to the Spark stream on the port that we told Spark to use. And we're just going to send some data. Let's just send A, B, C. So, that's three words. And what's happening under the covers is that Spark is getting this information and printing the line count as output.

So, let's just try to take a quick look. There's going to be a lot of noise here. But what we can see in the log is that Spark streaming is doing what we would expect. It's basically printing the number of words that we sent through the stream. And again, this webinar is not really about Spark streaming, but I thought it would be great to give it a shot and see how far we can get with simple Spark invokes to get some Spark streaming going on this webinar.

Using Scala code in sparklyr extensions

So, so far it has been great that we've used only R code to get the basics of Spark streaming working. However, there's going to be more advanced scenarios that you want to address. And for instance, in this particular case, you know, like there's things, there's additional things that their blog post is doing, like creating a flat map out of splitting the words. So, rather than only counting the words, what they're doing is they're splitting each word and then counting the words per line. And you can look throughout the post and you're going to find that there's more advanced functionality that you would want to use in Scala directly.

And for those kinds of cases, what I want to do is get back to our presentation and talk about, first of all, how to map data types between R and Scala, even while you're using only R code. And then we're going to take a look at how to use Scala code directly in an R package.

This is a table that I recommend taking a look at once you start making use of `invoke` and related functions. You can find this table in our extensions page under data types. Basically, what this table explains is how to map data types from R into Scala. So, I mean, some things are pretty obvious, right? If you want to pass an integer to Scala, well, that's just an integer. If you want to pass a character, well, that just becomes a string in Scala. But a couple of other ones could be a little bit less intuitive.

So, for instance, a list in R becomes an array in Scala. And if you had to create a map, or use a function that takes a map, you would want to use an environment from R. The catch-all object that you would use with sparklyr extensions is called a `jobj`, which is a short name for Java object. It's basically a reference to an object. So, whenever there's an object that you need to pass, in the same way that we did with the duration object, you would want to create a `jobj` and pass it directly to Scala as an object. But definitely, once you start getting immersed in this topic, it's worth taking a look at this table, especially to help you troubleshoot calls when you can't find the right method to call.
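A few of these mappings can be checked against standard JVM classes. This is a small sketch assuming a local Spark installation; it covers only the character, integer, and object-reference cases, not lists or environments.

```r
library(sparklyr)

sc <- spark_connect(master = "local")

# character -> String: Integer.parseInt(String) receives an R character.
parsed <- invoke_static(sc, "java.lang.Integer", "parseInt", "42")

# integer -> Int: Integer.toBinaryString(int) receives an R integer (note the L).
binary <- invoke_static(sc, "java.lang.Integer", "toBinaryString", 5L)

# Anything that is not a simple value comes back as a reference to a JVM
# object, which can then be passed as an argument to other invoke calls.
ctx <- spark_context(sc)
class(ctx)
```

When a call fails with a "method not found" style error, comparing the R types of the arguments against the table is usually the first thing to check, for example `5` (double) versus `5L` (integer).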

So, this is the last section on how to develop code for sparklyr. What we're gonna cover here, just briefly, is how to use Scala code from within sparklyr and how to package it together. There are two ways to get started with writing Scala code for use from R, but let's first take a look at a project called sparkhello. It currently lives under my repo, mostly because it's a very simple project. It doesn't have a lot of interesting things in it, except that it showcases how to use Scala code from within sparklyr.

And to give you an overview of what your goal is while using Scala code in sparklyr: basically, a function called `compile_package_jars` compiles the Scala code that you have in your package into jars that are version dependent and become part of your package, and sparklyr uses them during connection. So, basically, Scala code runs on the JVM once compiled and is packaged as jar files.

This is pretty technical for those of you that might not be familiar with Java programming. But it's honestly pretty straightforward. You can think of a jar as a zip file that contains compiled code from Scala. And sparklyr does all the magic when you call `compile_package_jars`. All you need to worry about is creating the Scala code that you want to use to extend sparklyr.
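The way compiled jars reach Spark at connection time can be sketched with sparklyr's `spark_dependency()` and `register_extension()`. The package name `sparkhello` and the jar naming pattern under `inst/java` are assumptions modeled on a typical extension layout.

```r
# R/dependencies.R in a hypothetical extension package named "sparkhello"

# sparklyr calls this while connecting; it returns the jars the package
# needs for the Spark and Scala versions of the cluster.
spark_dependencies <- function(spark_version, scala_version, ...) {
  jar_name <- sprintf(
    "java/sparkhello-%s-%s.jar",
    as.character(spark_version),
    as.character(scala_version)
  )
  sparklyr::spark_dependency(
    jars = system.file(jar_name, package = "sparkhello")
  )
}

# Register the package so that sparklyr asks it for dependencies.
.onLoad <- function(libname, pkgname) {
  sparklyr::register_extension(pkgname)
}
```

Running `sparklyr::compile_package_jars()` from the package root is the step that produces those jar files; it needs a Scala compiler to be available on the machine.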

Creating a sparklyr extension package

Now, to make your life easier, what I'm gonna briefly cover is how to create these kind of packages. So, there's really two ways of creating or there's two motivations for creating an R package, I believe, on the sparklyr ecosystem. One is to share it with your colleagues and your organization. And the other one is to share it with CRAN. You could argue that there's a third argument for creating a sparklyr package, which is for keeping your code structured while you're developing functionality. But definitely the first two make sense.

I'm gonna cover that while we talk about running Scala code, because there's a really nice way for you to get started creating sparklyr extensions. While you have been able to create a sparklyr extension since version 0.4, what I'm gonna show you is a little feature that we have under development in the development version of sparklyr. This means that you would want to install the development version of sparklyr by running `devtools::install_github("rstudio/sparklyr")`. What this is gonna give you is a new project type inside RStudio that can get you up and running with your sparklyr extension and Spark code really fast. So, let's take a look at that and also talk about compiling Scala code while we do this.

All right. So, first of all, I want to make sure that everyone is aware that we're using sparklyr .709000, which is an unreleased version of sparklyr. The latest version of sparklyr that you would find in CRAN is 0.6. So, in order to get this functionality, you would have to install the latest development version of sparklyr. And then you can go to File, New Project. We don't need to save this. And we're gonna create a new directory. And if you scroll all the way to the bottom, you're gonna see that there's a new "R Package using sparklyr" entry. That's the one you want. And then you want to give it a package name, the same way that you would when you create any R package. So, I'm gonna just call this one sparklyrtest1.

And that's pretty much it. What you would get out of this, once you create the package, is we're gonna give you basically a template with the things that you want to use when you create a sparklyr extension. Well, first of all, we're giving you a readme. We're giving you a readme file with some instructions on how to get and compile Scala code for sparklyr extensions or use the R package itself. So, we're just gonna follow the instructions here. But before doing that, I want to show you that there's two components on a sparklyr extension. The first one is the R code. And this R code can be anything you would use on a R extension, right?

So, there's nothing really particular about the R code that we have in the R files, except that at times you would want to use sparklyr as your main library for building this extension and Spark-related functionality. So, in this case, what we're doing in this function called `sparklyrtest1_hello` is calling a Spark function. Sorry, a Scala function running in Spark. The class name is `sparklyrtest1.Main`, which happens to be the class that we've pre-created for you. And the method that we're calling is called `hello`.

So, now that we're switching to the Scala class, you can see that there's a very, very small amount of Scala code here. Basically, what it's doing is saying, hey, whenever you call me, return hello world from Scala. And that's pretty much it. If you had to use, for instance, advanced Spark Streaming, you would add import directives here and use all the functionality that you would expect from running Scala code in Spark.
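The R side of that template can be sketched as a one-line wrapper around `invoke_static()`. The names `sparklyrtest1_hello`, `sparklyrtest1.Main`, and `hello` follow the spoken description; their exact spelling in the generated template, and the Scala shown in the comment, are assumptions.

```r
library(sparklyr)

# Scala side, paraphrased from the description (kept as a comment):
#   package sparklyrtest1
#   object Main {
#     def hello(): String = "Hello world from Scala"
#   }

# R wrapper exported by the extension package: calls the static `hello`
# method on the compiled Scala class through the Spark connection.
sparklyrtest1_hello <- function(sc) {
  invoke_static(sc, "sparklyrtest1.Main", "hello")
}
```

Once the jars are compiled and the package is rebuilt and attached before `spark_connect()`, calling `sparklyrtest1_hello(sc)` would return the string produced by the Scala method.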

But let's just complete the steps in the readme file. So, first of all, let's build the package. So, I'm hitting Build. It just finished building. And then what we want to do is build the jars. As you can see here on the right side, we only have the Java and R directories. But once we run `sparklyr::compile_package_jars()`, it's basically getting the Scala code out of your package and creating the correct set of jars that your extension would need. And again, this is mostly transparent to you, but you definitely want to at least be aware of it.

So, here they are. And then we want to rebuild to make sure that these jars become part of our package. And that's pretty much it. We're done building the extension. Now we're going to use it. We're going to load our library, sparklyrtest1. We're going to load sparklyr, connect, and run our helper function. And that's pretty much it. Honestly, you'll find yourself spending most of the work on Spark or Scala. sparklyr will just get you up and running and execute your Scala code with ease.

Something interesting to notice is that a lot of the functionality that we've developed in sparklyr actually started out as extensions, and then we integrated it into sparklyr, mostly because it's really a great framework to get you started and componentize your functionality. All right. So, that covers our packages.

Resources and closing

In closing, before we get into questions, I just want to give you a few resources that you will probably want to keep in mind while you're developing extensions. The first one is the spark.rstudio.com website. The extensions section would be a great place to start, but also just to learn more about Spark, RStudio, and sparklyr. The resources that I cover in this webinar are going to be posted on our webinars website. That would be under rstudio.com/resources/webinars. Find this webinar, and in a couple of days you should have access to this presentation and also the notebook that we used.

If you hit any issues, please follow us on github.com/rstudio/sparklyr. We encourage people to try Stack Overflow for generic questions, but if you're really stuck with something or if you have something that looks like a sparklyr issue, feel free to open it there and we'll take a look at it. And finally, a resource particular to this presentation is the Spark documentation. You will find yourself working a lot with the Scala API if you choose to use Scala code to create your sparklyr extension. So it's definitely worth taking a look at this website. And that's pretty much it. I'm going to switch back to questions.

Q&A

And yeah, I see three questions here. Let's see. So first question: in the Scala code for streaming, there are defaults. Do these defaults need to be specified in the R code, or is this being demonstrated as a best practice? So today they need to be specified. It's not a demonstration. They just need to be explicitly listed in your sparklyr code. So definitely, when you're dealing with defaults, it's really handy to have the Spark documentation at hand while writing extensions, mostly because you can get a full sense of how the method is defined. So yes, take a look at the defaults and pass them explicitly.

Honestly, I do realize that this could be a little bit annoying at times. So what I'm going to do after this webinar is open a GitHub issue to make sure that we track this feature request. I think it would be great if we could just call invoke without passing the defaults and remove some of the burden from package builders like myself of having to figure out what the default is. But yeah, as of today, you need to specify the default. It's honestly not that bad. And at times it's also a bit of a good practice to pass it. But yes, it's something that you need to specify.
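To illustrate passing a default explicitly, here is a minimal sketch that uses a different method from the streaming one in the question: `SparkContext.textFile`, whose Scala signature gives its second parameter, `minPartitions`, a default value. The helper name `count_lines` and its `min_partitions` argument are illustrative choices.

```r
# Sketch: count the lines of a text file through the Scala SparkContext API.
count_lines <- function(sc, path, min_partitions = 1L) {
  # In Scala, `minPartitions` has a default, but when calling through invoke()
  # the value has to be supplied explicitly, as an integer.
  rdd <- sparklyr::invoke(
    sparklyr::spark_context(sc), "textFile", path, as.integer(min_partitions)
  )
  sparklyr::invoke(rdd, "count")
}
```

Exposing the Scala default as an R default argument keeps the call site short while still passing every parameter to `invoke()`.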

Next question: "Hi, thanks. Looks really powerful. Is there a way of reading binary files, I'm thinking of images, into sparklyr? Would I need to create an extension?" Yes. So you would have to create an extension. sparklyr doesn't come with support for loading images by default. You could work around it in a few ways, doing a couple of hacky things, but you don't want to do that. So I think what you want to look into is using an extension. It's definitely not an extension that I've seen anyone developing in the sparklyr ecosystem. I think it would be pretty great, especially now that topics like deep learning and image classification make it definitely worth taking a look at.

My initial recommendation would be to first take a look at how Spark supports images in its framework. And I'm hopeful that we could actually follow a lot of those practices even by only using R code. As you can see, we were able to get some of the basics of Spark streaming working, and connecting to data sources is also pretty easy from R code. But yeah, definitely take a look at what Spark provides. If you want to, feel free to open a GitHub issue on the sparklyr repo as a question and I'll be happy to take a look. I think it's a really interesting topic, and it's worth considering building something around this for processing images in sparklyr.

And we have one last question. "Perhaps I missed it, but if I have a jar or a set of jars, are those jars included in the package?" Yeah, that's a great question. So the answer is yes, we include those jars in your package. So if you were to publish this to CRAN or share it with your colleagues, by default we save those jars in the inst/java folder, which means that they're going to become available when you install your package and when you connect to Spark using sparklyr.

Extending a bit on your question, there are other, more advanced use cases where you would want to include your own jars. So suppose that either your company or you as a data scientist find that there's a really interesting library written in Java or Scala that is provided as a jar. One of the things that you could also do with sparklyr and spark_compile would be to include this custom jar in the inst/java directory. And once your package compiles, you would be able to make use of this functionality within Spark. So yeah, it's definitely good to know that these jars can actually be included. We include them by default, and you can also include additional jars in the package.
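As a hedged sketch of how an extension package can tell sparklyr about its jars, the package can define a `spark_dependencies()` function and register itself when it loads. The package name `sparklyrtest1` and the file `custom-library.jar` are hypothetical. `spark_dependency()` and `register_extension()` are sparklyr functions.

```r
# Sketch of how an extension can declare its jars. The package name
# "sparklyrtest1" and the file "custom-library.jar" are hypothetical.
spark_dependencies <- function(spark_version, scala_version, ...) {
  sparklyr::spark_dependency(
    jars = c(
      # Jar compiled from the package's own Scala code, one per Spark/Scala version.
      # Files under inst/java are installed under java/ in the package.
      system.file(
        sprintf("java/sparklyrtest1-%s-%s.jar", spark_version, scala_version),
        package = "sparklyrtest1"
      ),
      # An additional third-party jar shipped alongside it (hypothetical file name).
      system.file("java/custom-library.jar", package = "sparklyrtest1")
    )
  )
}

# Register the extension on load so sparklyr picks up the jars when connecting.
.onLoad <- function(libname, pkgname) {
  sparklyr::register_extension(pkgname)
}
```

Because the jars are resolved with `system.file()`, they are found wherever the package ends up installed.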

Okay. We have a few other questions coming in, so feel free to post them. We still have an extra five minutes, so we can cover as many as possible. Let's see. So the question is, how would you manage conflicting jar versions between different extensions? Oh, conflicting jar versions between different extensions. This is actually a tough one.

So let me just give you some background. First of all, when we think of conflicting versions, the way that we solved this problem for sparklyr was by versioning our jars. So we basically compile multiple versions, which you can actually see in RStudio in the java folder. If you were to have conflicting jar versions between extensions, honestly, it's something that we haven't hit, mostly because a lot of the extensions use Spark resources and there's no need to add additional jars. Some of the extensions do use jars, but it's a bit of a corner case that we haven't hit so far.

Obviously, one way of unblocking yourself would be to load one extension at a time. And honestly, this is a bit of a problem that we would hit in the Spark ecosystem in general. So I think my answer would be: try not to do it, and avoid it. If you're using specific Spark versions to build your jars, and that's the way that we recommend you do it with sparklyr, we shouldn't hit this issue for the most part. However, there could definitely be cases where you hit this. That said, I'm not that sure that they would be that different from submitting jars to Spark. So I think we would have to take a broader step back and figure out how to deal with these in the Spark community.

I think in most cases people use Spark per application. And you can partition your analysis, so the functionality that you use in your running Spark application wouldn't affect other running applications on Spark. So in that way, they're componentized. But yeah, if you find yourself with two extensions that you want to use and you submit both of them to Spark, I think this is a bit of a broader problem in the Spark community that we have to address. So let us know if you're hitting this. Obviously, happy to help, but it does feel a little bit like a corner case.

And last question. Oh, not a question, but: "I'd be interested to see a DESCRIPTION file tag describing Spark version requirements for Spark-based packages." Yes, that makes a lot of sense. So the way that we solve it in sparklyr is by including the SystemRequirements tag, and I might have a typo here. And then we say something like Spark 1.6, Spark 2.0 or 2.x, whatever. This tag was not really meant to track Spark dependencies, obviously, and it's also not a system dependency per se. But the suggestion that we got from the CRAN maintainers is to use the SystemRequirements tag as a way of enumerating which versions are supported. But feedback taken. I think we should definitely include a tag, or a way for a sparklyr extension to proactively tell us what the supported version is.