Web API Updates

There is a vast amount of data available on the web, and much of it is accessible via APIs. Sources ranging from the US Census Bureau to the NOAA National Weather Service to S3 buckets and online databases can be reached programmatically from within R. This webinar will cover the basics, and some of the pitfalls, of calling web APIs from within R, including read/write operations, headers, authentication, error handling, and response parsing.


Transcript

This transcript was generated automatically and may contain errors.

Thank you, Anne, for that intro. I appreciate it. As she mentioned, I'm an engineer at RStudio, and this is going to be an expanded version of the talk I gave at rstudio::conf so I can provide more detail on working with APIs in R. We will begin with an overview, a quick review of the Web API basics, and then move on to tools for accessing those APIs. I have a couple of examples prepared and a practical application that we have built in-house using these techniques. And at the end, I'll give you some resources for finding out more information if you're interested in expanding your knowledge in this area.

API basics

So API basics. There are a lot of data accessible over the Internet these days and a lot of it is made available via APIs, which are application programming interfaces. These allow programs to talk to each other based on a communication contract. If you ask me a question in a way I understand, I will give you an answer. So there are two important parts to HTTP communication over the Web. There's the request, which is data sent to the server from the client, and then the response, which is the data that the server sends back to that client. Now this is a very oversimplified view of the client-server communication, but the basics of it are ask a question and get a response.

And this is predicated on the fact that the client and server need to understand each other's language, so to speak. The client can only send a request to the server that the server knows how to handle, and then the server will send that response back to the client, and the client will need to know how to parse that response to get the data out based on the question that was asked. Web APIs usually provide read and/or write access to data stores, and they allow you to access data on an ongoing basis. This is significant since it allows you to write scripts that poll an API to get the most up-to-date data. Not only will your analyses be current, but when the data changes, your scripts don't have to. Depending on how you design that script, data frames and plots will update automatically when your data changes without you having to download a new CSV or import data manually.

So as an example of this request and response, I've put down here a curl request. curl is a utility in the Unix world that allows you to make HTTP requests. In this case, I'm calling the OMDb API. This is the Open Movie Database API, and I'm giving it a request with query parameters, t for title and r for response format. So in this case, I'm saying, OMDb, give me the data for the title, the movie named Clue, and send it back to me in JSON format. The server understands this request because you've put it into the format that it expects, and so it sends a response that contains the data you asked for, the title, the year, the rating, et cetera. Again, this is a very simplistic example, but it gives you an idea of how simple it is to ask a question and receive an answer and pull that data into the scripts that you're writing every day.
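For readers who would rather stay in R than use curl, here is a minimal sketch of how the same request URL could be assembled with httr. The base URL, the `apikey` parameter, and the `OMDB_API_KEY` environment variable are assumptions that are not in the talk; the service may require a key, so check its documentation.

```r
library(httr)

# Assumed base URL for the OMDb API; confirm it in the API documentation.
omdb_base <- "http://www.omdbapi.com/"

# t = title to look up, r = response format, as described in the talk.
# apikey is an assumption: a placeholder read from a hypothetical
# environment variable, for the case where the service requires a key.
params <- list(t = "Clue", r = "json", apikey = Sys.getenv("OMDB_API_KEY"))

# Build the full request URL without sending anything yet.
request_url <- modify_url(omdb_base, query = params)
request_url

# Sending the request would then look like this (not run here):
# resp <- GET(request_url)
# content(resp, as = "text", encoding = "UTF-8")
```

Building the URL separately from sending it makes it easy to print and check the request before it goes to the server.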

Web APIs are organized into resources that are often in a particular hierarchy. In this example, there's a map that if you follow down from the top, you can get information about accounts, a particular account by its ID, the lists that a particular account has, the ID of those lists, the campaigns, the subscribers, the web forms, et cetera. So these are often organized in maps or webs of information, and if you know how to get to those different levels of information, you can construct a URL and ask for very specific things all the way down at the bottom, like clicks and tracked events in this case.
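As a small illustration of walking down a resource hierarchy like this one, the sketch below builds a URL for the campaigns under one list of one account. The base URL, the IDs, and the exact path layout are made up for the example and do not refer to a real service.

```r
# Hypothetical resource hierarchy, loosely following the map described above:
# accounts -> lists -> campaigns. The base URL and IDs are invented.
base_url   <- "https://api.example.com/1.0"
account_id <- 123
list_id    <- 456

# Each level of the hierarchy becomes one more segment of the path.
campaigns_url <- paste(base_url, "accounts", account_id,
                       "lists", list_id, "campaigns", sep = "/")
campaigns_url
```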

Now that presumes that you understand a little bit about the API that you're trying to use. Fortunately, many of them, I should say most of them, have decent documentation. This example is from the Star Wars API. So in this case, you can see across the left the kinds of information you can learn from this documentation. There's a getting started guide here on the first page. But the things that you need to understand are the resources towards the bottom, so that you know what you can get and how to get it. For example, this being the Star Wars API, you can get information about people, films, starships, planets, vehicles, et cetera. And this particular documentation is very good, so it'll tell you exactly what URL to use to get to that information, and it'll give you an example response as well. So it will tell you how to ask the question and what format the answer will be in, so that you can then parse it appropriately in your script.

The important part here is that you need to learn this API contract in order to use it effectively. You need to know how to ask the question and how the answer will be sent back to you. Fortunately, again, these documentation examples are often quite good, because they want you to be able to use it successfully as well.

Tools for accessing APIs in R

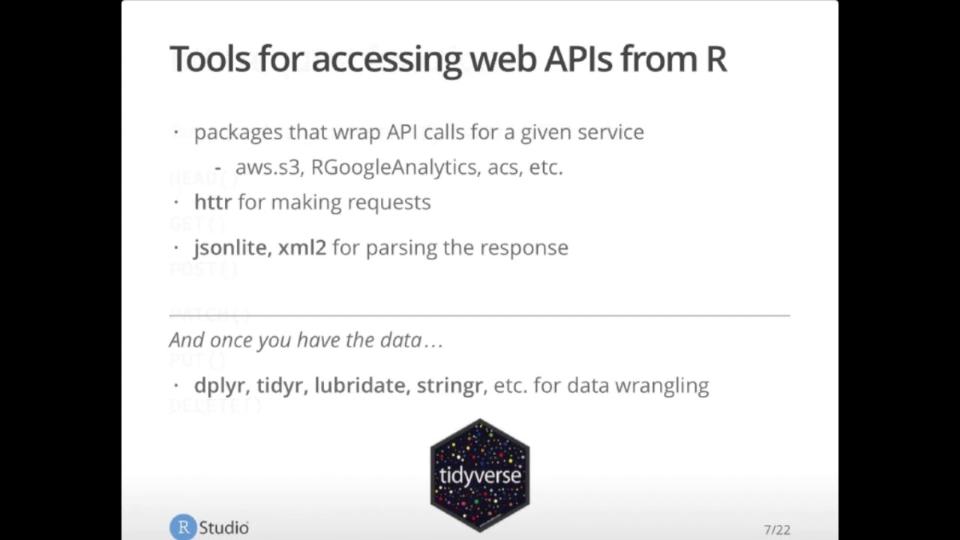

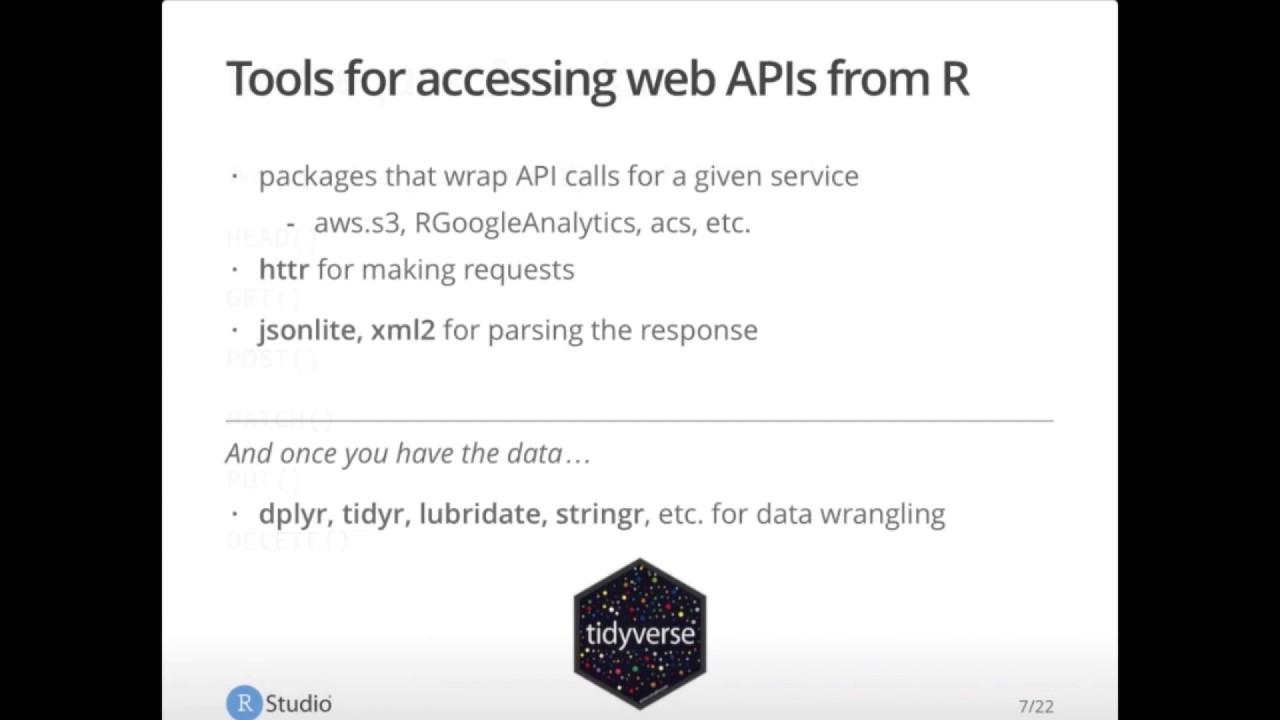

So to access these APIs and pull this information into R, you have a couple of different options. In some cases, people have already written packages that will wrap those API calls for a given service. The examples I've provided here start with aws.s3. This is for S3 buckets in the Amazon Web Services universe. RGoogleAnalytics will get you information about Google Analytics based on the ID code that you have for your website. acs is for the American census information, et cetera. So some people have already wrapped these APIs into packages that are very easy to use. If you can use them, by all means do. They make your life a lot easier. You do need to be aware that the people writing them may not have the same goals as you do. So they've written it to solve their problem and then made it public. But if you have other questions that you need to ask, or if there are particular endpoints that aren't covered in that package, you may need to write that yourself. Or there could be no package for the data source that you're trying to reach, in which case you'll write it yourself with some other tools.

I recommend httr, or "hitter," for making the requests, and then jsonlite or xml2 for parsing the response. The most common response formats of API calls are JSON and XML, and these two packages are extremely good at parsing that data for you. Once you have the data in your script, you can wrangle it the way that you normally do, using the various packages in the tidyverse to get your data into an orderly rectangle so that you can use it for your analyses and for your plots.

So httr request functions wrap HTTP verbs, and fortunately they match them, so they're very easy to figure out. GET is the one you'll probably be using most commonly if you're interested in getting information from the web into your scripts. POST is to write back to a data store of some kind. PATCH and PUT are for updating. DELETE is fairly obvious. And HEAD is identical to GET but without the body. So in this case you'd just be getting the metadata around the request without getting the body data. This can be useful as you're developing, to understand everything around the data itself in a much cheaper call.
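To make the HEAD versus GET distinction concrete, here is a minimal sketch of a helper that asks only for the metadata. The helper name `peek` and the URL in the usage comment are hypothetical, and some servers may not answer HEAD requests at all.

```r
library(httr)

# Return only the response metadata for a URL, using HEAD instead of GET.
# HEAD asks the server for the headers a GET would return, without the body.
peek <- function(url) {
  resp <- HEAD(url)
  list(
    status  = status_code(resp),  # numeric HTTP status
    headers = headers(resp)       # named list of response headers
  )
}

# Usage (not run here because it needs network access; the URL is an assumption):
# peek("https://swapi.dev/api/planets/1/")
```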

Making a request with httr

So to make a request, you first load httr with the library function, then call GET with the URL. In this case I am using the Star Wars API. I'm calling planets with ID 1. So what I'm trying to do here is get information about planet number 1 in the Star Wars API database. And this will give you a response object. If you print that out, you get some really useful information: the actual URL that was used after any redirects, the HTTP status, the file or content type, and the size of that response.

So you can use various helpers in httr to dig into the response object. For example, the status_code helper will pull out just the code itself. 200 is what you want to see. That means OK, successful. Anything else may be a problem that you would need to handle in your code. Using the headers helper will get you all the metadata that came through the header of the response: date, content type, connection, transfer encoding, et cetera. There's quite a bit there. And then what most people are interested in is the body itself. This is the content of the response. And so you would use the content function from httr to pull that out. And here I'm just looking at the structure of it. So you can see that it is a list and the various amounts of data that come back. You'll see some of it is nested. Some of it is URLs. So you're going to need to understand all this in order to handle it appropriately in your scripts.
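The request and the helpers just described can be sketched together as one small function. The function name `inspect_response` and the URL in the usage comment are assumptions; the transcript does not record the exact address used on the slide.

```r
library(httr)

# Fetch one resource and collect the pieces of the response discussed above.
inspect_response <- function(url) {
  resp <- GET(url)
  list(
    url    = resp$url,                         # final URL after any redirects
    status = status_code(resp),                # 200 means OK
    type   = headers(resp)[["content-type"]],  # header lookup ignores case
    body   = content(resp)                     # parsed body; a nested list for JSON
  )
}

# Usage (not run here; the URL is an assumption about where the API is hosted):
# planet <- inspect_response("https://swapi.dev/api/planets/1/")
# planet$status
# str(planet$body)
```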

So there are three main parts that you'll probably be most interested in in your HTTP response. The first is status. You can get the full status information with the http_status function. It will tell you everything that it possibly can about the status. In most cases, you're only interested in the code. So the status_code object will come back with just the number, and you can make decisions in your code based on that number in most cases. You can automatically throw a warning or raise an error if a request does not succeed, if it was not 200, with the warn_for_status and stop_for_status helpers in the httr package. I highly recommend these. They convert HTTP errors into R errors, making them much easier to handle in your script. And they'll either warn or stop, just as their names imply.
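Because stop_for_status raises an ordinary R error, it can be combined with tryCatch. The sketch below is one possible way to do that; the helper name `safe_get` and the choice to return NULL on failure are illustrative, not something shown in the talk.

```r
library(httr)

# Fetch a URL and turn HTTP failures into R conditions that can be handled.
safe_get <- function(url) {
  tryCatch(
    {
      resp <- GET(url)
      stop_for_status(resp)  # raises an R error when the request failed
      resp
    },
    error = function(e) {
      # Handle the failure however the script needs; here we warn and
      # return NULL so the caller can decide what to do next.
      warning("Request failed: ", conditionMessage(e), call. = FALSE)
      NULL
    }
  )
}

# http_status() also accepts a bare status code, which is handy for
# looking up what a code means while developing.
http_status(404L)$message
```

Swapping stop_for_status for warn_for_status inside the helper would let the script carry on with the response instead of abandoning it.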


The second of the components that you'll want to handle is the headers themselves: date, content type, connection, allow, things like that. There can be custom headers in here. It really depends on the API that you're working with. The one that you'll probably be using most often is the content type. Most APIs have a pretty consistent content type in their response. In this case, they're using JSON. So you may only have to worry about this once. But if you're calling an unfamiliar API or you're calling an API that changes frequently, it may behoove you to take a look at that content type to make sure that what you're parsing in your script matches what you're being sent. You can also get cookies, if you're interested. It really depends on your API or what you're trying to get out of it. But the thing, again, that most people are interested in is the body. You use the content function in httr to get the response body. In this case, you pass it your object and a modifier. So in this case, I want a character vector, so I'm going to pass in the word text. And it'll give me back all of that information that we just saw earlier in the structure output. If you have non-text responses, you can get the raw content with the raw modifier. Or you can give it the parsed modifier. httr has some default parsers for common file types, including JSON, XML, and a couple of others. This can be really useful if you want a quick and dirty look at it. But honestly, I tend to want to pick the parser and do the parsing explicitly. And so I don't tend to use this very often. But it's there if you want it.
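The three content modifiers can be compared side by side with a small helper like the following. The function name `body_forms` is hypothetical, and the parsed form relies on httr's built-in parsers, which for JSON need the jsonlite package to be installed.

```r
library(httr)

# Return the content type and the body of a response in the three forms
# described above. `resp` is a response object returned by GET().
body_forms <- function(resp) {
  list(
    type   = http_type(resp),                                 # e.g. "application/json"
    text   = content(resp, as = "text", encoding = "UTF-8"),  # character vector
    raw    = content(resp, as = "raw"),                       # raw bytes
    parsed = content(resp, as = "parsed")                     # httr's default parser
  )
}

# Usage (not run here; the URL is an assumption):
# resp  <- GET("https://swapi.dev/api/planets/1/")
# forms <- body_forms(resp)
# forms$type
# substr(forms$text, 1, 80)
```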

Star Wars API examples

So let's turn now to some examples. Here I've got some examples from the Star Wars universe. Let's see if I can make that a little bigger for you. Great. So I'm going to be working with the Star Wars API in these examples. And we're just going to walk through some of the basics of how to deal with APIs in R. First thing I'm going to do is pull in httr, jsonlite, because I know that the response is going to be in JSON, and magrittr for piping, to make it easier to read the code as I'm passing this data around. And our first goal is to get the data for the planet Alderaan.

So the components of this request in httr are the same as they are in HTTP. You need a verb or a method, in this case GET, a URL endpoint to hit, in this case we're looking for planets, and a parameter. Here we're going to use search. Not all API endpoints need parameters, but in this case I'm explicitly going to do a search. And in this API, the key-value pair is search and then the text that you want to search on. So if I run this, you'll see that the httr function GET is in use. It's very straightforward. It's very readable. And I can take a look at that in a second. But I also wanted to point out here that there are different ways to do your parameters. If you have many parameters, if you've got six parameters, this URL gets crazy long and hard to read. So there's another format you can use with the GET function where you pass in a query list and you give it your key-value pairs in that list. We don't need to do that here since I've only got one, but it's worth pointing out, especially if you're looking for very detailed data that has a lot of parameters in the search query or in the set of parameters you're sending to the API.
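The two ways of supplying parameters can be sketched as follows. The endpoint URL is an assumption; `modify_url` is used here only to show, without touching the network, the kind of URL a query list produces.

```r
library(httr)

# Assumed endpoint for the planets resource.
planets_url <- "https://swapi.dev/api/planets/"

# Option 1: the parameter written directly into the URL.
url_inline <- paste0(planets_url, "?search=Alderaan")

# Option 2: parameters as a named list, which stays readable as they grow.
# modify_url() shows the URL that such a query list produces.
url_from_list <- modify_url(planets_url, query = list(search = "Alderaan"))
url_from_list

# Sending the request with a query list (not run here):
# resp <- GET(planets_url, query = list(search = "Alderaan"))
# stop_for_status(resp)
```

With six parameters, the list form keeps one key-value pair per line, which is easier to review than one long URL string.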

So now that I have that data, I'm going to take a look at the names in the response object. And I've got URL, status code, headers, cookies, the content itself, the request, and things like that. So I'm getting back a lot of information from this API, which is great. I'm going to take a look specifically at the status code. This is a 200. Excellent. That means it succeeded. And then I'm going to take a look at the headers, specifically the content type, and it's JSON, which is what we expect. Again, if you're looking at an API that you don't know very well, these fields can help you quite a bit in terms of deciding how to handle the data that comes back.

Now if I want the text of the response, I'm going to use the content function in httr. Pass it the object I just created. Tell it to give me text and specify the encoding. httr will default to UTF-8, but it throws a little warning, so I usually just go ahead and put it in there explicitly. If you take a look at the resulting object, it's giving you a count, a next, a previous, and the results themselves. And this contains the data that you're interested in, for the most part. But it's not very easy to read, and it's not very easy to get that data back out. It's not very R formatted at the moment. So we're going to need to parse this. You can do so with httr, as I mentioned earlier, by passing that into the content function with the parsed modifier. I'm going to go ahead and run that, and then I'll take a look at it. It's easier to read than this is up here, but it's not necessarily easy to get to some of the information in the results themselves. So the count is pretty straightforward. And the results, if you take a look at the structure, are what we would expect. It all looks fine. You've got some nested elements here under residents and films, and those are actual links to those, which makes it really, really easy to get that data programmatically. So it's all there, and it's all usable, and it's great. But in order to access this stuff, it's a little bit ugly in terms of this code. It's not the end of the world; I've seen worse. But it's not always that easy to figure out how that structure works.

So I use the jsonlite package to parse this instead. So I'm going to take that text content that we had up here. You can see that down here in the console. I'm going to pass that to the fromJSON function in jsonlite. I just ran that code, and then we're going to look at the object. It, again, gives you the count and the next and the previous, and we'll talk about that in a second. But the results are much easier to handle, in my opinion. They're certainly much easier to handle than this. So now that you've got that parsed JSON content, I'm going to look specifically at the results and get the names. And now it's a standard rectangular object that we can deal with a lot more easily. And when you want to pull that data out, this code is a lot easier to work with, a lot easier to read, in my opinion, than this code up here. So I do tend to use jsonlite just to make my life easier, and also because when I come back to this code in a couple of months, I don't necessarily remember how I got that, but it's really easy to figure out how I got that. So jsonlite, big fan.
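To show why the parsed result is easier to work with, here is a minimal sketch that runs fromJSON on a hand-written, trimmed-down string shaped like the response described above. The sample text is illustrative only; the real response has many more fields.

```r
library(jsonlite)

# A hand-written, trimmed-down sample shaped like the response described
# above. The values are illustrative, not a copy of the real API output.
text_content <- '{
  "count": 1,
  "next": null,
  "previous": null,
  "results": [
    {"name": "Alderaan", "climate": "temperate",
     "films": ["film-url-1", "film-url-2"]}
  ]
}'

parsed <- fromJSON(text_content)

parsed$count           # how many records matched
names(parsed$results)  # results is a data frame, so these are column names
parsed$results$name    # pull a column out by name
```

Nested arrays such as films end up as list columns in the data frame, so each row keeps its own vector of links.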

So I do this all the time. I do this every day, 43,000 times a day, and I don't want to rewrite the same code. So I wrote myself a little helper function here, and all it does is call the content function on the response and tell it to bring back the text, encoded. If it's empty, warn. If it's not, run it through fromJSON. So we can see how that looks here. In this case, I'm going to get all of the planets and stop_for_status. Again, we talked about that a little bit earlier. This is going to stop the script if I get anything other than a 200. So I'll go ahead and run that, I'll get all the planets, and then I'll JSON parse them. And now I have JSON planets. Again, we have a count, the next, the previous, and the results. Every API is going to differ a little bit. In this case, this API is telling me how many results came back, or how many results are available, rather. That's the count. Next is going to be a link to the next page of results. Previous is going to be the link to the previous page of results. And results is the data itself.
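A helper along the lines described might look like the sketch below. The name `json_parse`, the warning text, and the decision to return NULL for an empty body are assumptions; the transcript only says that the helper warns when the text is empty and otherwise calls fromJSON.

```r
library(httr)
library(jsonlite)

# Pull the body out of a response as UTF-8 text and parse it as JSON.
json_parse <- function(resp) {
  text <- content(resp, as = "text", encoding = "UTF-8")
  if (identical(text, "")) {
    # Nothing came back, so there is nothing to parse.
    warning("No output to parse.", call. = FALSE)
    return(NULL)
  }
  fromJSON(text)
}

# Usage (not run here; the URL is an assumption):
# resp <- GET("https://swapi.dev/api/planets/")
# stop_for_status(resp)
# json_planets <- json_parse(resp)
# json_planets$count          # how many planets are available in total
# nrow(json_planets$results)  # how many came back on this page
```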

So you'll notice here I'm going to run the JSON planets count, and there's 61 planets in this data set. But if you look at the length of the results that I just got back, it's only 10. This API pages, and that is not uncommon. You'll get your results back in pages rather than the whole shebang for performance reasons. You can imagine asking an API to send you a million results. It's not going to want to give those to you all at once because it would take down their servers. So this API, like other APIs, does not do that. But you'll see if you look at the next object, it gives you the link for the next page. So in theory, you could page through these, get all of your results into a single data frame, and that's what we're going to do down here, actually.

So that was the next page. I'm also going to take a look at the results themselves. So here I take the JSON planets, look at the results, and then get the names of the planets. And so we'll get these first 10 planets that are in the database. So if I want to get the next page of results, it's really easy just to put the object itself as the target of the GET function. Again, stopping for status. You don't even have to store it. All you have to do is just pass that in, and it will go get that next page at this URL that it already has in that object. So if we run this, the next page will... Actually, I'm going to parse it as well. Excuse me. See, parse it, and then I'm going to take those results and get the name. This is the next 10 planets in the database. So now I've got the first page, that is swapi_planets, and now I've got the parsed next page that has the next set of data. So if your API results come back paged like this, you can write a loop to follow the next URL until there are no more pages and bind all the data into a single data frame, and have one big data frame full of all this data. It's not always what you want to do, but it's awfully convenient when you have small data sets. This is only 61 planets, so there's no reason not to do that. Again, this will depend on the design of your API. Not all of them are quite this nice. Not all of them have those next and previous links the way this one does, but if it does, you may as well take advantage of it.

So here I've written a little bit of code to grab all of the Star Wars planets that are available. First, I'm going to get the first page of results, stop_for_status, parse them, and put them into an object that I'm going to call swapi_planets, Star Wars API planets, based on those results. Then the next page I am going to assign to the next object, and while that is not null, go get the next page, stop_for_status, parse, and rbind it back into swapi_planets, then reset next page. This API is very nice. If there is no next page, you'll get a null, which is why I'm able to do the is.null. There are other APIs that I've worked with that aren't quite this nice. They don't tell you when you're on the last page, and that can result in some more involved code than we're talking about here, but we're very lucky in this case. This API is quite nice in that regard. Again, this all goes back to you needing to know your API very well before you start writing a lot of this. But once you do, this is only a couple of lines of code that I've just run, and I've now got all 61 planets in one object. And I didn't have to do it by hand, and I didn't have to download a CSV and reimport it into R, and every single time I run this, it's going to give me the most up-to-date planets. If there are five more planets that get added between today and tomorrow, when I run this tomorrow, I will have 66 results, and I can pipe that into whatever data script I would like.
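The paging loop described above can be sketched as a pair of functions. The function names, the `fetch` argument, and the URL in the usage comment are assumptions for this example; passing the page fetcher in as an argument is a design choice made here so the loop can be tried against fake pages without network access.

```r
library(httr)
library(jsonlite)

# Default page fetcher: GET the URL, stop on HTTP errors, parse the JSON body.
fetch_page <- function(url) {
  resp <- GET(url)
  stop_for_status(resp)
  fromJSON(content(resp, as = "text", encoding = "UTF-8"))
}

# Follow the "next" links until the API reports null, binding the results
# of each page onto one data frame. Assumes every page has the same columns.
get_all_pages <- function(first_url, fetch = fetch_page) {
  page      <- fetch(first_url)
  results   <- page$results
  next_page <- page[["next"]]   # `next` is a reserved word, so use [[ ]]
  while (!is.null(next_page)) {
    page      <- fetch(next_page)
    results   <- rbind(results, page$results)
    next_page <- page[["next"]]
  }
  results
}

# Usage (not run here; the URL is an assumption):
# swapi_planets <- get_all_pages("https://swapi.dev/api/planets/")
# nrow(swapi_planets)
```

For an API that does not signal the last page with a null, the loop condition would need to change, for example to stop when a page comes back empty.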


Other considerations: errors, paging, and rate limits

So this has just been a very simple example of how powerful these tools can be. In real life, as I mentioned earlier, you're going to have to handle some other cases. For example, if your paging API doesn't tell you when it's on the last page. Errors that may get thrown. Headers, proxies, rate limits. Most times APIs have rate limits, so you can only call a given API a certain number of times per second or per minute or per hour, depending on the API. If you call any more than that, they'll throw an error, and that's just to keep people from overloading their servers. You're going to need to know how to handle that if it happens within your script, and handle it appropriately so it doesn't crash your application. For more information on the functions that are available to help you with this sort of thing, you can get help on the httr package. And if you are actually interested in Star Wars data at all, which you should be, somebody did already write a package for this API, so you can work with the API directly if you like, but you can also use this other package that somebody has already written around this particular API.
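One way to keep a script from crashing on a rate limit is to retry with pauses. The sketch below uses httr's RETRY function; the helper name `polite_get`, the retry settings, and the assumption that the API signals rate limiting with status 429 (the standard "Too Many Requests" code) are illustrative, and a given API may behave differently.

```r
library(httr)

# Try a GET several times with growing pauses before giving up.
# The retry settings are illustrative; tune them to the API's documented limits.
polite_get <- function(url, attempts = 3) {
  resp <- RETRY("GET", url, times = attempts, pause_base = 2)
  if (status_code(resp) == 429) {
    # Still rate limited: warn and return NULL rather than crash the script.
    warning("Still rate limited after ", attempts, " attempts.", call. = FALSE)
    return(NULL)
  }
  stop_for_status(resp)  # any other failure becomes an R error
  resp
}

# Usage (not run here; the URL is an assumption):
# resp <- polite_get("https://swapi.dev/api/planets/")
```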

Authentication with OAuth

So then what happens if your API requires authentication? I've got two other examples here. There's a Twitter example which uses OAuth 1, and there's a GitHub example that uses OAuth 2. These scripts are very similar because the httr package has some really nice helpers to handle not only OAuth in general, but some common services like Twitter and GitHub and Facebook and things like that. There's an oauth_endpoints function that takes values for these most common services. In this case, Twitter is covered. So you need to declare that you are working with Twitter as your OAuth endpoint. Then in Twitter itself, you would register a new application that this script will talk to, and you set the callback URL to something very specific. Bear in mind that this is an example that Hadley wrote up, and it can be found in the httr demos if you need to come back and refer to this. But at any rate, you need to set up the endpoint the first time around, register the application, and associate that application with the OAuth endpoints that you just created.

So here we are setting up a reference to the application you just created in Twitter. We're giving it a name and the key and the secret that were generated when you set up this application in Twitter. This is going to allow this script to talk to the application you just created in Twitter. Now, in real life, you would not put your key and secret right in your script. That's bad practice. You would set them up as environment variables to keep them out of the code. But in this case, it's a test application, so I just left it in here for simplicity. Once you have that set up, you can use the oauth1.0_token function to associate the app you just created with the endpoints that httr already knows about, because Twitter is a known service. So what you are doing here is generating a token that uses the endpoints and the application you already set up, a token that you can use to make authenticated calls. The first time you run this, you're going to be asked to authenticate, to approve this script's use of this application. Once you've done that, as long as you have cache = TRUE, you shouldn't have to do it again until that token expires. The token expiration is really up to the API. I don't remember what it is for Twitter, but I don't have to do this very often at all when I give these demos. So now that you have a token, you can actually use it to make a request. Again, we'll use the GET function. What I'm trying to get here is the RStudio tips from the timeline, and I'm going to pass it that token that I just generated. So let's see if this will run for me. stop_for_status. Again, we're only looking for 200s. We did get a 200, or this would have thrown an error. I'm going to pull out the content using the content function in httr, and then I'm going to take a look at the first one, which isn't actually a tip. It must have been a response. But at any rate, you can see how this works. Once you've set up this Twitter token, you can use it for as long as it lasts. Again, that's up to the Twitter API. I'm not sure how long that lasts, but you only have to run steps one and two once, and you should only have to run step three periodically based on the API. Then you can run as many requests as you want to within the throttling limits.
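The Twitter steps described above can be sketched roughly as follows, modeled on the OAuth 1 demo that ships with httr. The environment variable names, the timeline endpoint, and the `rstudiotips` screen name are assumptions, and the endpoint may no longer be offered by the service in this form.

```r
library(httr)

# Fetch a user's timeline with an OAuth 1 token.
get_timeline <- function(screen_name = "rstudiotips") {
  # 1. Look up the OAuth settings httr already knows for Twitter.
  endpoints <- oauth_endpoints("twitter")

  # 2. Refer to the application registered with the service. The key and
  #    secret come from hypothetical environment variables, not the script.
  app <- oauth_app(
    "twitter",
    key    = Sys.getenv("TWITTER_KEY"),
    secret = Sys.getenv("TWITTER_SECRET")
  )

  # 3. Get a token. The first run opens a browser to approve access;
  #    with cache = TRUE later runs reuse the stored token.
  twitter_token <- oauth1.0_token(endpoints, app, cache = TRUE)

  # 4. Make an authenticated request (the endpoint is an assumption).
  resp <- GET(
    "https://api.twitter.com/1.1/statuses/user_timeline.json",
    query = list(screen_name = screen_name),
    config(token = twitter_token)
  )
  stop_for_status(resp)
  content(resp)
}

# Usage (not run here; needs credentials, a browser, and network access):
# tweets <- get_timeline()
# tweets[[1]]$text
```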

The GitHub example is basically the same, just using a different function because it's OAuth 2. Again, you're going to set up the endpoints and the app. Again, you would not put the key and secret in your code. You would put them in your environment variables, but they're there for simplicity. You'd set up your application in GitHub itself, and then tell this script to call that application based on the key and secret. Use the oauth2.0_token function to associate the endpoints with the application you just set up. Again, it will ask you to authorize on the application side, asking, is this script okay to use this application? You should only have to do that once if you have cache = TRUE. And then you can use the API itself. So we'll set up the configuration and pass that in to a GET request. This is just another way to set up the configuration, by the way. Over here in Twitter, we passed config(token = twitter_token) directly into the request, but rather than writing that a bunch of times, in this case we set up a special object just for that configuration, and now we can pass that in to any of our GET calls and make requests. So I'm going to make a request. stop_for_status. It was 200. Excellent. I'm going to take a look at what the rate limit is. It's 5,000. So that's probably 5,000 per, I would guess, per minute. Could be per second. I'd have to go figure that out from the API. I don't know this API as well as I could. At any rate, this is also an example from Hadley's demos, so you can take a look at these yourself and play around with them a bit, modify them. This is just a modified version of what he has written up already.
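A rough sketch of the GitHub flow, again modeled on the httr demo, is shown below. The environment variable names and the function name are assumptions. For what it is worth, GitHub's documentation describes the 5,000 figure for authenticated requests as a per-hour limit.

```r
library(httr)

# Look up the rate limit for an authenticated GitHub API user.
github_rate_limit <- function() {
  # Refer to the application registered in GitHub. The key and secret are
  # read from hypothetical environment variables rather than the script.
  app <- oauth_app(
    "github",
    key    = Sys.getenv("GITHUB_KEY"),
    secret = Sys.getenv("GITHUB_SECRET")
  )

  # OAuth 2 token; the first run asks for approval in the browser.
  github_token <- oauth2.0_token(oauth_endpoints("github"), app, cache = TRUE)

  # Store the configuration once so it can be reused across requests.
  gtoken <- config(token = github_token)

  resp <- GET("https://api.github.com/rate_limit", gtoken)
  stop_for_status(resp)
  content(resp)$resources$core$limit
}

# Usage (not run here; needs credentials, a browser, and network access):
# github_rate_limit()
```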

So as you can see, authentication can be handled easily. Depending on how you handle stop_for_status or warn_for_status, you can also have your script automatically handle errors that come back from your API calls.

Additional considerations and httr helpers

So I touched upon some of these earlier. There are some other considerations. Again, you really need to know your API. You'll need to know what it expects and what it's going to send back to you. And you need to make sure that that documentation is honest. I know that developers make the best effort to make sure that those API documentation examples are accurate. But sometimes things change. Sometimes you happen to be looking at the wrong version. Sometimes there are mistakes. So you just want to experiment with it a little bit to figure out exactly how this is going to work. You do have to deal with paging, which we touched on a little bit, as well as rate limiting. Time to first byte is a little trickier. This is response time. So in some cases, APIs are not very fast. So you're going to have to figure out how to deal with that in your script and how to get around that, whether or not you need to wait for the response, things like that. You may need to handle data storage if you are putting this API information into another data store for you to analyze later. And it's a good idea to understand the httr functionality that augments the things that we've already been talking about. So we did talk a bit about OAuth 1 and OAuth 2. You can also use proxies. And the authenticate function itself is a little more generic. You can use it to authenticate with basic authentication and other kinds of tokens. There are request modifiers as well. These tend to come up in POST requests rather than in GET requests. So this is when you're sending data to a data store. You can set cookies, add headers, and set the content type and the accept headers as well. And finally, there are some other helpers that make life a little bit easier. stop_for_status and warn_for_status we've already talked a bit about. You can also set a timeout, again, for those long response times. You can have it set to verbose, which will give you a lot more information about the HTTP conversation, request and response. parse_url, progress, things like that. So there's quite a bit in there. I would definitely recommend taking a look at the vignettes in the httr package on CRAN.
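A few of these helpers can be sketched together. The first part runs without network access; the `create_record` function, its endpoint, the bearer-token header, and the payload are hypothetical and only show how several modifiers can be combined in one write request.

```r
library(httr)

# parse_url() splits a URL into its components without making a request.
parts <- parse_url("https://swapi.dev/api/planets/?search=Alderaan")
parts$hostname
parts$query$search

# A write request combining several modifiers. The endpoint, header value,
# and payload are hypothetical.
create_record <- function(url, payload, api_token) {
  POST(
    url,
    body   = payload,
    encode = "json",                                          # send the body as JSON
    add_headers(Authorization = paste("Bearer", api_token)),  # custom header
    accept_json(),                                            # ask for JSON back
    timeout(10),                                              # give up after 10 seconds
    verbose()                                                 # print the HTTP conversation
  )
}

# Usage (not run here; the URL and token are placeholders):
# resp <- create_record("https://api.example.com/tickets",
#                       list(subject = "Test"), Sys.getenv("API_TOKEN"))
# stop_for_status(resp)
```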

Practical application: support ticket analysis

And finally, I wanted to tell you a bit about a practical application. So this is what I work on every day. I spend a lot of time analyzing support tickets to identify product improvements, documentation enhancements, process improvements, things like that. I want to know how the user experience can inform the improvements of our products. So I spend a lot of time in R crunching the data around support tickets. This application is in two parts. The first part is an R script that gets the support ticket data via API and puts it into an S3 bucket. And the second part is where various applications and reports pull that data from S3 and then run those analyses.

So the reason I have this in two parts is because our support ticket tool's API is quite slow. And I have to page through a lot of data. And to pull that into a single data frame the way that we did with that Star Wars API data takes a lot of effort. And it takes a lot of time because these API calls are not quick. So if I asked somebody who's loading an app or a report to sit there and wait for five minutes while that data comes back, they would close the window. They wouldn't do it. So in this case I have two different parts. And I've traded real-time access for application load time. In this case it doesn't really matter. What we're looking at here is historical trends. So we don't necessarily need real-time data. And so having this script run once a day, or even five times a day, and push that data into S3 allows the application itself to just make one call to that S3 bucket and pull it back really quickly. So we've traded that off. An alternate approach might be to make a series of incremental API calls that update the apps as the data comes in. But we didn't think that was necessary for this particular application, which is why we have it in two parts.

So the first part pulls that data, filters, tidies, and flattens it using the tidyverse, and saves it to an S3 bucket. And we have deployed that script to RStudio Connect and scheduled it to poll the API to update the data store automatically. I never touch that unless I want a different set of data, unless I want to augment the data. It's beautiful. It runs automatically every night. I don't have to worry about it. Every morning, my reports are brand new and up to date. The second part is those applications and reports that pull that data from S3 and visualize that information. And again, we use this data to make things better.
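The two-part design can be sketched with the aws.s3 package mentioned earlier. The talk does not say which package or file format the in-house application uses, so this is an assumption, as are the bucket name, the object name, and the function names. Credentials are expected to come from the usual AWS environment variables rather than from the script.

```r
library(aws.s3)

# Hypothetical bucket and object names.
bucket <- "my-support-data"
object <- "tickets.rds"

# Part 1: the scheduled script saves the tidied data frame to S3.
publish_tickets <- function(tickets) {
  s3saveRDS(tickets, object = object, bucket = bucket)
}

# Part 2: apps and reports read the prepared data back in a single call,
# instead of paging through the slow ticket API themselves.
load_tickets <- function() {
  s3readRDS(object = object, bucket = bucket)
}

# Usage (not run here; needs AWS credentials and network access):
# publish_tickets(tickets_df)   # in the nightly script
# tickets <- load_tickets()     # in an app or report
```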

So this is an example; this is real data from our support tickets. And this is how we have been using this data that we're pulling from an API. This first one is ticket volume for the past two years. Blue is created and orange is solved. So we're solving them as fast as they're being filed, which is a good thing. Over here on the right is ticket volume by product for this last year. So we can take a look at which products are getting the most tickets filed and potentially target those for improvements. Prioritize them, rather, for improvements. Down here, year-over-year volume by week. Our patterns are very similar, although the volume this year was higher than last, as one would expect, as we have more people using it. And over here we have categories of tickets. So things like installation or configuration or defect, an actual bug that someone found. And we graph those over time as well so that we can tell what categories need the most love in the products and things like that. So this is a really practical application that we use in-house every day to figure out where we can target improvements based on information that we're pulling in from a couple of different APIs, actually, that we pull together using the tools that I have just described.

Recap and resources

So to recap, I can't emphasize enough how useful APIs are, especially for data sets that are updated regularly. Again, I don't touch that script. We deployed it to Connect and it runs every night without me touching it and pulls all that data in by itself based on the code that I've written to handle that API. It's very easy to pull that data in, keep it up to date with the right packages and tools. You are going to have to do some work around the API contract to make sure you understand what's coming back and to handle errors and things like that.