RStudio Shiny Server Professional Architecture

Jeff Allen covers the differences between Open Source Shiny Server and the Shiny Server Professional product from RStudio

Transcript

This transcript was generated automatically and may contain errors.

Okay, so I want to talk to you today about the Shiny Server Pro architecture, and give you a bit of a feel for how things are organized and how things are run within Shiny Server and Shiny Server Pro.

All right, so first of all, Shiny Server Pro can be viewed as an extension of the open source Shiny Server package, which we generally just call Shiny Server. So basically, you can view Pro as enhancements and new sets of features that add on to the open source package. So when you talk about the architecture of Pro or open source, it probably makes sense to start with a discussion of the open source Shiny Server, and then we can grow from there into what Pro adds on to that.

But I mentioned here a couple of the features that are often most compelling for Pro. So we do user authentication, whether that's with LDAP or Active Directory, and we also do application scaling across multiple processes for a single application, which is helpful.

How Shiny speaks WebSocket

So okay, so to go kind of all the way down the hole, we get down into the Shiny package, and I suspect that most of you are familiar with Shiny, but basically Shiny is, as you know, an R package, but what's important to realize is that Shiny speaks WebSocket. And so what that means is that any browser that's compatible with WebSocket can connect directly to a Shiny process and do, you know, the communications there back and forth between those two entities.

So that's great, and you probably notice this when you're developing applications locally. So if you're using RStudio, for instance, and you press run app, you're going to get a little viewer. That viewer is communicating directly to your R process, or, you know, if you open in a browser, you're communicating directly with an R process, and there's no Shiny server intermediary between those two, and that's because Shiny speaks WebSockets. So it is possible to open a direct connection from a browser into the Shiny process.

So that's great, but there are a couple of limitations on this. First of all, as I mentioned, that only works for browsers that can communicate over WebSockets, and so that rules out Internet Explorer 8 and 9 and some older versions of Firefox and Chrome and things like that. The other problem is that the Shiny process has to be running at the moment you try to open a connection to it. That can be a little complicated: as an organization, maybe you have hundreds of Shiny applications that you've developed, and at any given time, maybe a handful of them are actually being used and interacted with.

The problem here is that, in order to communicate, you would have to be running one process for each of those applications all the time, around the clock, and that creates a lot of problems in terms of resource utilization. You'd be running hundreds or even thousands of processes all the time, even though many of them may be used only a few minutes a day. So there are a couple of limitations to just running Shiny on the bare metal, but it is possible.

What Shiny Server sets out to accomplish

So Shiny server sets out to accomplish a few goals. The couple that are interesting for this discussion are, first of all, it should start and stop Shiny processes on demand and as needed, and also it should translate non-WebSocket traffic into WebSocket traffic that Shiny can understand. And then finally, it can also map different URLs to particular applications.

So if you were just to run an army of Shiny processes on your bare machine, all of those would have to run on different ports, and so your users would need to enter a port number in order to get to an application, and it would be, you know, a little sloppy. And so Shiny server offers a kind of a cleaner, more convenient way to be able to navigate between the different applications, and we do that by offering a few different hosting modes. So you could just host one application. You could allow users to host their own applications, or you could host an entire directory of applications, and you can do these at different URL subspaces. So it just allows you to kind of more meaningfully manage, you know, many Shiny applications.
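To make the URL mapping idea concrete, here's a minimal sketch in Python. It is not how Shiny Server itself is implemented or configured; the routing table, the paths, and the `resolve_app` helper are all hypothetical, and the longest-prefix rule is just one reasonable assumption about how URL subspaces could be resolved.

```python
from typing import Dict, Optional

# Hypothetical routing table: URL prefix -> application directory.
ROUTES: Dict[str, str] = {
    "/": "/srv/apps/landing",
    "/sales": "/srv/apps/sales-dashboard",
    "/sales/forecast": "/srv/apps/forecast",
}


def resolve_app(path: str, routes: Dict[str, str] = ROUTES) -> Optional[str]:
    """Return the app directory for the longest prefix that matches path."""
    best = None
    for prefix in routes:
        normalized = prefix.rstrip("/")
        # Match on whole path segments only, so "/sales" does not match "/salesforce".
        if path == normalized or path.startswith(normalized + "/"):
            if best is None or len(prefix) > len(best):
                best = prefix
    return routes[best] if best is not None else None


print(resolve_app("/sales/forecast/q3"))
print(resolve_app("/salesforce"))
```

With a table like this, users only ever see one host and one port, and the path alone decides which application they land on.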

And so a couple of these to revisit. So starting and stopping Shiny processes: again, if you're running this naively, you would need to be running that Shiny process all the time. But because you're running Shiny Server as this long-running, always-on daemon, when a user goes to connect to a Shiny process that isn't running, Shiny Server can hold that request for a second while it goes to start the Shiny process, and then once the Shiny process is ready, it can let the traffic through into that process. So it can broker and manage the communications with applications that may or may not be running at the moment they're requested.

And then also if it notices that all the users have left and that application's been idle for some configurable length of time, then it can go ahead and reap those processes to free up some more resources for other applications.
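The start-on-demand and reap-when-idle behavior can be sketched roughly like this. It's a simulation only, assuming a made-up `ProcessManager` class and an `idle_timeout` setting in seconds; nothing is actually spawned, and the real server's bookkeeping is surely more involved.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class AppProcess:
    name: str
    connections: int = 0
    last_active: float = 0.0


@dataclass
class ProcessManager:
    idle_timeout: float = 5.0                     # hypothetical setting, in seconds
    clock: Callable[[], float] = time.monotonic   # injectable so the logic can be tested
    running: Dict[str, AppProcess] = field(default_factory=dict)

    def connect(self, app: str) -> AppProcess:
        """Route a new connection, starting the app's process first if needed."""
        proc = self.running.get(app)
        if proc is None:
            # A real server would spawn an R process here and hold the
            # request until that process is ready to accept traffic.
            proc = AppProcess(app)
            self.running[app] = proc
        proc.connections += 1
        proc.last_active = self.clock()
        return proc

    def disconnect(self, app: str) -> None:
        """Record that one connection to the app has closed."""
        proc = self.running[app]
        proc.connections -= 1
        proc.last_active = self.clock()

    def reap_idle(self) -> List[str]:
        """Stop processes with no connections that have been idle past the timeout."""
        now = self.clock()
        idle = [name for name, p in self.running.items()
                if p.connections == 0 and now - p.last_active >= self.idle_timeout]
        for name in idle:
            del self.running[name]
        return idle
```

The clock is passed in rather than read directly so that the idle logic can be exercised without actually waiting for the timeout.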

And again, like I mentioned, older versions of Internet Explorer are not going to be able to handle WebSockets natively, and so Shiny Server uses a library called SockJS, which basically allows you to work as if you were working with WebSockets, but it will gracefully fall back to other protocols as needed. And that's actually really important, even if you're not planning to support older versions of Internet Explorer, because many networks run on older equipment that was designed before WebSockets gained popularity, and that equipment may be dropping or blocking WebSocket traffic. We see this quite a bit, especially at universities and in academic settings; they for some reason tend to have network equipment that isn't friendly with WebSockets. So you can end up in a situation where, even if you're using all the latest and greatest technology, there may be some router between you and your client that is going to interrupt your WebSocket traffic. It's nice to have a technology that will allow you to fall back to something that is supported if needed, so that ends up being a pretty important feature.
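The fallback idea can be sketched as a simple preference list. This is not SockJS's actual negotiation logic; the transport names echo ones SockJS offers, but the ordering, the `choose_transport` helper, and the way "blocked by the network" is modeled are all assumptions for illustration.

```python
from typing import Iterable, Set

# Preference order, most efficient first (illustrative assumption).
PREFERRED = ["websocket", "xhr-streaming", "xhr-polling"]


def choose_transport(browser_supports: Set[str],
                     network_blocks: Set[str],
                     preferred: Iterable[str] = PREFERRED) -> str:
    """Pick the first preferred transport the browser supports and the network lets through."""
    for transport in preferred:
        if transport in browser_supports and transport not in network_blocks:
            return transport
    raise RuntimeError("no usable transport")


modern = {"websocket", "xhr-streaming", "xhr-polling"}
print(choose_transport(modern, network_blocks=set()))
print(choose_transport(modern, network_blocks={"websocket"}))
```

The second call models the university-router case: the browser is perfectly capable of WebSockets, but something in between blocks them, so the connection degrades to the next option.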

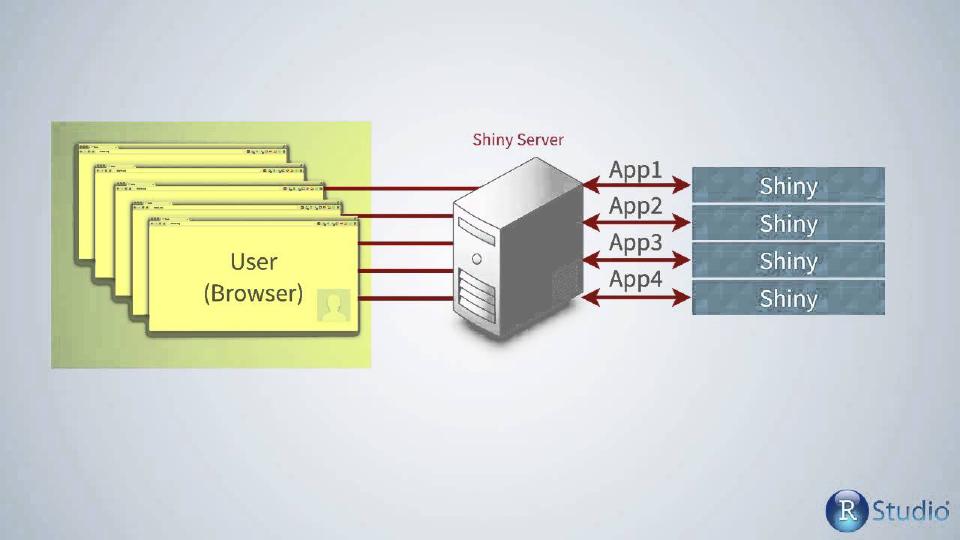

Shiny Server architecture overview

And then as we already discussed, being able to map URLs to different applications. So kind of an overview of the architecture, you can envision it looking something like this. So you have a variety of users over here, I don't know if you can see, you have your variety of users over here, which could be a handful, or we run servers where there are hundreds of concurrent connections from different users around the world, but they're all opening connections into your Shiny server instance, which again is kind of this always-on server process that's running as a daemon on your server.

And then it's going to open and close, or start and stop these Shiny applications, and kind of proxy the traffic as it goes through from users' browsers into the Shiny applications, and so what this typically looks like, just to give you a feel if you're going to get down to the network level, the first request, of course, is just for kind of the root URL of some application, Shiny server would go in and inspect, is that application already online? So let's say it maps some URL to app one, so it would go through and say, is app one already online? If it is, it'll proxy the traffic through, if it's not, it'll start app one, and then proxy the traffic through.

And then it just continues to keep these channels open between the user and the application. So what this looks like at a network level: the first request is going to be for the root URL of that application, and that's going to return some HTML that points the browser to different static assets that may be relevant, CSS files and JavaScript files and things like that. And so it'll open up more connections for all of those files, and then eventually it'll actually open a WebSocket or WebSocket-like connection that will be persistent between that browser and that Shiny application. That's how the Shiny magic works: via that WebSocket or WebSocket-like channel that allows the user and the Shiny process to communicate back and forth for as long as that connection is open.

So a couple of points here, just as I mentioned, the user's connection to the Shiny Server may or may not be a WebSocket, though these connections between Shiny Server and the Shiny process must be WebSocket connections, because that's the only protocol that Shiny can understand. But these external connections, again, may or may not be WebSockets, they don't have to be. If you're using an older browser or some network intermediary is blocking WebSockets, that's fine, we can handle other protocols as well.

And then the other point here, of course, is that Shiny applications are going to be started and stopped as needed, and so the running processes may not encompass all of the available applications that we know about, you know, there may be others that we could start as a request comes in for that application.

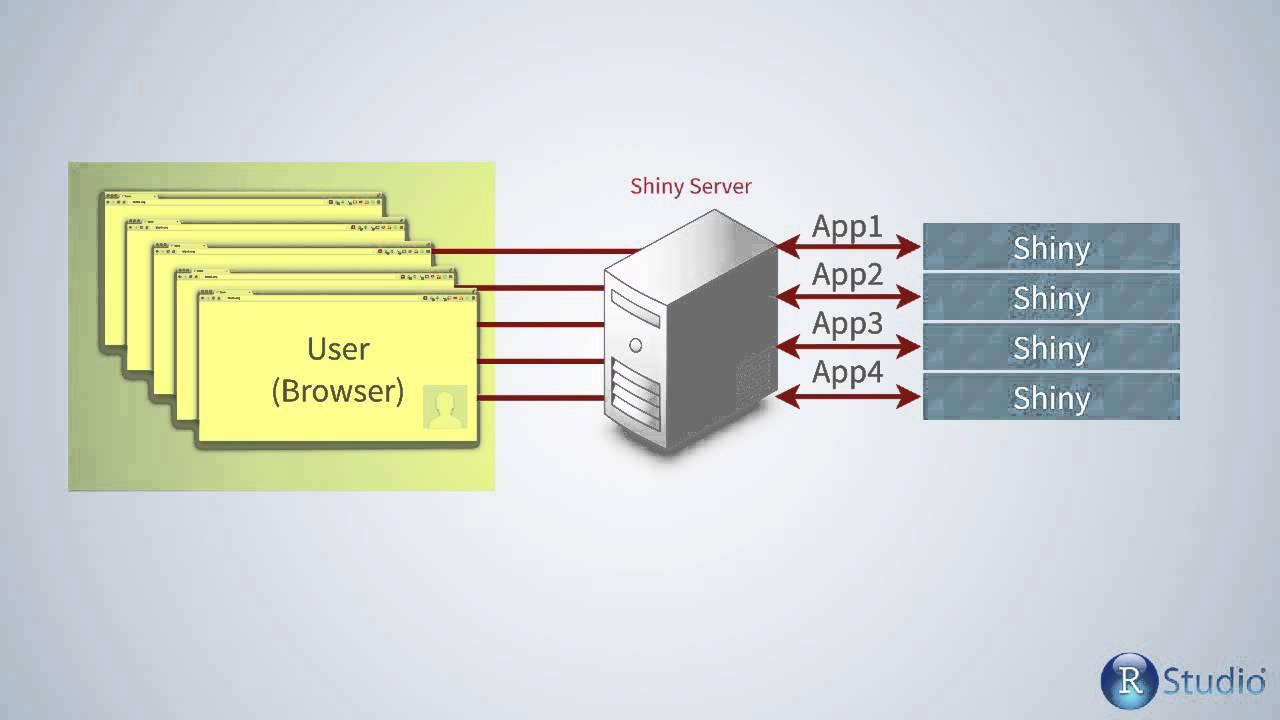

Shiny Server Pro features and architecture

So that hopefully gives you a feel for Shiny Server, which is kind of a simplified architectural diagram compared to what you're going to encounter in Shiny Server Pro. So Shiny Server Pro adds a variety of different features, and of course we enumerate all these on the product pages on our website, but a couple that are of relevance today, if you're considering doing something like high availability or load balancing between Shiny Server processes, there are a couple features that you need to be aware of in Shiny Server Pro.

So again, the Pro product is going to add a few features like authentication and more scalability, so being able to support a single Shiny application having multiple processes. One of the nice benefits here, if you're looking at doing high availability or load balancing across multiple instances, is that there's really not a whole lot of centralized storage to coordinate between multiple Shiny Server Pro instances, so you don't necessarily need like an NFS share or, you know, some centralized database or something like that. Most of the state is actually kept on the user's browser, which I'll kind of explain here in a minute, but there's not a whole lot of centralized storage, so the only statefulness that you need to worry about is just the information that pertains to the open connections right now at that moment, and so you need to know about which user is mapped to which worker and how to be able to connect those two entities consistently.

And, you know, as I mentioned, most of the information is actually stored in the browser, so the authentication information in particular is actually stored in an encrypted cookie that gets placed on the user's browser, so you don't need to worry about a session store in, you know, Redis or a database or anything like that. That's all just kind of pushed out into the user's browser, which is nice, so you won't need to worry about coordinating any central storage like that.

Okay, so this is a very similar diagram for Shiny Server Pro as we saw with Shiny Server, but there's one distinction, which is that you can see that a single application may be backed by multiple workers, and that's kind of what I was referencing earlier when I was saying that, you know, you can scale an application to multiple processes. That's one of the big selling points for Pro, so what that means is that you can have different workers each with different IDs backing a single application.

It's important that you understand that distinction because when a user opens a connection to Shiny Server Pro, but ultimately into a Shiny process, it's very important that that user be consistently mapped back to that same Shiny process, and so there are some cases where that's really important in Shiny, but it's extremely important in R Markdown, and you'll notice if you're hosting any kind of R Markdown documents, you'll notice very quickly that things are going to start breaking in kind of weird ways if your users are not being deterministically mapped back to the worker that they open the connection on.

So basically what that means is that if I as a user come in and request a page, if I request App One, then Shiny Server Pro is going to go through and see, do I have any workers associated with App One yet? Yes, I have two, and now it's going to pick one of these based on whichever one has the least load currently, and so let's say that's Worker 13, so it'll point me to Worker 13. Worker 13 will fulfill my original request for the base URL, but then what I'm saying is that every HTTP request and ultimately the WebSocket that gets opened between the user and that application must always be routed to Worker 13.

So we handle, in this architectural diagram that you can see here, we handle all this complexity for you in Shiny Server Pro. So we ensure that when a user opens a connection to a Shiny application, that all the traffic that they're going to have associated with that application will always be directed back to that individual Shiny application, or that Shiny process rather, and so that's important. So you'll never have a user that opens a connection where some traffic is served by Worker 13 and some is served by Worker 27. That would never happen in this single instance of Shiny Server Pro scenario. You would always get the same worker.
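Here's a rough sketch of that pick-the-least-loaded-then-stay-put behavior. The `AppRouter` class, the `session_id` key, and counting load as the number of pinned sessions are all assumptions made for illustration, not a description of Shiny Server Pro's internals.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class Worker:
    worker_id: int
    load: int = 0   # assumed measure: sessions currently pinned to this worker


@dataclass
class AppRouter:
    workers: Dict[str, List[Worker]]   # app name -> workers backing that app
    pinned: Dict[Tuple[str, str], Worker] = field(default_factory=dict)

    def route(self, session_id: str, app: str) -> Worker:
        """Return the session's worker, choosing the least-loaded one on first contact."""
        key = (session_id, app)
        if key not in self.pinned:
            worker = min(self.workers[app], key=lambda w: w.load)
            worker.load += 1
            self.pinned[key] = worker
        # Every later request for this session and app goes to the same worker.
        return self.pinned[key]


router = AppRouter({"app1": [Worker(13, load=1), Worker(27, load=3)]})
print(router.route("alice", "app1").worker_id)
print(router.route("alice", "app1").worker_id)
```

Load only matters on the first request. After that the mapping is a plain lookup, which is what keeps one user's traffic from being split across Worker 13 and Worker 27.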

Load balancing and high availability

So now if we're looking at doing load balancing or high availability of Shiny Server Pro instances, this is where you need to start thinking about the implications of some of those things. So first of all, if we put a load balancer here in front of two Shiny Server Pro instances, you'll notice that both Shiny Server Pro instances may be running processes associated with App One. So in this case, we have one Shiny Server Pro over here that's hosting two workers associated with App One and one over here that's also hosting two workers of App One.

And so this is nice because these could be running, and typically would be running, on different physical boxes and may have separate network connections and things like that. So this will allow you to do high availability and redundancy. What's also nice about this is that if your Shiny processes use a lot of resources, if you're doing real-time streaming, for instance, and need to monitor frequent updates to data, or you're holding a large amount of data in memory, then it can be really nice to be able to scale this out to multiple physical boxes, which you can do as in this diagram.

But there are a couple of things that you'll need to be aware of in doing so. So first of all, you'll obviously need a load balancer in front of the two instances that's going to balance traffic between the two. And keep in mind that there are WebSocket-friendly load balancers: NGINX, I believe, now can support WebSockets and load balance traffic just fine with those, and Amazon ELB, if you're on Amazon Web Services, and things like that can do these well. But older proxies or load balancers may not support the WebSocket protocol. And so just be aware that if you're using an older load balancer or one that doesn't support WebSockets, then all of the WebSocket-like connections coming in from users will be degraded down to some other protocol that is compatible.

So it's not necessary that this be WebSocket-friendly, but just be aware that WebSockets are kind of going to be the most efficient and lowest latency channel between the Shiny process and the user. And so just be aware that if you have a non-WebSocket-compatible load balancer, that there's just going to be a little bit more latency and a little bit more network overhead between your users and your Shiny instances. Really it's probably going to be negligible, but just so you're informed.

And then the point that I was making earlier is that there's no state that you need to worry about coordinating between these two instances. So you'll notice we don't have some NFS share mounted between these two or some database that's coordinating between these two. You don't need to worry about any of that. The one thing that you do need to be aware of, though, is that, again, as I emphasized earlier, a single user must be directed deterministically back to the same process.

And so what that means, again, is that we handle the complexity within Shiny Server, but you would then be responsible for ensuring that a single user is always going to get load balanced to the same Shiny Server instance. This is, as you probably know, called sticky sessions or sticky load balancing. What that would mean, then, is that when a user comes into your load balancer, if they've already been directed to one Shiny Server Pro instance for some application, they would continue to have all of their traffic, either HTTP or WebSocket, directed back to that same instance, and then from there, we'll handle the rest of the complexity of making sure that they get back to the right worker. So you would need to be aware of that if you're getting into a high availability situation.

The one other piece here, and this is kind of nuanced, we can talk about the details of this later, but so, again, since we're using encrypted cookies to store the user's authentication information on their browser, you would just need to be aware that the two Shiny Server Pro instances should probably use the same key when going to encrypt those cookies so that they can both understand the encrypted cookie that may be stored on the user's browser, regardless of where they get balanced in the future.
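To see why the key has to be shared, here's a small sketch. One big caveat: Python's standard library has no authenticated encryption, so this substitutes HMAC signing for the encryption the talk describes. The `issue_cookie` and `read_cookie` helpers and the cookie layout are hypothetical; the only point is that a cookie issued by one instance is readable by another only when both hold the same key.

```python
import hashlib
import hmac
from typing import Optional


def issue_cookie(username: str, key: bytes) -> str:
    """Return 'username.signature', signed with the instance's secret key."""
    sig = hmac.new(key, username.encode(), hashlib.sha256).hexdigest()
    return f"{username}.{sig}"


def read_cookie(cookie: str, key: bytes) -> Optional[str]:
    """Return the username if the signature checks out under this key, else None."""
    username, _, sig = cookie.rpartition(".")
    expected = hmac.new(key, username.encode(), hashlib.sha256).hexdigest()
    return username if hmac.compare_digest(sig, expected) else None


shared_key = b"same-key-on-both-instances"   # placeholder value
cookie = issue_cookie("jeff", shared_key)    # issued by instance A

# Instance B holds the same key, so it accepts the cookie.
print(read_cookie(cookie, shared_key))
# An instance configured with a different key rejects it.
print(read_cookie(cookie, b"some-other-key"))
```

So if the two instances had different keys, a user who got balanced over to the second instance would look unauthenticated there and would have to log in again.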

So, again, we've done this before, and we've seen this model set up very well, where you do this high availability setup just using sticky sessions. You'll just drop a cookie on the user's browser, either at that subdomain, or make it applicable to a certain path for the application, and then just make sure that for the rest of the session, any time that they go to access that application, they're always deterministically routed back to the same Shiny Server Pro instance. And that is it.
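The cookie-based sticky session setup described above can be sketched like this. In practice you'd configure this in your load balancer rather than write it yourself; the `StickyBalancer` class, the `lb_instance` cookie name, the instance names, and round-robin for first-time visitors are all assumptions for illustration.

```python
import itertools
from typing import Dict, List, Tuple

COOKIE_NAME = "lb_instance"   # hypothetical cookie name


class StickyBalancer:
    def __init__(self, instances: List[str]) -> None:
        self.instances = instances
        self._next = itertools.cycle(instances)   # round-robin for first-time visitors

    def route(self, cookies: Dict[str, str]) -> Tuple[str, Dict[str, str]]:
        """Return (instance, cookies to set). Known visitors go back to their instance."""
        pinned = cookies.get(COOKIE_NAME)
        if pinned in self.instances:
            return pinned, {}
        # New visitor, or the pinned instance no longer exists: pick one and pin it.
        chosen = next(self._next)
        return chosen, {COOKIE_NAME: chosen}


lb = StickyBalancer(["ssp-a", "ssp-b"])
instance, to_set = lb.route({})    # first visit: no cookie yet
print(instance, to_set)
print(lb.route(to_set))            # later request carrying the cookie
```

Once the cookie is set, both the HTTP requests and the WebSocket handshake carry it, so the balancer sends all of them to the same instance, and that instance takes care of getting the user back to the right worker.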