How private are individual data in the context of machine learning models? The data used to train the model, say. There are types of models where the answer is simple. Take k-nearest-neighbors, for example. There is not even a model without the complete dataset. Or support vector machines. There is no model without the support vectors. But neural networks? They’re just some composition of functions – no data included.

The same is true for data fed to a deployed deep-learning model. It’s pretty unlikely one could invert the final softmax output from a big ResNet and get back the raw input data.

In theory, then, “hacking” a standard neural net to spy on input data sounds illusory. In practice, however, there is always some real-world context. The context may be other datasets, publicly available, that can be linked to the “private” data in question. This is a popular showcase used in advocating for differential privacy (Dwork et al. 2006): Take an “anonymized” dataset, dig up complementary information from public sources, and de-anonymize records ad libitum. Some context in that sense will often be used in “black-box” attacks, ones that presuppose no insider information about the model to be hacked.

But context can also be structural, such as in the scenario demonstrated in this post. Assume, for instance, a distributed model, where sets of layers run on different devices – embedded devices or mobile phones, for example. (A scenario like that is sometimes seen as “white-box” (Wu et al. 2016), but in common understanding, white-box attacks probably presuppose some more insider knowledge, such as access to model architecture or even weights. I’d therefore prefer calling this white-ish at most.) Now assume that in this context, it is possible to intercept, and interact with, a system that executes the deeper layers of the model. Based on that system’s intermediate-level output, it is possible to perform model inversion (Fredrikson et al. 2014), that is, to reconstruct the input data fed into the system.

In this post, we’ll demonstrate such a model inversion attack, basically porting the approach given in a notebook found in the PySyft repository. We then experiment with different levels of $\epsilon$-privacy, exploring impact on reconstruction success. This second part will make use of TensorFlow Privacy, introduced in a previous blog post.

Part 1: Model inversion in action

Example dataset: All the world’s letters[^1]

The overall process of model inversion used here is the following. With no, or scarcely any, insider knowledge about a model – but given opportunities to repeatedly query it – I want to learn how to reconstruct unknown inputs based on just model outputs. Independently of original model training, this, too, is a training process; however, in general it will not involve the original data, as those won’t be publicly available. Still, for best success, the attacker model is trained with data as similar as possible to the original training data assumed. Thinking of images, for example, and presupposing the popular view of successive layers representing successively coarse-grained features, we want the surrogate data to share as many representation spaces with the real data as possible – up to the very highest layers before final classification, ideally.

If we wanted to use classical MNIST as an example, one thing we could do is to only use some of the digits for training the “real” model, and the rest for training the adversary. Let’s try something different though, something that might make the undertaking harder as well as easier at the same time. Harder, because the dataset features exemplars more complex than MNIST digits; easier for the same reason: more could possibly be learned, by the adversary, from a complex task.

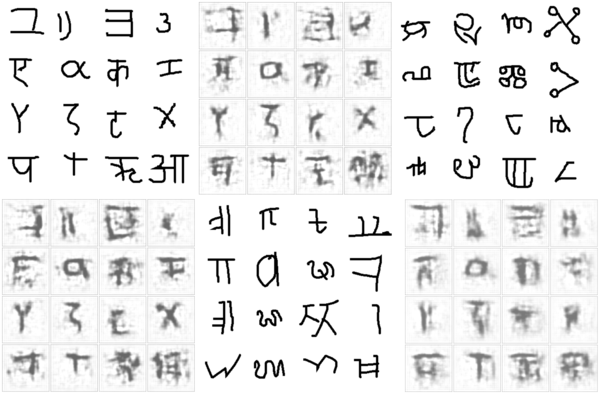

Originally designed to develop a machine model of concept learning and generalization (Lake et al. 2015), the OmniGlot dataset incorporates characters from fifty alphabets, split into two disjoint groups of thirty and twenty alphabets each. We’ll use the group of twenty to train our target model. Here is a sample:

The group of thirty we don’t use; instead, we’ll employ two small five-alphabet collections to train the adversary and to test reconstruction, respectively. (These small subsets of the original “big” thirty-alphabet set are again disjoint.)

Here first is a sample from the set used to train the adversary.

The other small subset will be used to test the adversary’s spying capabilities after training. Let’s peek at this one, too:

Conveniently, we can use tfds, the R wrapper to TensorFlow Datasets, to load those subsets:


Now first, we train the target model.

Train target model

The dataset originally has four columns: the image, of size 105 x 105; an alphabet id and a within-dataset character id; and a label. For our use case, we’re not really interested in the task the target model was/is used for; we just want to get at the data. Basically, whatever task we choose, it is not much more than a dummy task. So, let’s just say we train the target to classify characters by alphabet.

We thus throw out all unneeded features, keeping just the alphabet id and the image itself:
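As a library-free illustration of that step, the sketch below treats each record as a plain dictionary rather than as an element of a TensorFlow dataset. The field names `image`, `alphabet`, `alphabet_char_id` and `label` are assumptions chosen to mirror the four columns described above, and scaling pixel values to the range [0, 1] is an added, hypothetical preprocessing choice.

```python
def keep_image_and_alphabet(record):
    """Drop unneeded fields and scale 8-bit pixel values to [0, 1]."""
    scaled = [[pixel / 255.0 for pixel in row] for row in record["image"]]
    return scaled, record["alphabet"]


# A tiny stand-in record: a 2 x 2 "image" instead of 105 x 105.
record = {
    "image": [[0, 255], [51, 204]],
    "alphabet": 3,
    "alphabet_char_id": 7,
    "label": 42,
}
image, alphabet = keep_image_and_alphabet(record)
```

After this mapping, each example is just an (image, alphabet id) pair, which is all the dummy classification task needs.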


The model consists of two parts. The first is imagined to run in a distributed fashion; for example, on mobile devices (stage one). These devices then send model outputs to a central server, where final results are computed (stage two). Sure, you will be thinking, this is a convenient setup for our scenario: If we intercept stage one results, we – most probably – gain access to richer information than what is contained in a model’s final output layer. That is correct, but the scenario is less contrived than one might assume. Just like federated learning (McMahan et al. 2016), it fulfills important desiderata: Actual training data never leaves the devices, thus staying (in theory!) private; at the same time, incoming traffic to the server is significantly reduced.

In our example setup, the on-device model is a convnet, while the server model is a simple feedforward network.

We link both together as a TargetModel that, when called normally, will run both steps in succession. However, we’ll be able to call target_model$mobile_step() separately, thereby intercepting intermediate results.
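To make the two-stage idea concrete, here is a minimal, library-free Python sketch. It is not the Keras custom model used in this post: both stages are hypothetical linear stand-ins (the real on-device part is a convnet), and the weights are made up. What it shows is only the calling pattern, namely that a normal call chains both stages while `mobile_step()` alone exposes the intermediate result.

```python
class TargetModel:
    """Toy two-stage model; both stages are hypothetical stand-ins."""

    def __init__(self, mobile_weights, server_weights):
        self.mobile_weights = mobile_weights  # one weight row per code unit
        self.server_weights = server_weights  # one weight row per class

    def mobile_step(self, x):
        # Stage one (on-device): linear projection followed by ReLU.
        return [max(0.0, sum(w * v for w, v in zip(row, x)))
                for row in self.mobile_weights]

    def server_step(self, code):
        # Stage two (server): class scores computed from the intermediate code.
        return [sum(w * c for w, c in zip(row, code))
                for row in self.server_weights]

    def __call__(self, x):
        # A normal call runs both stages in succession.
        return self.server_step(self.mobile_step(x))


target_model = TargetModel(
    mobile_weights=[[1.0, 0.0, -1.0], [0.5, 0.5, 0.5]],
    server_weights=[[1.0, 2.0], [-1.0, 0.0]],
)
x = [2.0, 4.0, 1.0]
intercepted = target_model.mobile_step(x)  # what an eavesdropper would see
scores = target_model(x)                   # what the server finally returns
```

The intercepted code has more entries per unit of decision than the final scores need, which is exactly why stage-one output is the more interesting target.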


The overall model is a Keras custom model, so we train it TensorFlow 2.x-style. After ten epochs, training and validation accuracy are at ~0.84 and ~0.73, respectively – not bad at all for a 20-class discrimination task.


Epoch: 1 -----------

Train accuracy 0.195090905 Validation Accuracy 0.376605511

Epoch: 2 -----------

Train accuracy 0.472272724 Validation Accuracy 0.5243119

...

...

Epoch: 9 -----------

Train accuracy 0.821454525 Validation Accuracy 0.720183492

Epoch: 10 -----------

Train accuracy 0.840454519 Validation Accuracy 0.726605475

Now, we train the adversary.

Train adversary

The adversary’s general strategy will be:

- Feed its small, surrogate dataset to the on-device model. The output received can be regarded as a (highly) compressed version of the original images.

- Pass that “compressed” version as input to its own model, which tries to reconstruct the original images from the sparse code.

- Compare original images (those from the surrogate dataset) to the reconstruction pixel-wise. The goal is to minimize the mean (squared, say) error.
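The three steps above can be sketched in a few lines of plain Python. Everything here is a toy assumption: the "images" have four pixels, the on-device stage is a fixed linear map to a two-value code, and the attacker is a linear decoder trained by stochastic gradient descent. None of it is taken from the actual attacker model.

```python
import random

random.seed(0)


def make_images(n):
    """Hypothetical 4-pixel 'images' that share a common low-dimensional structure."""
    images = []
    for _ in range(n):
        a, b = random.random(), random.random()
        images.append([a, b, a + b, a - b])
    return images


def mobile_step(image):
    """Stand-in for the on-device stage: compresses 4 pixels into a 2-value code."""
    return [image[0] + image[2], image[1] - image[3]]


def reconstruct(decoder, code):
    """Attacker model: a linear decoder mapping a code back to pixel space."""
    return [sum(w * c for w, c in zip(row, code)) for row in decoder]


def mse(decoder, images):
    """Mean squared pixel-wise error between images and their reconstructions."""
    total = 0.0
    for image in images:
        guess = reconstruct(decoder, mobile_step(image))
        total += sum((g - p) ** 2 for g, p in zip(guess, image)) / len(image)
    return total / len(images)


surrogate = make_images(200)  # attacker's own data, never seen by the target
held_out = make_images(50)    # unseen inputs, used only to test the attack

decoder = [[0.0, 0.0] for _ in range(4)]
initial_mse = mse(decoder, held_out)

learning_rate = 0.05
for epoch in range(30):
    for image in surrogate:
        code = mobile_step(image)           # step 1: query stage one
        guess = reconstruct(decoder, code)  # step 2: decode the code
        for i, (g, p) in enumerate(zip(guess, image)):
            for j, c in enumerate(code):    # step 3: reduce the squared error
                decoder[i][j] -= learning_rate * (g - p) * c

final_mse = mse(decoder, held_out)
```

In this toy the attack succeeds almost perfectly, because the surrogate and held-out images lie on the same two-dimensional structure that the code happens to preserve. With real images and a nonlinear stage one, reconstruction will be much lossier.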

Doesn’t this strategy sound a lot like the decoding side of an autoencoder? No wonder the attacker model is a deconvolutional network.

Its input – equivalently, the on-device model’s output – is of size batch_size x 1 x 1 x 32. That is, the information is encoded in 32 channels, but the spatial resolution is 1. Just like in an autoencoder operating on images, we need to upsample until we arrive at the original resolution of 105 x 105.
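To get a feeling for the arithmetic involved, the sketch below computes how spatial size grows through a stack of transposed convolutions. The size rule is the one used, to my knowledge, by Keras-style transposed convolution layers when no extra output padding is requested. The three layer configurations are purely hypothetical (chosen because 105 = 7 x 3 x 5) and are not the ones used in the attacker model.

```python
def transposed_conv_output_size(size, kernel, stride, padding):
    """Spatial output size of a transposed convolution (Keras-style convention)."""
    if padding == "same":
        return size * stride
    if padding == "valid":
        return size * stride + max(kernel - stride, 0)
    raise ValueError(f"unknown padding: {padding}")


# Hypothetical stack of upsampling layers: (kernel, stride, padding).
layers = [(7, 1, "valid"), (3, 3, "same"), (5, 5, "same")]

size = 1
sizes = [size]
for kernel, stride, padding in layers:
    size = transposed_conv_output_size(size, kernel, stride, padding)
    sizes.append(size)
```

With these made-up settings, the resolution goes from 1 to 7 to 21 to 105. Many other combinations reach 105 as well, for example by overshooting and cropping.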

Upsampling from 1 x 1 to 105 x 105 is exactly what’s happening in the attacker model:


To train the adversary, we use one of the small (five-alphabet) subsets. To reiterate what was said above, there is no overlap with the data used to train the target model.


Here, then, is the attacker training loop, striving to refine the decoding process over a hundred – short – epochs:


Epoch: 1 -----------

mse: 0.530902684

Epoch: 2 -----------

mse: 0.201351956

...

...

Epoch: 99 -----------

mse: 0.0413453057

Epoch: 100 -----------

mse: 0.0413028933

The question now is: does it work? Has the attacker really learned to infer actual data from (stage one) model output?

Test adversary

To test the adversary, we use the third dataset we downloaded, containing images from five yet-unseen alphabets. For display, we select just the first sixteen records – a completely arbitrary decision, of course.


Just like during the training process, the adversary queries the target model (stage one), obtains the compressed representation, and attempts to reconstruct the original image. (Of course, in the real world, the setup would be different in that the attacker would not be able to simply inspect the images, as is the case here. There would thus have to be some way to intercept, and make sense of, network traffic.)


To allow for easier comparison (and increase suspense …!), here again are the actual images, which we displayed already when introducing the dataset:

And here is the reconstruction:

Of course, it is hard to say how revealing these “guesses” are. There definitely seems to be a connection to character complexity; overall, it seems like the Greek and Roman letters, which are the least complex, are also the ones most easily reconstructed. Still, in the end, how much privacy is lost will very much depend on contextual factors.

First and foremost, do the exemplars in the dataset represent individuals or classes of individuals? If – as in reality – the character X represents a class, it might not be so grave if we were able to reconstruct “some X” here: There are many Xs in the dataset, all pretty similar to each other; we’re unlikely to have reconstructed exactly one special, individual X. If, however, this was a dataset of individual people, with all Xs being photographs of Alex, then in reconstructing an X we have effectively reconstructed Alex.

Second, in less obvious scenarios, evaluating the degree of privacy breach will likely go beyond computing quantitative metrics, and involve the judgment of domain experts.

Speaking of quantitative metrics though – our example seems like a perfect use case to experiment with differential privacy. Differential privacy is measured by $\epsilon$ (lower is better), the main idea being that answers to queries to a system should depend as little as possible on the presence or absence of a single (any single) datapoint.
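For reference, here is the standard formal statement behind that idea (Dwork et al. 2006). The symbols $M$, $D$, $D'$ and $S$ are notation introduced just for this aside: a randomized mechanism $M$ is $\epsilon$-differentially private if, for any two datasets $D$ and $D'$ that differ in a single record, and for any set $S$ of possible outputs,

$$
\Pr[M(D) \in S] \le e^{\epsilon} \, \Pr[M(D') \in S]
$$

With $\epsilon = 0$, the two output distributions are identical, so no single record can be told apart at all; the larger $\epsilon$ gets, the more the outputs are allowed to differ. Privacy accounting for noisy gradient descent usually reports a relaxed variant, $(\epsilon, \delta)$-differential privacy, which adds a small additive slack $\delta$ to the right-hand side.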

So, we will repeat the above experiment, using TensorFlow Privacy (TFP) to add noise, as well as clip gradients, during optimization of the target model. We’ll try three different conditions, resulting in three different values of $\epsilon$, and for each condition, inspect the images reconstructed by the adversary.

Part 2: Differential privacy to the rescue

Unfortunately, the setup for this part of the experiment requires a little workaround. Making use of the flexibility afforded by TensorFlow 2.x, our target model has been a custom model, joining two distinct stages (“mobile” and “server”) that could be called independently.

TFP, however, still does not work with TensorFlow 2.x, meaning we have to use old-style, non-eager model definitions and training. Luckily, the workaround will be easy.

First, load (and possibly, install) libraries, taking care to disable TensorFlow V2 behavior.


The training set is loaded, preprocessed and batched (nearly) as before.


Train target model – with TensorFlow Privacy

To train the target, we put the layers from both stages – “mobile” and “server” – into one sequential model. Note how we remove the dropout. This is because noise will be added during optimization anyway.


Using TFP mainly means using a TFP optimizer, one that clips gradients according to some defined magnitude and adds noise of defined size. noise_multiplier is the parameter we are going to vary to arrive at different $\epsilon$s:
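What "clip, then add noise" means for a single optimization step can be sketched without any library. This is not TFP's implementation, just the usual recipe for differentially private SGD under simplifying assumptions: each example contributes its own gradient, every gradient is clipped to a maximum L2 norm (called `l2_norm_clip` here for illustration), and Gaussian noise with standard deviation `noise_multiplier * l2_norm_clip` is added to the sum before averaging. The gradient values are made up.

```python
import math
import random


def clip_to_norm(gradient, l2_norm_clip):
    """Scale a gradient vector down so its L2 norm is at most l2_norm_clip."""
    norm = math.sqrt(sum(g * g for g in gradient))
    scale = min(1.0, l2_norm_clip / norm) if norm > 0 else 1.0
    return [g * scale for g in gradient]


def private_mean_gradient(per_example_gradients, l2_norm_clip, noise_multiplier, rng):
    """Clip each example's gradient, sum, add Gaussian noise, then average."""
    clipped = [clip_to_norm(g, l2_norm_clip) for g in per_example_gradients]
    summed = [sum(column) for column in zip(*clipped)]
    stddev = noise_multiplier * l2_norm_clip
    noisy = [s + rng.gauss(0.0, stddev) for s in summed]
    return [v / len(per_example_gradients) for v in noisy]


# Hypothetical per-example gradients for a model with two parameters.
gradients = [[3.0, 4.0], [0.3, 0.4], [-6.0, 8.0]]
rng = random.Random(0)
no_noise = private_mean_gradient(
    gradients, l2_norm_clip=1.0, noise_multiplier=0.0, rng=rng)
with_noise = private_mean_gradient(
    gradients, l2_norm_clip=1.0, noise_multiplier=0.7, rng=rng)
```

Clipping bounds how much any single example can move the parameters; the noise then masks whatever influence remains. A larger noise_multiplier means more masking, hence a smaller $\epsilon$, at the price of a less accurate gradient.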


In training the model, the second important change for TFP we need to make is to have loss and gradients computed on the individual level.
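The reason is that gradients can only be clipped per example if the loss has not already been averaged over the batch. A small, library-free sketch of the difference, with made-up predicted probabilities:

```python
import math


def per_example_cross_entropy(probabilities, labels):
    """One loss value per example (no averaging over the batch)."""
    return [-math.log(p[label]) for p, label in zip(probabilities, labels)]


# Hypothetical predicted class probabilities for a batch of three examples.
probabilities = [[0.7, 0.2, 0.1], [0.1, 0.8, 0.1], [0.25, 0.25, 0.5]]
labels = [0, 1, 2]

vector_loss = per_example_cross_entropy(probabilities, labels)  # kept per example
scalar_loss = sum(vector_loss) / len(vector_loss)               # the usual batch mean
```

Ordinary training would differentiate `scalar_loss`; private training needs `vector_loss`, so that every example's contribution can be bounded before the contributions are combined.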


To test three different $\epsilon$s, we run this thrice, each time with a different noise_multiplier. Each time we arrive at a different final accuracy.

Here is a synopsis, where $\epsilon$ was computed like so:


| noise multiplier | epsilon | final acc. (training set) |
|---|---|---|
| 0.7 | 4.0 | 0.37 |
| 0.5 | 12.5 | 0.45 |
| 0.3 | 84.7 | 0.56 |

Now, as the adversary won’t call the complete model, we need to “cut off” the second-stage layers. This leaves us with a model that executes stage-one logic only. We save its weights, so we can later call it from the adversary:


Train adversary (against differentially private target)

In training the adversary, we can keep most of the original code – meaning, we’re back to TF-2 style. Even the definition of the target model is the same as before:


But now, we load the trained target’s weights into the freshly defined model’s “mobile stage”:


And now, we’re back to the old training routine. Testing setup is the same as before, as well.

So how well does the adversary perform with differential privacy added to the picture?

Test adversary (against differentially private target)

Here, ordered by decreasing $\epsilon$, are the reconstructions. Again, we refrain from judging the results, for the same reasons as before: In real-world applications, whether privacy is preserved “well enough” will depend on the context.

Here, first, are reconstructions from the run where the least noise was added.

On to the next level of privacy protection:

And the lowest-$\epsilon$ one, from the run where the most noise was added:

Conclusion

Throughout this post, we’ve refrained from “over-commenting” on results, and focused on the why-and-how instead. This is because in an artificial setup, chosen to facilitate exposition of concepts and methods, there really is no objective frame of reference. What is a good reconstruction? What is a good $\epsilon$? What constitutes a data breach? No-one knows.

In the real world, there is a context to everything – there are people involved, the people whose data we’re talking about. There are organizations, regulations, laws. There are abstract principles, and there are implementations; different implementations of the same “idea” can differ.

As in machine learning overall, research papers on privacy-, ethics- or otherwise society-related topics are full of LaTeX formulae. Amid the math, let’s not forget the people.

Thanks for reading!

Dwork, Cynthia, Frank McSherry, Kobbi Nissim, and Adam Smith. 2006. “Calibrating Noise to Sensitivity in Private Data Analysis.” Proceedings of the Third Conference on Theory of Cryptography (Berlin, Heidelberg), TCC'06, 265–84. https://doi.org/10.1007/11681878_14.

Fredrikson, Matthew, Eric Lantz, Somesh Jha, Simon Lin, David Page, and Thomas Ristenpart. 2014. “Privacy in Pharmacogenetics: An End-to-End Case Study of Personalized Warfarin Dosing.” Proceedings of the 23rd USENIX Conference on Security Symposium (USA), SEC'14, 17–32.

Lake, Brenden M., Ruslan Salakhutdinov, and Joshua B. Tenenbaum. 2015. “Human-Level Concept Learning Through Probabilistic Program Induction.” Science 350 (6266): 1332–38. https://doi.org/10.1126/science.aab3050.

McMahan, H. Brendan, Eider Moore, Daniel Ramage, and Blaise Agüera y Arcas. 2016. “Federated Learning of Deep Networks Using Model Averaging.” CoRR abs/1602.05629. http://arxiv.org/abs/1602.05629.

Wu, X., M. Fredrikson, S. Jha, and J. F. Naughton. 2016. “A Methodology for Formalizing Model-Inversion Attacks.” 2016 IEEE 29th Computer Security Foundations Symposium (CSF), 355–70.

[^1]: Don’t take all literally please; it’s just a nice phrase.